Engineering

Phage resistant Escherichia coli strains developed to reduce fermentation failure

A systematic, genome engineering-based strategy for developing phage-resistant Escherichia coli strains has been developed by a team led by Professor Sang Yup Lee, Professor Shi Chen, and Professor Lianrong Wang. The study, by Xuan Zou et al., was published in Nature Communications in August 2022 and featured in Nature Communications Editors’ Highlights. The collaboration among the School of Pharmaceutical Sciences at Wuhan University, the First Affiliated Hospital of Shenzhen University, and the KAIST Department of Chemical and Biomolecular Engineering marks an important advance for metabolic engineering and the fermentation industry, as it addresses the major problem of phage infection causing fermentation failure.

Systems metabolic engineering is a highly interdisciplinary field that has made the development of microbial cell factories to produce various bioproducts including chemicals, fuels, and materials possible in a sustainable and environmentally friendly way, mitigating the impact of worldwide resource depletion and climate change. Escherichia coli is one of the most important chassis microbial strains, given its wide applications in the bio-based production of a diverse range of chemicals and materials. With the development of tools and strategies for systems metabolic engineering using E. coli, a highly optimized and well-characterized cell factory will play a crucial role in converting cheap and readily available raw materials into products of great economic and industrial value.

However, the consistent problem of phage contamination in fermentation imposes a devastating impact on host cells and threatens the productivity of bacterial bioprocesses in biotechnology facilities, which can lead to widespread fermentation failure and immeasurable economic loss. Host-controlled defense systems can be developed into effective genetic engineering solutions to address bacteriophage contamination in industrial-scale fermentation; however, most of the resistance mechanisms only narrowly restrict phages and their effect on phage contamination will be limited.

There have been attempts to develop diverse abilities/systems for environmental adaptation or antiviral defense. The team’s collaborative efforts developed a new type II single-stranded DNA phosphorothioation (Ssp) defense system derived from E. coli 3234/A, which can be used in multiple industrial E. coli strains (e.g., E. coli K-12, B and W) to provide broad protection against various types of dsDNA coliphages. Furthermore, they developed a systematic genome engineering strategy involving the simultaneous genomic integration of the Ssp defense module and mutations in components that are essential to the phage life cycle. This strategy can be used to transform E. coli hosts that are highly susceptible to phage attack into strains with powerful restriction effects on the tested bacteriophages. This endows hosts with strong resistance against a wide spectrum of phage infections without affecting bacterial growth and normal physiological function. More importantly, the resulting engineered phage-resistant strains maintained the capabilities of producing the desired chemicals and recombinant proteins even under high levels of phage cocktail challenge, which provides crucial protection against phage attacks.

This is a major step forward, as it provides a systematic solution for engineering phage-resistant bacterial strains, especially industrial bioproduction strains, to protect cells from a wide range of bacteriophages. Considering the functionality of this engineering strategy with diverse E. coli strains, the strategy reported in this study can be widely extended to other bacterial species and industrial applications, which will be of great interest to researchers in academia and industry alike.

Fig. A schematic model of the systematic strategy for engineering phage-sensitive industrial E. coli strains into strains with broad antiphage activities. Through the simultaneous genomic integration of a DNA phosphorothioation-based Ssp defense module and mutations of components essential for the phage life cycle, the engineered E. coli strains show strong resistance against diverse phages tested and maintain the capabilities of producing example recombinant proteins, even under high levels of phage cocktail challenge.

2022.08.23 View 11941

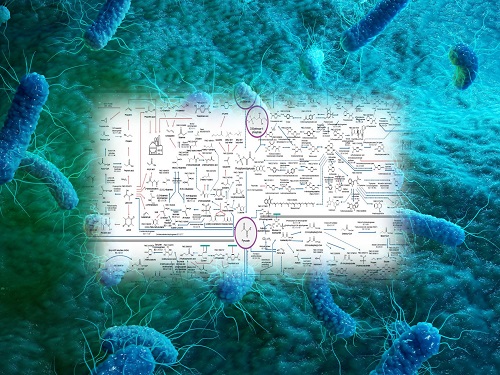

Interactive Map of Metabolical Synthesis of Chemicals

An interactive map that compiled the chemicals produced by biological, chemical and combined reactions has been distributed on the web

- A team led by Distinguished Professor Sang Yup Lee of the Department of Chemical and Biomolecular Engineering organized and distributed an all-inclusive listing of chemical substances that can be synthesized using microorganisms.

- It is expected to be used by researchers around the world as it enables easy assessment of the synthetic pathway through the web.

A research team comprised of Woo Dae Jang, Gi Bae Kim, and Distinguished Professor Sang Yup Lee of the Department of Chemical and Biomolecular Engineering at KAIST reported an interactive metabolic map of bio-based chemicals. Their research paper “An interactive metabolic map of bio-based chemicals” was published online in Trends in Biotechnology on August 10, 2022.

In response to rapid climate change and environmental pollution, research on producing petrochemical products using microorganisms is receiving attention as a sustainable alternative to existing production methods. To synthesize various chemical substances, materials, and fuels using microorganisms, it is first necessary to explore and discover biosynthetic pathways toward the desired products, construct them, and introduce them into microorganisms. In addition, to synthesize various chemical substances efficiently, it is sometimes necessary to combine chemical methods with bioengineering methods using microorganisms. For the production of non-native chemicals, novel pathways are designed by recruiting enzymes from heterologous sources or employing enzymes designed through rational engineering, directed evolution, or ab initio design.

In 2019, the research team completed a map of chemicals compiling all available pathways of biological and/or chemical reactions that lead to the production of various bio-based chemicals, and published it in Nature Catalysis. The map was distributed as a poster to industry and academia so that the synthesis paths of bio-based chemicals could be checked at a glance.

The research team has now expanded the bio-based chemicals map into an interactive web-based map so that anyone with internet access can quickly explore efficient paths to synthesize desired products. The web-based map provides visual tools for interactive visualization, exploration, and analysis of complex networks of biological and/or chemical reactions toward the desired products. In addition, the paper discusses the production of natural compounds that are used for diverse purposes such as food and medicine, which will help in designing novel pathways through similar approaches or by exploiting the promiscuity of enzymes described in the map. The published bio-based chemicals map is also available at http://systemsbiotech.co.kr.

The co-first authors, Dr. Woo Dae Jang and Ph.D. student Gi Bae Kim, said, “We conducted this study to address the demand for updating the previously distributed chemicals map and enhancing its versatility.” “The map is expected to be utilized in a variety of research and in efforts to set strategies and prospects for chemical production incorporating bio and chemical methods that are detailed in the map.”

Distinguished Professor Sang Yup Lee said, “The interactive bio-based chemicals map is expected to help design and optimization of the metabolic pathways for the biosynthesis of target chemicals together with the strategies of chemical conversions, serving as a blueprint for developing further ideas on the production of desired chemicals through biological and/or chemical reactions.”

The interactive metabolic map of bio-based chemicals.

2022.08.11 View 13026

A New Therapeutic Drug for Alzheimer’s Disease without Inflammatory Side Effects

Although Aduhelm, a monoclonal antibody targeting amyloid beta (Aβ), recently became the first US FDA-approved drug for Alzheimer’s disease (AD) based on its ability to decrease Aβ plaque burden in AD patients, its effect on cognitive improvement is still controversial. Moreover, about 40% of the patients treated with this antibody experienced serious side effects, including cerebral edemas (ARIA-E) and hemorrhages (ARIA-H), that are likely related to inflammatory responses in the brain when the Aβ antibody binds Fc receptors (FcR) of immune cells such as microglia and macrophages.

These inflammatory side effects can cause neuronal cell death and synapse elimination by activated microglia, and even have the potential to exacerbate cognitive impairment in AD patients. Thus, current Aβ antibody-based immunotherapy holds the inherent risk of doing more harm than good due to its inflammatory side effects.

To overcome these problems, a team of researchers at KAIST in South Korea has developed a novel fusion protein drug, αAβ-Gas6, which efficiently eliminates Aβ via an entirely different mechanism than Aβ antibody-based immunotherapy. In a mouse model of AD, αAβ-Gas6 not only removed Aβ with higher potency, but also circumvented the neurotoxic inflammatory side effects associated with conventional antibody treatments.

Their findings were published on August 4 in Nature Medicine.

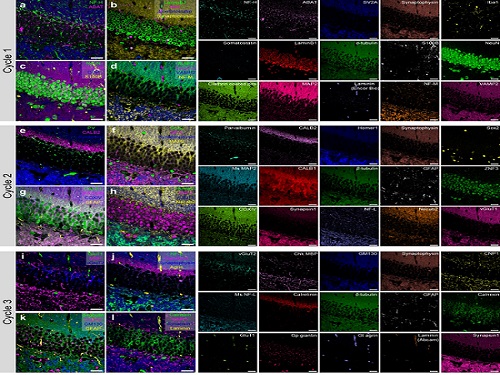

Schematic of a chimeric Gas6 fusion protein. A single chain variable fragment (scFv) of an Amyloid β (Aβ)-targeting monoclonal antibody is fused with a truncated receptor binding domain of Gas6, a bridging molecule for the clearance of dead cells via TAM (TYRO3, AXL, and MERTK) receptors, which are expressed by microglia and astrocytes.

“FcR activation by Aβ targeting antibodies induces microglia-mediated Aβ phagocytosis, but it also produces inflammatory signals, inevitably damaging brain tissues,” said paper authors Chan Hyuk Kim and Won-Suk Chung, associate professors in the Department of Biological Sciences at KAIST.

“Therefore, we utilized efferocytosis, a cellular process by which dead cells are removed by phagocytes, as an alternative pathway for the clearance of Aβ in the brain,” Profs. Kim and Chung said. “Efferocytosis is accompanied by anti-inflammatory responses to maintain tissue homeostasis. To exploit this process, we engineered Gas6, a soluble adaptor protein that mediates efferocytosis via TAM phagocytic receptors in such a way that its target specificity was redirected from dead cells to Aβ plaques.”

The professors and their team demonstrated that the resulting αAβ-Gas6 induced Aβ engulfment by activating not only microglial but also astrocytic phagocytosis since TAM phagocytic receptors are highly expressed by these two major phagocytes in the brain. Importantly, αAβ-Gas6 promoted the robust uptake of Aβ without showing any signs of inflammation and neurotoxicity, which contrasts sharply with the treatment using an Aβ monoclonal antibody. Moreover, they showed that αAβ-Gas6 substantially reduced excessive synapse elimination by microglia, consequently leading to better behavioral rescues in AD model mice.

“By using a mouse model of cerebral amyloid angiopathy (CAA), a cerebrovascular disorder caused by the deposition of Aβ within the walls of the brain’s blood vessels, we also showed that the intrathecal administration of Gas6 fusion protein significantly eliminated cerebrovascular amyloids, along with a reduction of microhemorrhages. These data demonstrate that αAβ-Gas6 is a potent therapeutic agent in eliminating Aβ without exacerbating CAA-related microhemorrhages.”

The resulting αAβ-Gas6 clears Aβ oligomers and fibrils without causing neurotoxicity (a-b, neurons: red, and fragmented axons: yellow) and proinflammatory responses (c, TNF release), which are conversely exacerbated by the treatment of an Aβ-targeting monoclonal antibody (Aducanumab).

Professors Kim and Chung noted, “We believe our approach can be a breakthrough in treating AD without causing inflammatory side effects and synapse loss. Our approach holds promise as a novel therapeutic platform that is applicable to more than AD. By modifying the target-specificity of the fusion protein, the Gas6-fusion protein can be applied to various neurological disorders as well as autoimmune diseases affected by toxic molecules that should be removed without causing inflammatory responses.”

The number and total area of Aβ plaques (Thioflavin-T, green) were significantly reduced in αAβ-Gas6-treated AD mouse brains compared to Aducanumab-treated ones (a, b). The cognitive functions of AD model mice were significantly rescued by αAβ-Gas6 treatment, whereas Aducanumab-treated AD mice showed partial rescue in these cognitive tests (c-e).

Professors Kim and Chung founded “Illimis Therapeutics” based on this strategy of designing chimeric Gas6 fusion proteins that would remove toxic aggregates from the nervous system. Through this company, they are planning to further develop various Gas6-fusion proteins not only for Aβ but also for Tau to treat AD symptoms.

This work was supported by KAIST and by the Korea Health Technology R&D Project, administered by the Korea Health Industry Development Institute (KHIDI) and the Korea Dementia Research Center (KDRC) and funded by the Ministry of Health & Welfare (MOHW) and the Ministry of Science and ICT (MSIT).

Other contributors include Hyuncheol Jung, Se Young Lee, Sungjoon Lim, Hyeong Ryeol Choi, Yeseong Choi, Minjin Kim, and Segi Kim of the Department of Biological Sciences at the Korea Advanced Institute of Science and Technology (KAIST).

For the latest information on this development, follow “Illimis Therapeutics” on Twitter @Illimistx.

2022.08.05 View 11219

Metabolically Engineered Bacterium Produces Lutein

A research group at KAIST has engineered a bacterial strain capable of producing lutein. The research team applied systems metabolic engineering strategies, including substrate channeling and electron channeling, to enhance the production of lutein in an engineered Escherichia coli strain. The strategies will also be useful for the efficient production of other industrially important natural products used in the food, pharmaceutical, and cosmetic industries.

Figure: Systems metabolic engineering was employed to construct and optimize the metabolic pathways for lutein production, and substrate channeling and electron channeling strategies were additionally employed to increase the production of the lutein with high productivity.

Lutein is classified as a xanthophyll chemical that is abundant in egg yolk, fruits, and vegetables. It protects the eye from oxidative damage from radiation and reduces the risk of eye diseases including macular degeneration and cataracts. Commercialized products featuring lutein are derived from extracts of the marigold flower, which is known to harbor abundant amounts of lutein. However, the drawback of producing lutein from nature is that it takes a long time to grow and harvest marigold flowers. Furthermore, it requires additional physical and chemical extractions with a low yield, which makes it economically unfeasible in terms of productivity. The high cost and low yield of these processes have made it difficult to readily meet the demand for lutein.

These challenges inspired the metabolic engineers at KAIST, including researchers Dr. Seon Young Park, Ph.D. Candidate Hyunmin Eun, and Distinguished Professor Sang Yup Lee from the Department of Chemical and Biomolecular Engineering. The team’s study entitled “Metabolic engineering of Escherichia coli with electron channeling for the production of natural products” was published in Nature Catalysis on August 5, 2022.

This research details the ability to produce lutein from E. coli with a high yield using a cheap carbon source, glycerol, via systems metabolic engineering. The research group focused on solving the bottlenecks of the biosynthetic pathway for lutein production constructed within an individual cell. First, using systems metabolic engineering, which is an integrated technology to engineer the metabolism of a microorganism, lutein was produced when the lutein biosynthesis pathway was introduced, albeit in very small amounts.

To improve lutein productivity, the bottleneck enzymes within the metabolic pathway were first identified. It turned out that metabolic reactions involving a promiscuous enzyme, an enzyme that is involved in two or more metabolic reactions, and electron-requiring cytochrome P450 enzymes are the main bottleneck steps of the pathway inhibiting lutein biosynthesis.

To overcome these challenges, substrate channeling, a strategy to artificially recruit enzymes in physical proximity within the cell in order to increase the local concentrations of substrates that can be converted into products, was employed to channel more metabolic flux towards the target chemical while reducing the formation of unwanted byproducts.

Furthermore, electron channeling, a strategy similar to substrate channeling but differing in terms of increasing the local concentrations of electrons required for oxidoreduction reactions mediated by P450 and its reductase partners, was applied to further streamline the metabolic flux towards lutein biosynthesis, which led to the highest titer of lutein production achieved in a bacterial host ever reported. The same electron channeling strategy was successfully applied for the production of other natural products including nootkatone and apigenin in E. coli, showcasing the general applicability of the strategy in the research field.

“It is expected that this microbial cell factory-based production of lutein will be able to replace the current plant extraction-based process,” said Dr. Seon Young Park, the first author of the paper. She explained that another important point of the research is that integrated metabolic engineering strategies developed from this study can be generally applicable for the efficient production of other natural products useful as pharmaceuticals or nutraceuticals.

“As maintaining good health in an aging society is becoming increasingly important, we expect that the technology and strategies developed here will play pivotal roles in producing other valuable natural products of medical or nutritional importance,” explained Distinguished Professor Sang Yup Lee.

This work was supported by the Cooperative Research Program for Agriculture Science & Technology Development funded by the Rural Development Administration of Korea, with further support from the Development of Next-generation Biorefinery Platform Technologies for Leading Bio-based Chemicals Industry Project and by the Development of Platform Technologies of Microbial Cell Factories for the Next-generation Biorefineries Project of the National Research Foundation funded by the Ministry of Science and ICT of Korea.

2022.08.05 View 9220

Shaping the AI Semiconductor Ecosystem

- With the marriage of AI and semiconductors highlighted as a national strategic technology, KAIST's achievements in the related fields, built on top-class education and research capabilities that surpass those of peer universities around the world, stand far apart from the rest of the pack.

As artificial intelligence semiconductors (hereafter AI semiconductors), semiconductor systems designed specifically for the highly complex computations AI needs for learning and inference, stand out as a national strategic technology, the related achievements of KAIST, headed by President Kwang Hyung Lee, are also attracting attention. The Ministry of Science and ICT (MSIT) of Korea initiated a program to support the advancement of AI semiconductors last year with the goal of capturing 20% of the global AI semiconductor market by 2030. This year, through industry-university-research discussions, the Ministry expanded the program with an additional 1.2 trillion won of investment over five years through the 'Support Plan for AI Semiconductor Industry Promotion'. Accordingly, major universities began putting together programs to train students in AI semiconductor expertise.

KAIST has accumulated top-notch educational and research capabilities in the two core fields of AI semiconductors: semiconductors and artificial intelligence. Notably, in the field of semiconductors, the International Solid-State Circuits Conference (ISSCC) is the world's most prestigious conference on semiconductor integrated circuit design. Established in 1954, with more than 60% of the participants coming from companies including Samsung, Qualcomm, TSMC, and Intel, the conference naturally focuses on the practical value of studies from an industrial point of view, earning it the nickname the ‘Semiconductor Design Olympics’. At a conference of such legacy and influence, KAIST has stood out among participating universities, leading world-class schools such as the Massachusetts Institute of Technology (MIT) and Stanford in the number of accepted papers for the past 17 years.

Number of papers published at the International Solid-State Circuits Conference (ISSCC) in 2022, sorted by nation and by institution

Number of papers by universities presented at the International Solid-State Circuits Conference (ISSCC) from 2006 to 2022

In terms of the number of papers accepted at the ISSCC, KAIST has ranked among the top two universities each year since 2006. Looking at the average number of accepted papers over the past 17 years, KAIST stands out as an unparalleled leader. The average number of KAIST papers accepted per year over the 17 years from 2006 through 2022 was 8.4, almost double that of competitors like MIT (4.6) and UCLA (3.6). In Korea, it holds second place overall after Samsung, the undisputed number one in the semiconductor design field. Also, this year, KAIST ranked first among universities participating in the Symposium on VLSI Technology and Circuits, an academic conference in the field of integrated circuits that rivals the ISSCC.

Number of papers from universities accepted at the Symposium on VLSI Technology and Circuits in 2022

With KAIST researchers working and presenting new technologies at the frontiers of all key areas of the semiconductor industry, the quality of KAIST research is also maintained at the highest level. Professor Myoungsoo Jung's research team in the School of Electrical Engineering is actively working to develop a heterogeneous computing environment with high energy efficiency in response to the industry's demand for high performance at low power. In the field of materials, a research team led by Professor Byong-Guk Park of the Department of Materials Science and Engineering developed a Spin Orbit Torque (SOT)-based Magnetic RAM (MRAM) that operates at least 10 times faster than conventional memories, suggesting a way to overcome the limitations of the existing 'von Neumann structure'.

As such, while KAIST provides solutions to major challenges in the current semiconductor industry, it is also actively pursuing the new technologies needed to gain an early lead in emerging fields of the industry. In the field of quantum computing, which is attracting attention as the next-generation computing technology needed to take the lead in cryptography and nonlinear computation, Professor Sanghyeon Kim's research team in the School of Electrical Engineering presented the world's first 3D integrated quantum computing system at the 2021 VLSI Symposium. In neuromorphic computing, which is expected to bring remarkable advancements in artificial intelligence by utilizing the principles of neurology, the research team of Professor Shinhyun Choi of the School of Electrical Engineering is developing a next-generation memristor that mimics neurons.

Number of papers at the International Conference on Machine Learning (ICML) and the Conference on Neural Information Processing Systems (NeurIPS), two of the world’s most prestigious conferences in the field of artificial intelligence (KAIST 6th in the world, 1st in Asia, in 2020)

The field of artificial intelligence has also grown rapidly. Based on the number of papers at the International Conference on Machine Learning (ICML) and the Conference on Neural Information Processing Systems (NeurIPS), two of the world's most prestigious conferences in the field of artificial intelligence, KAIST ranked 6th in the world and 1st in Asia in 2020. Since 2012, KAIST's ranking has risen steadily from 37th to 6th, climbing 31 places over eight years. In 2021, 129 papers, or about 40% of the Korean papers published at 11 top artificial intelligence conferences, were presented by KAIST. Thanks to KAIST's efforts, in 2021, Korea ranked sixth after the United States, China, the United Kingdom, Canada, and Germany in terms of the number of papers published at global AI conferences.

Number of papers from Korea (and by KAIST) published at 11 top conferences in the field of artificial intelligence in 2021

In terms of content, KAIST's AI research is also at the forefront. Professor Hoi-Jun Yoo's research team in the School of Electrical Engineering compensated for the shortcomings of “edge networks” by implementing real-time AI learning networks on mobile devices. Realizing artificial intelligence requires data accumulation and a huge amount of computation. For this, a high-performance server takes care of the massive computation, while on user terminals an “edge network” that collects data and performs simple computations is used. Professor Yoo's research greatly increased AI’s processing speed and performance by allotting the learning task to the user terminal as well.

In June, a research team led by Professor Min-Soo Kim of the School of Computing presented a solution that is essential for processing super-scale artificial intelligence models. The super-scale machine learning system developed by the research team is expected to achieve speeds up to 8.8 times faster than Google's TensorFlow or IBM's SystemDS, which are mainly used in the industry.

KAIST is also making remarkable achievements in the field of AI semiconductors. In 2020, Professor Minsoo Rhu's research team in the School of Electrical Engineering succeeded in developing the world's first AI semiconductor optimized for AI recommendation systems. Because an AI recommendation system has to handle vast amounts of content and user information, it quickly hits an information bottleneck when run on a general-purpose artificial intelligence system. Professor Minsoo Rhu's team developed a semiconductor that is 21 times faster than existing systems using 'Processing-In-Memory (PIM)' technology. PIM is a technology that improves efficiency by performing calculations in 'RAM', or random-access memory, which is usually only used to store data temporarily just before they are processed. When PIM technology reaches the market, it is expected to greatly strengthen the competitiveness of Korean companies in the AI semiconductor market, as they already hold great strength in the memory area.

KAIST does not plan to be complacent about its achievements, but is making various plans to widen its lead over the competitors catching up in the fields of artificial intelligence, semiconductors, and AI semiconductors. Following the establishment of the first artificial intelligence research center in Korea in 1990, the Kim Jaechul AI Graduate School was opened in 2019 to sustain the supply of experts in the field. In 2020, the Artificial Intelligence Semiconductor System Research Center was launched to conduct convergent research on AI and semiconductors, which was followed by the establishment of the AI Institutes to promote “AI+X” research efforts.

Based on the internal capabilities accumulated through these efforts, KAIST is also working to train the human resources needed in these areas. KAIST established joint research centers with companies such as Naver, while collaborating with local governments such as Hwaseong City to nurture professional manpower at the same time. Back in 2021, KAIST signed an agreement with Samsung Electronics to establish the Department of Semiconductor System Engineering and is preparing a new semiconductor specialist training program. The newly established department will select around 100 new students every year from 2023 and provide special scholarships to all students so that they can develop their professional skills. In addition, through close cooperation with the industry, the students will receive special support that includes field trips and internships at Samsung Electronics, joint workshops, and on-site training.

KAIST has made a significant contribution to the growth of the Korean semiconductor industry ecosystem, producing 25% of doctoral workers in the domestic semiconductor field and 20% of CEOs of mid-sized and venture companies with doctoral degrees. With the AI semiconductor ecosystem now dawning, attention is focused on whether KAIST will reprise that pivotal role.

2022.08.05 View 11379

A System for Stable Simultaneous Communication among Thousands of IoT Devices

A mmWave backscatter system, developed by a team led by Professor Song Min Kim, is exciting news for the IoT market, as it will be able to provide fast and stable connectivity even for a massive network, which could finally allow IoT devices to reach their full potential.

A research team led by Professor Song Min Kim of the KAIST School of Electrical Engineering developed a system that can support concurrent communications for tens of millions of IoT devices using backscattered millimeter waves (mmWave).

With their mmWave backscatter method, the research team built a design that enables simultaneous signal demodulation in complex communication environments where tens of thousands of IoT devices are arranged indoors. The wide bandwidth available at mmWave exceeds 10 GHz, which provides great scalability. In addition, a backscatter device reflects radiated signals instead of generating its own, which allows operation at ultralow power. Therefore, the mmWave backscatter system offers internet connectivity on a mass scale to IoT devices at a low installation cost.

This research by Kangmin Bae et al. was presented at ACM MobiSys 2022. At this world-renowned conference for mobile systems, the research won the Best Paper Award under the title “OmniScatter: Sensitivity mmWave Backscattering Using Commodity FMCW Radar”. It is meaningful that members of the KAIST School of Electrical Engineering have won the Best Paper Award at ACM MobiSys for two consecutive years, as last year was the first time the award was presented to an institute from Asia.

IoT, as a core component of 5G/6G networks, is showing exponential growth and is expected to comprise a trillion devices by 2035. To support the connection of IoT devices on a mass scale, 5G and 6G aim to support ten times and 100 times the network density of 4G, respectively. As a result, practical systems for large-scale communication have become increasingly important.

mmWave is a next-generation communication technology that can be incorporated in 5G/6G standards, as it utilizes carrier waves at frequencies between 30 and 300 GHz. However, due to signal attenuation at high frequencies and reflection loss, current mmWave backscatter systems enable communication only in limited environments. In other words, they cannot operate in complex environments where various obstacles and reflectors are present. As a result, they are poorly suited to the large-scale connection of IoT devices, which requires relatively free placement.

The research team found the solution in the high coding gain of an FMCW radar. The team developed a signal processing method that can fundamentally separate backscatter signals from ambient noise while maintaining the coding gain of the radar. They achieved a receiver sensitivity over 100 thousand times that of previously reported FMCW radars, which can support communication in practical environments. Additionally, given the radar’s property that the frequency of the demodulated signal changes depending on the physical location of the tag, the team designed a system that passively assigns channels to the tags. This lets the ultralow-power backscatter communication system take full advantage of a frequency range of 10 GHz or higher.
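The location-dependent frequency mentioned above can be illustrated with the textbook FMCW relation, in which a reflection from distance d produces a beat frequency equal to the chirp slope times the round-trip delay. The sketch below is only a minimal illustration of that general relation, not the team's signal processing method; the bandwidth, chirp duration, and tag distances are hypothetical values chosen for the example, and any frequency offset added by a tag's own modulation is ignored.

```python
# Illustrative sketch of the standard FMCW range-to-frequency relation.
# This is NOT the OmniScatter implementation; all parameters are assumptions.

C = 299_792_458.0  # speed of light in m/s


def beat_frequency_hz(distance_m: float, bandwidth_hz: float, chirp_s: float) -> float:
    """Beat frequency of a reflection from distance_m for a linear chirp."""
    slope = bandwidth_hz / chirp_s            # chirp slope in Hz per second
    round_trip_delay = 2.0 * distance_m / C   # time to the reflector and back, in s
    return slope * round_trip_delay


# Hypothetical radar parameters (not taken from the article).
BANDWIDTH_HZ = 1e9   # 1 GHz frequency sweep
CHIRP_S = 1e-3       # 1 ms chirp duration

# Hypothetical tag placements: tags at different distances land on
# different beat frequencies, which act like separate channels.
tag_distances_m = [1.0, 2.5, 4.0]
beats = [beat_frequency_hz(d, BANDWIDTH_HZ, CHIRP_S) for d in tag_distances_m]

for d, f in zip(tag_distances_m, beats):
    print(f"tag at {d:.1f} m -> beat frequency {f / 1e3:.2f} kHz")
```

Under these assumptions, the beat frequency grows linearly with distance, so tags at different locations occupy different frequencies without any coordination among them.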

The developed system can use the radar of existing commercial products as a gateway, making it easily compatible. In addition, since the backscatter system works at ultralow power levels of 10 µW or below, it can operate for over 40 years with a single button cell and drastically reduce installation and maintenance costs.

The research team confirmed that mmWave backscatter devices arranged randomly in an office with various obstacles and reflectors could communicate effectively. The team then took things one step further and conducted a successful trace-driven evaluation where they simultaneously received information sent by 1,100 devices.

Their research presents connectivity that greatly exceeds the network density required by next-generation communication standards like 5G and 6G. The system is expected to become a stepping stone for the hyper-connected future to come.

Professor Kim said, “mmWave backscatter is the technology we’ve dreamt of. The mass scalability and ultralow power at which it can operate IoT devices is unmatched by any existing technology.” He added, “We look forward to this system being actively utilized to enable the wide availability of IoT in the hyper-connected generation to come.”

To demonstrate the massive connectivity of the system, a trace-driven evaluation of 1,100 concurrent tag transmissions was performed. The figure shows the demodulation result for each of the 1,100 tags as a red triangle, indicating that they communicate successfully without collision.

This work was supported by Samsung Research Funding & Incubation Center of Samsung Electronics and by the ITRC (Information Technology Research Center) support program supervised by the IITP (Institute of Information & Communications Technology Planning & Evaluation).

Profile: Song Min Kim, Ph.D.
Professor
songmin@kaist.ac.kr
https://smile.kaist.ac.kr

SMILE Lab., School of Electrical Engineering

2022.07.28 View 9285

KAIST Honors BMW and Hyundai with the 2022 Future Mobility of the Year Award

BMW's ‘iVision Circular’ and, in the commercial vehicle category, Hyundai Motor's ‘Trailer Drone’ were selected as winners of the international concept car awards, established by the KAIST Cho Chun Shik Graduate School of Mobility to honor carmakers that strive to present new visions in eco-friendly automobile design and unmanned logistics.

KAIST (President Kwang Hyung Lee) hosted the “2022 Future Mobility of the Year (FMOTY) Awards” at the Convention Hall of the BEXCO International Motor Show in Busan on the afternoon of the 14th.

The Future Mobility of the Year Awards is an award ceremony that selects a model that showcases useful transportation technology and innovative service concepts for the future society among the set of concept cars exhibited at the motor show.

For these one-of-a-kind international concept car awards, established by KAIST's Cho Chun Shik Graduate School of Mobility (headed by Professor In-Gwon Jang), auto journalists from 11 countries were invited to serve as jurors and select the winners. Since the inaugural ceremony in 2019, automakers from around the globe have been honored for their winning works over the past three years, including internationally renowned automakers such as Volvo and Toyota (2019), Honda and Hyundai (2020), and Renault (2021), as well as Canoo, a start-up car manufacturer that won last year’s award for commercial vehicles.

At this year’s awards ceremony, the 4th of its kind, BMW's “iVision Circular” and Hyundai's “Trailer Drone” were selected as the best concept cars of the year, the former from the Private Mobility category and the latter from the Public & Commercial Vehicles category.

The jury, consisting of 16 domestic and foreign auto journalists including BBC Top Gear's Paul Horrell and Car Magazine’s Georg Kacher, evaluated 53 concept cars unveiled last year. The jurors’ general comment was that, while the global automobile market is moving quickly toward electric vehicles, this year's award-winning works presented a new vision in the fields of eco-friendly design and unmanned logistics.

Private Mobility Category Winner: BMW iVision Circular

BMW's 'iVision Circular', the winner of the Private Mobility category, is an eco-friendly compact car in which all parts of the vehicle are designed with recycled and/or natural materials. It received favorable reviews for its in-depth implementation of the concept of a futuristic eco-friendly car: its tires are made from natural rubber, and its design makes the parts very easy to recycle when the car is disposed of.

Public & Commercial Vehicles Category Winner: Hyundai Trailer Drone

Hyundai Motor Company’s “Trailer Drone”, the winner of the Public & Commercial Vehicles category, is an eco-friendly autonomous truck that can transport large volumes of cargo from a port to a destination without a human driver, with two unmanned vehicles pushing and pulling a trailer. The concept car won support from a large number of judges for the blueprint it presented for a groundbreaking logistics service that applies both eco-friendly hydrogen fuel cell and fully autonomous driving technology.

Jurors from overseas congratulated the development teams of BMW and Hyundai Motor Company via video message for providing a new direction for the global automobile industry as it strives to transform in line with the changes of the post-pandemic era.

Professor Bo-won Kim, the Vice President for Planning and Budget of KAIST, who presented the awards, said, “It is time for the K-Mobility wave to sweep over the global mobility industry.” “KAIST will lead in the various fields of mobility technologies to support global automakers,” he added.

At the center are KAIST Vice President Bo-Won Kim, on the right, and Seong-Kwon Lee, the Deputy Mayor of the City of Busan, on the left. To Kim's left is Jean-Philippe Parain, the Senior VP of BMW Asia-Pacific, Eastern Europe, Middle East, Africa, and to Lee's right is Sangyup Lee, the Head of Hyundai Motor Design Center and the Executive VP of Hyundai Motors.

Attending the ceremony along with KAIST officials, including Vice President Bo-Won Kim and Professor In-Gwon Jang, the Head of the Cho Chun Shik Graduate School of Mobility, were Deputy Mayor Seong-Kwon Lee of the City of Busan and figures from the automobile industry, including Jean-Philippe Parain, the Senior Vice President of BMW Asia-Pacific, Eastern Europe, Middle East, Africa, who was visiting Korea to receive the '2022 Future Mobility' award, and Sangyup Lee, the Head of Hyundai Motor Design Center and the Executive Vice President of Hyundai Motor Company.

More information about the awards ceremony and the winning works is available at the official website of this year's Future Mobility Awards (www.fmoty.org).

Profile: In-Gwon Jang, Ph.D.
President, the Organizing Committee, the Future Mobility of the Year Awards
http://www.fmoty.org/

Head Professor, KAIST Cho Chun Shik Graduate School of Mobility
https://gt.kaist.ac.kr

2022.07.14 View 12904
