ICRA

Professor Hyun Myung's Team Wins the NSS Challenge at IEEE ICRA 2025

< Photo 1. (From left) Daebeom Kim (Team Leader, Ph.D. student), Seungjae Lee (Ph.D. student), Seoyeon Jang (Ph.D. student), Jei Gong (Master's student), Professor Hyun Myung >

The Urban Robotics Lab team, led by Professor Hyun Myung of the School of Electrical Engineering at our university, won first place overall in the Nothing Stands Still Challenge (NSS Challenge) 2025, held at the 2025 IEEE International Conference on Robotics and Automation (ICRA), the world's most prestigious robotics conference, in Atlanta, USA, from May 19 to 23.

The NSS Challenge was co-hosted by HILTI, a global construction company based in Liechtenstein, and Stanford University's Gradient Spaces Group. It is an expanded version of the HILTI SLAM (Simultaneous Localization and Mapping)* Challenge, which has been held since 2021, and is considered one of the most prominent challenges at IEEE ICRA 2025.

*SLAM: Simultaneous Localization and Mapping, a technology with which robots, drones, autonomous vehicles, and similar systems determine their own position while simultaneously building a map of their surroundings.

< Photo 2. Oral Presentation on the Winning Team's Technology (Speakers: Seungjae Lee, Ph.D. student, and Seoyeon Jang, Ph.D. student) >

This challenge primarily evaluates how accurately and robustly LiDAR scans collected at different times can be registered in environments with frequent structural changes, such as construction sites and industrial facilities. It is regarded as a highly technical competition because it addresses multi-session localization and mapping (multi-session SLAM), which must cope with structural changes occurring across multiple sessions, rather than only the registration accuracy at a single point in time.

The Urban Robotics Lab team took first place overall by a significant margin, ahead of Northwestern Polytechnical University of China (2nd place) and National Taiwan University (3rd place), with its own localization and mapping technology for registering LiDAR data collected at different times and places. The winning team will receive a prize of $4,000.

< Figure 1. Example of Multiway-Registration for Registering Multiple Scans >

The Urban Robotics Lab team independently developed a multiway-registration framework that can robustly register multiple scans even without prior information about how the scans are connected. The framework consists of three algorithms: CubicFeat, which summarizes feature points within scans and finds correspondences; Quatro, which performs global registration based on those correspondences; and Chamelion, which refines the results using change detection. Together, these components deliver stable registration anchored on fixed structures, even in highly dynamic industrial environments.
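As a rough illustration of what registering many scans "without prior connection information" can involve, the sketch below takes a score for every scan pair (for example, inlier counts from pairwise registration), keeps the most reliable pairs as a maximum spanning tree, and chains the relative poses outward from scan 0 so every scan lands in one common frame. This is a generic textbook approach, not the team's actual pipeline; the planar (x, y, yaw) poses, the pairwise scores, and all function names are hypothetical simplifications.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical planar pose (x, y, yaw); real LiDAR poses are 3D.
Pose = Tuple[float, float, float]


def compose(a: Pose, b: Pose) -> Pose:
    """Apply relative pose b expressed in the frame of pose a."""
    ax, ay, ath = a
    bx, by, bth = b
    c, s = math.cos(ath), math.sin(ath)
    return (ax + c * bx - s * by, ay + s * bx + c * by, ath + bth)


def invert(p: Pose) -> Pose:
    """Inverse transform, so compose(p, invert(p)) is the identity."""
    x, y, th = p
    c, s = math.cos(th), math.sin(th)
    return (-c * x - s * y, s * x - c * y, -th)


def max_spanning_tree(n: int, scores: Dict[Tuple[int, int], float]) -> List[Tuple[int, int]]:
    """Kruskal's algorithm: keep the highest-scoring pairs that join separate components."""
    parent = list(range(n))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    tree = []
    for (i, j), _ in sorted(scores.items(), key=lambda kv: -kv[1]):
        ri, rj = find(i), find(j)
        if ri != rj:
            parent[ri] = rj
            tree.append((i, j))
    return tree


def chain_poses(n: int, tree: List[Tuple[int, int]],
                rel: Dict[Tuple[int, int], Pose]) -> Dict[int, Pose]:
    """Place every scan in scan 0's frame; rel[(i, j)] is scan j's pose in scan i's frame."""
    adj: Dict[int, List[int]] = {k: [] for k in range(n)}
    for i, j in tree:
        adj[i].append(j)
        adj[j].append(i)
    poses: Dict[int, Pose] = {0: (0.0, 0.0, 0.0)}
    stack = [0]
    while stack:
        i = stack.pop()
        for j in adj[i]:
            if j in poses:
                continue
            step = rel[(i, j)] if (i, j) in rel else invert(rel[(j, i)])
            poses[j] = compose(poses[i], step)
            stack.append(j)
    return poses


if __name__ == "__main__":
    scores = {(0, 1): 120.0, (1, 2): 90.0, (0, 2): 15.0}  # hypothetical inlier counts
    rel = {(0, 1): (1.0, 0.0, 0.0), (1, 2): (0.0, 2.0, math.pi / 2)}
    tree = max_spanning_tree(3, scores)  # the weak (0, 2) pair is not used
    print(tree, chain_poses(3, tree, rel))
```

A spanning tree uses each scan exactly once and discards weak links; practical systems typically go further and optimize over all reliable pairs (a pose graph) rather than trusting a single chain.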

< Figure 2. Example of Change Detection Using the Chamelion Algorithm >
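Chamelion's internals are not described in this article, so the following is only a minimal stand-in for the general idea of change detection between two sessions that are already aligned: points are binned into voxels, voxels occupied in both sessions are treated as static, and the rest as removed or added. Keeping only points in static voxels gives a cleaner input for refining the registration on fixed structures. The voxel size and all function names are hypothetical; practical methods must also handle occlusion and sensor noise, which this plain set comparison ignores.

```python
import math
from typing import Dict, Iterable, List, Set, Tuple

Point = Tuple[float, float, float]
Voxel = Tuple[int, int, int]


def to_voxel(p: Point, size: float) -> Voxel:
    """Integer grid cell containing point p."""
    return (math.floor(p[0] / size), math.floor(p[1] / size), math.floor(p[2] / size))


def voxelize(points: Iterable[Point], size: float) -> Set[Voxel]:
    return {to_voxel(p, size) for p in points}


def detect_changes(old: List[Point], new: List[Point], size: float = 0.5) -> Dict[str, Set[Voxel]]:
    """Compare voxel occupancy of two sessions expressed in the same frame."""
    vo, vn = voxelize(old, size), voxelize(new, size)
    return {"static": vo & vn, "removed": vo - vn, "added": vn - vo}


def static_points(points: List[Point], static: Set[Voxel], size: float = 0.5) -> List[Point]:
    """Keep only points in voxels judged unchanged (fixed structure)."""
    return [p for p in points if to_voxel(p, size) in static]


if __name__ == "__main__":
    # Hypothetical sessions: one fixed structure, one object removed, one object added.
    old = [(0.1, 0.1, 0.1), (2.2, 0.1, 0.1)]
    new = [(0.2, 0.3, 0.1), (5.1, 0.0, 0.0)]
    changes = detect_changes(old, new)
    print(changes)
    print(static_points(new, changes["static"]))
```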

LiDAR scan registration is a core component of SLAM in a wide range of autonomous systems, including autonomous vehicles, autonomous robots, walking robots, and flying vehicles.
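At its core, registering two scans means estimating the rigid transform that maps one onto the other. Assuming point correspondences are already available (the role the article assigns to CubicFeat) and that both scans are gravity-aligned so only yaw and translation remain unknown (as we understand it, the quasi-SO(3) setting Quatro builds on), a minimal closed-form least-squares sketch looks like this. Unlike Quatro, it has no robustness to outlier correspondences, so it illustrates the geometry only; the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def rotate_z(p: Sequence[float], yaw: float) -> Point:
    """Rotate a 3D point about the vertical (z) axis."""
    c, s = math.cos(yaw), math.sin(yaw)
    return (c * p[0] - s * p[1], s * p[0] + c * p[1], p[2])


def register_4dof(src: List[Point], dst: List[Point]) -> Tuple[float, Point]:
    """Least-squares yaw and translation t so that rotate_z(src[i], yaw) + t ~= dst[i].

    Assumes gravity-aligned scans and outlier-free correspondences src[i] <-> dst[i].
    """
    n = len(src)
    if n < 2 or n != len(dst):
        raise ValueError("need at least two matched point pairs")
    cs = [sum(p[k] for p in src) / n for k in range(3)]  # source centroid
    cd = [sum(q[k] for q in dst) / n for k in range(3)]  # target centroid
    num = den = 0.0
    for p, q in zip(src, dst):
        ax, ay = p[0] - cs[0], p[1] - cs[1]
        bx, by = q[0] - cd[0], q[1] - cd[1]
        num += ax * by - ay * bx  # coefficient of sin(yaw)
        den += ax * bx + ay * by  # coefficient of cos(yaw)
    yaw = math.atan2(num, den)  # maximizes the sum of dst_i . R(yaw) src_i
    rc = rotate_z(cs, yaw)
    t = (cd[0] - rc[0], cd[1] - rc[1], cd[2] - rc[2])
    return yaw, t


if __name__ == "__main__":
    src = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 2.0, 1.0), (3.0, 1.0, -1.0)]
    true_yaw, true_t = 0.3, (1.0, -2.0, 0.5)
    dst = []
    for p in src:
        r = rotate_z(p, true_yaw)
        dst.append((r[0] + true_t[0], r[1] + true_t[1], r[2] + true_t[2]))
    print(register_4dof(src, dst))  # recovers yaw ~0.3 and t ~(1.0, -2.0, 0.5)
```

Centering both point sets first decouples rotation from translation: the yaw comes from the centered points alone, and the translation is whatever moves the rotated source centroid onto the target centroid.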

Professor Hyun Myung of the School of Electrical Engineering said, "This award-winning technology is regarded as demonstrating both academic value and industrial applicability, because it maximizes the accuracy with which the relative positions between different scans are estimated, even in complex environments. I am grateful to the students who took on the challenge and never gave up, even when many teams dropped out because of its high difficulty."

< Figure 3. Competition Result Board, Lower RMSE (Root Mean Squared Error) Indicates Higher Score (Unit: meters)>
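For readers unfamiliar with the metric on the result board, RMSE is the square root of the mean of the squared errors. A minimal sketch, assuming each error is the Euclidean distance between an estimated scan position and its ground-truth position in meters (the challenge's exact evaluation protocol may differ, for example by also accounting for rotation); the function name is hypothetical:

```python
import math
from typing import List, Sequence


def rmse(estimated: List[Sequence[float]], ground_truth: List[Sequence[float]]) -> float:
    """Root mean squared Euclidean position error, in the units of the inputs (e.g. meters)."""
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("need equally sized, non-empty lists")
    squared = [sum((e - g) ** 2 for e, g in zip(p, q)) for p, q in zip(estimated, ground_truth)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical example: one scan placed 0.5 m off, one placed perfectly.
print(rmse([(0.3, 0.4, 0.0), (2.0, 0.0, 0.0)], [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)]))
```

Because errors are squared before averaging, RMSE penalizes a few large misregistrations more heavily than many small ones.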

Meanwhile, the Urban Robotics Lab team first took part in the HILTI SLAM Challenge in 2022, winning second place among academic teams; in 2023, it took first place overall in the LiDAR category and first place among academic teams in the vision category.

2025.05.30

KAIST debuts “DreamWaQer” - a quadrupedal robot that can walk in the dark

- The team led by Professor Hyun Myung of the School of Electrical Engineering developed “DreamWaQ”, a deep reinforcement learning-based control technology that lets walking robots move through atypical environments without visual or tactile information

- Utilization of “DreamWaQ” technology can enable mass production of various types of “DreamWaQers”

- Expected to be used for exploring atypical environments under special circumstances, such as fire disasters

A team of Korean engineering researchers has developed a quadrupedal robot technology that can climb up and down steps and move over uneven terrain, such as tree roots, without falling, all without the help of visual or tactile sensors. This makes it usable even in disaster situations where vision is impeded by darkness or thick smoke from flames.

KAIST (President Kwang Hyung Lee) announced on the 29th of March that Professor Hyun Myung's research team at the Urban Robotics Lab in the School of Electrical Engineering developed a walking robot control technology that enables robust 'blind locomotion' in various atypical environments.

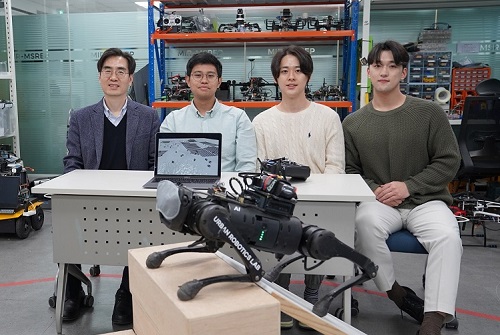

< (From left) Prof. Hyun Myung, Doctoral Candidates I Made Aswin Nahrendra, Byeongho Yu, and Minho Oh. In the foreground is the DreamWaQer, a quadrupedal robot equipped with DreamWaQ technology. >

The KAIST research team named the technology "DreamWaQ" because it enables walking robots to move about even in the dark, much as a person just out of bed can walk to the bathroom in the dark without seeing. Installing this technology on any legged robot will make it possible to create various types of "DreamWaQers".

Existing walking robot controllers are based on kinematic and/or dynamic models, an approach known as model-based control. In atypical environments such as open, uneven fields, these controllers need to obtain terrain feature information quickly to keep the robot stable as it walks, so their performance depends heavily on how well the robot can perceive its surroundings.

In contrast, the controller developed by Professor Hyun Myung's research team uses deep reinforcement learning (RL): having learned from data on diverse environments generated in a simulator, it can quickly compute appropriate control commands for each motor of the walking robot. Whereas existing controllers trained in simulation required separate re-tuning to work on an actual robot, the team's controller needs no additional tuning process and is therefore expected to be easily applicable to various walking robots.

DreamWaQ, the controller developed by the research team, consists mainly of a context-aided estimator network, which estimates ground and robot information, and a policy network, which computes control commands. From inertial and joint measurements, the estimator network infers ground information implicitly and the robot's state explicitly. These estimates are fed into the policy network, which uses them to generate optimal control commands. Both networks are trained together in simulation.
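The data flow described above, in which the estimator feeds both an explicit robot-state estimate and an implicit terrain context into the policy, can be sketched as follows. This is a hypothetical, untrained toy: single linear layers stand in for the real networks, the explicit estimate is assumed to be body velocity, and every dimension (45-value observations, a 5-step history, a 16-value latent, 12 joint commands) is an illustrative assumption rather than a figure from the paper.

```python
import random
from typing import List, Tuple

Vector = List[float]


class Linear:
    """Dense layer y = W x + b with small random, untrained weights."""

    def __init__(self, n_in: int, n_out: int, rng: random.Random) -> None:
        self.w = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
        self.b = [0.0] * n_out

    def __call__(self, x: Vector) -> Vector:
        if len(x) != len(self.w[0]):
            raise ValueError("input size mismatch")
        return [sum(wi * xi for wi, xi in zip(row, x)) + bi for row, bi in zip(self.w, self.b)]


class Estimator:
    """Proprioceptive history -> (explicit velocity estimate, implicit terrain latent)."""

    def __init__(self, n_hist: int, n_latent: int, rng: random.Random) -> None:
        self.velocity_head = Linear(n_hist, 3, rng)
        self.latent_head = Linear(n_hist, n_latent, rng)

    def __call__(self, history: Vector) -> Tuple[Vector, Vector]:
        return self.velocity_head(history), self.latent_head(history)


class Policy:
    """Current observation plus estimates -> one command per joint motor."""

    def __init__(self, n_obs: int, n_latent: int, n_joints: int, rng: random.Random) -> None:
        self.layer = Linear(n_obs + 3 + n_latent, n_joints, rng)

    def __call__(self, obs: Vector, velocity: Vector, latent: Vector) -> Vector:
        return self.layer(obs + velocity + latent)


N_OBS, HISTORY, N_LATENT, N_JOINTS = 45, 5, 16, 12  # hypothetical sizes
rng = random.Random(0)
estimator = Estimator(N_OBS * HISTORY, N_LATENT, rng)
policy = Policy(N_OBS, N_LATENT, N_JOINTS, rng)

history = [0.0] * (N_OBS * HISTORY)  # stacked recent IMU + joint readings
obs = history[-N_OBS:]               # most recent observation
velocity, latent = estimator(history)
commands = policy(obs, velocity, latent)
print(len(velocity), len(latent), len(commands))  # 3 16 12
```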

The context-aided estimator network is trained through supervised learning, while the policy network is trained with an actor-critic architecture, a deep RL method. The actor network can infer surrounding terrain information only implicitly. In simulation, however, the terrain is known exactly, so the critic (the value network), which has access to this exact terrain information, evaluates the actor network's policy.
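The key point of this training setup is that the critic sees privileged information that exists only in simulation, while the actor sees only what the real robot can measure. A minimal sketch of that split, plus a supervised loss for the estimator's explicit output, is below. The field names, the choice of true body velocity and terrain height samples as the privileged inputs, and the plain mean-squared-error loss are assumptions for illustration; the reinforcement-learning update itself is omitted.

```python
from dataclasses import dataclass
from typing import List

Vector = List[float]


@dataclass
class SimState:
    proprio: Vector          # IMU + joint readings: also available on the real robot
    true_velocity: Vector    # privileged: known exactly only in simulation
    terrain_heights: Vector  # privileged: terrain height samples around the robot


def actor_input(state: SimState, est_velocity: Vector, est_latent: Vector) -> Vector:
    """The actor never sees the terrain; it relies on the estimator's outputs."""
    return state.proprio + est_velocity + est_latent


def critic_input(state: SimState) -> Vector:
    """The critic (value network) receives exact privileged information during training."""
    return state.proprio + state.true_velocity + state.terrain_heights


def estimator_velocity_loss(est_velocity: Vector, state: SimState) -> float:
    """Supervised target: the simulator's ground-truth velocity (mean squared error)."""
    n = len(state.true_velocity)
    return sum((e - t) ** 2 for e, t in zip(est_velocity, state.true_velocity)) / n


if __name__ == "__main__":
    state = SimState(proprio=[0.1, -0.2], true_velocity=[0.5, 0.0, 0.0],
                     terrain_heights=[0.0, 0.05, 0.2])
    print(len(actor_input(state, [0.4, 0.0, 0.0], [0.3])))  # 6
    print(len(critic_input(state)))                         # 8
    print(estimator_velocity_loss([0.4, 0.0, 0.0], state))
```

Since the critic is only needed to score the actor during training, it can safely use information the real robot will never have.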

The whole training process takes only about an hour on a GPU-equipped PC. The actual robot carries only the trained actor (policy) network and the estimator; the critic is used only during training. Without looking at the surrounding terrain, the robot uses only its internal inertial measurement unit (IMU) and joint-angle measurements to "imagine" which of the many environments learned in simulation its current surroundings resemble. If it suddenly encounters a change in height, such as a staircase, it cannot know until its foot touches the step, but it infers the terrain information the moment its foot makes contact. Control commands suited to the estimated terrain are then sent to each motor, enabling rapidly adapted walking.
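The run-time behavior described above, where the robot notices a step only when its foot touches it and then adapts, follows naturally if the estimator is fed a short sliding window of recent IMU and joint readings: a contact shows up in the newest entries, and the next estimate reflects it. A minimal sketch of such a deployment loop follows. The window length, the class and function names, and the stand-in estimator and policy are hypothetical; in a system like DreamWaQ, the trained networks would take their place.

```python
from collections import deque
from typing import Callable, Deque, List, Tuple

Vector = List[float]


class ProprioHistory:
    """Sliding window of the most recent proprioceptive observations (IMU + joint angles)."""

    def __init__(self, length: int, obs_dim: int) -> None:
        self.obs_dim = obs_dim
        # Start zero-filled so the estimator always receives a fixed-size input.
        self.buffer: Deque[Vector] = deque(([0.0] * obs_dim for _ in range(length)), maxlen=length)

    def push(self, obs: Vector) -> None:
        if len(obs) != self.obs_dim:
            raise ValueError("observation size mismatch")
        self.buffer.append(list(obs))  # the oldest entry is dropped automatically

    def flat(self) -> Vector:
        return [v for obs in self.buffer for v in obs]


Estimator = Callable[[Vector], Tuple[Vector, Vector]]
Policy = Callable[[Vector, Vector, Vector], Vector]


def control_step(history: ProprioHistory, obs: Vector,
                 estimator: Estimator, policy: Policy) -> Vector:
    """One control tick: update the window, re-estimate context, compute motor commands."""
    history.push(obs)
    velocity, latent = estimator(history.flat())
    return policy(obs, velocity, latent)


if __name__ == "__main__":
    # Stand-ins for trained networks (hypothetical): summarize the window, echo inputs.
    fake_estimator: Estimator = lambda h: ([sum(h)], [max(h)])
    fake_policy: Policy = lambda o, v, z: o + v + z
    hist = ProprioHistory(length=3, obs_dim=2)
    for reading in ([0.0, 0.0], [0.0, 0.0], [0.4, 0.1]):  # last reading: foot meets a step
        commands = control_step(hist, reading, fake_estimator, fake_policy)
    print(hist.flat())  # [0.0, 0.0, 0.0, 0.0, 0.4, 0.1]
    print(commands)
```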

The DreamWaQer robot walked not only in the laboratory but also outdoors around the campus, over many curbs and speed bumps, and across a field full of tree roots and gravel, demonstrating its abilities by climbing steps as high as two-thirds of its body height. In addition, the research team confirmed that, regardless of the environment, it walked stably at speeds ranging from a slow 0.3 m/s to a fairly fast 1.0 m/s.

Ph.D. candidate I Made Aswin Nahrendra is the first author of the study, with his colleague Byeongho Yu as a co-author. The paper has been accepted for presentation at the upcoming IEEE International Conference on Robotics and Automation (ICRA), to be held in London at the end of May. (Paper title: DreamWaQ: Learning Robust Quadrupedal Locomotion With Implicit Terrain Imagination via Deep Reinforcement Learning)

Videos of the walking robot DreamWaQer equipped with DreamWaQ can be found at the addresses below.

Main Introduction: https://youtu.be/JC1_bnTxPiQ
Experiment Sketches: https://youtu.be/mhUUZVbeDA0

Meanwhile, this research was carried out with support from the Robot Industry Core Technology Development Program of the Ministry of Trade, Industry and Energy (MOTIE). (Task title: Development of Mobile Intelligence SW for Autonomous Navigation of Legged Robots in Dynamic and Atypical Environments for Real Application)

< Figure 1. Overview of DreamWaQ, the controller developed by the research team. The architecture consists of an estimator network that learns implicit and explicit estimates together, a policy network that acts as the controller, and a value network that guides the policy during training. On a real robot, only the estimator and policy networks are used; both run in less than 1 ms on the robot's on-board computer. >

< Figure 2. Since the estimator can implicitly estimate the ground information as the foot touches the surface, it is possible to adapt quickly to rapidly changing ground conditions. >

< Figure 3. Results showing that even a small walking robot was able to overcome steps with height differences of about 20 cm. >

2023.05.18

Professor Jee-Hwan Ryu Receives IEEE ICRA 2020 Outstanding Reviewer Award

Professor Jee-Hwan Ryu from the Department of Civil and Environmental Engineering was selected as one of this year's winners of the Outstanding Reviewer Award presented by the Institute of Electrical and Electronics Engineers International Conference on Robotics and Automation (IEEE ICRA). The award ceremony took place on June 5 during the conference, which is being held online for three months, from May 31 through August 31.

The IEEE ICRA Outstanding Reviewer Award is given every year to top reviewers who have provided constructive, high-quality paper reviews and contributed to improving the quality of the papers published through the conference.

Professor Ryu was one of four winners of this year's award, selected from 9,425 candidates, a pool about three times larger than in previous years. He was strongly recommended by the conference's editorial committee.

(END)

2020.06.10
