KAIST Develops New AI Inference-Scaling Method for Planning

<(From Left) Professor Sungjin Ahn, Ph.D. candidate Jaesik Yoon, M.S. candidate Hyeonseo Cho, M.S. candidate Doojin Baek, Professor Yoshua Bengio>

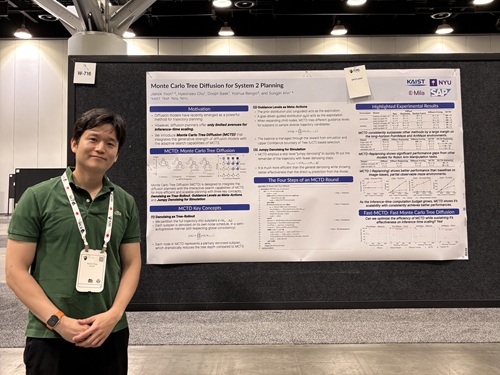

<Ph.D. candidate Jaesik Yoon from Professor Ahn's research team>

Diffusion models are widely used in many AI applications, but research on efficient inference-time scalability*, particularly for reasoning and planning (known as System 2 abilities), has been lacking. In response, the research team has developed a new technology that enables high-performance and efficient inference for planning based on diffusion models. This technology demonstrated its performance by achieving a 100% success rate on a giant maze-solving task that no existing model had solved. The results are expected to serve as core technology in various fields requiring real-time decision-making, such as intelligent robotics and real-time generative AI.

*Inference-time scalability: Refers to an AI model’s ability to flexibly adjust performance based on the computational resources available during inference.

KAIST (President Kwang Hyung Lee) announced on the 20th that a research team led by Professor Sungjin Ahn in the School of Computing has developed a new technology that significantly improves the inference-time scalability of diffusion-based reasoning through joint research with Professor Yoshua Bengio of the University of Montreal, a world-renowned scholar in deep learning. This study was carried out as part of a collaboration between KAIST and Mila (Quebec AI Institute) through the Prefrontal AI Joint Research Center.

Inference-time scaling is gaining attention as a core AI technology: after training, it allows a model to use additional computational resources during inference to solve complex reasoning and planning problems that cannot be addressed merely by scaling up data or model size. However, the diffusion models currently used across various applications lack effective methods for achieving such scalability, particularly for reasoning and planning.

To address this, Professor Ahn’s research team collaborated with Professor Bengio to propose a novel diffusion model inference technique based on Monte Carlo Tree Search. This method explores diverse generation paths during the diffusion process in a tree structure and is designed to efficiently identify high-quality outputs even with limited computational resources. As a result, it achieved a 100% success rate on the "giant-scale maze-solving" task, where previous methods had a 0% success rate.
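The idea of searching over generation paths in a tree, and of getting better plans from a larger inference budget, can be illustrated with a generic Monte Carlo Tree Search over discrete choices. This is a minimal sketch, not the method from the papers: the `Node` class, `tree_search`, and the toy `corridor_reward` task are hypothetical, and a random choice stands in for what would be a diffusion denoising step in the real system.

```python
import math
import random


class Node:
    """One partial plan in the search tree (hypothetical structure)."""

    def __init__(self, plan=(), parent=None):
        self.plan = plan          # choices made so far
        self.parent = parent
        self.children = {}        # choice -> Node
        self.visits = 0
        self.total_reward = 0.0


def ucb_score(child, parent_visits, c=1.4):
    """Upper-confidence bound: mean reward plus an exploration bonus."""
    mean = child.total_reward / child.visits
    return mean + c * math.sqrt(math.log(parent_visits) / child.visits)


def tree_search(choices, depth, reward_fn, iterations, rng):
    """Return the best complete plan found and its reward."""
    root = Node()
    best_plan, best_reward = None, -math.inf
    for _ in range(iterations):
        node = root
        # Selection and expansion: descend until a new child is added.
        while len(node.plan) < depth:
            untried = [ch for ch in choices if ch not in node.children]
            if untried:
                choice = rng.choice(untried)
                child = Node(node.plan + (choice,), node)
                node.children[choice] = child
                node = child
                break
            parent = node
            node = max(parent.children.values(),
                       key=lambda kid: ucb_score(kid, parent.visits))
        # Rollout: finish the partial plan with random choices.
        plan = node.plan
        while len(plan) < depth:
            plan += (rng.choice(choices),)
        reward = reward_fn(plan)
        if reward > best_reward:
            best_plan, best_reward = plan, reward
        # Backpropagation: update statistics along the path to the root.
        while node is not None:
            node.visits += 1
            node.total_reward += reward
            node = node.parent
    return best_plan, best_reward


def corridor_reward(plan, goal=4):
    """Toy task: steps of -1/+1 on a line; reward is 1.0 at the goal."""
    position = sum(plan)
    return 1.0 / (1.0 + abs(position - goal))


if __name__ == "__main__":
    # Larger budgets reuse the same seed, so the best reward never decreases.
    for budget in (5, 50, 500):
        plan, reward = tree_search((-1, 1), 6, corridor_reward,
                                   budget, random.Random(0))
        print(budget, plan, reward)
```

Because the search keeps a running best and the seed is fixed, raising `iterations` can only keep or improve the reported reward, which is the sense in which more inference compute buys better plans in this toy setting.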

In the follow-up research, the team also succeeded in significantly improving the major drawback of the proposed method—its slow speed. By efficiently parallelizing the tree search and optimizing computational cost, they achieved results of equal or superior quality up to 100 times faster than the previous version. This is highly meaningful as it demonstrates the method’s inference capabilities and real-time applicability simultaneously.

Professor Sungjin Ahn stated, “This research fundamentally overcomes the limitations of existing diffusion-based planning methods, which required high computational cost,” adding, “It can serve as core technology in various areas such as intelligent robotics, simulation-based decision-making, and real-time generative AI.”

The research results were presented as Spotlight papers (top 2.6% of all accepted papers) by doctoral student Jaesik Yoon of the School of Computing at the 42nd International Conference on Machine Learning (ICML 2025), held in Vancouver, Canada, from July 13 to 19.

※ Paper titles: Monte Carlo Tree Diffusion for System 2 Planning (Jaesik Yoon, Hyeonseo Cho, Doojin Baek, Yoshua Bengio, Sungjin Ahn, ICML 25), Fast Monte Carlo Tree Diffusion: 100x Speedup via Parallel Sparse Planning (Jaesik Yoon, Hyeonseo Cho, Yoshua Bengio, Sungjin Ahn)

※ DOI: https://doi.org/10.48550/arXiv.2502.07202, https://doi.org/10.48550/arXiv.2506.09498

This research was supported by the National Research Foundation of Korea.

2025.07.21 View 476

KAIST Turns an Unprecedented Idea into Reality: Quantum Computing with Magnets

What started as an idea under KAIST’s Global Singularity Research Project—"Can we build a quantum computer using magnets?"—has now become a scientific reality. A KAIST-led international research team has successfully demonstrated a core quantum computing technology using magnetic materials (ferromagnets) for the first time in the world.

KAIST (represented by President Kwang-Hyung Lee) announced on the 6th of May that a team led by Professor Kab-Jin Kim from the Department of Physics, in collaboration with the Argonne National Laboratory and the University of Illinois Urbana-Champaign (UIUC), has developed a “photon-magnon hybrid chip” and successfully implemented real-time, multi-pulse interference using magnetic materials—marking a global first.

< Photo 1. Dr. Moojune Song (left) and Professor Kab-Jin Kim (right) of KAIST Department of Physics >

In simple terms, the researchers developed a special chip that synchronizes light and internal magnetic vibrations (magnons), enabling the transmission of phase information between distant magnets. They succeeded in observing and controlling interference between multiple signals in real time. This marks the first experimental evidence that magnets can serve as key components in quantum computing, a pivotal step toward magnet-based quantum platforms.

The N and S poles of a magnet stem from the spin of electrons inside atoms. When many atoms align, their collective spin vibrations create a quantum particle known as a “magnon.”

Magnons are especially promising because of their nonreciprocal nature—they can carry information in only one direction, which makes them suitable for quantum noise isolation in compact quantum chips. They can also couple with both light and microwaves, enabling the potential for long-distance quantum communication over tens of kilometers.

Moreover, using special materials like antiferromagnets could allow quantum computers to operate at terahertz (THz) frequencies, far surpassing today’s hardware limitations, and possibly enabling room-temperature quantum computing without the need for bulky cryogenic equipment.

To build such a system, however, one must be able to transmit, measure, and control the phase information of magnons—the starting point and propagation of their waveforms—in real time. This had not been achieved until now.

< Figure 1. Superconducting Circuit-Based Magnon-Photon Hybrid System. (a) Schematic diagram of the device. A NbN superconducting resonator circuit fabricated on a silicon substrate is coupled with spherical YIG magnets (250 μm diameter), and magnons are generated and measured in real-time via a vertical antenna. (b) Photograph of the actual device. The distance between the two YIG spheres is 12 mm, a distance at which they cannot influence each other without the superconducting circuit. >

Professor Kim’s team used two tiny magnetic spheres made of Yttrium Iron Garnet (YIG) placed 12 mm apart with a superconducting resonator in between—similar to those used in quantum processors by Google and IBM. They input pulses into one magnet and successfully observed lossless transmission of magnon vibrations to the second magnet via the superconducting circuit.

They confirmed that, from single nanosecond pulses up to four microwave pulses, the magnon vibrations maintained their phase information and showed predictable constructive or destructive interference in real time, a behavior known as coherent interference.

By adjusting the pulse frequencies and their intervals, the researchers could also freely control the interference patterns of magnons, effectively showing for the first time that electrical signals can be used to manipulate magnonic quantum states.
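How pulse timing and frequency select constructive or destructive interference can be seen in a simplified two-pulse model. The sketch below is an illustration under stated assumptions, not the model used in the papers: it treats each pulse as exciting an oscillation of equal amplitude, ignores damping and the exchange between the two spheres, and assumes the relative phase is set by a hypothetical detuning `detuning_hz` multiplied by the delay `delay_s` between pulses.

```python
import cmath
import math


def combined_amplitude(amplitude, detuning_hz, delay_s):
    """Amplitude of two equal oscillations with relative phase 2*pi*detuning*delay."""
    phase = 2.0 * math.pi * detuning_hz * delay_s
    # Superposition of the first excitation and the phase-shifted second one.
    return abs(amplitude + amplitude * cmath.exp(1j * phase))


if __name__ == "__main__":
    detuning = 10e6  # hypothetical 10 MHz detuning
    for delay_ns in (0, 25, 50, 75, 100):
        amp = combined_amplitude(1.0, detuning, delay_ns * 1e-9)
        print(f"delay {delay_ns:3d} ns -> amplitude {amp:.3f}")
```

In this model the combined amplitude equals 2A|cos(φ/2)|, so a delay of half a detuning period cancels the two excitations and a full period doubles them.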

This work demonstrated that quantum gate operations using multiple pulses—a fundamental technique in quantum information processing—can be implemented using a hybrid system of magnetic materials and superconducting circuits. This opens the door for the practical use of magnet-based quantum devices.

< Figure 2. Experimental Data. (a) Measurement results of magnon-magnon band anticrossing via continuous wave measurement, showing the formation of a strong coupling hybrid system. (b) Magnon pulse exchange oscillation phenomenon between YIG spheres upon single pulse application. It can be seen that magnon information is coherently transmitted at regular time intervals through the superconducting circuit. (c,d) Magnon interference phenomenon upon dual pulse application. The magnon information state can be arbitrarily controlled by adjusting the time interval and carrier frequency between pulses. >

Professor Kab-Jin Kim stated, “This project began with a bold, even unconventional idea proposed to the Global Singularity Research Program: ‘What if we could build a quantum computer with magnets?’ The journey has been fascinating, and this study not only opens a new field of quantum spintronics, but also marks a turning point in developing high-efficiency quantum information processing devices.”

The research was co-led by postdoctoral researcher Moojune Song (KAIST), Dr. Yi Li and Dr. Valentine Novosad from Argonne National Lab, and Prof. Axel Hoffmann’s team at UIUC. The results were published in Nature Communications on April 17 and npj Spintronics on April 1, 2025.

Paper 1: Single-shot magnon interference in a magnon-superconducting-resonator hybrid circuit, Nat. Commun. 16, 3649 (2025)

DOI: https://doi.org/10.1038/s41467-025-58482-2

Paper 2: Single-shot electrical detection of short-wavelength magnon pulse transmission in a magnonic ultra-thin-film waveguide, npj Spintronics 3, 12 (2025)

DOI: https://doi.org/10.1038/s44306-025-00072-5

The research was supported by KAIST’s Global Singularity Research Initiative, the National Research Foundation of Korea (including the Mid-Career Researcher, Leading Research Center, and Quantum Information Science Human Resource Development programs), and the U.S. Department of Energy.

2025.06.12 View 4308

KAIST's Pioneering VR Precision Technology & Choreography Tool Receive Spotlights at CHI 2025

Accurate pointing in virtual spaces is essential for seamless interaction. If pointing is not precise, selecting the desired object becomes challenging, breaking user immersion and reducing overall experience quality. KAIST researchers have developed a technology that offers a vivid, lifelike experience in virtual space, alongside a new tool that assists choreographers throughout the creative process.

KAIST (President Kwang-Hyung Lee) announced on May 13th that a research team led by Professor Sang Ho Yoon of the Graduate School of Culture Technology, in collaboration with Professor Yang Zhang of the University of California, Los Angeles (UCLA), has developed the ‘T2IRay’ technology and the ‘ChoreoCraft’ platform, which enables choreographers to work more freely and creatively in virtual reality. These technologies received two Honorable Mention awards, recognizing the top 5% of papers, at CHI 2025*, the best international conference in the field of human-computer interaction, hosted by the Association for Computing Machinery (ACM) from April 25 to May 1.

< (From left) PhD candidates Jina Kim and Kyungeun Jung, Master's candidate Hyunyoung Han, and Professor Sang Ho Yoon of KAIST Graduate School of Culture Technology, and Professor Yang Zhang (top) of UCLA >

T2IRay: Enabling Virtual Input with Precision

T2IRay introduces a novel input method that allows for precise object pointing in virtual environments by expanding traditional thumb-to-index gestures. This approach overcomes previous limitations, such as interruptions or reduced accuracy due to changes in hand position or orientation.

The technology uses a local coordinate system based on finger relationships, ensuring continuous input even as hand positions shift. It accurately captures subtle thumb movements within this coordinate system, integrating natural head movements to allow fluid, intuitive control across a wide range.
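A hand-anchored local coordinate system of this kind can be sketched as follows. This is a hypothetical construction for illustration, not the T2IRay implementation: the choice of landmarks (`index_base`, `index_tip`, `middle_base`, `thumb_tip`) and the axis definitions are assumptions. The point it shows is that thumb coordinates expressed relative to the fingers do not change when the whole hand moves or rotates.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(x / length for x in v)


def thumb_in_index_frame(index_base, index_tip, middle_base, thumb_tip):
    """Express the thumb tip in a frame attached to the index finger."""
    x_axis = normalize(sub(index_tip, index_base))   # along the index finger
    across = sub(middle_base, index_base)            # roughly across the knuckles
    z_axis = normalize(cross(x_axis, across))        # normal to the finger plane
    y_axis = cross(z_axis, x_axis)                   # completes a right-handed frame
    rel = sub(thumb_tip, index_base)
    return (dot(rel, x_axis), dot(rel, y_axis), dot(rel, z_axis))


if __name__ == "__main__":
    # Hypothetical landmark positions in metres, in world coordinates.
    hand = [(0.0, 0.0, 0.0), (0.08, 0.0, 0.0), (0.0, -0.02, 0.0), (0.03, 0.02, 0.01)]
    moved = [(x + 0.5, y - 0.2, z + 1.0) for x, y, z in hand]
    print(thumb_in_index_frame(*hand))
    print(thumb_in_index_frame(*moved))  # same local coordinates after translation
```

A pointing ray driven by these local coordinates would therefore stay continuous while the hand shifts, which is the property the paragraph above describes.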

< Figure 1. T2IRay framework utilizing the delicate movements of the thumb and index fingers for AR/VR pointing >

Professor Sang Ho Yoon explained, “T2IRay can significantly enhance the user experience in AR/VR by enabling smooth, stable control even when the user’s hands are in motion.”

This study, led by first author Jina Kim, was supported by the Excellent New Researcher Support Project of the National Research Foundation of Korea under the Ministry of Science and ICT, as well as the University ICT Research Center (ITRC) Support Project of the Institute of Information and Communications Technology Planning and Evaluation (IITP).

▴ Paper title: T2IRay: Design of Thumb-to-Index Based Indirect Pointing for Continuous and Robust AR/VR Input

▴ Paper link: https://doi.org/10.1145/3706598.3713442

▴ T2IRay demo video: https://youtu.be/ElJlcJbkJPY

ChoreoCraft: Creativity Support through VR for Choreographers

In addition, Professor Yoon’s team developed ‘ChoreoCraft,’ a virtual reality tool designed to support choreographers by addressing the unique challenges they face, such as memorizing complex movements, overcoming creative blocks, and managing subjective feedback.

ChoreoCraft reduces reliance on memory by allowing choreographers to save and refine movements directly within a VR space, using a motion-capture avatar for real-time interaction. It also enhances creativity by suggesting movements that naturally fit with prior choreography and musical elements. Furthermore, the system provides quantitative feedback by analyzing kinematic factors like motion stability and engagement, helping choreographers make data-driven creative decisions.

< Figure 2. ChoreoCraft's approaches to encourage creative process >

Professor Yoon noted, “ChoreoCraft is a tool designed to address the core challenges faced by choreographers, enhancing both creativity and efficiency. In user tests with professional choreographers, it received high marks for its ability to spark creative ideas and provide valuable quantitative feedback.”

This research was conducted in collaboration with doctoral candidate Kyungeun Jung and master’s candidate Hyunyoung Han, alongside the Electronics and Telecommunications Research Institute (ETRI) and One Million Co., Ltd. (CEO Hye-rang Kim), with support from the Cultural and Arts Immersive Service Development Project by the Ministry of Culture, Sports and Tourism.

▴ Paper title: ChoreoCraft: In-situ Crafting of Choreography in Virtual Reality through Creativity Support Tools

▴ Paper link: https://doi.org/10.1145/3706598.3714220

▴ ChoreoCraft demo video: https://youtu.be/Ms1fwiSBjjw

*CHI (Conference on Human Factors in Computing Systems): The premier international conference on human-computer interaction, organized by the ACM, was held this year from April 25 to May 1, 2025.

2025.05.13 View 5398

KAIST & CMU Unveil Amuse, a Songwriting AI-Collaborator to Help Create Music

Wouldn't it be great if music creators had someone to brainstorm with, help them when they're stuck, and explore different musical directions together? Researchers of KAIST and Carnegie Mellon University (CMU) have developed AI technology similar to a fellow songwriter who helps create music.

At KAIST (President Kwang-Hyung Lee), a research team led by Professor Sung-Ju Lee of the School of Electrical Engineering has developed Amuse, an AI-based music creation support system, in collaboration with CMU. The research was presented at the ACM Conference on Human Factors in Computing Systems (CHI), one of the world’s top conferences in human-computer interaction, held in Yokohama, Japan from April 26 to May 1. It received the Best Paper Award, given to only the top 1% of all submissions.

< (From left) Professor Chris Donahue of Carnegie Mellon University, Ph.D. Student Yewon Kim and Professor Sung-Ju Lee of the School of Electrical Engineering >

The system developed by Professor Sung-Ju Lee’s research team, Amuse, is an AI-based system that converts various forms of inspiration such as text, images, and audio into harmonic structures (chord progressions) to support composition.

For example, if a user inputs a phrase, image, or sound clip such as “memories of a warm summer beach”, Amuse automatically generates and suggests chord progressions that match the inspiration.

Unlike existing generative AI, Amuse is differentiated in that it respects the user's creative flow and naturally induces creative exploration through an interactive method that allows flexible integration and modification of AI suggestions.

The core technology of the Amuse system is a generation method that blends two approaches: a large language model creates chord progressions based on the user's prompt and inspiration, while another AI model, trained on real music data, filters out awkward or unnatural results using rejection sampling.
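The propose-then-filter idea can be sketched with textbook rejection sampling over chord progressions. This is a toy illustration, not the Amuse implementation: `propose` draws uniformly random progressions as a stand-in for the language model, and the `TRANSITION_WEIGHT` table is a made-up stand-in for a model trained on real music data. A candidate is accepted with probability equal to its score divided by an upper bound on the score, so accepted progressions follow the scoring model rather than the proposer.

```python
import random

CHORDS = ("C", "F", "G", "Am")

# Hypothetical transition weights standing in for a model trained on real music.
TRANSITION_WEIGHT = {
    ("C", "F"): 1.0, ("C", "G"): 1.0, ("C", "Am"): 0.8,
    ("F", "G"): 1.0, ("F", "C"): 0.8,
    ("G", "C"): 1.0, ("G", "Am"): 0.6,
    ("Am", "F"): 1.0,
}
DEFAULT_WEIGHT = 0.1  # unlisted transitions: allowed but unlikely
MAX_WEIGHT = 1.0      # largest weight in the table, used for the score bound


def naturalness(progression):
    """Unnormalized score: product of the transition weights."""
    score = 1.0
    for a, b in zip(progression, progression[1:]):
        if a == b:
            return 0.0  # this toy model treats immediate repeats as unnatural
        score *= TRANSITION_WEIGHT.get((a, b), DEFAULT_WEIGHT)
    return score


def propose(length, rng):
    """Stand-in for the language model: a uniformly random progression."""
    return tuple(rng.choice(CHORDS) for _ in range(length))


def sample_progression(length, rng, max_tries=100_000):
    """Rejection sampling: accept a proposal with probability score / bound."""
    score_bound = MAX_WEIGHT ** (length - 1)
    for _ in range(max_tries):
        candidate = propose(length, rng)
        if rng.random() < naturalness(candidate) / score_bound:
            return candidate
    raise RuntimeError("no candidate accepted within max_tries")


if __name__ == "__main__":
    rng = random.Random(0)
    for _ in range(5):
        print(" - ".join(sample_progression(4, rng)))
```

Because the proposer is uniform, the accepted progressions are distributed in proportion to `naturalness`, and candidates with a score of zero are never returned.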

< Figure 1. Amuse system configuration. After music keywords are extracted from user input, a chord progression is generated by a large language model and refined through rejection sampling (left). Chord extraction from audio input is also possible (right). The bottom is an example visualizing the chord structure of the generated progression. >

The research team conducted a user study with practicing musicians and found that Amuse has high potential as a creative companion, or Co-Creative AI, a concept in which people and AI collaborate rather than having a generative AI simply put a song together.

The paper, authored by Ph.D. student Yewon Kim and Professor Sung-Ju Lee of the KAIST School of Electrical Engineering together with Professor Chris Donahue of Carnegie Mellon University, demonstrated the potential of creative AI system design for both academia and industry.

※ Paper title: Amuse: Human-AI Collaborative Songwriting with Multimodal Inspirations

※ DOI: https://doi.org/10.1145/3706598.3713818

※ Research demo video: https://youtu.be/udilkRSnftI?si=FNXccC9EjxHOCrm1

※ Research homepage: https://nmsl.kaist.ac.kr/projects/amuse/

Professor Sung-Ju Lee said, “Recent generative AI technology has raised concerns because it can directly imitate copyrighted content, infringing creators’ copyrights, or generate results one-sidedly regardless of the creator’s intention. Aware of this trend, the research team paid attention to what creators actually need and focused on designing a creator-centered AI system.”

He continued, “Amuse is an attempt to explore the possibility of collaborating with AI while preserving the creator’s initiative, and we expect it to be a starting point for a more creator-friendly direction in the development of music creation tools and generative AI systems.”

This research was supported by the National Research Foundation of Korea with funding from the government (Ministry of Science and ICT) (RS-2024-00337007).

2025.05.07 View 7225

KAIST Develops Neuromorphic Semiconductor Chip that Learns and Corrects Itself

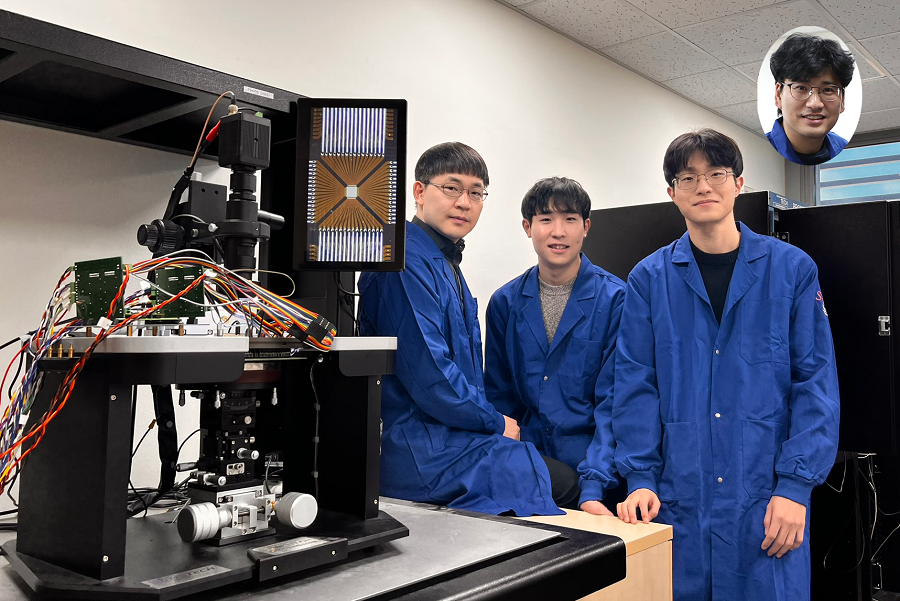

< Photo. The research team of the School of Electrical Engineering posing with the newly developed processor. (From center to right) Professor Young-Gyu Yoon, Integrated Master's and Doctoral Program students Seungjae Han and Hakcheon Jeong, and Professor Shinhyun Choi >

- Professor Shinhyun Choi and Professor Young-Gyu Yoon’s joint research team from the School of Electrical Engineering developed a computing chip that can learn, correct errors, and process AI tasks

- The chip is equipped with highly reliable memristor devices and a self-error-correction function, enabling real-time learning and image processing

Existing computer systems have separate data processing and storage devices, making them inefficient for processing complex data such as AI workloads. A KAIST research team has developed a memristor-based integrated system that processes information in a way similar to our brain. It is now ready for application in various devices, including smart security cameras, which could recognize suspicious activity immediately without relying on remote cloud servers, and medical devices, which could help analyze health data in real time.

KAIST (President Kwang Hyung Lee) announced on the 17th of January that the joint research team of Professor Shinhyun Choi and Professor Young-Gyu Yoon of the School of Electrical Engineering has developed a next-generation neuromorphic semiconductor-based ultra-small computing chip that can learn and correct errors on its own.

< Figure 1. Scanning electron microscope (SEM) image of a computing chip equipped with a highly reliable selector-less 32×32 memristor crossbar array (left). Hardware system developed for real-time artificial intelligence implementation (right). >

What is special about this computing chip is that it can learn and correct errors that occur due to non-ideal characteristics that were difficult to solve in existing neuromorphic devices. For example, when processing a video stream, the chip learns to automatically separate a moving object from the background, and it becomes better at this task over time.

This self-learning ability has been proven by achieving accuracy comparable to ideal computer simulations in real-time image processing. The research team's main achievement is that it has completed a system that is both reliable and practical, beyond the development of brain-like components.

The research team has developed the world's first memristor-based integrated system that can adapt to immediate environmental changes, and has presented an innovative solution that overcomes the limitations of existing technology.

< Figure 2. Background and foreground separation results of an image containing non-ideal characteristics of memristor devices (left). Real-time image separation results through on-device learning using the memristor computing chip developed by our research team (right). >

At the heart of this innovation is a next-generation semiconductor device called a memristor*. The variable resistance of this device can play the role of synapses in neural networks, and by utilizing it, data storage and computation can be performed simultaneously, just as in our brain cells.

*Memristor: A compound word of memory and resistor; a next-generation electrical device whose resistance value is determined by the amount and direction of charge that has flowed between its two terminals in the past.
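To make the idea of storing and computing in the same place more concrete, the sketch below shows the textbook way a memristor crossbar is commonly modeled: each cell's conductance stores a weight, input voltages are applied to the rows, and each column current is the weighted sum given by Ohm's law and Kirchhoff's current law. This is a general illustration under idealized assumptions (no wire resistance, no device noise), with hypothetical values in arbitrary units; it is not the research team's circuit.

```python
def crossbar_currents(voltages, conductances):
    """Idealized crossbar read-out.

    voltages:     list of row input voltages V_i
    conductances: matrix G[i][j] stored in the memristor at row i, column j
    returns:      list of column currents I_j = sum_i V_i * G[i][j]
    """
    n_cols = len(conductances[0])
    return [
        sum(v * row[j] for v, row in zip(voltages, conductances))
        for j in range(n_cols)
    ]

# Hypothetical 2x2 example in arbitrary units.
G = [[1.0, 2.0],
     [3.0, 4.0]]
V = [0.5, 1.0]
I = crossbar_currents(V, G)  # [0.5*1 + 1*3, 0.5*2 + 1*4] = [3.5, 5.0]
```

Because the stored conductances take part directly in the multiplication, no weight has to be fetched from a separate memory before the computation.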

The research team designed a highly reliable memristor whose resistance changes can be precisely controlled, and developed an efficient system that uses self-learning to eliminate the need for complex compensation processes. This study is significant in that it experimentally verified the commercial viability of a next-generation neuromorphic semiconductor-based integrated system that supports real-time learning and inference.

This technology will revolutionize the way artificial intelligence is used in everyday devices, allowing AI tasks to be processed locally without relying on remote cloud servers, making them faster, more privacy-preserving, and more energy-efficient.

“This system is like a smart workspace where everything is within arm’s reach instead of having to go back and forth between desks and file cabinets,” explained KAIST researchers Hakcheon Jeong and Seungjae Han, who led the development of this technology. “This is similar to the way our brain processes information, where everything is processed efficiently at once at one spot.”

The research was conducted with Hakcheon Jeong and Seungjae Han, students in the Integrated Master's and Doctoral Program at the KAIST School of Electrical Engineering, as co-first authors, and the results were published online in the international academic journal Nature Electronics on January 8, 2025.

*Paper title: Self-supervised video processing with self-calibration on an analogue computing platform based on a selector-less memristor array ( https://doi.org/10.1038/s41928-024-01318-6 )

This research was supported by the Next-Generation Intelligent Semiconductor Technology Development Project, Excellent New Researcher Project and PIM AI Semiconductor Core Technology Development Project of the National Research Foundation of Korea, and the Electronics and Telecommunications Research Institute Research and Development Support Project of the Institute of Information & Communications Technology Planning & Evaluation.

2025.01.17 View 9835

KAIST Develops Insect-Eye-Inspired Camera Capturing 9,120 Frames Per Second

< (From left) Bio and Brain Engineering PhD Student Jae-Myeong Kwon, Professor Ki-Hun Jeong, PhD Student Hyun-Kyung Kim, PhD Student Young-Gil Cha, and Professor Min H. Kim of the School of Computing >

The compound eyes of insects can detect fast-moving objects in parallel and, in low-light conditions, enhance sensitivity by integrating signals over time to determine motion. Inspired by these biological mechanisms, KAIST researchers have successfully developed a low-cost, high-speed camera that overcomes the limitations of frame rate and sensitivity faced by conventional high-speed cameras.

KAIST (represented by President Kwang Hyung Lee) announced on the 16th of January that a research team led by Professors Ki-Hun Jeong (Department of Bio and Brain Engineering) and Min H. Kim (School of Computing) has developed a novel bio-inspired camera capable of ultra-high-speed imaging with high sensitivity by mimicking the visual structure of insect eyes.

High-quality imaging under high-speed and low-light conditions is a critical challenge in many applications. While conventional high-speed cameras excel in capturing fast motion, their sensitivity decreases as frame rates increase because the time available to collect light is reduced.

To address this issue, the research team adopted an approach similar to insect vision, utilizing multiple optical channels and temporal summation. Unlike traditional monocular camera systems, the bio-inspired camera employs a compound-eye-like structure that allows for the parallel acquisition of frames from different time intervals.

< Figure 1. (A) Vision in a fast-eyed insect. Reflected light from swiftly moving objects sequentially stimulates the photoreceptors along the individual optical channels, called ommatidia, whose visual signals are processed separately and in parallel via the lamina and medulla. Each neural response is temporally summed to enhance the visual signals. The parallel processing and temporal summation allow fast imaging in dim light. (B) High-speed and high-sensitivity microlens array camera (HS-MAC). A rolling shutter image sensor is utilized to simultaneously acquire multiple frames by channel division, and temporal summation is performed in parallel to realize high speed and sensitivity even in a low-light environment. In addition, the frame components of a single fragmented array image are stitched into a single blurred frame, which is subsequently deblurred by compressive image reconstruction. >

During this process, light is accumulated over overlapping time periods for each frame, increasing the signal-to-noise ratio. The researchers demonstrated that their bio-inspired camera could capture objects up to 40 times dimmer than those detectable by conventional high-speed cameras.
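Why accumulating light raises the signal-to-noise ratio can be shown with a small worked example. The sketch assumes an idealized, purely shot-noise-limited (Poisson) detector, where the noise is the square root of the collected photon count, and it ignores read noise and blur. The photon counts are hypothetical and are not measurements from the paper.

```python
import math

def shot_noise_snr(photons_per_frame, frames_summed=1):
    """SNR of a shot-noise-limited signal: mean / std = sqrt(total photons)."""
    total_photons = photons_per_frame * frames_summed
    return math.sqrt(total_photons)

single = shot_noise_snr(100)       # one short exposure: SNR = 10.0
summed = shot_noise_snr(100, 16)   # 16 exposures accumulated: SNR = 40.0
gain = summed / single             # improvement factor = sqrt(16) = 4.0
```

Under this assumption the improvement grows with the square root of the number of summed intervals, which is why integration helps most when each individual exposure is very dim.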

The team also introduced a "channel-splitting" technique to significantly enhance the camera's speed, achieving frame rates thousands of times faster than those supported by the image sensors used in packaging. Additionally, a "compressed image restoration" algorithm was employed to eliminate blur caused by frame integration and reconstruct sharp images.

The resulting bio-inspired camera is less than one millimeter thick and extremely compact, capable of capturing 9,120 frames per second while providing clear images in low-light conditions.

< Figure 2. A high-speed, high-sensitivity biomimetic camera packaged in an image sensor. It is made small enough to fit on a finger, with a thickness of less than 1 mm. >

The research team plans to extend this technology to develop advanced image processing algorithms for 3D imaging and super-resolution imaging, aiming for applications in biomedical imaging, mobile devices, and various other camera technologies.

Hyun-Kyung Kim, a doctoral student in the Department of Bio and Brain Engineering at KAIST and the study's first author, stated, “We have experimentally validated that the insect-eye-inspired camera delivers outstanding performance in high-speed and low-light imaging despite its small size. This camera opens up possibilities for diverse applications in portable camera systems, security surveillance, and medical imaging.”

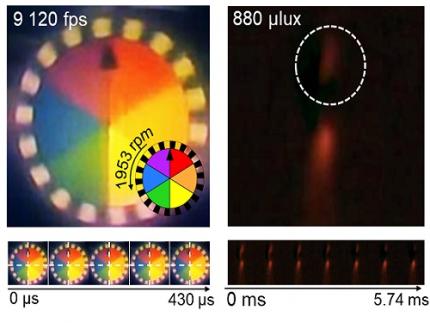

< Figure 3. Rotating plate and flame captured using the high-speed, high-sensitivity biomimetic camera. The rotating plate at 1,950 rpm was accurately captured at 9,120 fps. In addition, the pinch-off of the flame with a faint intensity of 880 µlux was accurately captured at 1,020 fps. >
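As a rough sanity check on the numbers in the Figure 3 caption, the following short calculation estimates how far the plate turns between consecutive frames. It uses only the 1,950 rpm and 9,120 fps values stated in the caption; the variable names are illustrative.

```python
RPM = 1950   # plate rotation speed from the Figure 3 caption
FPS = 9120   # camera frame rate from the Figure 3 caption

revs_per_second = RPM / 60                        # 32.5 revolutions per second
frames_per_rev = FPS / revs_per_second            # about 280.6 frames per revolution
degrees_per_frame = 360 * revs_per_second / FPS   # about 1.28 degrees per frame
```

At roughly 1.28 degrees of rotation per frame, each revolution of the plate is sampled by about 280 frames.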

This research was published in the international journal Science Advances in January 2025 (Paper Title: “Biologically-inspired microlens array camera for high-speed and high-sensitivity imaging”).

DOI: https://doi.org/10.1126/sciadv.ads3389

This study was supported by the Korea Research Institute for Defense Technology Planning and Advancement (KRIT) of the Defense Acquisition Program Administration (DAPA), the Ministry of Science and ICT, and the Ministry of Trade, Industry and Energy (MOTIE).

2025.01.16 View 9336

“Cross-Generation Collaborative Labs” for Semiconductor, Chemistry, and Computer Science Opened

< Photo of Professor Hoi-Jun Yoo (center) of the School of Electrical Engineering at the signboard unveiling ceremony >

KAIST held a ceremony to mark the opening of three additional ‘Cross-Generation Collaborative Labs’ on the morning of January 7th, 2025.

The “Next-Generation AI Semiconductor System Lab” by Professor Hoi-Jun Yoo of the School of Electrical Engineering, the “Molecular Spectroscopy and Chemical Dynamics Lab” by Professor Sang Kyu Kim of the Department of Chemistry, and the “Advanced Data Computing Lab” by Professor Sue Bok Moon of the School of Computing are the three new labs given the honored title of “Cross-Generation Collaborative Lab”.

The Cross-Generation Collaborative Lab is KAIST’s unique system set up to facilitate collaboration between retiring professors and junior professors, so that the achievements and know-how the senior professors have accumulated over their academic careers can be carried forward. Since its introduction in 2018, nine labs have been named Cross-Generation Collaborative Labs, and this year’s additions bring the total to twelve.

The ‘Next-Generation AI Semiconductor System Lab’ led by Professor Hoi-Jun Yoo will be operated by Professor Joo-Young Kim of the same school.

Professor Hoi-Jun Yoo is a world-renowned scholar with outstanding research achievements in the field of on-device AI semiconductor design. Professor Joo-Young Kim is an up-and-coming researcher studying large language models and design of AI semiconductors for server computers, and is currently researching technologies to design PIM (Processing-in-Memory), a core technology in the field of AI semiconductors.

Their research goal is to systematically collaborate and transfer next-generation AI semiconductor design technology, including brain-mimicking AI algorithms such as deep neural networks and generative AI, to integrate core technologies, and to maximize the usability of R&D outputs, thereby further solidifying the position of Korean AI semiconductor companies in the global market.

Professor Hoi-Jun Yoo said, “I believe that, through collaborative research, we will be able to present a development direction for the next-generation AI semiconductor industry at home and abroad and play a key role in transferring and expanding global leadership.”

< Professor Sang Kyu Kim of the Department of Chemistry (middle), at the signboard unveiling ceremony for his laboratory >

The “Molecular Spectroscopy and Chemical Dynamics Laboratory”, led by Professor Sang Kyu Kim of the Department of Chemistry, will be operated by Professor Tae Kyu Kim of the same department, and another professor in the field of spectroscopy and dynamics will join in the future.

Professor Sang Kyu Kim has secured technologies for developing unique experimental equipment based on ultrashort lasers and supersonic molecular beams, and is a world leader who has been creatively pioneering new fields of experimental physical chemistry.

The research goal is to describe and verify chemical reactions from a quantum mechanical perspective and to introduce new theories and technologies in pursuit of a complete understanding of the principles of chemical reactions. In addition, the resulting basic scientific knowledge will be applied to the design of new materials.

Professor Sang Kyu Kim said, “I am very happy to be able to pass on the research infrastructure to the next generation through this system, and I will continue to nurture it to grow into a world-class research lab through trans-generational collaborative research.”

< Photo of Professor Sue Bok Moon (center) at the signboard unveiling ceremony by the School of Computing >

Lastly, the “Advanced Data Computing Lab” led by Professor Sue Bok Moon is joined by Professor Mee Young Cha of the same school and Professor Wonjae Lee of the Graduate School of Culture Technology.

Professor Sue Bok Moon showed the infinite possibilities of large-scale data-based social network research through Cyworld, YouTube, and Twitter, and had a great influence on related fields beyond the field of computer science.

Professor Mee Young Cha is a data scientist who uses big data-based AI to analyze difficult social issues such as misinformation, poverty, and disaster detection. She is the first Korean to be recognized for her achievements as the director of the Max Planck Institute in Germany, a world-class basic science research institute. Expectations are therefore high for synergy from overseas collaborative research and from technology transfer and sharing among the professors participating in the collaborative research lab. Professor Wonjae Lee is researching dynamic interaction analysis between science and technology using structural topic models.

They plan to conduct research aimed at improving the analysis and understanding of negative influences occurring online and, in particular, at developing a model that uses emotions and morality to detect the precursors of hate so that hateful expressions can be blocked preemptively.

Professor Sue Bok Moon said, “Through this collaborative research lab, we will play a key role in conducting in-depth collaborative research on unexpected negative influences in the AI era so that we can have a high level of competitiveness worldwide.”

The ceremonies for the unveiling of the new Cross-Generation Collaborative Lab signboards were held in front of each lab from 10:00 AM on the 7th, attended by President Kwang Hyung Lee, Senior Vice President for Research Sang Yup Lee, other key KAIST officials, and the new staff members joining the laboratories.

2025.01.07 View 6687

KAIST Professor Uichin Lee Receives Distinguished Paper Award from ACM

< Photo. Professor Uichin Lee (left) receiving the award >

KAIST (President Kwang Hyung Lee) announced on the 25th of October that Professor Uichin Lee’s research team from the School of Computing received the Distinguished Paper Award at the International Joint Conference on Pervasive and Ubiquitous Computing and International Symposium on Wearable Computing (Ubicomp / ISWC) hosted by the Association for Computing Machinery (ACM) in Melbourne, Australia on October 8.

The ACM Ubiquitous Computing Conference is the most prestigious international conference where leading universities and global companies from around the world present the latest research results on ubiquitous computing and wearable technologies in the field of human-computer interaction (HCI).

The main conference program is composed of invited papers published in the Proceedings of the ACM (PACM) on Interactive, Mobile, Wearable and Ubiquitous Technologies (IMWUT), which covers the latest research in the field of ubiquitous and wearable computing.

The Distinguished Paper Award Selection Committee selected eight papers from among the 205 papers published in Vol. 7 of the ACM Proceedings (PACM IMWUT) that made outstanding and exemplary contributions to the research community. The committee, which consists of 16 prominent experts who are current and former members of the journal's editorial board, made the selection after a rigorous review of all papers that stretched over a month.

< Figure 1. BeActive mobile app to promote physical activity to form active lifestyle habits >

The research that won the Distinguished Paper Award, titled “Understanding Disengagement in Just-in-Time Mobile Health Interventions,” was conducted with Dr. Junyoung Park, a graduate of the KAIST Graduate School of Data Science, as first author.

Professor Uichin Lee’s research team explored user engagement with ‘Just-in-Time Mobile Health Interventions’, which use sensor data collected by health management apps to actively deliver interventions at opportune moments, on the premise that such apps must actually be used in order to be effective.

< Figure 2. Traditional user-requested digital behavior change intervention (DBCI) delivery (Pull) vs. Automatic transmission (Push) for Just-in-Time (JIT) mobile DBCI using smartphone sensing technologies >

The research team conducted a systematic analysis of user disengagement, that is, the decline in user engagement, in digital behavior change interventions. They developed the BeActive system, an app that promotes physical activity to help users form active lifestyle habits, and systematically analyzed the effects of users’ self-control ability and boredom-proneness on compliance with behavioral interventions over time.

The results of an 8-week field trial revealed that a decline in participation is unavoidable even when just-in-time interventions are provided according to the user’s situation. However, users with high self-control and low boredom-proneness complied with the just-in-time interventions delivered through the app at a significantly higher rate than users in other groups.

In particular, users with high boredom proneness easily got tired of the repeated push interventions, and their compliance with the app decreased more quickly than in other groups.

< Figure 3. Just-in-time mobile health intervention: a demonstrative case of the BeActive system. When a user is detected to have been sitting for more than 50 minutes, an automatic push notification recommends a short active break that the user can complete for reward points. >

Professor Uichin Lee explained, “As the first study on user engagement in digital therapeutics and wellness services utilizing mobile just-in-time health interventions, this research provides a foundation for exploring ways to empower user engagement.” He further added, “By leveraging large language models (LLMs) and comprehensive context-aware technologies, it will be possible to develop user-centered AI technologies that can significantly boost engagement.”

< Figure 4. A conceptual illustration of user engagement in digital health apps. Engagement in digital health apps consists of (1) engagement in using digital health apps and (2) engagement in behavioral interventions provided by digital health apps, i.e., compliance with behavioral interventions. Repeated adherence to the behavioral interventions recommended by digital health apps can help achieve distal health goals. >

This study was conducted with the support of the 2021 Biomedical Technology Development Program and the 2022 Basic Research and Development Program of the National Research Foundation of Korea funded by the Ministry of Science and ICT.

< Figure 5. A conceptual illustration of user disengagement and engagement in digital behavior change intervention (DBCI) apps. In general, user engagement in digital health intervention apps consists of two components: engagement in digital health apps and engagement in behavioral interventions recommended by such apps (known as behavioral compliance or intervention adherence). The distinct user stages can be divided into adoption, abandonment, and attrition. >

< Figure 6. Trends in the frequency of app usage and adherence to behavioral interventions over 8 weeks. ● SC: Self-Control Ability (High-SC: user group with high self-control, Low-SC: user group with low self-control) ● BD: Boredom-Proneness (High-BD: user group with high boredom-proneness, Low-BD: user group with low boredom-proneness). App usage frequency declined over time, but the adherence rates of participants with High-SC and Low-BD were significantly higher than those of other groups. >

2024.10.25 View 10194
