IEEE


KAIST Professor Jee-Hwan Ryu Receives Global IEEE Robotics Journal Best Paper Award

- Professor Jee-Hwan Ryu of Civil and Environmental Engineering receives the Best Paper Award from the Institute of Electrical and Electronics Engineers (IEEE) Robotics Journal, officially presented at ICRA, a world-renowned robotics conference.

- This is the highest level of international recognition, awarded to only the top 5 papers out of approximately 1,500 published in 2024.

- Securing a new working channel technology for soft growing robots expands the practicality and application possibilities in the field of soft robotics.

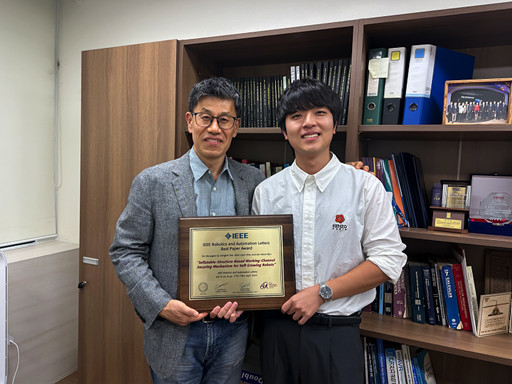

< Professor Jee-Hwan Ryu (left), Nam Gyun Kim, Ph.D. Candidate (right) from the KAIST Department of Civil and Environmental Engineering and KAIST Robotics Program >

KAIST (President Kwang-Hyung Lee) announced on the 6th that Professor Jee-Hwan Ryu from the Department of Civil and Environmental Engineering received the 2024 Best Paper Award from IEEE Robotics and Automation Letters (RA-L), a premier IEEE journal, at the '2025 IEEE International Conference on Robotics and Automation (ICRA)' held in Atlanta, USA, on May 22nd.

This Best Paper Award is a prestigious, highly competitive international honor presented to only the top 5 of approximately 1,500 papers published in 2024.

The award-winning paper by Professor Ryu proposes a novel working channel securing mechanism that significantly expands the practicality and application possibilities of 'Soft Growing Robots,' which are based on soft materials that move or perform tasks through a growing motion similar to plant roots.

< IEEE Robotics Journal Award Ceremony >

Existing soft growing robots move by inflating or contracting their bodies through increasing or decreasing internal pressure, which can lead to blockages in their internal passages. In contrast, the newly developed soft growing robot achieves a growing function while maintaining the internal passage pressure equal to the external atmospheric pressure, thereby successfully securing an internal passage while retaining the robot's flexible and soft characteristics.

This structure allows various materials or tools to be freely delivered through the internal passage (working channel) within the robot and offers the advantage of performing multi-purpose tasks by flexibly replacing equipment according to the working environment.

The research team fabricated a prototype to prove the effectiveness of this technology and verified its performance through various experiments. Specifically, in the slide plate experiment, they confirmed whether materials or equipment could pass through the robot's internal channel without obstruction, and in the pipe pulling experiment, they verified if a long pipe-shaped tool could be pulled through the internal channel.

< Figure 1. Overall hardware structure of the proposed soft growing robot (left) and a cross-sectional view composing the inflatable structure (right) >

Experimental results demonstrated that the internal channel remained stable even while the robot was growing, serving as a key basis for supporting the technology's practicality and scalability.

Professor Jee-Hwan Ryu stated, "This award is very meaningful as it signifies the global recognition of Korea's robotics technology and academic achievements. Especially, it holds great significance in achieving technical progress that can greatly expand the practicality and application fields of soft growing robots. This achievement was possible thanks to the dedication and collaboration of the research team, and I will continue to contribute to the development of robotics technology through innovative research."

< Figure 2. Material supplying mechanism of the Soft Growing Robot >

This research was co-authored by Dongoh Seo, Ph.D. Candidate in Civil and Environmental Engineering, and Nam Gyun Kim, Ph.D. Candidate in Robotics. It was published in IEEE Robotics and Automation Letters on September 1, 2024.

(Paper Title: Inflatable-Structure-Based Working-Channel Securing Mechanism for Soft Growing Robots, DOI: 10.1109/LRA.2024.3426322)

This project was supported simultaneously by the National Research Foundation of Korea's Future Promising Convergence Technology Pioneer Research Project and Mid-career Researcher Project.

2025.06.09 View 2026

Professor Hyun Myung's Team Wins First Place in a Challenge at ICRA by IEEE

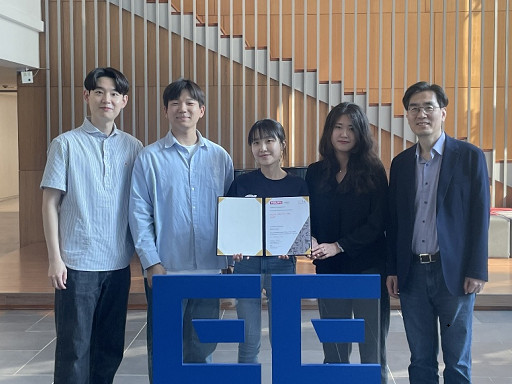

< Photo 1. (From left) Daebeom Kim (Team Leader, Ph.D. student), Seungjae Lee (Ph.D. student), Seoyeon Jang (Ph.D. student), Jei Kong (Master's student), Professor Hyun Myung >

A team of the Urban Robotics Lab, led by Professor Hyun Myung from the KAIST School of Electrical Engineering, achieved a remarkable first-place overall victory in the Nothing Stands Still Challenge (NSS Challenge) 2025, held at the 2025 IEEE International Conference on Robotics and Automation (ICRA), the world's most prestigious robotics conference, from May 19 to 23 in Atlanta, USA.

The NSS Challenge was co-hosted by HILTI, a global construction company based in Liechtenstein, and Stanford University's Gradient Spaces Group. It is an expanded version of the HILTI SLAM (Simultaneous Localization and Mapping)* Challenge, which has been held since 2021, and is considered one of the most prominent challenges at 2025 IEEE ICRA.

*SLAM: Refers to Simultaneous Localization and Mapping, a technology where robots, drones, autonomous vehicles, etc., determine their own position and simultaneously create a map of their surroundings.

< Photo 2. A scene from the oral presentation on the winning team's technology (Speakers: Seungjae Lee and Seoyeon Jang, Ph.D. candidates of KAIST School of Electrical Engineering) >

This challenge primarily evaluates how accurately and robustly LiDAR scan data collected at various times can be registered in settings with frequent structural changes, such as construction and industrial environments. In particular, it is regarded as a highly technical competition because it deals with multi-session localization and mapping (Multi-session SLAM) technology that responds to structural changes occurring over multiple timeframes, rather than just registration accuracy at a single point in time.

The Urban Robotics Lab team secured first place overall, surpassing Northwestern Polytechnical University of China (2nd place) and National Taiwan University (3rd place) by a significant margin, with their unique localization and mapping technology that solves the problem of registering LiDAR data collected across multiple times and spaces. The winning team will be awarded a prize of $4,000.

< Figure 1. Example of Multiway-Registration for Registering Multiple Scans >

The Urban Robotics Lab team independently developed a multiway-registration framework that can robustly register multiple scans even without prior connection information. This framework consists of an algorithm for summarizing feature points within scans and finding correspondences (CubicFeat), an algorithm for performing global registration based on the found correspondences (Quatro), and an algorithm for refining results based on change detection (Chamelion). This combination of technologies ensures stable registration performance based on fixed structures, even in highly dynamic industrial environments.
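As a rough illustration of the "global registration from correspondences" step, the sketch below estimates a rigid transform between two sets of already-matched points. It is not Quatro, CubicFeat, or Chamelion: it is the classical closed-form least-squares alignment, reduced to 2D for brevity, and it assumes every correspondence is correct (no outlier handling). The function names and sample points are hypothetical.

```python
import math


def estimate_rigid_transform_2d(source, target):
    """Least-squares rotation angle and translation mapping source onto target.

    source and target are equal-length lists of (x, y) points, where
    source[i] is assumed to correspond to target[i].
    """
    n = len(source)
    # Centroids of both point sets.
    sx = sum(p[0] for p in source) / n
    sy = sum(p[1] for p in source) / n
    tx = sum(q[0] for q in target) / n
    ty = sum(q[1] for q in target) / n

    # Accumulate dot and cross terms of the centered correspondences.
    dot = 0.0
    cross = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        px, py, qx, qy = px - sx, py - sy, qx - tx, qy - ty
        dot += px * qx + py * qy
        cross += px * qy - py * qx

    # The angle that maximizes alignment of the centered point sets.
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation that maps the rotated source centroid onto the target centroid.
    translation = (tx - (c * sx - s * sy), ty - (s * sx + c * sy))
    return theta, translation


def apply_transform_2d(points, theta, translation):
    """Rotate points by theta (radians), then shift them by translation."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + translation[0], s * x + c * y + translation[1])
            for x, y in points]


# Hypothetical example: scan_b is scan_a rotated by 30 degrees and shifted.
scan_a = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (2.0, 3.0)]
scan_b = apply_transform_2d(scan_a, math.radians(30.0), (2.0, -1.0))
theta, t = estimate_rigid_transform_2d(scan_a, scan_b)
```

With perfect correspondences the estimate recovers the applied rotation and translation. Real scans contain wrong matches and changed structures, which is why a robust estimator and a change-detection refinement stage are needed in practice.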

< Figure 2. Example of Change Detection Using the Chamelion Algorithm >

LiDAR scan registration technology is a core component of SLAM (Simultaneous Localization And Mapping) in various autonomous systems such as autonomous vehicles, autonomous robots, autonomous walking systems, and autonomous flying vehicles.

Professor Hyun Myung of the School of Electrical Engineering stated, "This award-winning technology is evaluated as a case that simultaneously proves both academic value and industrial applicability by maximizing the performance of precisely estimating the relative positions between different scans even in complex environments. I am grateful to the students who challenged themselves and never gave up, even when many teams dropped out due to the high difficulty."

< Figure 3. Competition Result Board, Lower RMSE (Root Mean Squared Error) Indicates Higher Score (Unit: meters) >
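For readers unfamiliar with the metric on the result board, RMSE can be computed as in the minimal sketch below. The exact quantity the challenge scores (for example, which points or poses are compared) is not stated here, so the sketch assumes paired 3D positions in meters. The sample values are made up and are not competition data.

```python
import math


def rmse(estimated, reference):
    """Root mean squared Euclidean distance between paired 3D positions."""
    if len(estimated) != len(reference) or not estimated:
        raise ValueError("need two non-empty sequences of equal length")
    # Squared distance for each estimated/reference pair.
    squared = [sum((e - r) ** 2 for e, r in zip(est, ref))
               for est, ref in zip(estimated, reference)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical positions in meters (not data from the competition).
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
team_a = [(0.0, 0.1, 0.0), (1.0, 0.1, 0.0), (2.0, 0.1, 0.0)]
team_b = [(0.0, 0.3, 0.0), (1.0, 0.4, 0.0), (2.0, 0.5, 0.0)]

error_a = rmse(team_a, reference)
error_b = rmse(team_b, reference)
```

Because the errors are squared before averaging, a few large misalignments raise the RMSE more than many small ones, so a lower value indicates consistently accurate registration.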

The Urban Robotics Lab team first participated in the SLAM Challenge in 2022, winning second place among academic teams, and in 2023, they secured first place overall in the LiDAR category and first place among academic teams in the vision category.

2025.05.30 View 2851

KAIST debuts “DreamWaQer” - a quadrupedal robot that can walk in the dark

- The team led by Professor Hyun Myung of the School of Electrical Engineering developed “DreamWaQ”, a deep reinforcement learning-based walking robot control technology that can walk in an atypical environment without visual and/or tactile information

- Utilization of “DreamWaQ” technology can enable mass production of various types of “DreamWaQers”

- Expected to be used for exploring atypical environments under unique circumstances such as fire disasters.

A team of Korean engineering researchers has developed a quadrupedal robot technology that can climb up and down steps and move without falling over on uneven terrain such as tree roots, without the help of visual or tactile sensors, even in disaster situations where vision is impeded by darkness or thick smoke from flames.

KAIST (President Kwang Hyung Lee) announced on the 29th of March that Professor Hyun Myung's research team at the Urban Robotics Lab in the School of Electrical Engineering developed a walking robot control technology that enables robust 'blind locomotion' in various atypical environments.

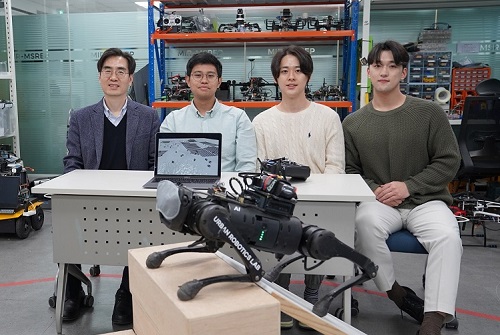

< (From left) Prof. Hyun Myung, Doctoral Candidates I Made Aswin Nahrendra, Byeongho Yu, and Minho Oh. In the foreground is the DreamWaQer, a quadrupedal robot equipped with DreamWaQ technology. >

The KAIST research team named the technology "DreamWaQ" because it enables walking robots to move about even in the dark, just as a person fresh out of bed can walk to the bathroom in the dark without visual help. Installing this technology on any legged robot will make it possible to create various types of "DreamWaQers".

Existing walking robot controllers are based on kinematics and/or dynamics models. This approach is known as model-based control. In particular, in atypical environments such as open, uneven fields, the robot must obtain terrain features more quickly in order to remain stable as it walks. However, this approach has been shown to depend heavily on the robot's ability to perceive its surrounding environment.

In contrast, the controller developed by Professor Hyun Myung's research team, which is based on deep reinforcement learning (RL), can quickly calculate appropriate control commands for each motor of the walking robot using data from various environments obtained in a simulator. Whereas existing controllers trained in simulation required separate re-tuning to work on an actual robot, the team's controller is expected to be easily applied to various walking robots because it requires no additional tuning process.

DreamWaQ, the controller developed by the research team, is largely composed of a context-aided estimator network that estimates ground and robot information and a policy network that computes control commands. The context-aided estimator network estimates the ground information implicitly and the robot’s status explicitly from inertial and joint information. These estimates are fed into the policy network, which generates optimal control commands. Both networks are trained together in simulation.

While the context-aided estimator network is trained through supervised learning, the policy network is trained through an actor-critic architecture, a deep RL methodology. The actor network can only implicitly infer surrounding terrain information. In simulation, the surrounding terrain information is known, so the critic, or value network, which has the exact terrain information, evaluates the policy of the actor network.

This whole training process takes only about an hour on a GPU-enabled PC, and the actual robot is equipped with only the trained actor network. Without looking at the surrounding terrain, the robot uses only its internal inertial measurement unit (IMU) and joint-angle measurements to imagine which of the various environments learned in simulation its current surroundings resemble. If it suddenly encounters a height offset, such as a staircase, it will not know until its foot touches the step, but it quickly infers terrain information the moment its foot touches the surface. The control command suited to the estimated terrain is then transmitted to each motor, enabling rapidly adapted walking.
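The data flow described above can be sketched roughly as follows: an estimator turns a short history of IMU and joint readings into explicit and implicit estimates, the actor maps those to motor commands, and a critic that sees the simulator's exact terrain is used only during training. This is a structural sketch, not the team's implementation. Every dimension, the single-layer networks, the random untrained weights, and names such as `BlindLocomotionSketch` are assumptions made for illustration.

```python
import math
import random

# All sizes below are illustrative assumptions, not values from the paper.
OBS_DIM = 8        # one proprioceptive reading (IMU + joint angles)
HISTORY = 4        # number of past readings given to the estimator
VELOCITY_DIM = 3   # explicit estimate (assumed here to be body velocity)
LATENT_DIM = 5     # implicit estimate: terrain context
ACTION_DIM = 12    # one command per motor (assumes 3 joints x 4 legs)
TERRAIN_DIM = 6    # privileged terrain info, available only in simulation


def make_layer(n_in, n_out, rng):
    """Random weights for a single dense layer (untrained placeholder)."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]


def dense(weights, x):
    """tanh(W x) for a plain list-of-lists weight matrix."""
    return [math.tanh(sum(w * v for w, v in zip(row, x))) for row in weights]


class BlindLocomotionSketch:
    def __init__(self, seed=0):
        rng = random.Random(seed)
        self.estimator = make_layer(OBS_DIM * HISTORY, VELOCITY_DIM + LATENT_DIM, rng)
        self.actor = make_layer(OBS_DIM + VELOCITY_DIM + LATENT_DIM, ACTION_DIM, rng)
        self.critic = make_layer(OBS_DIM + TERRAIN_DIM, 1, rng)

    def estimate(self, obs_history):
        """Estimator: proprioceptive history -> (explicit, implicit) estimates."""
        flat = [v for obs in obs_history for v in obs]
        out = dense(self.estimator, flat)
        return out[:VELOCITY_DIM], out[VELOCITY_DIM:]

    def act(self, obs_history):
        """Deployment path: no camera, no terrain input, no critic."""
        velocity, latent = self.estimate(obs_history)
        return dense(self.actor, obs_history[-1] + velocity + latent)

    def value(self, obs, terrain):
        """Training-only critic: sees the exact terrain from the simulator."""
        return dense(self.critic, obs + terrain)[0]


# Hypothetical sensor history filled with random numbers.
rng = random.Random(1)
history = [[rng.uniform(-1, 1) for _ in range(OBS_DIM)] for _ in range(HISTORY)]
model = BlindLocomotionSketch()
commands = model.act(history)                             # used on the robot
score = model.value(history[-1], [0.0] * TERRAIN_DIM)     # used in simulation only
```

The point of the split is that `value` needs terrain information that exists only in the simulator, whereas `act` depends solely on signals the robot can measure itself, so the critic can be dropped at deployment.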

The DreamWaQer robot walked not only in the laboratory but also outdoors around the campus, over many curbs and speed bumps, and across a field with many tree roots and gravel. It also demonstrated its abilities by climbing stairs with a step height of two-thirds of its body height. In addition, the research team confirmed that it could walk stably regardless of the environment at speeds ranging from a slow 0.3 m/s to a rather fast 1.0 m/s.

The study was led by doctoral student I Made Aswin Nahrendra as first author, with his colleague Byeongho Yu as co-author. It has been accepted for presentation at the upcoming IEEE International Conference on Robotics and Automation (ICRA), scheduled to be held in London at the end of May. (Paper title: DreamWaQ: Learning Robust Quadrupedal Locomotion With Implicit Terrain Imagination via Deep Reinforcement Learning)

Videos of the walking robot DreamWaQer equipped with the developed DreamWaQ can be found at the addresses below.

Main Introduction: https://youtu.be/JC1_bnTxPiQ

Experiment Sketches: https://youtu.be/mhUUZVbeDA0

Meanwhile, this research was carried out with the support from the Robot Industry Core Technology Development Program of the Ministry of Trade, Industry and Energy (MOTIE). (Task title: Development of Mobile Intelligence SW for Autonomous Navigation of Legged Robots in Dynamic and Atypical Environments for Real Application)

< Figure 1. Overview of DreamWaQ, a controller developed by this research team. This network consists of an estimator network that learns implicit and explicit estimates together, a policy network that acts as a controller, and a value network that provides guides to the policies during training. When implemented in a real robot, only the estimator and policy network are used. Both networks run in less than 1 ms on the robot's on-board computer. >

< Figure 2. Since the estimator can implicitly estimate the ground information as the foot touches the surface, it is possible to adapt quickly to rapidly changing ground conditions. >

< Figure 3. Results showing that even a small walking robot was able to overcome steps with height differences of about 20 cm. >

2023.05.18 View 12346

Professor Sang Kil Cha Receives IEEE Test-of-Time Award

Professor Sang Kil Cha from the Graduate School of Information Security (GSIS) in the School of Computing received the Test-of-Time Award from IEEE Security & Privacy, a top conference in the field of information security.

The Test-of-Time Award recognizes the research papers that have influenced the field of information security the most over the past decade. Three papers were selected this year, and Professor Cha is the first Korean winner of the award.

The paper by Professor Cha was published in 2012 under the title, “Unleashing Mayhem on Binary Code”. It was the first to propose an algorithm that automatically finds bugs in binary code and creates exploits that link them to attack code.

The developed algorithm is a core technique used for world-class cyber security hacking competitions like the Cyber Grand Challenge, an AI hacking contest.

Starting with this research, Professor Cha has carried out various studies to develop technologies that can find bugs and vulnerabilities through binary analyses, and is currently developing B2R2, a Korean platform that can analyze various binary codes.

2022.06.13 View 6424

Professor Hyunjoo Jenny Lee to Co-Chair IEEE MEMS 2025

Professor Hyunjoo Jenny Lee from the School of Electrical Engineering has been appointed General Chair of the 38th IEEE MEMS 2025 (International Conference on Micro Electro Mechanical Systems). Professor Lee, who is 40, is the conference’s youngest General Chair to date and will work jointly with Professor Sheng-Shian Li of Taiwan’s National Tsing Hua University as co-chairs in 2025.

IEEE MEMS is a top-tier international conference on microelectromechanical systems and it serves as a core academic showcase for MEMS research and technology in areas such as microsensors and actuators.

The conference receives over 800 paper submissions each year and accepts and publishes only about 250 of them after a rigorous review process that is recognized worldwide. Fewer than 10% of all submissions are chosen for oral presentations.

2022.04.18 View 8366

Prof. Changho Suh Named the 2021 James L. Massey Awardee

Professor Changho Suh from the School of Electrical Engineering was named the recipient of the 2021 James L. Massey Award. The award recognizes outstanding achievement in research and teaching by young scholars in the information theory community. It is named in honor of James L. Massey, an internationally acclaimed pioneer in digital communications and a revered teacher and mentor to communications engineers.

Professor Suh is a recipient of numerous awards, including the 2021 James L. Massey Research & Teaching Award for Young Scholars from the IEEE Information Theory Society, the 2019 AFOSR Grant, the 2019 Google Education Grant, the 2018 IEIE/IEEE Joint Award, the 2015 IEIE Haedong Young Engineer Award, the 2013 IEEE Communications Society Stephen O. Rice Prize, the 2011 David J. Sakrison Memorial Prize (the best dissertation award in UC Berkeley EECS), the 2009 IEEE ISIT Best Student Paper Award, the 2020 LINKGENESIS Best Teacher Award (the campus-wide Grand Prize in Teaching), and the four Departmental Teaching Awards (2013, 2019, 2020, 2021).

Dr. Suh is an IEEE Information Theory Society Distinguished Lecturer, the General Chair of the Inaugural IEEE East Asian School of Information Theory, and a Member of the Young Korean Academy of Science and Technology. He is also an Associate Editor of Machine Learning for the IEEE Transactions on Information Theory, the Editor for the IEEE Information Theory Newsletter, a Column Editor for IEEE BITS the Information Theory Magazine, an Area Chair of NeurIPS 2021, and on the Senior Program Committee of IJCAI 2019–2021.

2021.07.27 View 9620

Prof. Junil Choi Receives the Neal Shepherd Memorial Award

Professor Junil Choi of the School of Electrical Engineering received the 2021 Neal Shepherd Memorial Award from the IEEE Vehicular Technology Society. The award recognizes the most outstanding paper relating to radio propagation published in major journals over the previous five years.

Professor Choi, who previously received the 2015 IEEE Signal Processing Society Best Paper Award and the 2019 IEEE Communications Society Best Paper Award, was selected as the awardee for his paper titled “The Impact of Beamwidth on Temporal Channel Variation in Vehicular Channels and Its Implications,” published in IEEE Transactions on Vehicular Technology in 2017.

In this paper, Professor Choi and his team derived the channel coherence time for a wireless channel as a function of the beamwidth, taking both Doppler effect and pointing error into consideration. The results showed that a nonzero optimal beamwidth exists that maximizes the channel coherence time. To reduce the impact of the overhead of doing realignment in every channel coherence time, the paper showed that the beams should be realigned every beam coherence time for the best performance.

Professor Choi said, “It is quite an honor to receive this prestigious award following Professor Joonhyuk Kang, who won the IEEE VTS’s Jack Neubauer Memorial Award this year. It shows that our university’s pursuit of excellence in advanced research is being well recognized.”

2021.07.26 View 7917

Professor Kang’s Team Receives the IEEE Jack Neubauer Memorial Award

Professor Joonhyuk Kang of the School of Electrical Engineering received the IEEE Vehicular Technology Society’s 2021 Jack Neubauer Memorial Award for his team’s paper published in IEEE Transactions on Vehicular Technology. The Jack Neubauer Memorial Award recognizes the best paper published in the IEEE Transactions on Vehicular Technology journal in the last five years.

The team of authors, Professor Kang, Professor Sung-Ah Chung at Kyungpook National University, and Professor Osvaldo Simeone of King's College London, reported their research, titled “Mobile Edge Computing via a UAV-Mounted Cloudlet: Optimization of Bit Allocation and Path Planning,” in IEEE Transactions on Vehicular Technology, Vol. 67, No. 3, pp. 2049-2063, in March 2018.

Their paper shows how to optimize the trajectory of an unmanned aerial vehicle and allocate resources when the vehicle performs edge computing to assist with mobile device computations. The paper has been cited nearly 400 times (according to Google Scholar). "We are very happy to see the results of proposing edge computing using unmanned aerial vehicles by applying optimization theory, and conducting research on trajectory and resource utilization of unmanned aerial vehicles that minimize power consumption," said Professor Kang.

2021.07.12 View 9325

Professor Jaehyouk Choi, IT Young Engineer of the Year

Professor Jaehyouk Choi from the KAIST School of Electrical Engineering won the ‘IT Young Engineer Award’ for 2020. The award was co-presented by the Institute of Electrical and Electronics Engineers (IEEE) and the Institute of Electronics Engineers of Korea (IEIE), and sponsored by the Haedong Science and Culture Foundation.

The ‘IT Young Engineer Award’ selects only one mid-career scientist or engineer 40 years old or younger every year, who has made a great contribution to academic or technological advancements in the field of IT.

Professor Choi’s research topics include high-performance semiconductor circuit design for ultrahigh-speed communication systems, including 5G communication. In particular, he is widely known for his work on ultra-low-noise, high-frequency signal generation circuits, a key technology for next-generation wired and wireless communications as well as for memory systems. He has published 64 papers in SCI journals and at international conferences, and has applied for and registered 25 domestic and international patents.

Professor Choi is also an active member of the Technical Program Committees of international symposiums in the field of semiconductor circuits, including the International Solid-State Circuits Conference (ISSCC) and the European Solid-State Circuits Conference (ESSCIRC). Beginning this year, he also serves as a distinguished lecturer for the IEEE Solid-State Circuits Society (SSCS).

2020.08.20 View 12711

Professor Jee-Hwan Ryu Receives IEEE ICRA 2020 Outstanding Reviewer Award

Professor Jee-Hwan Ryu from the Department of Civil and Environmental Engineering was selected as one of this year’s winners of the Outstanding Reviewer Award presented by the Institute of Electrical and Electronics Engineers International Conference on Robotics and Automation (IEEE ICRA). The award ceremony took place on June 5 during the conference, which is being held online for three months, from May 31 through August 31.

The IEEE ICRA Outstanding Reviewer Award is given every year to the top reviewers who have provided constructive, high-quality paper reviews and contributed to improving the quality of the papers published at the conference.

Professor Ryu was one of the four winners of this year’s award. He was selected from 9,425 candidates, a pool approximately three times larger than in previous years. He was strongly recommended by the editorial committee of the conference.

2020.06.10 View 10877

Professor Jong Chul Ye Appointed as Distinguished Lecturer of IEEE EMBS

Professor Jong Chul Ye from the Department of Bio and Brain Engineering was appointed as a distinguished lecturer by the Institute of Electrical and Electronics Engineers (IEEE) Engineering in Medicine and Biology Society (EMBS). Professor Ye was invited to deliver lectures on his leading research on artificial intelligence (AI) technology in medical image restoration. He will serve a term of two years beginning in 2020.

IEEE EMBS's distinguished lecturer program is designed to educate researchers around the world on the latest trends and technology in biomedical engineering. The program is sponsored by IEEE, and its members can attend lectures on each distinguished lecturer's research subject.

Professor Ye said, “We are at a time where the importance of AI in medical imaging is increasing.” He added, “I am proud to be appointed as a distinguished lecturer of the IEEE EMBS in recognition of my contributions to this field.”

2020.02.27 View 12919

Professor Junil Choi Receives Stephen O. Rice Prize

< Professor Junil Choi (second from the left) >

Professor Junil Choi from the School of Electrical Engineering received the Stephen O. Rice Prize at the Global Communications Conference (GLOBECOM) hosted by the Institute of Electrical and Electronics Engineers (IEEE) in Hawaii on December 10, 2019.

The Stephen O. Rice Prize is awarded to only one paper of exceptional merit every year. The IEEE Communications Society evaluates all papers published in the IEEE Transactions on Communications journal within the last three years, and marks each paper by aggregating its scores on originality, the number of citations, impact, and peer evaluation.

Professor Choi won the prize for his research on one-bit analog-to-digital converters (ADCs) for multiuser massive multiple-input and multiple-output (MIMO) antenna systems published in 2016. In his paper, Professor Choi proposed a technology that can drastically reduce the power consumption of the multiuser massive MIMO antenna systems, which are the core technology for 5G and future wireless communication. Professor Choi’s paper has been cited more than 230 times in various academic journals and conference papers since its publication, and multiple follow-up studies are actively ongoing.

In 2015, Professor Choi received the IEEE Signal Processing Society Best Paper Award, an award equivalent to the Stephen O. Rice Prize. He was also selected as the winner of the 15th Haedong Young Engineering Researcher Award, presented by the Korean Institute of Communications and Information Sciences (KICS) on December 6, 2019, for his outstanding academic achievements, including 34 international journal publications and 26 US patent registrations.

2019.12.23 View 13372
