KAIST Develops Virtual Staining Technology for 3D Histopathology

Moving beyond traditional methods of observing thinly sliced and stained cancer tissues, a collaborative international research team led by KAIST has successfully developed a groundbreaking technology. This innovation uses advanced optical techniques combined with an artificial intelligence-based deep learning algorithm to create realistic, virtually stained 3D images of cancer tissue without the need for serial sectioning or staining. This breakthrough is anticipated to pave the way for next-generation non-invasive pathological diagnosis.

< Photo 1. (From left) Juyeon Park (Ph.D. Candidate, Department of Physics), Professor YongKeun Park (Department of Physics) (Top left) Professor Su-Jin Shin (Gangnam Severance Hospital), Professor Tae Hyun Hwang (Vanderbilt University School of Medicine) >

KAIST (President Kwang Hyung Lee) announced on the 26th that a research team led by Professor YongKeun Park of the Department of Physics, in collaboration with Professor Su-Jin Shin's team at Yonsei University Gangnam Severance Hospital, Professor Tae Hyun Hwang's team at Mayo Clinic, and Tomocube's AI research team, has developed an innovative technology capable of vividly displaying the 3D structure of cancer tissues without separate staining.

For over 200 years, conventional pathology has relied on observing cancer tissues under a microscope, a method that only shows specific cross-sections of the 3D cancer tissue. This has limited the ability to understand the three-dimensional connections and spatial arrangements between cells.

To overcome this, the research team utilized holotomography (HT), an advanced optical technology, to measure the 3D refractive index information of tissues. They then integrated an AI-based deep learning algorithm to successfully generate virtual H&E* images.

* H&E (Hematoxylin & Eosin): The most widely used staining method for observing pathological tissues. Hematoxylin stains cell nuclei blue, and eosin stains cytoplasm pink.

The research team quantitatively demonstrated that the images generated by this technology are highly similar to actual stained tissue images. Furthermore, the technology exhibited consistent performance across various organs and tissues, proving its versatility and reliability as a next-generation pathological analysis tool.

< Figure 1. Comparison of the conventional 3D tissue pathology procedure and the 3D virtual H&E staining technology proposed in this study. The traditional method requires preparing and staining dozens of tissue slides, while the proposed technology can reduce the number of slides by up to a factor of 10 and quickly generate H&E images without the staining process. >

Moreover, by validating the feasibility of this technology through joint research with hospitals and research institutions in Korea and the United States, utilizing Tomocube's holotomography equipment, the team demonstrated its potential for full-scale adoption in real-world pathological research settings.

Professor YongKeun Park stated, "This research marks a major advancement by transitioning pathological analysis from conventional 2D methods to comprehensive 3D imaging. It will greatly enhance biomedical research and clinical diagnostics, particularly in understanding cancer tumor boundaries and the intricate spatial arrangements of cells within tumor microenvironments."

< Figure 2. Results of AI-based 3D virtual H&E staining and quantitative analysis of pathological tissue. The virtually stained images enabled 3D reconstruction of key pathological features such as cell nuclei and glandular lumens. Based on this, various quantitative indicators, including cell nuclear distribution, volume, and surface area, could be extracted. >
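As a rough illustration of how indicators such as nuclear volume and surface area can be read off a 3D reconstruction, the sketch below counts voxels and exposed voxel faces for a single segmented object. It is a minimal sketch under stated assumptions, not the method used in the paper: the function name `volume_and_surface`, the voxel-coordinate input format, and the isotropic `spacing` parameter are all hypothetical, and face counting overestimates the surface area of smooth shapes.

```python
from typing import Iterable, Set, Tuple

Voxel = Tuple[int, int, int]

# Offsets to the six face-adjacent neighbors of a voxel in a 3D grid.
NEIGHBORS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def volume_and_surface(voxels: Iterable[Voxel], spacing: float = 1.0) -> Tuple[float, float]:
    """Estimate volume and surface area of one segmented object.

    `voxels` holds the integer (x, y, z) coordinates of the object
    (hypothetical input format); `spacing` is the assumed isotropic
    voxel edge length.
    """
    occupied: Set[Voxel] = set(voxels)

    # Volume: number of voxels times the volume of one voxel.
    volume = len(occupied) * spacing ** 3

    # Surface area: count voxel faces that border empty space.
    exposed_faces = 0
    for x, y, z in occupied:
        for dx, dy, dz in NEIGHBORS:
            if (x + dx, y + dy, z + dz) not in occupied:
                exposed_faces += 1
    surface = exposed_faces * spacing ** 2

    return volume, surface


# Toy example: a 2 x 2 x 2 block of voxels with an edge length of 0.5 units.
cube = [(x, y, z) for x in range(2) for y in range(2) for z in range(2)]
vol, area = volume_and_surface(cube, spacing=0.5)
print(vol, area)
```

For the toy block, each of the 8 voxels exposes 3 faces, so the estimate matches the exact values for a cube with an edge length of 1 unit.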

This research, with Juyeon Park, a student of the Integrated Master’s and Ph.D. Program at KAIST, as the first author, was published online in the prestigious journal Nature Communications on May 22.

(Paper title: “Revealing 3D microanatomical structures of unlabeled thick cancer tissues using holotomography and virtual H&E staining,” DOI: https://doi.org/10.1038/s41467-025-59820-0)

This study was supported by the Leader Researcher Program of the National Research Foundation of Korea, the Global Industry Technology Cooperation Center Project of the Korea Institute for Advancement of Technology, and the Korea Health Industry Development Institute.

2025.05.26 View 431

KAIST to Develop a Korean-style ChatGPT Platform Specifically Geared Toward Medical Diagnosis and Drug Discovery

On May 23rd, KAIST (President Kwang-Hyung Lee) announced that its Digital Bio-Health AI Research Center (Director: Professor JongChul Ye of KAIST Kim Jaechul Graduate School of AI) has been selected for the Ministry of Science and ICT's 'AI Top-Tier Young Researcher Support Program (AI Star Fellowship Project).' With a total investment of ₩11.5 billion from May 2025 to December 2030, the center will embark on the full-scale development of AI technology and a platform capable of independently reasoning about and identifying diseases and of discovering new drugs.

< Photo. On May 20th, a kick-off meeting for the AI Star Fellowship Project was held at KAIST Kim Jaechul Graduate School of AI’s Yangjae Research Center with the KAIST research team and participating organizations of Samsung Medical Center, NAVER Cloud, and HITS. [From left to right in the front row] Professor Jaegul Joo (KAIST), Professor Yoonjae Choi (KAIST), Professor Woo Youn Kim (KAIST/HITS), Professor JongChul Ye (KAIST), Professor Sungsoo Ahn (KAIST), Dr. Haanju Yoo (NAVER Cloud), Yoonho Lee (KAIST), HyeYoon Moon (Samsung Medical Center), Dr. Su Min Kim (Samsung Medical Center) >

This project aims to foster an innovative AI research ecosystem centered on young researchers and develop an inferential AI agent that can utilize and automatically expand specialized knowledge systems in the bio and medical fields.

Professor JongChul Ye of the Kim Jaechul Graduate School of AI will serve as the lead researcher, with young researchers from KAIST including Professors Yoonjae Choi, Kimin Lee, Sungsoo Ahn, and Chanyoung Park, along with mid-career researchers like Professors Jaegul Joo and Woo Youn Kim, jointly undertaking the project. They will collaborate with various laboratories within KAIST to conduct comprehensive research covering the entire cycle from the theoretical foundations of AI inference to its practical application.

Specifically, the main goals include:

- Building high-performance inference models that integrate diverse medical knowledge systems to enhance the precision and reliability of diagnosis and treatment.
- Developing a convergence inference platform that efficiently combines symbol-based inference with neural network models.
- Securing AI technology for new drug development and biomarker discovery based on 'cell ontology.'

Furthermore, through close collaboration with industry and medical institutions such as Samsung Medical Center, NAVER Cloud, and HITS Co., Ltd., the project aims to achieve:

- Clinical diagnostic AI utilizing medical knowledge systems.
- AI-based molecular target exploration for new drug development.
- Commercialization of an extendible AI inference platform.

Professor JongChul Ye, Director of KAIST's Digital Bio-Health AI Research Center, stated, "At a time when competition in AI inference model development is intensifying, it is a great honor for KAIST to lead the development of AI technology specialized in the bio and medical fields with world-class young researchers." He added, "We will do our best to ensure that the participating young researchers reach a world-leading level in terms of research achievements after the completion of this seven-year project starting in 2025."

The AI Star Fellowship is a newly established program where post-doctoral researchers and faculty members within seven years of appointment participate as project leaders (PLs) to independently lead research. Multiple laboratories within a university and demand-side companies form a consortium to operate the program.

Through this initiative, KAIST plans to nurture bio-medical convergence AI talent and simultaneously promote the commercialization of core technologies in collaboration with Samsung Medical Center, NAVER Cloud, and HITS.

2025.05.26 View 492

“For the First Time, We Shared a Meaningful Exchange”: KAIST Develops an AI App to Help Parents and Minimally Verbal Autistic Children Connect

• KAIST teams up with NAVER AI Lab and Dodakim Child Development Center to develop ‘AACessTalk’, an AI-driven communication tool bridging the gap between children with autism and their parents

• The project earned the prestigious Best Paper Award at ACM CHI 2025, the premier international conference in human-computer interaction

• Families share heartwarming stories of breakthrough communication and newfound understanding.

< Photo 1. (From left) Professor Hwajung Hong and Doctoral candidate Dasom Choi of the Department of Industrial Design with SoHyun Park and Young-Ho Kim of Naver Cloud AI Lab >

For many families of minimally verbal autistic (MVA) children, communication often feels like an uphill battle. But now, thanks to a new AI-powered app developed by researchers at KAIST in collaboration with NAVER AI Lab and Dodakim Child Development Center, parents are finally experiencing moments of genuine connection with their children.

On the 16th, the KAIST (President Kwang Hyung Lee) research team, led by Professor Hwajung Hong of the Department of Industrial Design, announced the development of ‘AACessTalk,’ an artificial intelligence (AI)-based communication tool that enables genuine communication between children with autism and their parents.

This research was recognized for its human-centered AI approach and received international attention, earning the Best Paper Award at ACM CHI 2025*, an international conference held in Yokohama, Japan.

* ACM CHI (ACM Conference on Human Factors in Computing Systems) 2025: One of the world's most prestigious academic conferences in the field of Human-Computer Interaction (HCI).

This year, approximately 1,200 papers were selected out of about 5,000 submissions, with the Best Paper Award given to only the top 1%. The conference, which drew over 5,000 researchers, was the largest in its history, reflecting the growing interest in ‘Human-AI Interaction.’

Called AACessTalk, the app offers personalized vocabulary cards tailored to each child’s interests and context, while guiding parents through conversations with customized prompts. This creates a space where children’s voices can finally be heard—and where parents and children can connect on a deeper level.

Traditional augmentative and alternative communication (AAC) tools have relied heavily on fixed card systems that often fail to capture the subtle emotions and shifting interests of children with autism. AACessTalk breaks new ground by integrating AI technology that adapts in real time to the child’s mood and environment.

< Figure. Schematic of the AACessTalk system. It provides personalized vocabulary cards for children with autism and context-based conversation guides for parents to focus on practical communication. A large ‘Turn Pass Button’ is placed at the child’s side to allow the child to lead the conversation. >

Among its standout features is a large ‘Turn Pass Button’ that gives children control over when to start or end conversations—allowing them to lead with agency. Another feature, the “What about Mom/Dad?” button, encourages children to ask about their parents’ thoughts, fostering mutual engagement in dialogue, something many children had never done before.

One parent shared, “For the first time, we shared a meaningful exchange.” Such stories were common among the 11 families who participated in a two-week pilot study, where children used the app to take more initiative in conversations and parents discovered new layers of their children’s language abilities.

Parents also reported moments of surprise and joy when their children used unexpected words or took the lead in conversations, breaking free from repetitive patterns. “I was amazed when my child used a word I hadn’t heard before. It helped me understand them in a whole new way,” recalled one caregiver.

Professor Hwajung Hong, who led the research at KAIST’s Department of Industrial Design, emphasized the importance of empowering children to express their own voices. “This study shows that AI can be more than a communication aid—it can be a bridge to genuine connection and understanding within families,” she said.

Looking ahead, the team plans to refine and expand human-centered AI technologies that honor neurodiversity, with a focus on bringing practical solutions to socially vulnerable groups and enriching user experiences.

This research is the result of KAIST Department of Industrial Design doctoral student Dasom Choi's internship at NAVER AI Lab.

* Paper Title: AACessTalk: Fostering Communication between Minimally Verbal Autistic Children and Parents with Contextual Guidance and Card Recommendation
* DOI: 10.1145/3706598.3713792
* Main Author Information: Dasom Choi (KAIST, NAVER AI Lab, First Author), SoHyun Park (NAVER AI Lab), Kyungah Lee (Dodakim Child Development Center), Hwajung Hong (KAIST), and Young-Ho Kim (NAVER AI Lab, Corresponding Author)

This research was supported by the NAVER AI Lab internship program and grants from the National Research Foundation of Korea: the Doctoral Student Research Encouragement Grant (NRF-2024S1A5B5A19043580) and the Mid-Career Researcher Support Program for the Development of a Generative AI-Based Augmentative and Alternative Communication System for Autism Spectrum Disorder (RS-2024-00458557).

2025.05.19 View 1067

KAIST Identifies Master Regulator Blocking Immunotherapy, Paving the Way for a New Lung Cancer Treatment

Immune checkpoint inhibitors, a class of immunotherapies that help immune cells attack cancer more effectively, have revolutionized cancer treatment. However, fewer than 20% of patients respond to these treatments, highlighting the urgent need for new strategies tailored to both responders and non-responders.

KAIST researchers have discovered that 'DEAD-box helicase 54 (DDX54)', a type of RNA-binding protein, is the master regulator that hinders the effectiveness of immunotherapy, opening a new path for lung cancer treatment. This breakthrough technology has been transferred to faculty startup BioRevert Inc., where it is currently being developed as a companion therapeutic and is expected to enter clinical trials by 2028.

< Photo 1. (From left) Researcher Jungeun Lee, Professor Kwang-Hyun Cho and Postdoctoral Researcher Jeong-Ryeol Gong of the Department of Bio and Brain Engineering at KAIST >

KAIST (represented by President Kwang-Hyung Lee) announced on April 8 that a research team led by Professor Kwang-Hyun Cho from the Department of Bio and Brain Engineering had identified DDX54 as a critical factor that determines the immune evasion capacity of lung cancer cells. They demonstrated that suppressing DDX54 enhances immune cell infiltration into tumors and significantly improves the efficacy of immunotherapy.

Immunotherapy using anti-PD-1 or anti-PD-L1 antibodies is considered a powerful approach in cancer treatment. However, its low response rate limits the number of patients who actually benefit.

To identify likely responders, tumor mutational burden (TMB) has recently been approved by the FDA as a key biomarker for immunotherapy. Cancers with high mutation rates are thought to be more responsive to immune checkpoint inhibitors. However, even tumors with high TMB can display an “immune-desert” phenotype—where immune cell infiltration is severely limited—resulting in poor treatment responses.

< Figure 1. DDX54 was identified as the master regulator that induces resistance to immunotherapy by orchestrating suppression of immune cell infiltration through cancer tissues as lung cancer cells become immune-evasive >

Professor Kwang-Hyun Cho's research team compared transcriptome and genome data of lung cancer patients with immune evasion capabilities through gene regulatory network analysis (A) and discovered DDX54, a master regulator that induces resistance to immunotherapy (B-F).

This study is especially significant in that it successfully demonstrated that suppressing DDX54 in immune-desert lung tumors can overcome immunotherapy resistance and improve treatment outcomes.

The team used transcriptomic and genomic data from immune-evasive lung cancer patients and employed systems biology techniques to infer gene regulatory networks. Through this analysis, they identified DDX54 as a central regulator in the immune evasion of lung cancer cells.

In a syngeneic mouse model, the suppression of DDX54 led to significant increases in the infiltration of anti-cancer immune cells such as T cells and NK cells, and greatly improved the response to immunotherapy.

Single-cell transcriptomic and spatial transcriptomic analyses further showed that combination therapy targeting DDX54 promoted the differentiation of T cells and memory T cells that suppress tumors, while reducing the infiltration of regulatory T cells and exhausted T cells that support tumor growth.

< Figure 2. In the syngeneic mouse model made of lung cancer cells, it was confirmed that inhibiting DDX54 reversed the immune-evasion ability of cancer cells and enhanced the sensitivity to anti-PD-1 therapy >

In a syngeneic mouse model made of lung cancer cells exhibiting immunotherapy resistance, the treatment applied after DDX54 inhibition resulted in statistically significant inhibition of lung cancer growth (B-D) and a significant increase in immune cell infiltration into the tumor tissue (E, F).

The mechanism is believed to involve DDX54 suppression inactivating signaling pathways such as JAK-STAT, MYC, and NF-κB, thereby downregulating immune-evasive proteins CD38 and CD47. This also reduced the infiltration of circulating monocytes—which promote tumor development—and promoted the differentiation of M1 macrophages that play anti-tumor roles.

Professor Kwang-Hyun Cho stated, “We have, for the first time, identified a master regulatory factor that enables immune evasion in lung cancer cells. By targeting this factor, we developed a new therapeutic strategy that can induce responsiveness to immunotherapy in previously resistant cancers.”

He added, “The discovery of DDX54—hidden within the complex molecular networks of cancer cells—was made possible through the systematic integration of systems biology, combining IT and BT.”

The study, led by Professor Kwang-Hyun Cho, was published in the Proceedings of the National Academy of Sciences of the United States of America (PNAS) on April 2, 2025, with Jeong-Ryeol Gong as first author, Jungeun Lee as co-first author, and Younghyun Han as co-author.

< Figure 3. Single-cell transcriptome and spatial transcriptome analysis confirmed that knockdown of DDX54 increased immune cell infiltration into cancer tissues >

In a syngeneic mouse model made of lung cancer cells that underwent immunotherapy in combination with DDX54 inhibition, single-cell transcriptome (H-L) and spatial transcriptome (A-G) analyses were performed on immune cells infiltrating the cancer tissues. The analyses confirmed that anticancer immune cells such as T cells, B cells, and NK cells actively infiltrated the core of lung cancer tissues when DDX54 inhibition and immunotherapy were administered concurrently.

(Paper title: “DDX54 downregulation enhances anti-PD1 therapy in immune-desert lung tumors with high tumor mutational burden,” DOI: https://doi.org/10.1073/pnas.2412310122)

This work was supported by the Ministry of Science and ICT and the National Research Foundation of Korea through the Mid-Career Research Program and Basic Research Laboratory Program.

< Figure 4. The identified master regulator DDX54 was confirmed to induce CD38 and CD47 expression through JAK-STAT3, MYC, and NF-κB activation. >

DDX54 activates the JAK-STAT3, MYC, and NF-κB pathways in lung cancer cells to increase CD38 and CD47 expression (A-G). This creates a cancer microenvironment that contributes to cancer development (H) and ultimately induces resistance to anticancer immunotherapy.

< Figure 5. It was confirmed that an immune-inflamed environment can be created by combining DDX54 inhibition and immune checkpoint inhibitor (ICI) therapy. >

When DDX54 inhibition and ICI therapy are administered simultaneously, the cancer cell characteristics change, the immune evasion ability is reversed, and the environment is transformed into an ‘immune-activated’ environment in which immune cells easily infiltrate cancer tissues. This strengthens the anticancer immune response, thereby increasing sensitivity to immunotherapy even in lung cancer tissues that previously responded poorly to it.

2025.04.08 View 2688

KAIST develops a new, bone-like material that strengthens with use in collaboration with GIT

Materials used in apartment buildings, vehicles, and other structures deteriorate over time under repeated loads, leading to failure and breakage. A joint research team from Korea and the United States has successfully developed a bioinspired material that becomes stronger with use, taking inspiration from the way bones synthesize minerals from bodily fluids under stress, increasing bone density.

< (From left) Professor Sung Hoon Kang of the Department of Materials Science and Engineering, Johns Hopkins University Ph.D. candidates Bohan Sun and Grant Kitchen, Professor Yuhang Hu and Ph.D. candidate Dongjung He of Georgia Institute of Technology >

KAIST (represented by President Kwang Hyung Lee) announced on the 20th of February that a research team led by Professor Sung Hoon Kang from the Department of Materials Science and Engineering, in collaboration with Johns Hopkins University and the Georgia Institute of Technology, had developed a new material that strengthens with repeated use, similar to how bones become stronger with exercise.

Professor Kang’s team sought to address the issue of conventional materials degrading with repeated use. Inspired by the biological process where stress triggers cells to form minerals that strengthen bones, the team developed a material that synthesizes minerals under stress without relying on cellular activity. This innovation is expected to enable applications in a variety of fields.

To replace the function of cells, the research team created a porous piezoelectric substrate that converts mechanical force into electricity and actually generates more charge under greater force. They then synthesized a composite material by infusing it with an electrolyte containing mineral components similar to those in blood.

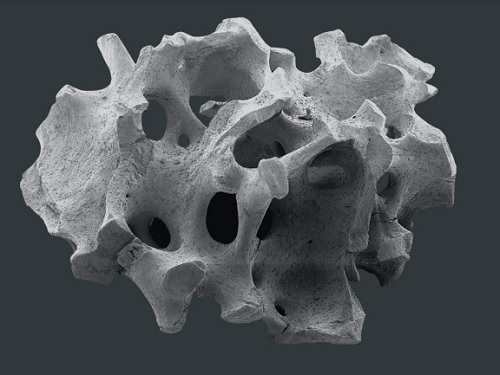

< Figure 1. Schematic diagram of the biomimetic concept based on bone and pitcher plants, the reversible strengthening mechanism, the process of fabricating porous composites, the mechanical property changes with increasing stiffness and energy dissipation after cyclic loading, and the reprogrammable self-folding mechanism and applications >

After subjecting the material to periodic forces and measuring changes in its properties, they observed that its stiffness increased proportionally with the frequency and magnitude of stress and that its energy dissipation capability improved.

Micro-CT imaging of the internal structure showed that these properties result from minerals forming inside the porous material under repeated stress. When subjected to large forces, these minerals fractured and dissipated energy, only to reform under further cyclic stress.

Unlike conventional materials that weaken with repeated use, this new material simultaneously enhances stiffness and impact absorption over time.

< Figure 2. Comparison of the changes in properties of the newly developed material (LIPPS) with other materials under cyclic loading. (A) Graph showing the relative change rate of energy dissipation after cyclic loading and the relative change rate of elastic modulus upon unloading. LIPPS occupies a new region that existing materials have not reached, showing simultaneous increases in elastic modulus and energy dissipation. (B) Graph comparing the performance of LIPPS with current state-of-the-art mechanically adaptive materials. (Left) In terms of the maximum property change rate relative to the baseline after cyclic loading, LIPPS shows much larger changes in elastic modulus, dissipated energy density and ratio, toughness (impact resistance), and stored energy density than existing adaptive materials. (Right) The absolute value range of the reported properties before and after cyclic loading shows that LIPPS has higher elastic modulus and toughness than existing adaptive materials. >

Moreover, because its properties improve in proportion to the magnitude and frequency of applied stress, it can self-adjust to achieve mechanical property distributions suitable for different structural applications. It also possesses self-healing capabilities.

Professor Kang stated, "This newly developed material, which strengthens and absorbs impact better with repeated use compared to conventional materials, holds great potential for applications in artificial joints, as well as in aircraft, ships, automobiles, and structural engineering."

This study, with Professor Sung Hoon Kang as the corresponding author, was published in Science Advances (Vol. 11, Issue 6, February).

(Paper title: “A material dynamically enhancing both load-bearing and energy-dissipation capability under cyclic loading”) DOI: 10.1126/sciadv.adt3979

This research was conducted as a joint effort with Johns Hopkins University's Extreme Materials Institute and the Georgia Institute of Technology, supported by the National Research Foundation of Korea’s Brain Pool Plus program.

2025.02.22 View 2015

KAIST Research Team Develops an AI Framework Capable of Overcoming the Strength-Ductility Dilemma in Additive-manufactured Titanium Alloys

<(From Left) Ph.D. Student Jaejung Park and Professor Seungchul Lee of the KAIST Department of Mechanical Engineering, Professor Hyoung Seop Kim of POSTECH, and M.S.–Ph.D. Integrated Program Student Jeong Ah Lee of POSTECH. >

The KAIST research team led by Professor Seungchul Lee from the Department of Mechanical Engineering, in collaboration with Professor Hyoung Seop Kim’s team at POSTECH, successfully overcame the strength–ductility dilemma of Ti-6Al-4V alloy using artificial intelligence, enabling the production of high-strength, high-ductility metal products. The AI developed by the team accurately predicts mechanical properties based on various 3D printing process parameters while also providing uncertainty information, and it uses both to recommend promising process parameters for 3D printing.

Among various 3D printing technologies, laser powder bed fusion is an innovative method for manufacturing Ti-6Al-4V alloy, renowned for its high strength and bio-compatibility. However, this alloy made via 3D printing has traditionally faced challenges in simultaneously achieving high strength and high ductility. Although there have been attempts to address this issue by adjusting both the printing process parameters and heat treatment conditions, the vast number of possible combinations made it difficult to explore them all through experiments and simulations alone.

The active learning framework developed by the team quickly explores a wide range of 3D printing process parameters and heat treatment conditions to recommend those expected to improve both strength and ductility of the alloy. These recommendations are based on the AI model’s predictions of ultimate tensile strength and total elongation along with associated uncertainty information for each set of process parameters and heat treatment conditions. The recommended conditions are then validated by performing 3D printing and tensile tests to obtain the true mechanical property values. These new data are incorporated into further AI model training, and through iterative exploration, the optimal process parameters and heat treatment conditions for producing high-performance alloys were determined in only five iterations. With these optimized conditions, the 3D printed Ti-6Al-4V alloy achieved an ultimate tensile strength of 1190 MPa and a total elongation of 16.5%, successfully overcoming the strength–ductility dilemma.
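The predict, recommend, validate, and retrain cycle described above can be illustrated with a small sketch. This is not the team's model: the bootstrap ensemble of nearest-neighbor predictors, the upper-confidence-bound score, the two normalized parameters, and the `run_experiment` function (a made-up stand-in for printing and tensile testing, with no real alloy data) are all assumptions chosen only to show the loop structure.

```python
import random
import statistics

def run_experiment(x):
    """Hypothetical stand-in for 3D printing + tensile testing (NOT real alloy data)."""
    power, temp = x  # two normalized parameters in [0, 1], assumed for illustration
    strength = 1000 + 200 * power - 150 * (temp - 0.3) ** 2
    elongation = 10 + 6 * temp - 8 * (power - 0.6) ** 2
    return (strength, elongation)

def knn_predict(train_x, train_y, x, k=3):
    """Average the targets of the k training points closest to x."""
    order = sorted(range(len(train_x)),
                   key=lambda i: sum((a - b) ** 2 for a, b in zip(train_x[i], x)))
    nearest = order[:k]
    return sum(train_y[i] for i in nearest) / len(nearest)

def ensemble_predict(xs, ys, x, rng, n_models=15):
    """Bootstrap ensemble: the spread of predictions serves as an uncertainty proxy."""
    preds = []
    for _ in range(n_models):
        idx = [rng.randrange(len(xs)) for _ in xs]
        preds.append(knn_predict([xs[i] for i in idx], [ys[i] for i in idx], x))
    return statistics.mean(preds), statistics.pstdev(preds)

def active_learning(n_init=6, n_iter=5, kappa=1.0, seed=0):
    """Run n_iter rounds of recommend -> test -> retrain and return all data."""
    rng = random.Random(seed)
    candidates = [(rng.random(), rng.random()) for _ in range(200)]
    xs = [(rng.random(), rng.random()) for _ in range(n_init)]
    ys = [run_experiment(x) for x in xs]
    for _ in range(n_iter):
        def score(x):
            total = 0.0
            for j in range(2):  # 0: strength, 1: elongation
                col = [y[j] for y in ys]
                mu, sd = ensemble_predict(xs, col, x, rng)
                scale = statistics.pstdev(col) or 1.0
                # Reward both a high prediction and high uncertainty.
                total += (mu + kappa * sd) / scale
            return total
        best = max(candidates, key=score)
        candidates.remove(best)
        xs.append(best)                    # "validate" the recommendation
        ys.append(run_experiment(best))    # new data feeds the next round
    return xs, ys

xs, ys = active_learning()
```

Adding the uncertainty term to the score is what lets the loop explore untested regions instead of only refining around the best known point; `kappa` controls that trade-off.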

Professor Seungchul Lee commented, “In this study, by optimizing the 3D printing process parameters and heat treatment conditions, we were able to develop a high-strength, high-ductility Ti-6Al-4V alloy with a minimal number of experimental trials. Compared to previous studies, we produced an alloy with a similar ultimate tensile strength but higher total elongation, as well as one with a similar elongation but greater ultimate tensile strength.” He added, “Furthermore, if our approach is applied not only to mechanical properties but also to other properties such as thermal conductivity and thermal expansion, we anticipate that it will enable efficient exploration of 3D printing process parameters and heat treatment conditions.”

This study was published in Nature Communications on January 22 (https://doi.org/10.1038/s41467-025-56267-1), and the research was supported by the National Research Foundation of Korea’s Nano & Material Technology Development Program and the Leading Research Center Program.

2025.02.21 View 3093

KAIST Develops Wearable Carbon Dioxide Sensor to Enable Real-time Apnea Diagnosis

- Professor Seunghyup Yoo’s research team of the School of Electrical Engineering developed an ultralow-power carbon dioxide (CO2) sensor using a flexible and thin organic photodiode, and succeeded in real-time breathing monitoring by attaching it to a commercial mask

- Wearable devices with features such as low power, high stability, and flexibility can be utilized for early diagnosis of various diseases such as chronic obstructive pulmonary disease and sleep apnea

< Photo 1. From the left, School of Electrical Engineering, Ph.D. candidate DongHo Choi, Professor Seunghyup Yoo, and Department of Materials Science and Engineering, Bachelor’s candidate MinJae Kim >

Carbon dioxide (CO2) is a major respiratory metabolite, and continuous monitoring of CO2 concentration in exhaled breath is not only an important indicator for early detection and diagnosis of respiratory and circulatory system diseases, but can also be widely used for monitoring personal exercise status. KAIST researchers succeeded in accurately measuring CO2 concentration by attaching a sensor to the inside of a mask.

KAIST (President Kwang-Hyung Lee) announced on February 10th that Professor Seunghyup Yoo's research team in the School of Electrical Engineering developed a low-power, high-speed wearable CO2 sensor capable of stable breathing monitoring in real time.

Existing non-invasive CO2 sensors had limitations in that they were large in size and consumed high power. In particular, optochemical CO2 sensors using fluorescent molecules have the advantage of being miniaturized and lightweight, but due to the photodegradation phenomenon of dye molecules, they are difficult to use stably for a long time, which limits their use as wearable healthcare sensors.

Optochemical CO2 sensors utilize the fact that the intensity of fluorescence emitted from fluorescent molecules decreases depending on the concentration of CO2, and it is important to effectively detect changes in fluorescence light.

To this end, the research team developed a low-power CO2 sensor consisting of an LED and an organic photodiode surrounding it. Based on high light collection efficiency, the sensor, which minimizes the amount of excitation light irradiated on fluorescent molecules, achieved a device power consumption of 171 μW, which is tens of times lower than existing sensors that consume several mW.

< Figure 1. Structure and operating principle of the developed optochemical carbon dioxide (CO2) sensor. Light emitted from the LED is converted into fluorescence through the fluorescent film, reflected from the light scattering layer, and incident on the organic photodiode. CO2 reacts with a small amount of water inside the fluorescent film to form carbonic acid (H2CO3), which increases the concentration of hydrogen ions (H+), and the fluorescence intensity due to 470 nm excitation light decreases. The circular organic photodiode with high light collection efficiency effectively detects changes in fluorescence intensity, lowers the power required to light up the LED, and reduces light-induced deterioration. >

The research team also elucidated the photodegradation path of fluorescent molecules used in CO2 sensors, revealed the cause of the increase in error over time in optochemical sensors, and suggested an optical design method to suppress these errors.

Based on this, the research team developed a sensor that effectively reduces the errors caused by photodegradation, a chronic problem of existing optochemical sensors. It can be used continuously for up to 9 hours, whereas existing technologies based on the same material last less than 20 minutes, and it can be reused multiple times by replacing the CO2-detecting fluorescent film.

< Figure 2. Wearable smart mask and real-time breathing monitoring. The fabricated sensor module consists of four elements (①: gas-permeable light-scattering layer, ②: color filter and organic photodiode, ③: light-emitting diode, ④: CO2-detecting fluorescent film). The thin and light sensor (D1: 400 nm, D2: 470 nm) is attached to the inside of the mask to monitor the wearer's breathing in real time. >

Because it is light (0.12 g), thin (0.7 mm), and flexible, the developed sensor could be attached to the inside of a mask, where it accurately measured CO2 concentration. It was also fast and sensitive enough to distinguish inhalation from exhalation in real time and thereby monitor respiratory rate.
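As a rough illustration of how a respiratory rate could be derived from such a CO2 trace, the sketch below counts exhalations with two thresholds (hysteresis). It is an assumption for illustration only, not the team's signal processing: the threshold values, the 10 Hz sampling rate, and the synthetic sine-wave trace are all hypothetical.

```python
import math

def count_breaths(co2, low, high):
    """Count exhalations: a breath starts when the signal rises to `high`
    and ends only after it has fallen back to `low` (hysteresis)."""
    breaths = 0
    exhaling = False
    for value in co2:
        if not exhaling and value >= high:
            exhaling = True
            breaths += 1
        elif exhaling and value <= low:
            exhaling = False
    return breaths

def respiratory_rate(co2, sample_hz, low=1.0, high=3.0):
    """Breaths per minute for a trace sampled at `sample_hz` (thresholds are assumed)."""
    duration_min = len(co2) / sample_hz / 60.0
    return count_breaths(co2, low, high) / duration_min

# Synthetic trace (hypothetical): 0.25 Hz breathing, 10 Hz sampling, 60 s long,
# swinging between 0 and 4 in arbitrary CO2 concentration units.
trace = [2.0 + 2.0 * math.sin(2 * math.pi * 0.25 * (i / 10.0)) for i in range(600)]
rate = respiratory_rate(trace, sample_hz=10)  # 15 breaths per minute for this trace
```

Using separate rising and falling thresholds keeps small fluctuations near a single threshold from being counted as extra breaths.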

< Photo 2. The developed sensor attached to the inside of the mask >

Professor Seunghyup Yoo said, "The developed sensor has excellent characteristics such as low power, high stability, and flexibility, so it can be widely applied to wearable devices and used for the early diagnosis of various diseases such as hypercapnia, chronic obstructive pulmonary disease, and sleep apnea." He added, "In particular, it is expected to help mitigate the side effects of rebreathing in dusty environments or when masks are worn for long periods of time, such as during seasonal changes."

This study, with Minjae Kim, an undergraduate student in KAIST's Department of Materials Science and Engineering, and Dongho Choi, a doctoral student in the School of Electrical Engineering, as joint first authors, was published in the online version of Cell's sister journal, Device, on the 22nd of last month. (Paper title: Ultralow-power carbon dioxide sensor for real-time breath monitoring) DOI: https://doi.org/10.1016/j.device.2024.100681

< Photo 3. From the left, Professor Seunghyup Yoo of the School of Electrical Engineering, MinJae Kim, an undergraduate student in the Department of Materials Science and Engineering, and Dongho Choi, a doctoral student in the School of Electrical Engineering >

This study was supported by the Ministry of Trade, Industry and Energy's Materials and Components Technology Development Project, the National Research Foundation of Korea's Original Technology Development Project, and the KAIST Undergraduate Research Participation (URP) Program.

2025.02.13 View 4211

KAIST Proves Possibility of Preventing Hair Loss with Polyphenol Coating Technology

- Professor Haeshin Lee's research team of the KAIST Department of Chemistry developed tannic acid-based hair coating technology

- Polyphenol-based delivery technology targeting hair proteins (hair and hair follicles) shows a hair loss reduction effect of up to 90% within 7 days

- This technology, first applied to 'Grabity' shampoo, proves effective at reducing hair loss both chemically and physically

< Photo. (From left) KAIST Chemistry Department Ph.D. candidate Eunu Kim, Professor Haeshin Lee >

Hair loss is a problem that hundreds of millions of people around the world are experiencing, and has a significant psychological and social impact. KAIST researchers focused on the possibility that tannic acid, a type of natural polyphenol, could contribute to preventing hair loss, and through research, discovered that tannic acid is not a simple coating agent, but rather acts as an 'adhesion mediator' that alleviates hair loss.

KAIST (President Kwang-Hyung Lee) announced on the 6th that Professor Haeshin Lee's research team in the Department of Chemistry developed a new hair loss prevention technology that slowly releases hair loss-alleviating functional ingredients using tannic acid-based coating technology.

Hair loss includes androgenetic alopecia (AGA) and telogen effluvium (TE), and arises from a combination of genetic, hormonal, and environmental factors. Effective treatments with few side effects are currently lacking.

The representative hair loss treatments, minoxidil and finasteride, show some effect but require long-term use. Their effects vary from person to person, and some users experience side effects.

Professor Haeshin Lee's research team proved that tannic acid can strongly bind to keratin, the main protein in hair, and can be continuously attached to the hair surface, and confirmed that this can be used to release specific functional ingredients in a controlled manner.

In particular, the research team developed a combination of functional ingredients for hair loss relief, namely salicylic acid (SCA), niacinamide (N), and dexpanthenol (DAL), and named it 'SCANDAL.' The research results showed that the SCANDAL complex combined with tannic acid is gradually released when it comes into contact with water and is delivered to the hair follicles along the hair surface.

< Figure 1. Schematic diagram of the hair loss relief mechanism by the tannic acid/SCANDAL complex. Tannic acid is a polyphenol compound containing galloyl groups that give it a 360-degree adhesive function. It binds to the hair surface on one side and to the hair loss relief functional ingredient SCANDAL on the other, storing SCANDAL on the hair surface. Afterwards, when it comes into contact with moisture, SCANDAL is gradually released and delivered to the scalp and hair follicles to show the hair loss relief effect. >

The research team of Goodmona Clinic (Director: Geon Min Lee) applied the shampoo containing the tannic acid/SCANDAL complex to 12 hair loss patients for 7 days and observed a significant hair loss reduction effect in all patients. The results showed an average reduction in hair loss of 56.2%, with reductions of up to 90.2% in some cases.

This suggests that tannic acid can be effective in alleviating hair loss by stably holding the SCANDAL ingredients on the hair surface, gradually releasing them, and delivering them to the hair follicles.

< Figure 2. When a tannic acid coating is applied to untreated bleached hair, a coating forms that makes the cuticles appear tightly attached to one another. This was confirmed through X-ray photoelectron spectroscopy (XPS) analysis: after tannic acid coating, the surface signal from nitrogen in the amino acids of keratin protein decreased in intensity. This shows that tannic acid successfully binds to the hair surface and covers the existing amino acids. To verify this more clearly, an oxidation-reduction reaction was induced through gold ion treatment. As a result, the entire hair turned black, confirming that tannic acid reacted with gold ions on the hair surface to form a tannic acid-gold complex. >

Professor Haeshin Lee said, “We have successfully proven that tannic acid, a type of natural polyphenol, has a strong antioxidant effect and has the property of strongly binding to proteins, so it can act as a bio-adhesive.”

Professor Lee continued, “Although previous studies have used it as a coating material for skin and proteins, this study is the first to bind it to hair and use it to deliver hair loss relief ingredients, and it was applied to ‘Grabity’ shampoo, commercialized through Polyphenol Factory, a startup company. We are working to commercialize more diverse research results, such as shampoos that dramatically increase the strength of thin hair that breaks and products that straighten curly hair.”

< Figure 3. Tannic acid and the hair loss relief functional ingredient (SCANDAL) formed a stable complex through hydrogen bonding, and it was confirmed that tannic acid bound to the hair could effectively store SCANDAL. In addition, the results of transmission electron microscopy analysis of salicylic acid (SCA), niacinamide (N), and dexpanthenol (DAL) showed that all of them formed tannic acid-SCANDAL nanocomplexes. >

The results of this study, in which Eunu Kim, a Ph.D. candidate in the KAIST Department of Chemistry, was the first author and Professor Haeshin Lee was the corresponding author, were published in the online edition of the international academic journal ‘Advanced Materials Interfaces’ on January 6. (Paper title: Leveraging Multifaceted Polyphenol Interactions: An Approach for Hair Loss Mitigation) DOI: 10.1002/admi.202400851

< Figure 4. The hair loss relief functional ingredient (SCANDAL) stored on the hair surface with tannic acid was slowly released upon contact with moisture and delivered to the hair follicle along the hair surface. Salicylic acid (SCA) and niacinamide (N) were each released by more than 25% within 10 minutes. When shampoo containing tannic acid/SCANDAL complex was applied to the hair of 12 participants, hair loss was reduced by about 56.2% on average, and the reduction rate ranged from a minimum of 26.6% to a maximum of 90.2%. These results suggest that tannic acid stably binds SCANDAL to the hair surface, which allows for its gradual release into the hair follicle, resulting in a hair loss alleviation effect. >

This study was conducted with the support of Polyphenol Factory, a KAIST faculty startup company.