
Flexible User Interface Distribution for Ubiquitous Multi-Device Interaction

< Research Group of Professor Insik Shin (center) >

KAIST researchers have developed mobile software platform technology that allows a mobile application (app) to run simultaneously and more dynamically across multiple smart devices. Its high flexibility and broad applicability can help accelerate the shift from the current single-device paradigm to a multi-device one, enabling users to use mobile apps in ways previously unthinkable.

Recent trends in mobile and IoT technologies in this era of 5G high-speed wireless communication have been hallmarked by the emergence of new display hardware and smart devices such as dual screens, foldable screens, smart watches, smart TVs, and smart cars. However, the current mobile app ecosystem is still confined to the conventional single-device paradigm in which users can employ only one screen on one device at a time. Due to this limitation, the real potential of multi-device environments has not been fully explored.

A KAIST research team led by Professor Insik Shin from the School of Computing, in collaboration with Professor Steve Ko’s group from the State University of New York at Buffalo, has developed mobile software platform technology named FLUID that can flexibly distribute the user interfaces (UIs) of an app to a number of other devices in real time without requiring any modifications to the app. The proposed technology provides single-device virtualization and ensures that the interactions between the distributed UI elements across multiple devices remain intact.

This flexible multimodal interaction can be realized in diverse ubiquitous user experiences (UX), such as live video streaming and chatting apps including YouTube, LiveMe, and AfreecaTV. FLUID can ensure that the video is not obscured by the chat window by displaying the two on separate devices, which lets users chat while watching the video.

In addition, the UI for the destination input on a navigation app can be migrated into the passenger’s device with the help of FLUID, so that the destination can be easily and safely entered by the passenger while the driver is at the wheel.

FLUID can also support 5G multi-view apps – the latest service that allows sports or games to be viewed from various angles on a single device. With FLUID, the user can watch the event simultaneously from different viewpoints on multiple devices without switching between viewpoints on a single screen.

PhD candidate Sangeun Oh, who is the first author, and his team implemented the prototype of FLUID on the leading open-source mobile operating system, Android, and confirmed that it can successfully deliver the new UX to 20 existing legacy apps.

“This new technology can be applied to next-generation products from South Korean companies such as LG’s dual screen phone and Samsung’s foldable phone, and is expected to strengthen their competitiveness by giving them a head start in the global market,” said Professor Shin.

This study will be presented at the 25th Annual International Conference on Mobile Computing and Networking (ACM MobiCom 2019), held October 21 through 25 in Los Cabos, Mexico. The research was supported by the National Science Foundation (NSF) (CNS-1350883 (CAREER) and CNS-1618531).

Figure 1. Live video streaming and chatting app scenario

Figure 2. Navigation app scenario

Figure 3. 5G multi-view app scenario

Publication: Sangeun Oh, Ahyeon Kim, Sunjae Lee, Kilho Lee, Dae R. Jeong, Steven Y. Ko, and Insik Shin. 2019. FLUID: Flexible User Interface Distribution for Ubiquitous Multi-device Interaction. To be published in Proceedings of the 25th Annual International Conference on Mobile Computing and Networking (ACM MobiCom 2019). ACM, New York, NY, USA. Article Number and DOI Name TBD.

Video Material:

https://youtu.be/lGO4GwH4enA

Profile: Prof. Insik Shin, MS, PhD

ishin@kaist.ac.kr

https://cps.kaist.ac.kr/~ishin

Professor

Cyber-Physical Systems (CPS) Lab

School of Computing

Korea Advanced Institute of Science and Technology (KAIST)

http://kaist.ac.kr Daejeon 34141, Korea

Profile: Sangeun Oh, PhD Candidate

ohsang1213@kaist.ac.kr

https://cps.kaist.ac.kr/

PhD Candidate

Cyber-Physical Systems (CPS) Lab

School of Computing

Korea Advanced Institute of Science and Technology (KAIST)

http://kaist.ac.kr Daejeon 34141, Korea

Profile: Prof. Steve Ko, PhD

stevko@buffalo.edu

https://nsr.cse.buffalo.edu/?page_id=272

Associate Professor

Networked Systems Research Group

Department of Computer Science and Engineering

State University of New York at Buffalo

http://www.buffalo.edu/ Buffalo 14260, USA

(END)

2019.07.20 View 42627


Sound-based Touch Input Technology for Smart Tables and Mirrors

(from left: MS candidate Anish Byanjankar, Research Assistant Professor Hyosu Kim and Professor Insik Shin)

Time passes so quickly, especially in the morning. Your hands are so busy brushing your teeth and checking the weather on your smartphone. You might wish that your mirror could turn into a touch screen and free up your hands. That wish can be achieved very soon. A KAIST team has developed a smartphone-based touch sound localization technology to facilitate ubiquitous interactions, turning objects like furniture and mirrors into touch input tools.

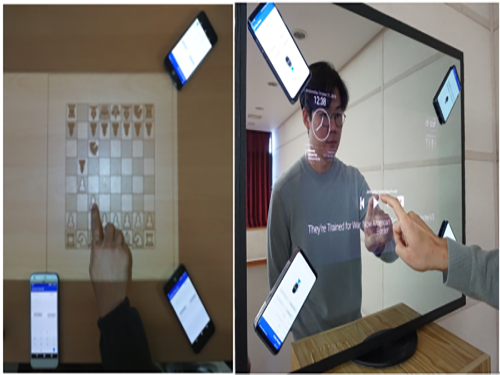

This technology analyzes touch sounds generated from a user’s touch on a surface and identifies the location of the touch input. For instance, users can turn surrounding tables or walls into virtual keyboards and write lengthy e-mails much more conveniently by using only the built-in microphone on their smartphones or tablets. Moreover, family members can play chess or other board games on a virtual board on their dining tables.

Additionally, traditional smart devices such as smart TVs or mirrors, which only provide simple screen display functions, can play a smarter role by adding touch input function support (see the image below).

Figure 1. Examples of using touch input technology: Using only a smartphone, you can use surrounding objects as a touch screen anytime and anywhere.

The most important requirement for sound-based touch input is to identify the location of touch inputs precisely (within about 1 cm of error). However, meeting this requirement is challenging, mainly because the technology can be used in diverse and dynamically changing environments. Users may use objects such as desks, walls, or mirrors as touch input tools, and the surrounding environment (e.g., the location of nearby objects or the ambient noise level) can vary. These environmental changes can affect the characteristics of touch sounds.

To address this challenge, Professor Insik Shin from the School of Computing and his team focused on analyzing the fundamental properties of touch sounds, especially how they are transmitted through solid surfaces.

On solid surfaces, sound undergoes dispersion, which makes different frequency components travel at different speeds. Based on this phenomenon, the team observed that the time difference of arrival (TDoA) between frequency components increases in proportion to the sound transmission distance, and that this linear relationship is not affected by variations in the surrounding environment.

Based on these observations, Research Assistant Professor Hyosu Kim proposed a novel sound-based touch input technology that records touch sounds transmitted through solid surfaces, then conducts a simple calibration process to identify the relationship between TDoA and the sound transmission distance, finally achieving accurate touch input localization.
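The calibration idea described above can be illustrated with a minimal sketch. It assumes only what the article states, namely that TDoA grows linearly with transmission distance, and fits that line with ordinary least squares. The calibration values, units, and function names below are made up for illustration and are not taken from the team's system.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit: ys ~ slope * xs + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical calibration: touches at known distances from a microphone,
# each paired with the TDoA measured between two frequency components.
calib_distance_cm = [5.0, 10.0, 20.0, 30.0]
calib_tdoa_ms = [0.11, 0.21, 0.41, 0.61]  # illustrative values only

# Learn the linear map from TDoA to distance on this particular surface.
slope, intercept = fit_line(calib_tdoa_ms, calib_distance_cm)


def estimate_distance_cm(tdoa_ms):
    """Convert a newly measured TDoA into an estimated transmission distance."""
    return slope * tdoa_ms + intercept


estimated = estimate_distance_cm(0.31)
```

Distances estimated this way from several microphones could then be combined to locate the touch point on the surface; that step is omitted here.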

The accuracy of the proposed system was then measured. The average localization error was less than about 0.4 cm on a 17-inch touch screen. In particular, the measurement error stayed below 1 cm across a variety of objects such as wooden desks, glass mirrors, and acrylic boards, even when the position of nearby objects and noise levels changed dynamically. Experiments with actual users also drew positive responses on all measured factors, including user experience and accuracy.

Professor Shin said, “This is a novel touch interface technology that enables a touch input system just by installing three to four microphones, so it can easily turn nearby objects into touch screens.”

The proposed system was presented at ACM SenSys, a top-tier conference in the field of mobile computing and sensing, and was selected as a best paper runner-up in November 2018.

(The demonstration video of the sound-based touch input technology)

2018.12.26 View 10546


Multi-Device Mobile Platform for App Functionality Sharing

Case 1. Mr. Kim, an employee, logged on to his SNS account using a tablet PC at the airport while traveling overseas. However, a malicious virus was installed on the tablet PC and some photos posted on his SNS were deleted by someone else.

Case 2. Mr. and Mrs. Brown are busy contacting credit card and game companies because their son, who likes games, purchased a million dollars’ worth of game items using his smartphone.

Case 3. Mr. Park, who enjoys games, bought a sensor-based racing game through his tablet PC. However, he could not enjoy the racing game on his tablet because it was not comfortable to tilt the device for game control.

The above cases are some of the various problems that can arise in modern society where diverse smart devices, including smartphones, exist. Recently, new technology has been developed to easily solve these problems. Professor Insik Shin from the School of Computing has developed ‘Mobile Plus,’ which is a mobile platform that can share the functionalities of applications between smart devices.

This is a novel technology that allows applications to easily share their functionalities without needing any modifications. Smartphone users often use Facebook to log in to another SNS account like Instagram, or use a gallery app to post some photos on their SNS. These examples are possible, because the applications share their login and photo management functionalities.

Functionality sharing enables users to use smartphones in varied and convenient ways and allows app developers to create applications easily. However, current mobile platforms such as Android or iOS only support functionality sharing within a single mobile device. Sharing functionalities across devices is burdensome for both developers and users, because developers would need to create more complex applications and users would need to install the applications on each device.

To address this problem, Professor Shin’s research team developed platform technology to support functionality sharing between devices. The main concept is using virtualization to give the illusion that the applications running on separate devices are on a single device. They achieved this virtualization by extending an RPC (Remote Procedure Call) scheme to multi-device environments.
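To make the RPC idea concrete, here is a minimal, generic sketch of a remote procedure call: a local stub turns a method call into a serialized message, and a handler on the other device executes it and returns the result. This is not Mobile Plus's implementation. The class names, the JSON message format, and the in-process "device" that stands in for a network link are all assumptions made for illustration.

```python
import json


class CameraService:
    """Hypothetical functionality that lives on the remote device."""

    def take_photo(self, resolution):
        return f"photo@{resolution}"


class RemoteDevice:
    """Stands in for the second device; handles serialized requests."""

    def __init__(self):
        self._services = {"camera": CameraService()}

    def handle(self, request_bytes):
        request = json.loads(request_bytes)
        service = self._services[request["service"]]
        method = getattr(service, request["method"])
        result = method(*request["args"])
        return json.dumps({"result": result}).encode()


class RemoteStub:
    """Local stand-in that forwards any method call as an RPC."""

    def __init__(self, device, service_name):
        self._device = device
        self._service_name = service_name

    def __getattr__(self, method_name):
        # Only reached for names not found on the stub itself.
        def call(*args):
            request = json.dumps({
                "service": self._service_name,
                "method": method_name,
                "args": list(args),
            }).encode()
            # A real system would send these bytes over a network link.
            response = self._device.handle(request)
            return json.loads(response)["result"]

        return call


# The caller uses the stub as if the camera were on the same device.
camera = RemoteStub(RemoteDevice(), "camera")
photo = camera.take_photo("1080p")
```

Because the caller only ever sees the stub, the calling code does not need to know that the functionality runs elsewhere, which is the single-device illusion the article describes.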

This virtualization technology enables the existing applications to share their functionalities without needing any modifications, regardless of the type of applications. So users can now use them without additional purchases or updates. Mobile Plus can support hardware functionalities like cameras, microphones, and GPS as well as application functionalities such as logins, payments, and photo sharing. Its greatest advantage is its wide range of possible applications.

Professor Shin said, "Mobile Plus is expected to have great synergy with smart home and smart car technologies. It can provide novel user experiences (UXs) so that users can easily utilize various applications of smart home/vehicle infotainment systems by using a smartphone as their hub."

This research was published at ACM MobiSys, an international conference on mobile computing that was hosted in the United States on June 21.

Figure 1. Users can securely log on to SNS accounts by using their personal devices.

Figure 2. Parents can control their children’s impulse shopping.

Figure 3. Users can enjoy games more by using the smartphone as a controller.

2017.08.09 View 11369
