
A KAIST research team has developed a new context-awareness technology that enables AI assistants to determine when to talk to their users based on user circumstances. This technology can contribute to developing advanced AI assistants that can offer pre-emptive services such as reminding users to take medication on time or modifying schedules based on the actual progress of planned tasks.

Unlike conventional AI assistants, which act passively on users’ commands, today’s AI assistants are evolving to provide more proactive services by reasoning about user circumstances on their own. This opens up new opportunities for AI assistants to better support users in their daily lives. However, if AI assistants do not talk at the right time, they may interrupt their users rather than help them.

The right time for talking is more difficult for AI assistants to determine than it appears. This is because the context can differ depending on the state of the user or the surrounding environment.

A group of researchers led by Professor Uichin Lee from the KAIST School of Computing identified key contextual factors in user circumstances that determine when the AI assistant should start, stop, or resume engaging in voice services in smart home environments. Their findings were published in the Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies (IMWUT) in September.

The group conducted this study in collaboration with Professor Jae-Gil Lee’s group in the KAIST School of Computing, Professor Sangsu Lee’s group in the KAIST Department of Industrial Design, and Professor Auk Kim’s group at Kangwon National University.

After developing smart speakers equipped with an AI assistant function for experimental use, the researchers installed them in the rooms of 40 students living in double-occupancy campus dormitories and collected a total of 3,500 in-situ user response records over a period of one week.

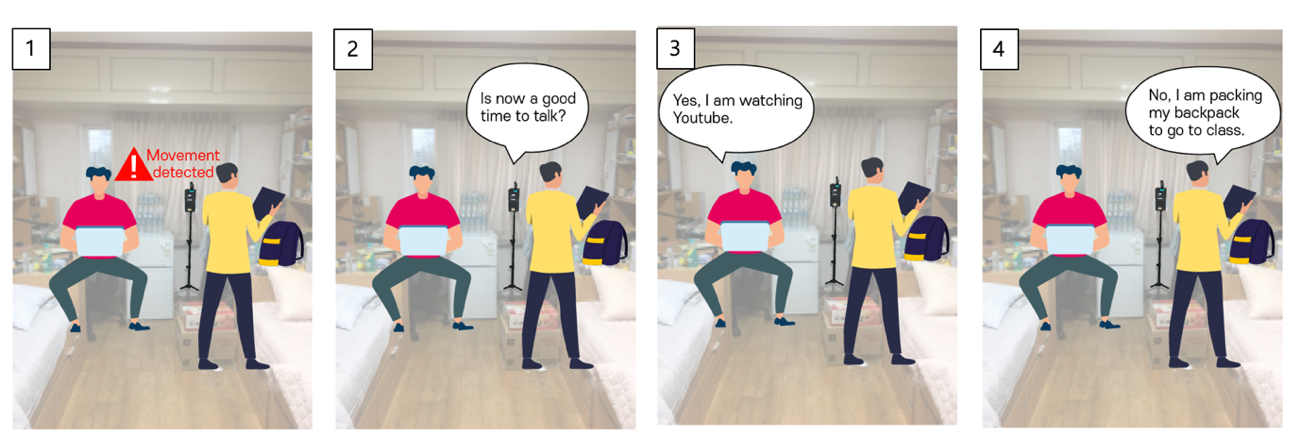

The smart speakers repeatedly asked the students the question “Is now a good time to talk?” at random intervals or whenever a student’s movement was detected. Students answered with either “yes” or “no” and then explained why, describing what they had been doing before the smart speaker questioned them.
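The sampling procedure described above can be illustrated with a minimal sketch. This is not the research team's implementation: the class name `SamplingTrigger`, the helper `record_response`, and the interval bounds are hypothetical and chosen only for illustration. It shows a prompt being triggered either by detected movement or once a randomly drawn interval has elapsed, followed by logging of the yes/no answer and the stated reason.

```python
import random
from dataclasses import dataclass

PROMPT = "Is now a good time to talk?"


@dataclass
class SamplingTrigger:
    """Hypothetical trigger: prompt on movement or after a random interval."""
    min_gap_s: float = 600.0    # assumed lower bound, not taken from the study
    max_gap_s: float = 1800.0   # assumed upper bound, not taken from the study
    rng: random.Random = None
    next_due_s: float = 0.0

    def __post_init__(self):
        if self.rng is None:
            self.rng = random.Random()
        # Draw the first random prompt time.
        self.next_due_s = self.rng.uniform(self.min_gap_s, self.max_gap_s)

    def should_prompt(self, now_s: float, motion_detected: bool) -> bool:
        """Return True if the speaker should ask PROMPT at time now_s."""
        if motion_detected or now_s >= self.next_due_s:
            # Schedule the next random prompt relative to this one.
            gap = self.rng.uniform(self.min_gap_s, self.max_gap_s)
            self.next_due_s = now_s + gap
            return True
        return False


def record_response(log: list, now_s: float, answer: str, reason: str) -> None:
    """Append one in-situ response: a yes/no answer plus the stated reason."""
    if answer not in ("yes", "no"):
        raise ValueError("answer must be 'yes' or 'no'")
    log.append({"time_s": now_s, "answer": answer, "reason": reason})
```

In use, a device loop would call `should_prompt` with the current time and the motion sensor state, speak `PROMPT` when it returns `True`, and pass the spoken reply to `record_response`.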

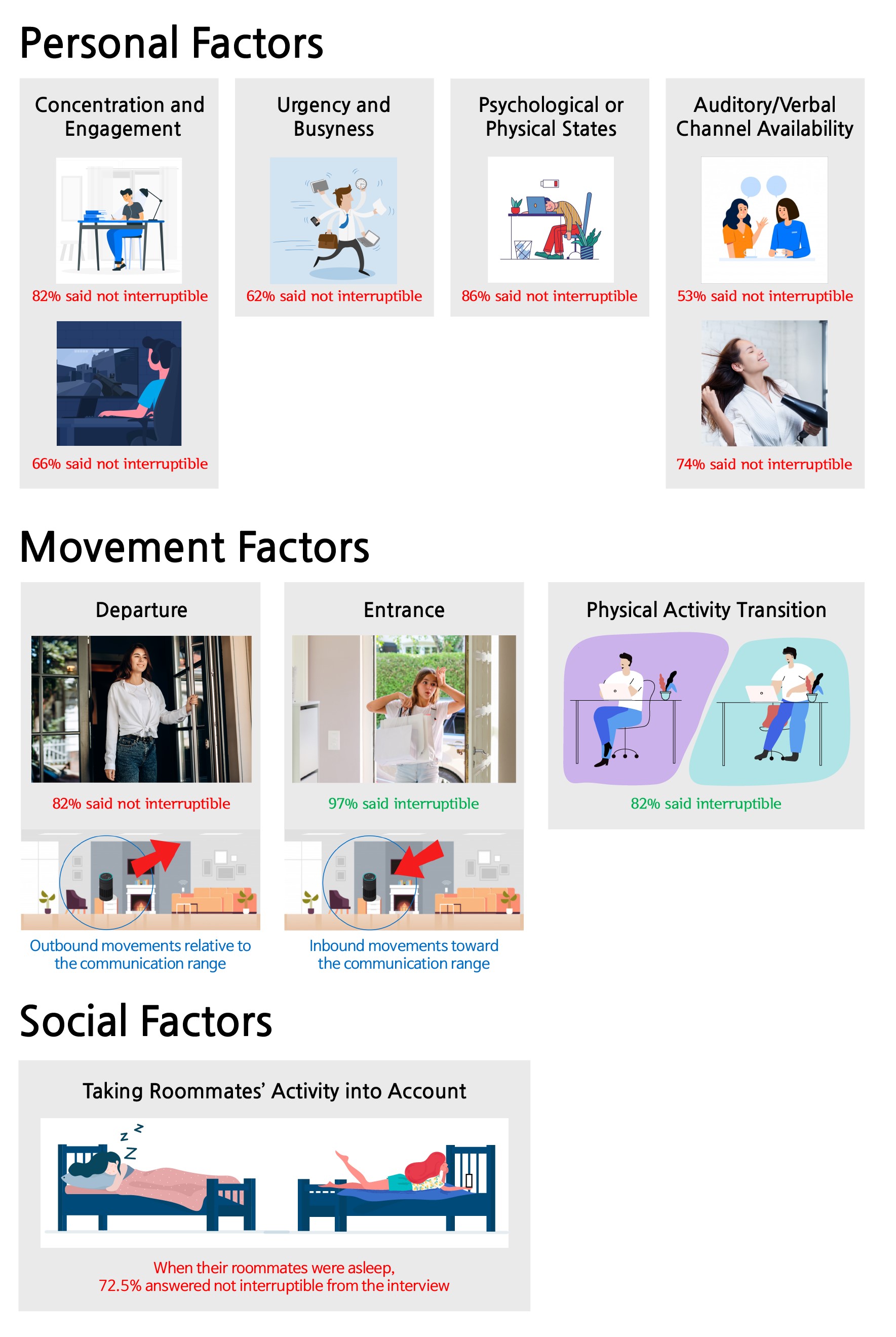

Data analysis revealed that 47% of user responses were “no,” indicating that the users did not want to be interrupted. The research team then created 19 home activity categories to cross-analyze the key contextual factors that determine opportune moments for AI assistants to talk, and classified these factors as ‘personal,’ ‘movement,’ or ‘social.’

Personal factors, for instance, include:

1. the degree of concentration on or engagement in activities,

2. the degree of urgency and busyness,

3. the state of the user’s mental or physical condition, and

4. the state of being able to talk or listen while multitasking.

When users were concentrating on studying, tired, or drying their hair, they found it difficult to engage in conversational interactions with the smart speakers.

Some representative movement factors include departure, entrance, and physical activity transitions. Interestingly, in movement scenarios, the team found that the communication range was an important factor. Departure is movement away from the smart speaker (outbound), and entrance is movement toward it (inbound). Users were much more available during inbound movement scenarios than during outbound ones.

In general, smart speakers are located in a shared place at home, such as a living room, where multiple family members gather at the same time. In the experiment by Professor Lee’s group, almost half of the in-situ user responses were collected when both roommates were present. The group found that social presence also influenced interruptibility. Roommates often wanted to minimize possible interpersonal conflicts, such as disturbing their roommates' sleep or work.

Narae Cha, the lead author of this study, explained, “By considering personal, movement, and social factors, we can envision a smart speaker that can intelligently manage the timing of conversations with users.”

She believes that this work lays the foundation for the future of AI assistants, adding, “Multi-modal sensory data can be used for context sensing, and this context information will help smart speakers proactively determine when it is a good time to start, stop, or resume conversations with their users.”

This work was supported by the National Research Foundation (NRF) of Korea.

< Image 1. In-situ experience sampling of user availability for conversations with AI assistants >

< Image 2. Key Contextual Factors that Determine Optimal Timing for AI Assistants to Talk >

Publication:

Cha, N, et al. (2020) “Hello There! Is Now a Good Time to Talk?”: Opportune Moments for Proactive Interactions with Smart Speakers. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies (IMWUT), Vol. 4, No. 3, Article No. 74, pp. 1-28. Available online at https://doi.org/10.1145/3411810

Link to Introductory Video:

https://youtu.be/AA8CTi2hEf0

Profile:

Uichin Lee

Associate Professor

uclee@kaist.ac.kr

http://ic.kaist.ac.kr

Interactive Computing Lab.

School of Computing

https://www.kaist.ac.kr

Korea Advanced Institute of Science and Technology (KAIST)

Daejeon, Republic of Korea

(END)
