Electrical Engineering

A KAIST research team unveils new path for dense photonic integration

Integrated optical semiconductor (hereinafter, optical semiconductor) technology is a next-generation semiconductor technology that is attracting extensive research and investment worldwide because it can integrate complex optical systems such as LiDAR, quantum sensors, and quantum computers onto a single small chip. In conventional semiconductor technology, the key has been how small devices can be made, at scales of 5 or 2 nanometers. In optical semiconductor devices, likewise, increasing the degree of integration is a key technology that determines performance, price, and energy efficiency.

KAIST (President Kwang-Hyung Lee) announced on the 19th that a research team led by Professor Sangsik Kim of the Department of Electrical and Electronic Engineering discovered a new optical coupling mechanism that can increase the degree of integration of optical semiconductor devices by more than 100 times.

The number of elements that can be placed on a chip is called the degree of integration. However, it is very difficult to increase the degree of integration of optical semiconductor devices, because the wave nature of light causes crosstalk between adjacent devices.
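To make the crosstalk problem concrete, the following minimal sketch uses the textbook coupled-mode result for two identical, lossless waveguides, in which the fraction of power that leaks into the neighbor after a length z is sin²(κz). This is a standard idealization, not the model used in the paper, and the coupling coefficients and the 100 µm length are hypothetical values chosen only for illustration.

```python
import math


def coupled_power_fraction(kappa_per_um: float, length_um: float) -> float:
    """Fraction of power transferred to the neighboring waveguide.

    Textbook coupled-mode result for two identical, lossless waveguides:
    P2 / P0 = sin^2(kappa * z).
    """
    return math.sin(kappa_per_um * length_um) ** 2


def coupling_length_um(kappa_per_um: float) -> float:
    """Length over which all power moves to the neighbor: pi / (2 * kappa)."""
    return math.pi / (2.0 * kappa_per_um)


def crosstalk_db(kappa_per_um: float, length_um: float) -> float:
    """Crosstalk in dB; returns -inf when no power is transferred."""
    fraction = coupled_power_fraction(kappa_per_um, length_um)
    if fraction == 0.0:
        return float("-inf")
    return 10.0 * math.log10(fraction)


if __name__ == "__main__":
    length_um = 100.0  # hypothetical device length
    for kappa in (1e-2, 1e-3, 1e-4, 0.0):  # hypothetical coupling coefficients
        print(f"kappa={kappa:g} /um -> crosstalk {crosstalk_db(kappa, length_um):.1f} dB")
```

In this idealized picture, a smaller coupling coefficient means a longer coupling length, so waveguides can sit closer together for the same device length without exchanging power. A coupling coefficient of exactly zero corresponds to zero crosstalk.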

In previous studies, it was possible to reduce the crosstalk of light only in specific polarizations. In this study, by discovering a new light coupling mechanism, the research team developed a method to increase the degree of integration even under polarization conditions for which this was previously considered impossible.

This study, led by Professor Sangsik Kim as the corresponding author and conducted with students he taught at Texas Tech University, was published in the international journal 'Light: Science & Applications' [IF=20.257] on June 2nd. (Paper title: Anisotropic leaky-like perturbation with subwavelength gratings enables zero crosstalk).

Professor Sangsik Kim said, "The interesting thing about this study is that it paradoxically eliminated the crosstalk through leaky waves (light that tends to spread sideways), which were previously thought to increase crosstalk." He added, "If the optical coupling method using the leaky wave revealed in this study is applied, it will be possible to develop various optical semiconductor devices that are smaller and have less noise."

Professor Sangsik Kim is a researcher recognized for his expertise and research in optical semiconductor integration. In his previous research, he developed an all-dielectric metamaterial that can control how far light spreads laterally by patterning a semiconductor structure at a size smaller than the wavelength, and demonstrated experimentally that it improves the degree of integration of optical semiconductors. These studies were reported in ‘Nature Communications’ (Vol. 9, Article 1893, 2018) and ‘Optica’ (Vol. 7, pp. 881-887, 2020). In recognition of these achievements, Professor Kim has received the NSF CAREER Award from the National Science Foundation (NSF) and the Young Scientist Award from the Association of Korean-American Scientists and Engineers.

Meanwhile, this research was carried out with support from the New Research Project of Excellence of the National Research Foundation of Korea and the National Science Foundation of the US.

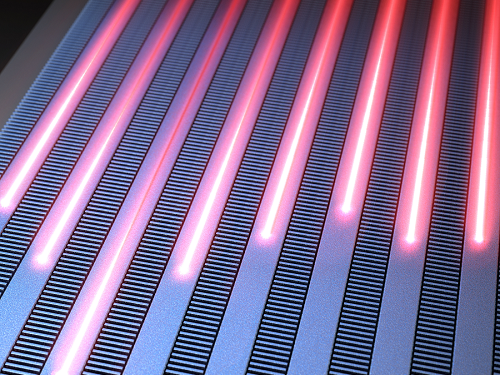

< Figure 1. Illustration depicting light propagation without crosstalk in the waveguide array of the developed metamaterial-based optical semiconductor >

2023.06.21 View 8579

KAIST debuts “DreamWaQer” - a quadrupedal robot that can walk in the dark

- The team led by Professor Hyun Myung of the School of Electrical Engineering developed “DreamWaQ”, a deep reinforcement learning-based control technology that enables a walking robot to walk in atypical environments without visual and/or tactile information

- Utilization of “DreamWaQ” technology can enable mass production of various types of “DreamWaQers”

- Expected to be used for exploring atypical environments under unusual circumstances such as fire disasters

A team of Korean engineering researchers has developed a quadrupedal robot technology that can climb up and down steps and move without falling over on uneven terrain such as tree roots, without the help of visual or tactile sensors, even in disaster situations in which visual confirmation is impeded by darkness or thick smoke from flames.

KAIST (President Kwang Hyung Lee) announced on the 29th of March that Professor Hyun Myung's research team at the Urban Robotics Lab in the School of Electrical Engineering developed a walking robot control technology that enables robust 'blind locomotion' in various atypical environments.

< (From left) Prof. Hyun Myung, Doctoral Candidates I Made Aswin Nahrendra, Byeongho Yu, and Minho Oh. In the foreground is the DreamWaQer, a quadrupedal robot equipped with DreamWaQ technology. >

The KAIST research team named the technology "DreamWaQ" because it enables walking robots to move about even in the dark, just as a person fresh out of bed can walk to the bathroom in the dark without visual help. With this technology installed on any legged robot, it will be possible to create various types of "DreamWaQers".

Existing walking robot controllers are based on kinematics and/or dynamics models, an approach referred to as model-based control. In atypical environments such as open, uneven fields in particular, the robot needs to obtain feature information about the terrain more quickly in order to maintain stability as it walks. However, this approach has been shown to depend heavily on the robot's ability to perceive the surrounding environment.

In contrast, the controller developed by Professor Hyun Myung's research team, which is based on deep reinforcement learning (RL) methods, can quickly calculate appropriate control commands for each motor of the walking robot using data from various environments obtained in a simulator. Whereas existing controllers trained in simulation required separate re-tuning to work on an actual robot, the controller developed by the research team is expected to be easily applied to various walking robots because it does not require an additional tuning process.

DreamWaQ, the controller developed by the research team, consists mainly of a context-aided estimator network that estimates ground and robot information and a policy network that computes control commands. The context-aided estimator network estimates the ground information implicitly and the robot’s status explicitly from inertial information and joint information. This information is fed into the policy network, which uses it to generate optimal control commands. Both networks are trained together in simulation.

While the context-aided estimator network is trained through supervised learning, the policy network is trained through an actor-critic architecture, a deep RL methodology. The actor network can only implicitly infer surrounding terrain information. In the simulation, the surrounding terrain information is known, and the critic, or value network, which has the exact terrain information, evaluates the policy of the actor network.
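The data flow described above can be sketched as follows. This is a minimal illustration with untrained, randomly initialized networks, not the research team's implementation. All dimensions, layer sizes, and function names are hypothetical, and treating the explicit robot-status estimate as a 3D body velocity is an assumption made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# All sizes below are illustrative assumptions, not values from the paper.
OBS_DIM = 45       # proprioception: inertial (IMU) and joint readings
HISTORY = 5        # number of past observations given to the estimator
VEL_DIM = 3        # explicit estimate (assumed here to be body velocity)
LATENT_DIM = 16    # implicit terrain/context code
ACTION_DIM = 12    # one command per joint motor of a quadruped
TERRAIN_DIM = 32   # exact terrain samples, available only in simulation


def mlp(sizes):
    """Create randomly initialized (weight, bias) pairs for a small MLP."""
    return [(rng.standard_normal((m, n)) * 0.1, np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]


def forward(layers, x):
    """Run an MLP with tanh on hidden layers and a linear output layer."""
    for i, (w, b) in enumerate(layers):
        x = x @ w + b
        if i < len(layers) - 1:
            x = np.tanh(x)
    return x


estimator = mlp([OBS_DIM * HISTORY, 64, VEL_DIM + LATENT_DIM])
actor = mlp([OBS_DIM + VEL_DIM + LATENT_DIM, 64, ACTION_DIM])
critic = mlp([OBS_DIM + TERRAIN_DIM, 64, 1])


def estimate(obs_history):
    """Estimator: explicit robot state plus implicit terrain code."""
    out = forward(estimator, obs_history.reshape(-1))
    return out[:VEL_DIM], out[VEL_DIM:]


def act(obs, obs_history):
    """Actor: sees only proprioception and the estimator outputs."""
    velocity, latent = estimate(obs_history)
    return forward(actor, np.concatenate([obs, velocity, latent]))


def value(obs, terrain):
    """Critic: also sees the exact terrain, so it is used only in simulation."""
    return forward(critic, np.concatenate([obs, terrain]))[0]
```

The point of the asymmetry is that `value` needs terrain information that only the simulator can provide, while `act` depends only on signals the real robot can measure, so the critic can be dropped after training.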

This whole learning process takes only about an hour on a GPU-enabled PC, and only the trained actor network is deployed on the actual robot. Without looking at the surrounding terrain, the robot imagines which of the various environments learned in simulation its current environment resembles, using only its internal inertial sensor (IMU) and joint angle measurements. If it suddenly encounters an offset, such as a staircase, it will not know until its foot touches the step, but it quickly draws up terrain information the moment its foot touches the surface. The control command suited to the estimated terrain information is then transmitted to each motor, enabling rapidly adapted walking.

The DreamWaQer robot walked not only in the laboratory, but also outdoors around the campus over many curbs and speed bumps and across a field with many tree roots and gravel. It demonstrated its abilities by climbing stairs with a step height two-thirds the height of its body. In addition, the research team confirmed that it was capable of stable walking regardless of the environment, at speeds ranging from a slow 0.3 m/s to a rather fast 1.0 m/s.

The results of this study were produced by doctoral student I Made Aswin Nahrendra as the first author and his colleague Byeongho Yu as a co-author. The paper has been accepted for presentation at the upcoming IEEE International Conference on Robotics and Automation (ICRA), scheduled to be held in London at the end of May. (Paper title: DreamWaQ: Learning Robust Quadrupedal Locomotion With Implicit Terrain Imagination via Deep Reinforcement Learning)

Videos of the walking robot DreamWaQer equipped with the developed DreamWaQ can be found at the addresses below.

Main Introduction: https://youtu.be/JC1_bnTxPiQ Experiment Sketches: https://youtu.be/mhUUZVbeDA0

Meanwhile, this research was carried out with support from the Robot Industry Core Technology Development Program of the Ministry of Trade, Industry and Energy (MOTIE). (Task title: Development of Mobile Intelligence SW for Autonomous Navigation of Legged Robots in Dynamic and Atypical Environments for Real Application)

< Figure 1. Overview of DreamWaQ, a controller developed by this research team. This network consists of an estimator network that learns implicit and explicit estimates together, a policy network that acts as a controller, and a value network that provides guides to the policies during training. When implemented in a real robot, only the estimator and policy network are used. Both networks run in less than 1 ms on the robot's on-board computer. >

< Figure 2. Since the estimator can implicitly estimate the ground information as the foot touches the surface, it is possible to adapt quickly to rapidly changing ground conditions. >

< Figure 3. Results showing that even a small walking robot was able to overcome steps with height differences of about 20cm. >

2023.05.18 View 12336

KAIST researchers devise a technology to utilize ultrahigh-resolution micro-LED with 40% reduced self-generated heat

In today's digitized life, various forms of future displays, such as wearable and rollable displays, are required. More and more people want to connect to the virtual world anytime, anywhere through smart glasses or smartwatches. There is even talk of a medical diagnosis kit on a shirt and a theatre in a hat. However, these are not yet within reach because of technical limitations: it is hard to fit enough pixels into the limited surface area of a pair of glasses while keeping power consumption at a level that a handheld battery can supply, even though a resolution of 4K or higher is needed to fully immerse users in augmented or virtual reality through wireless smart glasses or similar devices.

KAIST (President Kwang Hyung Lee) announced on the 22nd that Professor Sang Hyeon Kim's research team of the Department of Electrical and Electronic Engineering re-examined the efficiency degradation of micro-LEDs (μLEDs), whose pixels are micrometers in size (μm, one millionth of a meter), and found that the problem can be fundamentally resolved through epitaxial structure engineering.

Epitaxy refers to the process of stacking gallium nitride crystals, which serve as the light-emitting body, on top of an ultrapure silicon or sapphire substrate that is used as the medium for μLEDs.

μLED is being actively studied because it offers superior brightness, contrast ratio, and lifespan compared to OLED. In 2018, Samsung Electronics commercialized a μLED-equipped product called 'The Wall', and there are prospects that Apple may launch a μLED-equipped product in 2025.

To manufacture μLEDs, pixels are formed by cutting the epitaxial structure grown on a wafer into cylinder or cuboid shapes through an etching process that involves plasma. However, the plasma generates defects on the sidewalls of the pixels as they are formed.

Therefore, as the pixel size becomes smaller and the resolution increases, the ratio of the surface area to the volume of the pixel increases, and the sidewall defects that occur during processing further reduce the efficiency of the μLED. Accordingly, a considerable amount of research has been conducted on mitigating or removing sidewall defects, but such methods offer limited improvement because they must be applied at the post-processing stage, after the growth of the epitaxial structure is finished.
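The scaling argument above can be checked with a short calculation. The sketch below assumes an idealized cylindrical pixel, for which the sidewall area is πdh and the volume is πd²h/4, so their ratio is 4/d and does not depend on the height. The pixel diameters and the 1 µm height are hypothetical values chosen for illustration, not dimensions from the study.

```python
import math


def sidewall_to_volume_ratio(diameter_um: float, height_um: float = 1.0) -> float:
    """Sidewall area divided by volume for an idealized cylindrical pixel.

    The result is in 1/um and simplifies to 4 / diameter, independent of height.
    """
    sidewall_area = math.pi * diameter_um * height_um
    volume = math.pi * (diameter_um / 2.0) ** 2 * height_um
    return sidewall_area / volume


if __name__ == "__main__":
    for diameter in (100.0, 20.0, 10.0, 1.0):  # hypothetical pixel diameters in um
        ratio = sidewall_to_volume_ratio(diameter)
        print(f"diameter {diameter:6.1f} um -> sidewall/volume = {ratio:.3f} per um")
```

Shrinking the pixel diameter by a factor of ten raises the sidewall-to-volume ratio by the same factor, which is why the same density of sidewall defects hurts small pixels much more than large ones.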

The research team identified that there is a difference in the current moving to the sidewall of the μLED depending on the epitaxial structure during μLED device operation, and based on the findings, the team built a structure that is not sensitive to sidewall defects to solve the problem of reduced efficiency due to miniaturization of μLED devices. In addition, the proposed structure reduced the self-generated heat while the device was running by about 40% compared to the existing structure, which is also of great significance in commercialization of ultrahigh-resolution μLED displays.

This study was led by Woo Jin Baek of Professor Sang Hyeon Kim's research team at the KAIST School of Electrical and Electronic Engineering as the first author, with guidance from Professor Sang Hyeon Kim and Professor Dae-Myeong Geum of Chungbuk National University (who was with the team as a postdoctoral researcher at the time) as corresponding authors. It was published in the international journal 'Nature Communications' on March 17th. (Title of the paper: Ultra-low-current driven InGaN blue micro light-emitting diodes for electrically efficient and self-heating relaxed microdisplay).

Professor Sang Hyeon Kim said, "This technological development is meaningful in that it identifies the cause of the drop in efficiency, which was an obstacle to the miniaturization of μLED, and solves it through the design of the epitaxial structure." He added, "We look forward to it being used in the manufacturing of ultrahigh-resolution displays in the future."

This research was carried out with the support of the Samsung Future Technology Incubation Center.

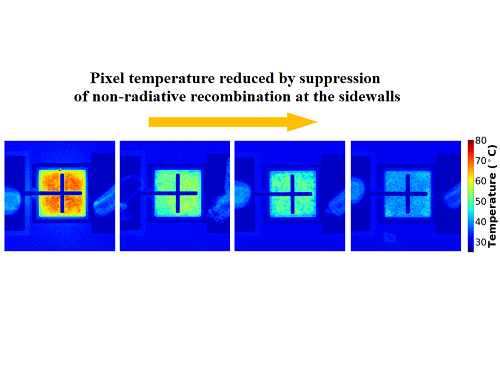

Figure 1. Image of electroluminescence distribution of μLEDs fabricated from epitaxial structures with quantum barriers of different thicknesses while the current is running

Figure 2. Thermal distribution images of devices fabricated with different epitaxial structures under the same amount of light.

Figure 3. Normalized external quantum efficiency of the device fabricated with the optimized epitaxial structure by sizes.

2023.03.23 View 8372

KAIST develops 'MetaVRain' that realizes vivid 3D real-life images

KAIST (President Kwang Hyung Lee) has developed MetaVRain, a high-speed, low-power artificial intelligence (AI) semiconductor* that implements AI-based 3D rendering capable of rendering images close to real life on mobile devices.

* AI semiconductor: Semiconductor equipped with artificial intelligence processing functions such as recognition, reasoning, learning, and judgment, and implemented with optimized technology based on super intelligence, ultra-low power, and ultra-reliability

The AI semiconductor developed by the research team replaces the existing ray-tracing*-based 3D rendering driven by GPUs with AI-based 3D rendering on a newly manufactured AI semiconductor. Because a 3D video capture studio, which requires enormous costs, is no longer needed, the cost of 3D model production can be greatly reduced, and the memory used can be reduced by more than 180 times. In particular, existing 3D graphics editing and design, which relied on complex software such as Blender, is replaced with simple AI training, so the general public can easily apply and edit the desired style.

* Ray-tracing: Technology that obtains images close to real life by tracing the trajectory of all light rays that change according to the light source, shape and texture of the object

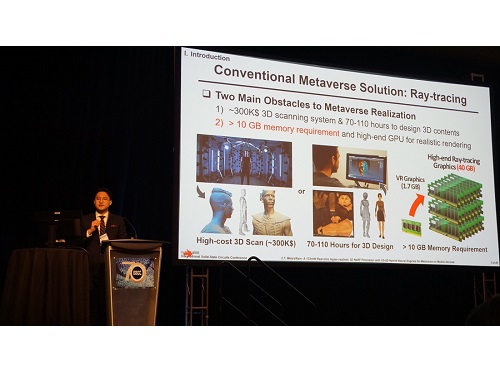

This research, in which doctoral student Donghyeon Han participated as the first author, was presented at the International Solid-State Circuits Conference (ISSCC), which was held in San Francisco, USA from February 18th to 22nd and attended by semiconductor researchers from all over the world.

(Paper Number 2.7, Paper Title: MetaVRain: A 133mW Real-time Hyper-realistic 3D NeRF Processor with 1D-2D Hybrid Neural Engines for Metaverse on Mobile Devices (Authors: Donghyeon Han, Junha Ryu, Sangyeob Kim, Sangjin Kim, and Hoi-Jun Yoo))

Professor Yoo's team identified inefficient operations that occur when 3D rendering is implemented through AI, and developed a new-concept semiconductor that reduces them by incorporating human visual recognition methods. When a person remembers an object, they start with a rough outline and gradually refine its shape, and if it is an object they saw just before, they can immediately guess what it looks like now. Imitating this cognitive process, the newly developed semiconductor first grasps the rough shape of an object through low-resolution voxels and then minimizes the computation required for the current rendering by reusing the results of past rendering.
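The coarse-to-fine idea described above can be illustrated with a minimal Python sketch. It is not the MetaVRain hardware design: the function names, the unit-cube scene, and the set-based occupancy grid are all assumptions made for illustration, and the reuse of previous-frame results is not shown. The sketch only demonstrates how a low-resolution voxel grid lets a renderer skip expensive evaluations in empty space.

```python
from typing import Callable, Set, Tuple

Point = Tuple[float, float, float]
Voxel = Tuple[int, int, int]


def coarse_index(p: Point, cells: int) -> Voxel:
    """Map a point in the unit cube to its low-resolution voxel index (hypothetical helper)."""
    return tuple(min(int(c * cells), cells - 1) for c in p)


def march_ray(
    origin: Point,
    direction: Point,
    steps: int,
    occupied: Set[Voxel],
    cells: int,
    expensive_density: Callable[[Point], float],
) -> Tuple[float, int]:
    """Sample a ray, calling the costly model only inside occupied coarse voxels.

    Returns the accumulated density and the number of expensive evaluations.
    """
    total = 0.0
    evaluations = 0
    for i in range(steps):
        t = (i + 0.5) / steps  # midpoint of the i-th segment along the ray
        p = tuple(o + t * d for o, d in zip(origin, direction))
        if coarse_index(p, cells) not in occupied:
            continue  # empty according to the coarse grid: skip the costly call
        total += expensive_density(p)
        evaluations += 1
    return total, evaluations


# Usage: one occupied coarse voxel in a 4x4x4 grid, ray along the cube diagonal.
occupied_voxels = {(1, 1, 1)}
total, evaluations = march_ray(
    (0.0, 0.0, 0.0), (1.0, 1.0, 1.0), 8, occupied_voxels, 4, lambda p: 1.0
)
print(total, evaluations)
```

In this toy setup only the samples that fall inside the single occupied voxel trigger the expensive function, so most of the ray is skipped; a real renderer would replace the lambda with a neural network query.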

MetaVRain, developed by Professor Yoo's team, achieved the world's best performance by combining a state-of-the-art CMOS chip with a hardware architecture that mimics the human visual recognition process. MetaVRain is optimized for AI-based 3D rendering and achieves a rendering speed of 100 FPS or more, which is 911 times faster than conventional GPUs. In addition, its energy efficiency, measured as the energy consumed to process one video frame, is 26,400 times higher than that of a GPU, opening up the possibility of AI-based real-time rendering in VR/AR headsets and mobile devices.

To show an example of using MetaVRain, the research team also developed a smart 3D rendering application system and demonstrated changing the style of a 3D model according to the user's preferred style. Since the user only needs to give the AI an image of the desired style and retrain it, the style of the 3D model can be changed easily without the help of complicated software. Beyond the application system implemented by Professor Yoo's team, various other applications are expected to be possible, such as creating a realistic 3D avatar modeled after a user's face, creating 3D models of various structures, and changing the weather to suit a film production environment.

Starting with MetaVRain, the research team expects that AI will begin to take over the field of 3D graphics as well, and stated that the combination of AI and 3D graphics is a major technological innovation for the realization of the metaverse.

Professor Hoi-Jun Yoo of the Department of Electrical and Electronic Engineering at KAIST, who led the research, said, “Currently, 3D graphics are focused on depicting what an object looks like, not how people see it. The significance of this study is that it enabled efficient 3D graphics by borrowing and imitating the way people recognize and express objects.” He also foresaw the future, saying, “The realization of the metaverse will be achieved through innovation in artificial intelligence technology and innovation in artificial intelligence semiconductors, as shown in this study.”

Figure 1. Description of the MetaVRain demo screen

Photo of Presentation at the International Solid-State Circuits Conference (ISSCC)

2023.03.13 View 8505

KAIST’s unmanned racing car to race in the Indy Autonomous Challenge @ CES 2023 as the only contender representing Asia

- Professor David Hyunchul Shim of the School of Electrical Engineering is at the Las Vegas Motor Speedway in Las Vegas, Nevada, with his students from the Unmanned Systems Research Group (USRG), participating in the Indy Autonomous Challenge (IAC) @ CES as the only Asian team in the race.

Photo 1. Nine teams that competed at the first Indy Autonomous Challenge on October 23, 2021. (The KAIST team is the rightmost team in the front row)

- The EE USRG team earned its slot to race in the IAC @ CES 2023 as a semifinalist of the IAC @ CES 2022, held in January of last year

- Through the partnership with Hyundai Motor Company, USRG received support to participate in the competition, and is to share the latest developments and trends of the technology with the company researchers

- With upgrades from last year, USRG is to race with a high-speed Indy racing car capable of driving at up to 300 km/h, and the technology developed in the process is to be used to further advance high-speed autonomous vehicle technology.

KAIST (President Kwang Hyung Lee) announced on the 5th that it will participate in the “Indy Autonomous Challenge (IAC) @ CES 2023”, an official event of CES, the world's largest electronics and information technology exhibition, held every year in Las Vegas, Nevada, USA, this year from January 5th to 8th.

Photo 2. KAIST Racing Team participating in the Indy Autonomous Challenge @ CES 2023 (Team Leader: Sungwon Na, Team Members: Seongwoo Moon, Hyunwoo Nam, Chanhoe Ryu, Jaeyoung Kang)

“IAC @ CES 2023”, which is to be held at the Las Vegas Motor Speedway (LVMS) on January 7, seeks to advance the technology developed as a result of last year's competition and to share the results of such advanced high-speed autonomous vehicle technology with the public.

This competition is the fourth since the “Indy Autonomous Challenge (IAC)” was held for the first time in Indianapolis, USA, on October 23, 2021. At the IAC @ CES 2022, which followed the first IAC competition, the Unmanned Systems Research Group (USRG) team led by Professor David Hyunchul Shim advanced to the semifinals out of a total of nine teams and won a spot to participate in CES 2023. As a result, the USRG comes into the challenge as the only Asian team, competing with teams of students and researchers from American and European backgrounds, where the culture of motorsports is more deep-rooted.

For CES 2022, Professor David Hyunchul Shim’s research team successfully developed software that controlled the racing car so that it complied with the race flags and regulations while going up to 240 km/h, all on its own.

Photo 3. KAIST Team’s vehicle on Las Vegas Motor Speedway during the IAC @ CES 2022

In the IAC @ CES 2023, the official racing vehicle, the AV-23, is a converted version of the IL-15, the official racing car for Indy 500, fully automated while maintaining the optimal design for high-speed racing. It was upgraded from last year’s competition, raising the top speed to 300 km/h.

This year’s competition builds on last year’s head-to-head autonomous racing and takes the form of a single-elimination tournament in which the cars overtake one another without any restrictions on the driving course, so the team that consistently drives at the fastest speed will win the competition.

Photo 4. KAIST Team’s vehicle overtaking the vehicle of the Italian team, PoliMOVE, during one of the races in the IAC @ CES 2022

Professor Shim's team further developed the CES 2022 certified software to fine-tune the external recognition mechanisms, and is now focused on the precise positioning and driving control technology needed to maintain stability even when driving at high speed.

Professor Shim's research team won the Autonomous Driving Competition hosted by Hyundai Motor Company in 2021. Starting with this CES 2023 competition, they signed a partnership contract with Hyundai to receive financial support to participate in the CES competition and share the latest developments and trends of autonomous driving technology with Hyundai Motor's research team.

During CES 2023, the research team will also participate in other events such as the exhibition by the KAIST racing team at the IAC’s official booth located in the West Hall.

Professor David Hyunchul Shim said, “With these competitions being held overseas, there were many difficulties having to keep coming back, but the students took part in it diligently, for which I am deeply grateful. Thanks to their efforts, we were able to continue in this competition, which will be a way to verify the autonomous driving technology that we developed ourselves over the past 13 years, and I highly appreciate that.”

“While high-speed autonomous driving technology is not yet sought after in Korea, it can be applied most effectively for long-distance travel in Korea,” he went on to add. “It has huge advantages in that it does not require the construction of massive infrastructure that costs enormous amounts of money, such as high-speed rail or urban aviation, and with our design, it is minimally affected by weather conditions,” he emphasized.

On a different note, the IAC @ CES 2023 is co-hosted by the Consumer Technology Association (CTA), the organizer of CES, and Energy Systems Network (ESN). Last year’s IAC winner, Technische Universität München of Germany; MIT-PITT-RW, a team of the Massachusetts Institute of Technology (Massachusetts), the University of Pittsburgh (Pennsylvania), the Rochester Institute of Technology (New York), and the University of Waterloo (Canada); TII EuroRacing, a team of the University of Modena and Reggio Emilia (Italy) and the Technology Innovation Institute (United Arab Emirates); and five other teams are in the race for the win against KAIST.

Photo 5. KAIST Team’s vehicle on the track during the IAC @ CES 2022

The Indy Autonomous Challenge is scheduled to hold its fifth competition at the Monza track in Italy in June 2023 and the sixth competition at CES 2024.

2023.01.05 View 11614

EE Professor Youjip Won Elected as the President of Korean Institute of Information Scientists and Engineers for 2024

< Professor Youjip Won of KAIST School of Electrical Engineering >

Professor Youjip Won of the KAIST School of Electrical Engineering was elected on November 4th, 2022, as the President of the Korean Institute of Information Scientists and Engineers (KIISE) for the succeeding term for 2023. Professor Won will serve as the 39th President of KIISE for one year starting from Jan. 1, 2024. He is one of the leading experts on operating systems, with a particular emphasis on storage systems.

The Korean Institute of Information Scientists and Engineers (KIISE), one of the most prestigious Korean academic institutions in the field of computing and software, was founded in 1973 and has over 42,000 individual members and 437 special/group members. KIISE publishes 72 periodicals and holds 50 academic conferences annually.

2022.11.15 View 6995

Professor Shinhyun Choi’s team, selected for Nature Communications Editors’ highlight

[ From left, Ph.D. candidates See-On Park and Hakcheon Jeong, along with Master's student Jong-Yong Park and Professor Shinhyun Choi ]

See-On Park, Hakcheon Jeong, and Jong-Yong Park, a team of researchers under the leadership of Professor Shinhyun Choi of the School of Electrical Engineering, developed a highly reliable variable resistor (memristor) array that simulates the behavior of neurons using a metal oxide layer with an oxygen concentration gradient, and published their work in Nature Communications. The study was selected as a Nature Communications Editors’ Highlight and as the featured article on the main page of the journal's website.

Link : https://www.nature.com/ncomms/

[ Figure 1. The featured image on the main page of the Nature Communications' website introducing the research by Professor Choi's team on the memristor for artificial neurons ]

Paper title: Experimental demonstration of highly reliable dynamic memristor for artificial neuron and neuromorphic computing.

( https://doi.org/10.1038/s41467-022-30539-6 )

At KAIST, their research was introduced in the 2022 Fall issue of Breakthroughs, the biannual newsletter published by the KAIST College of Engineering.

This research was conducted with support from the Samsung Research Funding & Incubation Center of Samsung Electronics.

2022.11.01 View 10388

A New Family of Ducks joins the Feathery KAISTians

In October of this year, KAIST signed an 'Agreement for the Training Program for AI Semiconductor Designers' with Samsung Electronics, to conduct joint research and actively nurture master's and doctoral researchers in the field of semiconductors designed exclusively for AI devices. To celebrate this agreement for cooperation bound for mutual success, Samsung Electronics gifted a set of 5 ducks to KAIST.

The Duck Pond and the Geese have been representing KAIST as famous mascots.

It all started back in 2000, when the incumbent President, Kwang Hyung Lee, then a professor at the Department of Bio and Brain Engineering, first picked up a flock of ducks from Yuseong Market and started taking care of them on campus around the Carillon pond. While the ducks came and went, eventually being replaced with a flock of geese over time, for more than 20 years the feathery KAISTians have caught the eyes of passersby and have been loved by on-campus members and visitors alike.

The representative of Samsung Electronics (SEC) said that the flock of ducks, which are of a new breed, carries a message from SEC: the hope that, under the combined care of KAIST and SEC, PIM semiconductor technology will grow into a super-gap technology that turns heads and grabs the attention of the world as the mascot of Korea's technological prowess.

Would the ducks find KAIST likable? We will keep you informed of how they are doing!

2022.11.01 View 6721

Yuji Roh Awarded 2022 Microsoft Research PhD Fellowship

KAIST PhD candidate Yuji Roh of the School of Electrical Engineering (advisor: Prof. Steven Euijong Whang) was selected as a recipient of the 2022 Microsoft Research PhD Fellowship.

< KAIST PhD candidate Yuji Roh (advisor: Prof. Steven Euijong Whang) >

The Microsoft Research PhD Fellowship is a scholarship program that recognizes outstanding graduate students for their exceptional and innovative research in areas relevant to computer science and related fields. This year, 36 people from around the world received the fellowship, and Yuji Roh from KAIST EE is the only recipient from universities in Korea. Each selected fellow will receive a $10,000 scholarship and an opportunity to intern at Microsoft under the guidance of an experienced researcher.

Yuji Roh was named a fellow in the field of “Machine Learning” for her outstanding achievements in Trustworthy AI. Her research highlights include designing a state-of-the-art fair training framework using batch selection and developing novel algorithms for both fair and robust training. Her works have been presented at the top machine learning conferences ICML, ICLR, and NeurIPS among others. She also co-presented a tutorial on Trustworthy AI at the top data mining conference ACM SIGKDD. She is currently interning at the NVIDIA Research AI Algorithms Group developing large-scale real-world fair AI frameworks.

The list of fellowship recipients and the interview videos are available on the Microsoft webpage and YouTube.

The list of recipients: https://www.microsoft.com/en-us/research/academic-program/phd-fellowship/2022-recipients/

Interview (Global): https://www.youtube.com/watch?v=T4Q-XwOOoJc

Interview (Asia): https://www.youtube.com/watch?v=qwq3R1XU8UE

[Highlighted research achievements by Yuji Roh: Fair batch selection framework]

[Highlighted research achievements by Yuji Roh: Fair and robust training framework]

2022.10.28 View 15374

Shaping the AI Semiconductor Ecosystem

- As the marriage of AI and semiconductors is highlighted as a national strategic technology, KAIST's achievements in the related fields, built on top-class education and research capabilities that surpass those of peer universities around the world, set it far apart from the rest of the pack.

As AI semiconductors, systems of semiconductors designed specifically for the highly complex computation that AI needs for training and inference, stand out as a national strategic technology, the related achievements of KAIST, headed by President Kwang Hyung Lee, are also attracting attention. The Ministry of Science and ICT (MSIT) of Korea initiated a program last year to support the advancement of AI semiconductors, with the goal of capturing 20% of the global AI semiconductor market by 2030. This year, following industry-university-research discussions, the Ministry expanded the program with an additional 1.2 trillion won of investment over five years through the 'Support Plan for AI Semiconductor Industry Promotion'. Accordingly, major universities have begun putting together programs to train students in AI semiconductors.

KAIST has accumulated top-notch educational and research capabilities in the two core fields of AI semiconductors: semiconductors and artificial intelligence. In the field of semiconductors, the International Solid-State Circuits Conference (ISSCC) is the world's most prestigious conference on semiconductor integrated circuit design. Established in 1954, with more than 60% of its participants coming from companies including Samsung, Qualcomm, TSMC, and Intel, the conference naturally focuses on the practical value of research from an industrial point of view, earning it the nickname the ‘Semiconductor Design Olympics’. At a conference of such legacy and influence, KAIST has maintained a highly visible presence among participating universities, leading world-class schools such as the Massachusetts Institute of Technology (MIT) and Stanford in the number of accepted papers over the past 17 years.

Number of papers published at the International Solid-State Circuits Conference (ISSCC) in 2022, sorted by nation and by institution

Number of papers by universities presented at the International Solid-State Circuits Conference (ISSCC) in 2006~2022

In terms of the number of papers accepted at the ISSCC, KAIST has ranked among the top two universities every year since 2006. Looking at the average number of accepted papers over the past 17 years, KAIST stands out as the clear leader. The average number of KAIST papers accepted over the 17 years from 2006 through 2022 was 8.4, almost double that of competitors such as MIT (4.6) and UCLA (3.6). Within Korea, KAIST ranks second overall, behind only Samsung, the undisputed leader in semiconductor design. This year, KAIST also ranked first among universities participating in the Symposium on VLSI Technology and Circuits, an academic conference in the field of integrated circuits that rivals the ISSCC.
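As a quick check of the "almost double" comparison, the quoted averages can be divided directly. This is only a minimal sketch that uses the three figures given in the article; the dictionary and function names are illustrative and do not come from the source.

```python
# Average number of accepted ISSCC papers, 2006-2022, as quoted in the article.
avg_papers = {"KAIST": 8.4, "MIT": 4.6, "UCLA": 3.6}


def ratio_to(baseline: str, other: str) -> float:
    """Return how many times larger the baseline's average is than the other's."""
    return avg_papers[baseline] / avg_papers[other]


# Compare KAIST against each of the two named competitors.
for school in ("MIT", "UCLA"):
    print(f"KAIST / {school}: {ratio_to('KAIST', school):.2f}x")
```

With the quoted figures, the ratio against MIT comes out a little under two and the ratio against UCLA a little over two, which is consistent with the article's wording.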

Number of university papers accepted at the Symposium on VLSI Technology and Circuits in 2022

With KAIST researchers working and presenting new technologies at the frontiers of all key areas of the semiconductor industry, the quality of KAIST research is also maintained at the highest level. Professor Myoungsoo Jung's research team in the School of Electrical Engineering is actively working to develop a heterogeneous computing environment with high energy efficiency in response to the industry's demand for high performance at low power. In the field of materials, a research team led by Professor Byong-Guk Park of the Department of Materials Science and Engineering developed Spin Orbit Torque (SOT)-based Magnetic RAM (MRAM) that operates at least 10 times faster than conventional memories, suggesting a way to overcome the limitations of the existing 'von Neumann structure'.

While providing solutions to major challenges in the current semiconductor industry, KAIST is also actively pursuing the new technologies needed to gain an early lead in emerging fields of the industry. In Quantum Computing, which is attracting attention as a next-generation computing technology needed to take the lead in cryptography and nonlinear computation, Professor Sanghyeon Kim's research team in the School of Electrical Engineering presented the world's first 3D integrated quantum computing system at the 2021 VLSI Symposium. In Neuromorphic Computing, which is expected to bring remarkable advances in artificial intelligence by applying the principles of neurology, the research team of Professor Shinhyun Choi of the School of Electrical Engineering is developing a next-generation memristor that mimics neurons.

Number of papers at the International Conference on Machine Learning (ICML) and the Conference on Neural Information Processing Systems (NeurIPS), two of the world’s most prestigious conferences in the field of artificial intelligence (KAIST 6th in the world, 1st in Asia, in 2020)

The field of artificial intelligence has also grown rapidly. Based on the number of papers at the International Conference on Machine Learning (ICML) and the Conference on Neural Information Processing Systems (NeurIPS), two of the world's most prestigious conferences in the field of artificial intelligence, KAIST ranked 6th in the world and 1st in Asia in 2020. Since 2012, KAIST's ranking has risen steadily from 37th to 6th, climbing 31 places over eight years. In 2021, 129 papers, or about 40% of the Korean papers published at 11 top artificial intelligence conferences, were presented by KAIST. Thanks to KAIST's efforts, in 2021 Korea ranked sixth after the United States, China, the United Kingdom, Canada, and Germany in the number of papers published at global AI conferences.

Number of papers from Korea (and by KAIST) published at 11 top conferences in the field of artificial intelligence in 2021

In terms of content, KAIST's AI research is also at the forefront. Professor Hoi-Jun Yoo's research team in the School of Electrical Engineering compensated for the shortcomings of “edge networks” by implementing real-time AI learning networks on mobile devices. Realizing artificial intelligence requires data accumulation and a huge amount of computation. For this, a high-performance server handles the massive computation, while on the user terminal side an “edge network” collects data and performs simple computations. Professor Yoo's research greatly increased AI’s processing speed and performance by allotting the learning task to the user terminal as well.

In June, a research team led by Professor Min-Soo Kim of the School of Computing presented a solution that is essential for processing super-scale artificial intelligence models. The super-scale machine learning system developed by the research team is expected to achieve speeds up to 8.8 times faster than Google's TensorFlow or IBM's SystemDS, which are widely used in industry.

KAIST is also making remarkable achievements in the field of AI semiconductors. In 2020, Professor Minsoo Rhu's research team in the School of Electrical Engineering succeeded in developing the world's first AI semiconductor optimized for AI recommendation systems. Because an AI recommendation system has to handle vast amounts of content and user information, it quickly runs into an information bottleneck when it is run on a general-purpose artificial intelligence system. Professor Minsoo Rhu's team developed a semiconductor that is 21 times faster than existing systems by using 'Processing-In-Memory (PIM)' technology. PIM improves efficiency by performing calculations in 'RAM', or random-access memory, which is usually used only to store data temporarily just before it is processed. When PIM technology reaches the market, it is expected to greatly strengthen the competitiveness of Korean companies in the AI semiconductor market, as they already hold great strength in the memory area.

KAIST does not plan to be complacent with its achievements, and is making various plans to widen its lead over competitors in the fields of artificial intelligence, semiconductors, and AI semiconductors. Following the establishment of the first artificial intelligence research center in Korea in 1990, the Kim Jaechul AI Graduate School was opened in 2019 to sustain the supply of experts in the field. In 2020, the Artificial Intelligence Semiconductor System Research Center was launched to conduct convergent research on AI and semiconductors, followed by the establishment of the AI Institutes to promote “AI+X” research efforts.

Based on the internal capabilities accumulated through these efforts, KAIST is also working to train the human resources needed in these areas. KAIST has established joint research centers with companies such as Naver, while collaborating with local governments such as Hwaseong City to nurture professional manpower at the same time. In 2021, KAIST signed an agreement with Samsung Electronics to establish the Department of Semiconductor System Engineering and is preparing a new semiconductor specialist training program. The newly established department will select around 100 new students every year from 2023 and provide special scholarships to all of them so that they can develop their professional skills. In addition, through close cooperation with the industry, students will receive special support that includes field trips and internships at Samsung Electronics, joint workshops, and on-site training.

KAIST has made a significant contribution to the growth of the Korean semiconductor industry ecosystem, producing 25% of the doctorate holders working in the domestic semiconductor field and 20% of the CEOs with doctoral degrees at mid-sized and venture companies. With the AI semiconductor ecosystem now dawning, the key question is whether KAIST will reprise that pivotal role.

2022.08.05 View 13631
