Engineering

A 10-Month Journey of Tiny Flaps Completed: A Special Family Returns to KAIST Duck Pond

On the morning of June 9, 2025, gentle activity stirred early around the KAIST campus duck pond. It was the day a special family of ducks—and two goslings—were to be released back into the pond after spending a month in a temporary shelter. One by one, the ducklings cautiously emerged from their box, waddling toward the water's edge and scanning their surroundings, followed closely by their mother.

< The landscape manager from the KAIST Facilities Team releases the ducks and goslings. >

The mother duck, once a rescued loner who couldn’t integrate with the flock, returned triumphantly as the head of a new family—caring for both ducklings and goslings. Students and faculty looked on quietly, welcoming them back and reflecting on their remarkable 10-month journey.

The story began in July 2024, when a student reported spotting two ducklings wandering near the pond without a mother. Based on their soft down, flat beaks, and lack of fear around humans, it was presumed they had been abandoned. Professor Won Do Heo of the Department of Biological Sciences, affectionately known as the “Goose Dad,” and the KAIST Facilities Team quickly stepped in to rescue them. After about a month of care, the ducklings were released back into the pond.

< On June 9, the day of the release, KAIST President Kwang-Hyung Lee (left), the former “Goose Dad,” and Professor Won Do Heo (right), the current “Goose Dad,” watched the flock waddle about freely. >

At first, the ducklings seemed to adapt, but they started distancing themselves from the established goose flock. One eventually disappeared, and the remaining duckling was found injured by the pond during winter. Although KAIST typically avoids human interference in the natural ecosystem, an exception was made to save the young duck’s life. It was placed in the care of Professor Heo and the Facilities Team, and it regained its health within a month.

In the spring, the healed duck began laying eggs. Professor Heo supported the process by adjusting its diet, avoiding further intervention. On Children’s Day, May 5, the duck’s eggs hatched. The once-isolated duck had become a mother. Ten days later, on May 15, four goslings also hatched from the resident goose flock. With new life flourishing, the pond was more vibrant than ever.

< Rescued baby goslings near the pond, alongside the duck family that took them in. The mother duck—once a vulnerable duckling herself—had grown strong enough to care for others in need. >

But just days later, the mother goose disappeared, and two goslings, still unable to swim, were found shivering by the pond. Dahyeon Byeon, a student from Seoul National University who was visiting that day, spotted them and reported it, prompting another rescue. The vulnerable goslings were brought to the shelter to stay with the duck family.

Initially, the interspecies cohabitation was uneasy. But the mother duck did not reject the goslings. Slowly, they began to eat and sleep together, forming a new kind of family. After a month, they were released together into the pond—and to everyone’s surprise, the existing goose flock accepted both the goslings and the duck family.

< A peaceful moment for the duck family. The baby goslings naturally followed the mother duck. >

It took ten months for this family to return. From abandonment and injury to healing, birth, and unexpected bonds, this was more than a story of survival. It was a journey of transformation. The duck family’s ten-month saga is a quiet miracle—written in small moments of crisis, care, and connection—and a lasting memory on the KAIST campus.

< The resident goose flock at KAIST’s pond naturally accepted the returning duck and goslings as part of their group. >

2025.06.10 View 1091

KAIST Succeeds in Real-Time Carbon Dioxide Monitoring Without Batteries or External Power

< (From left) Master's Student Gyurim Jang, Professor Kyeongha Kwon >

KAIST (President Kwang Hyung Lee) announced on June 9th that a research team led by Professor Kyeongha Kwon from the School of Electrical Engineering, in a joint study with Professor Hanjun Ryu's team at Chung-Ang University, has developed a self-powered wireless carbon dioxide (CO2) monitoring system. This innovative system harvests fine vibrational energy from its surroundings to periodically measure CO2 concentrations.

This breakthrough addresses a critical need in environmental monitoring: accurately understanding "how much" CO2 is being emitted to combat climate change and global warming. While CO2 monitoring technology is key to this, existing systems largely rely on batteries or wired power systems, imposing limitations on installation and maintenance. The KAIST team tackled this by creating a self-powered wireless system that operates without external power.

The core of this new system is an "Inertia-driven Triboelectric Nanogenerator (TENG)" that converts vibrations (with amplitudes ranging from 20 to 4000 μm and frequencies from 0 to 300 Hz) generated by industrial equipment or pipelines into electricity. This enables periodic CO2 concentration measurements and wireless transmission without the need for batteries.
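For a sense of scale, displacement amplitude and frequency can be related to peak acceleration if the vibration is treated as a pure sinusoid. That idealization is an assumption made here, not something stated in the article, and the function names below are illustrative only. Under it, the peak acceleration is (2πf)² times the amplitude.

```python
import math

G0 = 9.80665  # standard gravity in m/s^2


def peak_acceleration_g(amplitude_um: float, freq_hz: float) -> float:
    """Peak acceleration (in g) of an ideal sinusoid x(t) = A*sin(2*pi*f*t)."""
    omega = 2.0 * math.pi * freq_hz            # angular frequency in rad/s
    return (omega ** 2) * (amplitude_um * 1e-6) / G0


def amplitude_um_for(accel_g: float, freq_hz: float) -> float:
    """Displacement amplitude (in micrometres) that yields the given peak acceleration."""
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    omega = 2.0 * math.pi * freq_hz
    return accel_g * G0 / (omega ** 2) * 1e6


if __name__ == "__main__":
    # Illustrative points inside the amplitude range quoted above (50 Hz is an arbitrary choice).
    for a_um, f_hz in [(20, 50), (4000, 50)]:
        print(f"{a_um} um at {f_hz} Hz -> {peak_acceleration_g(a_um, f_hz):.3f} g")
```

Under this sinusoidal assumption, the 13 Hz, 0.56 g test condition reported for the prototype corresponds to an amplitude of roughly 0.8 mm, which lies inside the stated 20 to 4000 μm range.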

< Figure 1. Concept and configuration of self-powered wireless CO2 monitoring system using fine vibration harvesting (a) System block diagram (b) Photo of fabricated system prototype >

The research team successfully amplified fine vibrations and induced resonance by combining spring-attached 4-stack TENGs. They achieved stable power production of 0.5 mW under conditions of 13 Hz and 0.56 g acceleration. The generated power was then used to operate a CO2 sensor and a Bluetooth Low Energy (BLE) system-on-a-chip (SoC).
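Why the measurements are periodic rather than continuous can be illustrated with a simple energy budget: the harvester has to accumulate enough energy for one sense-and-transmit cycle before the next one can run. The sketch below is a back-of-the-envelope model, not the team's design. Only the 0.5 mW figure comes from the article; the per-cycle energy, conversion efficiency, and standby power are hypothetical placeholders.

```python
def min_measurement_interval_s(
    harvested_power_w: float,
    energy_per_cycle_j: float,
    conversion_efficiency: float = 1.0,
    standby_power_w: float = 0.0,
) -> float:
    """Shortest sustainable interval between measurement cycles, in seconds.

    Energy stored per second is the harvested power times the conversion
    efficiency, minus whatever the electronics draw while idle.
    """
    usable_power_w = harvested_power_w * conversion_efficiency - standby_power_w
    if usable_power_w <= 0:
        raise ValueError("standby draw meets or exceeds the usable harvested power")
    return energy_per_cycle_j / usable_power_w


HARVESTED_POWER_W = 0.5e-3       # reported: 0.5 mW at 13 Hz and 0.56 g
ASSUMED_CYCLE_ENERGY_J = 30e-3   # hypothetical: one CO2 reading plus one BLE transmission
ASSUMED_EFFICIENCY = 0.7         # hypothetical: rectifier and regulator losses
ASSUMED_STANDBY_W = 5e-6         # hypothetical: sleep current of sensor and SoC

if __name__ == "__main__":
    interval = min_measurement_interval_s(
        HARVESTED_POWER_W, ASSUMED_CYCLE_ENERGY_J, ASSUMED_EFFICIENCY, ASSUMED_STANDBY_W
    )
    print(f"Minimum interval under these assumptions: {interval:.0f} s")
```

With different assumed values the interval changes proportionally, so the numbers here indicate only the shape of the trade-off, not the prototype's actual reporting rate.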

Professor Kyeongha Kwon emphasized, "For efficient environmental monitoring, a system that can operate continuously without power limitations is essential." She explained, "In this research, we implemented a self-powered system that can periodically measure and wirelessly transmit CO2 concentrations based on the energy generated from an inertia-driven TENG." She added, "This technology can serve as a foundational technology for future self-powered environmental monitoring platforms integrating various sensors."

< Figure 2. TENG energy harvesting-based wireless CO2 sensing system operation results (c) Experimental setup (d) Measured CO2 concentration results powered by TENG and conventional DC power source >

This research was published on June 1st in the internationally renowned academic journal Nano Energy (IF 16.8). Gyurim Jang, a master's student at KAIST, and Daniel Manaye Tiruneh, a master's student at Chung-Ang University, are the co-first authors of the paper.

* Paper Title: Highly compact inertia-driven triboelectric nanogenerator for self-powered wireless CO2 monitoring via fine-vibration harvesting
* DOI: 10.1016/j.nanoen.2025.110872

This research was supported by the Saudi Aramco-KAIST CO2 Management Center.

2025.06.09 View 46739

KAIST Professor Jee-Hwan Ryu Receives Global IEEE Robotics Journal Best Paper Award

- Professor Jee-Hwan Ryu of Civil and Environmental Engineering receives the Best Paper Award from the Institute of Electrical and Electronics Engineers (IEEE) Robotics Journal, officially presented at ICRA, a world-renowned robotics conference.

- This is the highest level of international recognition, awarded to only the top 5 papers out of approximately 1,500 published in 2024.

- Securing a new working channel technology for soft growing robots expands the practicality and application possibilities in the field of soft robotics.

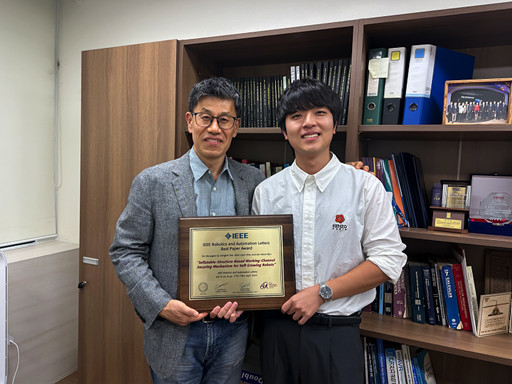

< Professor Jee-Hwan Ryu (left), Nam Gyun Kim, Ph.D. Candidate (right) from the KAIST Department of Civil and Environmental Engineering and KAIST Robotics Program >

KAIST (President Kwang-Hyung Lee) announced on the 6th that Professor Jee-Hwan Ryu from the Department of Civil and Environmental Engineering received the 2024 Best Paper Award from the Robotics and Automation Letters (RA-L), a premier journal under the IEEE, at the '2025 IEEE International Conference on Robotics and Automation (ICRA)' held in Atlanta, USA, on May 22nd.

This Best Paper Award is a prestigious honor presented to only the top 5 papers out of approximately 1,500 published in 2024, reflecting intense international competition.

The award-winning paper by Professor Ryu proposes a novel working channel securing mechanism that significantly expands the practicality and application possibilities of 'Soft Growing Robots,' which are based on soft materials that move or perform tasks through a growing motion similar to plant roots.

< IEEE Robotics Journal Award Ceremony >

Existing soft growing robots move by inflating or contracting their bodies through increasing or decreasing internal pressure, which can lead to blockages in their internal passages. In contrast, the newly developed soft growing robot achieves a growing function while maintaining the internal passage pressure equal to the external atmospheric pressure, thereby successfully securing an internal passage while retaining the robot's flexible and soft characteristics.

This structure allows various materials or tools to be freely delivered through the internal passage (working channel) within the robot and offers the advantage of performing multi-purpose tasks by flexibly replacing equipment according to the working environment.

The research team fabricated a prototype to prove the effectiveness of this technology and verified its performance through various experiments. Specifically, the slide plate experiment tested whether materials or equipment could pass through the robot's internal channel without obstruction, and the pipe pulling experiment tested whether a long pipe-shaped tool could be pulled through the internal channel.

< Figure 1. Overall hardware structure of the proposed soft growing robot (left) and a cross-sectional view composing the inflatable structure (right) >

Experimental results demonstrated that the internal channel remained stable even while the robot was growing, serving as a key basis for supporting the technology's practicality and scalability.

Professor Jee-Hwan Ryu stated, "This award is very meaningful as it signifies the global recognition of Korea's robotics technology and academic achievements. In particular, it is significant as technical progress that can greatly expand the practicality and application fields of soft growing robots. This achievement was possible thanks to the dedication and collaboration of the research team, and I will continue to contribute to the development of robotics technology through innovative research."

< Figure 2. Material supplying mechanism of the Soft Growing Robot >

This research was co-authored by Dongoh Seo, Ph.D. Candidate in Civil and Environmental Engineering, and Nam Gyun Kim, Ph.D. Candidate in Robotics. It was published in IEEE Robotics and Automation Letters on September 1, 2024.

(Paper Title: Inflatable-Structure-Based Working-Channel Securing Mechanism for Soft Growing Robots, DOI: 10.1109/LRA.2024.3426322)

This project was supported by both the Future Promising Convergence Technology Pioneer Research Project and the Mid-career Researcher Project of the National Research Foundation of Korea.

2025.06.09 View 1468

KAIST Introduces ‘Virtual Teaching Assistant’ That Can Answer Even in the Middle of the Night – Successful First Deployment in Classroom

- Research teams led by Prof. Yoonjae Choi (Kim Jaechul Graduate School of AI) and Prof. Hwajung Hong (Department of Industrial Design) at KAIST developed a Virtual Teaching Assistant (VTA) to support learning and class operations for a course with 477 students.

- The VTA responds 24/7 to students’ questions related to theory and practice by referencing lecture slides, coding assignments, and lecture videos.

- The system’s source code has been released to support future development of personalized learning support systems and their application in educational settings.

< Photo 1. (From left) PhD candidate Sunjun Kweon, Master's candidate Sooyohn Nam, PhD candidate Hyunseung Lim, Professor Hwajung Hong, Professor Yoonjae Choi >

“At first, I didn’t have high expectations for the Virtual Teaching Assistant (VTA), but it turned out to be extremely helpful—especially when I had sudden questions late at night, I could get immediate answers,” said Jiwon Yang, a Ph.D. student at KAIST. “I was also able to ask questions I would’ve hesitated to bring up with a human TA, which led me to ask even more and ultimately improved my understanding of the course.”

KAIST (President Kwang Hyung Lee) announced on June 5th that a joint research team led by Prof. Yoonjae Choi of the Kim Jaechul Graduate School of AI and Prof. Hwajung Hong of the Department of Industrial Design has successfully developed and deployed a Virtual Teaching Assistant (VTA) that provides personalized feedback to individual students even in large-scale classes.

This study marks one of the first large-scale, real-world deployments in Korea. The VTA was introduced in the “Programming for Artificial Intelligence” course at the KAIST Kim Jaechul Graduate School of AI, taken by 477 master’s and Ph.D. students during the Fall 2024 semester, to evaluate its effectiveness and practical applicability in an actual educational setting.

The AI teaching assistant developed in this study is a course-specialized agent, distinct from general-purpose tools like ChatGPT or conventional chatbots. The research team implemented a Retrieval-Augmented Generation (RAG) architecture, which automatically vectorizes a large volume of course materials—including lecture slides, coding assignments, and video lectures—and uses them as the basis for answering students’ questions.
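The indexing step of such a pipeline can be sketched as follows. This is a toy illustration in Python, not the team's released code. It assumes the course materials have already been converted to plain text, and it substitutes simple word-count vectors for the learned embeddings and vector database a production system would typically use. The names `Chunk`, `chunk_words`, and `build_index` are hypothetical.

```python
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Chunk:
    source: str      # which material the text came from (e.g. a slide deck name)
    text: str        # the chunk itself
    vector: Counter  # toy "embedding": term counts


def tokenize(text: str) -> list[str]:
    """Lowercase and split on anything that is not a letter or digit."""
    return re.findall(r"[a-z0-9]+", text.lower())


def chunk_words(text: str, size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into windows of `size` words that overlap by `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    stop = max(len(words) - overlap, 1)  # avoid a trailing chunk made only of overlap
    return [" ".join(words[i:i + size]) for i in range(0, stop, step)]


def build_index(materials: dict[str, str]) -> list[Chunk]:
    """Chunk every document and attach a term-count vector to each chunk."""
    index = []
    for source, text in materials.items():
        for piece in chunk_words(text):
            index.append(Chunk(source, piece, Counter(tokenize(piece))))
    return index


if __name__ == "__main__":
    demo = {"lecture_03_slides": "Gradient descent updates the weights using the gradient of the loss."}
    for chunk in build_index(demo):
        print(chunk.source, "->", chunk.vector.most_common(3))
```

Overlapping windows are a common design choice so that a sentence split across a chunk boundary still appears intact in at least one chunk.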

< Photo 2. Teaching Assistant demonstrating to the student how the Virtual Teaching Assistant works >

When a student asks a question, the system searches for the most relevant course materials in real time based on the context of the query, and then generates a response. This process is not merely a simple call to a large language model (LLM), but rather a material-grounded question answering system tailored to the course content—ensuring both high reliability and accuracy in learning support.
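The query-time flow described above (retrieve relevant material, then generate an answer grounded in it) can be illustrated with a minimal Python sketch. It is an assumption-laden toy rather than the deployed system: similarity is plain cosine over word counts, the prompt wording is invented, and the final call to a language model is deliberately left out. All function names are hypothetical.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the question."""
    q_vec = vectorize(question)
    ranked = sorted(chunks, key=lambda c: cosine(q_vec, vectorize(c)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context: list[str]) -> str:
    """Assemble a grounded prompt; sending it to an LLM is outside this sketch."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the course material below.\n"
        f"Course material:\n{joined}\n"
        f"Question: {question}\n"
    )


if __name__ == "__main__":
    course_chunks = [
        "Backpropagation computes gradients with the chain rule.",
        "Office hours are on Tuesday afternoon.",
        "A tensor is a multi-dimensional array in PyTorch.",
    ]
    q = "How does backpropagation use the chain rule?"
    print(build_prompt(q, retrieve(q, course_chunks, k=1)))
```

Restricting the prompt to retrieved course material is what distinguishes this pattern from a bare LLM call: the model is asked to answer from the course content rather than from its general knowledge.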

Sunjun Kweon, the first author of the study and head teaching assistant for the course, explained, “Previously, TAs were overwhelmed with repetitive and basic questions—such as concepts already covered in class or simple definitions—which made it difficult to focus on more meaningful inquiries.” He added, “After introducing the VTA, students began to reduce repeated questions and focus on more essential ones. As a result, the burden on TAs was significantly reduced, allowing us to concentrate on providing more advanced learning support.”

In fact, compared to the previous year’s course, the number of questions that required direct responses from human TAs decreased by approximately 40%.

< Photo 3. A student working with VTA. >

The VTA, which was operated over a 14-week period, was actively used by more than half of the enrolled students, with a total of 3,869 Q&A interactions recorded. Notably, students without a background in AI or with limited prior knowledge tended to use the VTA more frequently, indicating that the system provided practical support as a learning aid, especially for those who needed it most.

The analysis also showed that students asked the VTA about theoretical concepts more frequently than they asked human TAs. This suggests that the AI teaching assistant created an environment where students felt free to ask questions without fear of judgment or discomfort, thereby encouraging more active engagement in the learning process.

According to surveys conducted before, during, and after the course, students reported increased trust, response relevance, and comfort with the VTA over time. In particular, students who had previously hesitated to ask human TAs questions showed higher levels of satisfaction when interacting with the AI teaching assistant.

< Figure 1. Internal structure of the AI Teaching Assistant (VTA) applied in this course. It follows a Retrieval-Augmented Generation (RAG) structure that builds a vector database from course materials (PDFs, recorded lectures, coding practice materials, etc.), searches for relevant documents based on student questions and conversation history, and then generates responses based on them. >

Professor Yoonjae Choi, the lead instructor of the course and principal investigator of the study, stated, “The significance of this research lies in demonstrating that AI technology can provide practical support to both students and instructors. We hope to see this technology expanded to a wider range of courses in the future.”

The research team has released the system’s source code on GitHub, enabling other educational institutions and researchers to develop their own customized learning support systems and apply them in real-world classroom settings.

< Figure 2. Initial screen of the AI Teaching Assistant (VTA) introduced in the "Programming for AI" course. It shows simple usage guidelines and asks for a student ID, so that only registered students can use the system and indiscriminate external access is blocked. >

The related paper, titled “A Large-Scale Real-World Evaluation of an LLM-Based Virtual Teaching Assistant,” was accepted on May 9, 2025, to the Industry Track of ACL 2025, one of the most prestigious international conferences in the field of Natural Language Processing (NLP), recognizing the excellence of the research.

< Figure 3. Example conversation with the AI Teaching Assistant (VTA). When a student inputs a class-related question, the system internally searches for relevant class materials and then generates an answer based on them. In this way, VTA provides learning support by reflecting class content in context. >

This research was conducted with the support of the KAIST Center for Teaching and Learning Innovation, the National Research Foundation of Korea, and the National IT Industry Promotion Agency.

2025.06.05 View 1301

KAIST Introduces ‘Virtual Teaching Assistant’ That can Answer Even in the Middle of the Night – Successful First Deployment in Classroom

- Research teams led by Prof. Yoonjae Choi (Kim Jaechul Graduate School of AI) and Prof. Hwajeong Hong (Department of Industrial Design) at KAIST developed a Virtual Teaching Assistant (VTA) to support learning and class operations for a course with 477 students.

- The VTA responds 24/7 to students’ questions related to theory and practice by referencing lecture slides, coding assignments, and lecture videos.

- The system’s source code has been released to support future development of personalized learning support systems and their application in educational settings.

< Photo 1. (From left) PhD candidate Sunjun Kweon, Master's candidate Sooyohn Nam, PhD candidate Hyunseung Lim, Professor Hwajung Hong, Professor Yoonjae Choi >

“At first, I didn’t have high expectations for the Virtual Teaching Assistant (VTA), but it turned out to be extremely helpful—especially when I had sudden questions late at night, I could get immediate answers,” said Jiwon Yang, a Ph.D. student at KAIST. “I was also able to ask questions I would’ve hesitated to bring up with a human TA, which led me to ask even more and ultimately improved my understanding of the course.”

KAIST (President Kwang Hyung Lee) announced on June 5th that a joint research team led by Prof. Yoonjae Choi of the Kim Jaechul Graduate School of AI and Prof. Hwajeong Hong of the Department of Industrial Design has successfully developed and deployed a Virtual Teaching Assistant (VTA) that provides personalized feedback to individual students even in large-scale classes.

This study marks one of the first large-scale, real-world deployments in Korea, where the VTA was introduced in the “Programming for Artificial Intelligence” course at the KAIST Kim Jaechul Graduate School of AI, taken by 477 master’s and Ph.D. students during the Fall 2024 semester, to evaluate its effectiveness and practical applicability in an actual educational setting.

The AI teaching assistant developed in this study is a course-specialized agent, distinct from general-purpose tools like ChatGPT or conventional chatbots. The research team implemented a Retrieval-Augmented Generation (RAG) architecture, which automatically vectorizes a large volume of course materials—including lecture slides, coding assignments, and video lectures—and uses them as the basis for answering students’ questions.

< Photo 2. A teaching assistant demonstrating to a student how the Virtual Teaching Assistant works >

When a student asks a question, the system searches for the most relevant course materials in real time based on the context of the query, and then generates a response. This process is not merely a simple call to a large language model (LLM), but rather a material-grounded question answering system tailored to the course content—ensuring both high reliability and accuracy in learning support.
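The retrieve-then-generate flow described above can be sketched in a few lines of Python. This is a minimal illustration, not the team's released code: the three course snippets, the bag-of-words `embed` function, and the prompt wording are all hypothetical stand-ins, and the final call to a language model is left out.

```python
import math
import re
from collections import Counter

# Hypothetical course snippets standing in for slides, assignments, and video transcripts.
COURSE_MATERIALS = {
    "slides_week3": "Gradient descent updates parameters by stepping against the gradient of the loss.",
    "assignment_2": "Implement a dataloader that batches and shuffles the training examples.",
    "video_week5": "Attention computes a weighted sum of value vectors using query and key similarity.",
}

def embed(text):
    """Toy embedding: a bag-of-words count vector (a real system would use a learned encoder)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

# Build the "vector database" once, ahead of time.
INDEX = {doc_id: embed(text) for doc_id, text in COURSE_MATERIALS.items()}

def retrieve(question, history=(), top_k=1):
    """Rank materials by similarity to the question plus recent conversation history."""
    query = embed(" ".join([*history, question]))
    ranked = sorted(INDEX, key=lambda doc_id: cosine(query, INDEX[doc_id]), reverse=True)
    return ranked[:top_k]

def build_prompt(question, history=()):
    """Assemble the grounded prompt that would be sent to an LLM (the LLM call is omitted)."""
    doc_ids = retrieve(question, history)
    context = "\n".join(f"[{d}] {COURSE_MATERIALS[d]}" for d in doc_ids)
    return f"Answer using only the course materials below.\n{context}\nQuestion: {question}"
```

Grounding the prompt in retrieved material is what separates this pattern from a plain LLM call: the model is asked to answer from the course content rather than from its general knowledge.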

Sunjun Kweon, the first author of the study and head teaching assistant for the course, explained, “Previously, TAs were overwhelmed with repetitive and basic questions, such as concepts already covered in class or simple definitions, which made it difficult to focus on more meaningful inquiries.” He added, “After introducing the VTA, students asked fewer repeated questions and focused on more essential ones. As a result, the burden on TAs was significantly reduced, allowing us to concentrate on providing more advanced learning support.”

In fact, compared to the previous year’s course, the number of questions that required direct responses from human TAs decreased by approximately 40%.

< Photo 3. A student working with VTA. >

The VTA, which was operated over a 14-week period, was actively used by more than half of the enrolled students, with a total of 3,869 Q&A interactions recorded. Notably, students without a background in AI or with limited prior knowledge tended to use the VTA more frequently, indicating that the system provided practical support as a learning aid, especially for those who needed it most.

The analysis also showed that students asked the VTA about theoretical concepts more often than they asked human TAs. This suggests that the AI teaching assistant created an environment where students felt free to ask questions without fear of judgment or discomfort, thereby encouraging more active engagement in the learning process.

According to surveys conducted before, during, and after the course, students reported increased trust, response relevance, and comfort with the VTA over time. In particular, students who had previously hesitated to ask human TAs questions showed higher levels of satisfaction when interacting with the AI teaching assistant.

< Figure 1. Internal structure of the AI Teaching Assistant (VTA) applied in this course. It follows a Retrieval-Augmented Generation (RAG) structure that builds a vector database from course materials (PDFs, recorded lectures, coding practice materials, etc.), searches for relevant documents based on student questions and conversation history, and then generates responses based on them. >

Professor Yoonjae Choi, the lead instructor of the course and principal investigator of the study, stated, “The significance of this research lies in demonstrating that AI technology can provide practical support to both students and instructors. We hope to see this technology expanded to a wider range of courses in the future.”

The research team has released the system’s source code on GitHub, enabling other educational institutions and researchers to develop their own customized learning support systems and apply them in real-world classroom settings.

< Figure 2. Initial screen of the AI Teaching Assistant (VTA) introduced in the "Programming for AI" course. It shows brief usage guidelines and asks for a student ID, so that only registered students can use the system and indiscriminate external access is blocked. >

The related paper, titled “A Large-Scale Real-World Evaluation of an LLM-Based Virtual Teaching Assistant,” was accepted on May 9, 2025, to the Industry Track of ACL 2025, one of the most prestigious international conferences in the field of Natural Language Processing (NLP), recognizing the excellence of the research.

< Figure 3. Example conversation with the AI Teaching Assistant (VTA). When a student inputs a class-related question, the system internally searches for relevant class materials and then generates an answer based on them. In this way, VTA provides learning support by reflecting class content in context. >

This research was conducted with the support of the KAIST Center for Teaching and Learning Innovation, the National Research Foundation of Korea, and the National IT Industry Promotion Agency.

2025.06.05 View 1301 -

KAIST Research Team Develops Electronic Ink for Room-Temperature Printing of High-Resolution, Variable-Stiffness Electronics

A team of researchers from KAIST and Seoul National University has developed a groundbreaking electronic ink that enables room-temperature printing of variable-stiffness circuits capable of switching between rigid and soft modes. This advancement marks a significant leap toward next-generation wearable, implantable, and robotic devices.

< Photo 1. (From left) Professor Jae-Woong Jeong and PhD candidate Simok Lee of the School of Electrical Engineering, (in separate bubbles, from left) Professor Gun-Hee Lee of Pusan National University, Professor Seongjun Park of Seoul National University, Professor Steve Park of the Department of Materials Science and Engineering>

Variable-stiffness electronics are at the forefront of adaptive technology, offering the ability for a single device to transition between rigid and soft modes depending on its use case. Gallium, a metal known for its high rigidity contrast between solid and liquid states, is a promising candidate for such applications. However, its use has been hindered by challenges including high surface tension, low viscosity, and undesirable phase transitions during manufacturing.

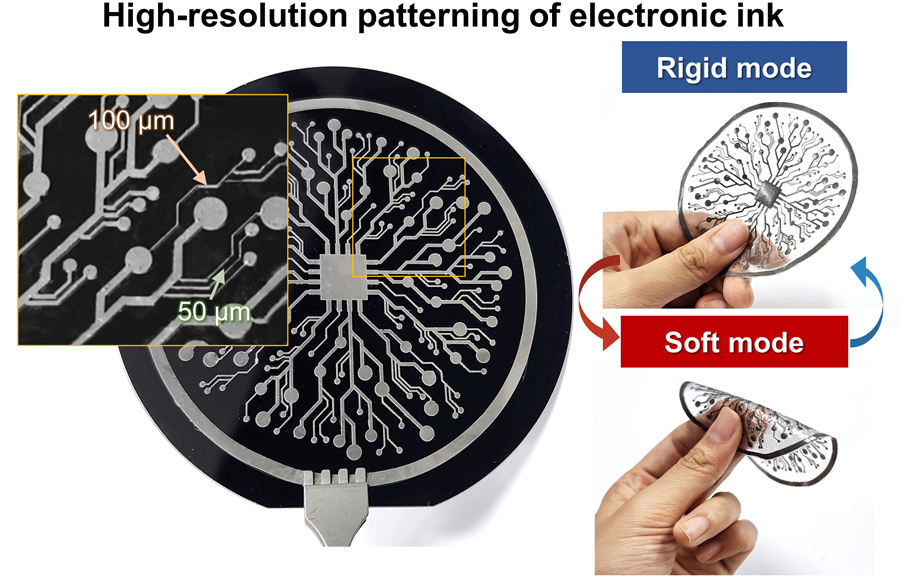

On June 4th, a research team led by Professor Jae-Woong Jeong from the School of Electrical Engineering at KAIST, Professor Seongjun Park from the Digital Healthcare Major at Seoul National University, and Professor Steve Park from the Department of Materials Science and Engineering at KAIST introduced a novel liquid metal electronic ink. This ink allows for micro-scale circuit printing – thinner than a human hair – at room temperature, with the ability to reversibly switch between rigid and soft modes depending on temperature.

The new ink combines printable viscosity with excellent electrical conductivity, enabling the creation of complex, high-resolution multilayer circuits comparable to commercial printed circuit boards (PCBs). These circuits can dynamically change stiffness in response to temperature, presenting new opportunities for multifunctional electronics, medical technologies, and robotics.

Conventional electronics typically have fixed form factors – either rigid for durability or soft for wearability. Rigid devices like smartphones and laptops offer robust performance but are uncomfortable when worn, while soft electronics are more comfortable but lack precise handling. As demand grows for devices that can adapt their stiffness to context, variable-stiffness electronics are becoming increasingly important.

< Figure 1. Fabrication process of stable, high-viscosity electronic ink by dispersing micro-sized gallium particles in a polymer matrix (left). High-resolution large-area circuit printing process through pH-controlled chemical sintering (right). >

To address this challenge, the researchers focused on gallium, which melts just below body temperature. Solid gallium is quite stiff, while its liquid form is fluid and soft. Despite its potential, gallium’s use in electronic printing has been limited by its high surface tension and instability when melted.

To overcome these issues, the team developed a pH-controlled liquid metal ink printing process. By dispersing micro-sized gallium particles into a hydrophilic polyurethane matrix using a neutral solvent (dimethyl sulfoxide, or DMSO), they created a stable, high-viscosity ink suitable for precision printing. During post-print heating, the DMSO decomposes to form an acidic environment, which removes the oxide layer on the gallium particles. This triggers the particles to coalesce into electrically conductive networks with tunable mechanical properties.

The resulting printed circuits exhibit fine feature sizes (~50 μm), high conductivity (2.27 × 10⁶ S/m), and a stiffness modulation ratio of up to 1,465 – allowing the material to shift from plastic-like rigidity to rubber-like softness. Furthermore, the ink is compatible with conventional printing techniques such as screen printing and dip coating, supporting large-area and 3D device fabrication.
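To give a feel for what the reported conductivity means in practice, the sketch below estimates the resistance of a single printed trace with the standard formula R = L / (σ · w · t). The conductivity and the 50 μm width come from the figures above. The 10 mm length and 20 μm thickness are assumptions chosen only for illustration, as is the uniform rectangular cross-section.

```python
CONDUCTIVITY_S_PER_M = 2.27e6   # conductivity reported in the article
TRACE_WIDTH_M = 50e-6           # reported feature size (about 50 micrometers)

def trace_resistance(length_m, thickness_m, width_m=TRACE_WIDTH_M, sigma=CONDUCTIVITY_S_PER_M):
    """Resistance of a uniform rectangular trace: R = L / (sigma * w * t)."""
    if min(length_m, thickness_m, width_m, sigma) <= 0:
        raise ValueError("all dimensions and the conductivity must be positive")
    return length_m / (sigma * width_m * thickness_m)

# Hypothetical trace: 10 mm long and 20 micrometers thick (both values are assumptions).
r_ohm = trace_resistance(length_m=10e-3, thickness_m=20e-6)
print(f"{r_ohm:.2f} ohm")  # prints "4.41 ohm"
```

Under these assumed dimensions, a centimeter-long trace at the finest reported width stays in the range of a few ohms.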

< Figure 2. Key features of the electronic ink. (i) High-resolution printing and multilayer integration capability. (ii) Batch fabrication capability through large-area screen printing. (iii) Complex three-dimensional structure printing capability through dip coating. (iv) Excellent electrical conductivity and stiffness control capability.>

The team demonstrated this technology by developing a multi-functional device that operates as a rigid portable electronic under normal conditions but transforms into a soft wearable healthcare device when attached to the body. They also created a neural probe that remains stiff during surgical insertion for accurate positioning but softens once inside brain tissue to reduce inflammation – highlighting its potential for biomedical implants.

< Figure 3. Variable stiffness wearable electronics with high-resolution circuits and multilayer structure comparable to commercial printed circuit boards (PCBs). Functions as a rigid portable electronic device at room temperature, then transforms into a wearable healthcare device by softening at body temperature upon skin contact.>

“The core achievement of this research lies in overcoming the longstanding challenges of liquid metal printing through our innovative technology,” said Professor Jeong. “By controlling the ink’s acidity, we were able to electrically and mechanically connect printed gallium particles, enabling the room-temperature fabrication of high-resolution, large-area circuits with tunable stiffness. This opens up new possibilities for future personal electronics, medical devices, and robotics.”

< Figure 4. Body-temperature softening neural probe implemented by coating electronic ink on an optical waveguide structure (left). It remains rigid during surgery for precise manipulation and brain insertion, then softens after implantation to minimize mechanical stress on the brain and greatly enhance biocompatibility (right). >

This research was published in Science Advances under the title, “Phase-Change Metal Ink with pH-Controlled Chemical Sintering for Versatile and Scalable Fabrication of Variable Stiffness Electronics.” The work was supported by the National Research Foundation of Korea, the Boston-Korea Project, and the BK21 FOUR Program.

2025.06.04 View 1795 -

RAIBO Runs over Walls with Feline Agility... Ready for Effortless Search over Mountainous and Rough Terrains

< Photo 1. Research Team Photo (Professor Jemin Hwangbo, second from right in the front row) >

KAIST's quadrupedal robot, RAIBO, can now move at high speed across discontinuous and complex terrains such as stairs, gaps, walls, and debris. It has demonstrated its ability to run on vertical walls, leap over 1.3-meter-wide gaps, sprint at approximately 14.4 km/h over stepping stones, and move quickly and nimbly on terrain combining 30° slopes, stairs, and stepping stones. RAIBO is expected to be deployed soon for practical missions such as disaster site exploration and mountain searches.

Professor Jemin Hwangbo's research team in the KAIST Department of Mechanical Engineering announced on June 3rd that it has developed a quadrupedal robot navigation framework capable of high-speed locomotion at 14.4 km/h (4 m/s) even on discontinuous and complex terrains such as walls, stairs, and stepping stones.

The research team developed a quadrupedal navigation system that enables the robot to reach its target destination quickly and safely in complex and discontinuous terrain.

To achieve this, they approached the problem by breaking it down into two stages: first, developing a planner for planning foothold positions, and second, developing a tracker to accurately follow the planned foothold positions.

First, the planner module quickly searches for physically feasible foothold positions using a sampling-based optimization method with neural network-based heuristics and verifies the optimal path through simulation rollouts.

While existing methods considered various factors such as contact timing and robot posture in addition to foothold positions, this research significantly reduced computational complexity by restricting the search space to foothold positions alone. Inspired by the way cats walk, the team also introduced a structure in which the hind feet step on the same spots as the front feet, which reduced the computational complexity further.
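The idea of searching over foothold positions only, and letting the hind feet reuse the front footholds, can be illustrated with a toy one-dimensional planner. Everything here is a hypothetical simplification: the terrain intervals, the reach limit, and the "most forward progress" rule (standing in for the neural-network heuristic and simulation rollouts) are assumptions, not the published method.

```python
import random

# Hypothetical 1D terrain: intervals (start, end) in meters where a foot may land.
SAFE_INTERVALS = [(0.0, 1.0), (1.6, 2.2), (2.9, 3.4), (4.0, 6.0)]
MAX_STEP_M = 0.9  # assumed reach limit between consecutive footholds

def is_safe(x):
    """True if position x lies on a surface the foot can stand on."""
    return any(lo <= x <= hi for lo, hi in SAFE_INTERVALS)

def plan_front_footholds(start, goal, samples=200, seed=0):
    """Greedy sampling-based search over foothold positions only."""
    rng = random.Random(seed)
    path = [start]
    while path[-1] < goal:
        # Sample candidate landing points within reach of the current foothold.
        candidates = [path[-1] + rng.uniform(0.1, MAX_STEP_M) for _ in range(samples)]
        feasible = [x for x in candidates if is_safe(x)]
        if not feasible:
            return None  # no reachable safe foothold from here
        path.append(max(feasible))  # stand-in heuristic: prefer the most forward progress
    return path

def hind_footholds(front_path, lag=1):
    """Cat-like gait: each hind foot reuses a foothold the front foot already used."""
    return front_path[:-lag] if lag else list(front_path)

front = plan_front_footholds(start=0.0, goal=5.0)
hind = hind_footholds(front)
```

Because the hind footholds are copied from the front ones, the planner never searches for them separately, which is the source of the reduction in the search space.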

< Figure 1. High-speed navigation across various discontinuous terrains >

Second, the tracker module is trained to step accurately on the planned positions, using a generative model that competes with it to supply training environments of appropriate difficulty.

The tracker is trained through reinforcement learning to step accurately on the planned footholds, and during this process, a generative model called the 'map generator' provides the target distribution.

This generative model is trained simultaneously and adversarially with the tracker to allow the tracker to progressively adapt to more challenging difficulties. Subsequently, a sampling-based planner was designed to generate feasible foothold plans that can reflect the characteristics and performance of the trained tracker.
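The interplay between the tracker and the map generator can be pictured with a deliberately simple numerical toy. It is not reinforcement learning and not the team's training code: `success_rate`, the learning rates, and the target success rate of 0.5 are all assumptions. The loop only shows how a generator that keeps tasks near the edge of the tracker's ability pulls difficulty and skill upward together.

```python
def success_rate(skill, difficulty):
    """Toy tracker model: success falls linearly as difficulty exceeds skill."""
    return max(0.0, min(1.0, 1.0 - (difficulty - skill)))

def co_train(rounds=50, target=0.5, gen_lr=0.5, skill_gain=0.05):
    """Alternate generator and tracker updates and record (skill, difficulty) per round."""
    skill, difficulty = 0.0, 0.0
    history = []
    for _ in range(rounds):
        rate = success_rate(skill, difficulty)
        # Generator step: raise difficulty when the tracker succeeds too often, lower it otherwise.
        difficulty = max(0.0, difficulty + gen_lr * (rate - target))
        # Tracker step: learning is fastest on tasks that are neither trivial nor impossible.
        skill += skill_gain * 4.0 * rate * (1.0 - rate)
        history.append((skill, difficulty))
    return history

history = co_train()
```

In this toy, the generator never jumps straight to the hardest terrain. It raises difficulty only as fast as the tracker's success rate allows, which mirrors the progressive adaptation described above.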

This hierarchical structure showed superior performance in both planning speed and stability compared to existing techniques, and experiments proved its high-speed locomotion capabilities across various obstacles and discontinuous terrains, as well as its general applicability to unseen terrains.

Professor Jemin Hwangbo stated, "We approached the problem of high-speed navigation in discontinuous terrain, which previously required a significantly large amount of computation, from the simple perspective of how to select the foothold positions. Inspired by the way a cat places its paws, allowing the hind feet to step where the front feet stepped drastically reduced computation. We expect this to significantly expand the range of discontinuous terrain that walking robots can overcome and enable them to traverse it at high speeds, contributing to the robot's ability to perform practical missions such as disaster site exploration and mountain searches."

This research achievement was published in the May 2025 issue of the international journal Science Robotics.

Paper Title: High-speed control and navigation for quadrupedal robots on complex and discrete terrain (https://www.science.org/doi/10.1126/scirobotics.ads6192)

YouTube Link: https://youtu.be/EZbM594T3c4?si=kfxLF2XnVUvYVIyk

2025.06.04 View 2096 -

Professor Hyun Myung's Team Wins First Place in a Challenge at ICRA by IEEE

< Photo 1. (From left) Daebeom Kim (Team Leader, Ph.D. student), Seungjae Lee (Ph.D. student), Seoyeon Jang (Ph.D. student), Jei Kong (Master's student), Professor Hyun Myung >

A team of the Urban Robotics Lab, led by Professor Hyun Myung from the KAIST School of Electrical Engineering, achieved a remarkable first-place overall victory in the Nothing Stands Still Challenge (NSS Challenge) 2025, held at the 2025 IEEE International Conference on Robotics and Automation (ICRA), the world's most prestigious robotics conference, from May 19 to 23 in Atlanta, USA.

The NSS Challenge was co-hosted by HILTI, a global construction company based in Liechtenstein, and Stanford University's Gradient Spaces Group. It is an expanded version of the HILTI SLAM (Simultaneous Localization and Mapping)* Challenge, which has been held since 2021, and is considered one of the most prominent challenges at 2025 IEEE ICRA.

*SLAM: Refers to Simultaneous Localization and Mapping, a technology where robots, drones, autonomous vehicles, etc., determine their own position and simultaneously create a map of their surroundings.

< Photo 2. A scene from the oral presentation on the winning team's technology (Speakers: Seungjae Lee and Seoyeon Jang, Ph.D. candidates of KAIST School of Electrical Engineering) >

This challenge primarily evaluates how accurately and robustly LiDAR scan data, collected at various times, can be registered in situations with frequent structural changes, such as construction and industrial environments. In particular, it is regarded as a highly technical competition because it deals with multi-session localization and mapping (Multi-session SLAM) technology that responds to structural changes occurring over multiple timeframes, rather than just single-point registration accuracy.

The Urban Robotics Lab team secured first place overall, surpassing National Taiwan University (3rd place) and Northwestern Polytechnical University of China (2nd place) by a significant margin, with their unique localization and mapping technology that solves the problem of registering LiDAR data collected across multiple times and spaces. The winning team will be awarded a prize of $4,000.

< Figure 1. Example of Multiway-Registration for Registering Multiple Scans >

The Urban Robotics Lab team independently developed a multiway-registration framework that can robustly register multiple scans even without prior connection information. This framework consists of an algorithm for summarizing feature points within scans and finding correspondences (CubicFeat), an algorithm for performing global registration based on the found correspondences (Quatro), and an algorithm for refining results based on change detection (Chamelion). This combination of technologies ensures stable registration performance based on fixed structures, even in highly dynamic industrial environments.
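The step of registering two scans once correspondences are known can be illustrated with the textbook closed-form least-squares alignment in two dimensions. This is a generic sketch, not Quatro or any other part of the team's pipeline: it assumes the matched point pairs are already given and contain no outliers, and the function names are hypothetical.

```python
import math

def estimate_rigid_2d(src, dst):
    """Least-squares rotation angle and translation mapping src points onto dst points."""
    if len(src) != len(dst) or len(src) < 2:
        raise ValueError("need at least two matched point pairs")
    n = len(src)
    # Centroids of both point sets.
    cx_s = sum(x for x, _ in src) / n
    cy_s = sum(y for _, y in src) / n
    cx_d = sum(x for x, _ in dst) / n
    cy_d = sum(y for _, y in dst) / n
    # Accumulate dot and cross terms of the centered correspondences.
    dot = cross = 0.0
    for (xs, ys), (xd, yd) in zip(src, dst):
        ax, ay = xs - cx_s, ys - cy_s
        bx, by = xd - cx_d, yd - cy_d
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation that carries the rotated source centroid onto the target centroid.
    tx = cx_d - (c * cx_s - s * cy_s)
    ty = cy_d - (s * cx_s + c * cy_s)
    return theta, (tx, ty)

def apply_rigid_2d(theta, t, points):
    """Rotate points by theta and shift them by t."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + t[0], s * x + c * y + t[1]) for x, y in points]
```

Real LiDAR registration works in three dimensions and has to cope with wrong matches and with structures that moved between sessions, which is where the robust estimation and change-detection stages named above come in.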

< Figure 2. Example of Change Detection Using the Chamelion Algorithm>

LiDAR scan registration technology is a core component of SLAM (Simultaneous Localization And Mapping) in various autonomous systems such as autonomous vehicles, autonomous robots, autonomous walking systems, and autonomous flying vehicles.

Professor Hyun Myung of the School of Electrical Engineering stated, "This award-winning technology is evaluated as a case that simultaneously proves both academic value and industrial applicability by maximizing the performance of precisely estimating the relative positions between different scans even in complex environments. I am grateful to the students who challenged themselves and never gave up, even when many teams dropped out due to the high difficulty."

< Figure 3. Competition Result Board, Lower RMSE (Root Mean Squared Error) Indicates Higher Score (Unit: meters)>
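For readers unfamiliar with the metric on the result board, root mean squared error over a set of positions can be computed as below. This is a sketch of the general formula only. How the challenge pairs estimated and reference positions is not described here, so the point-by-point pairing is an assumption.

```python
import math

def rmse(estimated, reference):
    """Root mean squared Euclidean error between paired positions, in the input unit."""
    if len(estimated) != len(reference) or not estimated:
        raise ValueError("need two non-empty position lists of equal length")
    squared_errors = [
        sum((e - r) ** 2 for e, r in zip(est, ref))
        for est, ref in zip(estimated, reference)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))
```

Because the errors are squared before averaging, a single badly registered scan raises the score more than several small misalignments would.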

The Urban Robotics Lab team first participated in the SLAM Challenge in 2022, winning second place among academic teams, and in 2023, they secured first place overall in the LiDAR category and first place among academic teams in the vision category.

2025.05.30 View 2185

Professor Hyun Myung's Team Wins First Place in a Challenge at ICRA by IEEE

< Photo 1. (From left) Daebeom Kim (Team Leader, Ph.D. student), Seungjae Lee (Ph.D. student), Seoyeon Jang (Ph.D. student), Jei Kong (Master's student), Professor Hyun Myung >

A team of the Urban Robotics Lab, led by Professor Hyun Myung from the KAIST School of Electrical Engineering, achieved a remarkable first-place overall victory in the Nothing Stands Still Challenge (NSS Challenge) 2025, held at the 2025 IEEE International Conference on Robotics and Automation (ICRA), the world's most prestigious robotics conference, from May 19 to 23 in Atlanta, USA.

The NSS Challenge was co-hosted by HILTI, a global construction company based in Liechtenstein, and Stanford University's Gradient Spaces Group. It is an expanded version of the HILTI SLAM (Simultaneous Localization and Mapping)* Challenge, which has been held since 2021, and is considered one of the most prominent challenges at 2025 IEEE ICRA.*SLAM: Refers to Simultaneous Localization and Mapping, a technology where robots, drones, autonomous vehicles, etc., determine their own position and simultaneously create a map of their surroundings.

< Photo 2. A scene from the oral presentation on the winning team's technology (Speakers: Seungjae Lee and Seoyeon Jang, Ph.D. candidates of KAIST School of Electrical Engineering) >

This challenge primarily evaluates how accurately and robustly LiDAR scan data, collected at various times, can be registered in situations with frequent structural changes, such as construction and industrial environments. In particular, it is regarded as a highly technical competition because it deals with multi-session localization and mapping (Multi-session SLAM) technology that responds to structural changes occurring over multiple timeframes, rather than just single-point registration accuracy.

The Urban Robotics Lab team secured first place overall, surpassing National Taiwan University (3rd place) and Northwestern Polytechnical University of China (2nd place) by a significant margin, with their unique localization and mapping technology that solves the problem of registering LiDAR data collected across multiple times and spaces. The winning team will be awarded a prize of $4,000.

< Figure 1. Example of Multiway-Registration for Registering Multiple Scans >

The Urban Robotics Lab team independently developed a multiway-registration framework that can robustly register multiple scans even without prior connection information. This framework consists of an algorithm for summarizing feature points within scans and finding correspondences (CubicFeat), an algorithm for performing global registration based on the found correspondences (Quatro), and an algorithm for refining results based on change detection (Chamelion). This combination of technologies ensures stable registration performance based on fixed structures, even in highly dynamic industrial environments.
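As a rough illustration of what registration from correspondences involves, the sketch below estimates the rigid transform between two scans in closed form. It is a simplified 2D least-squares alignment that assumes correct, already-matched point pairs; it is not the team's CubicFeat, Quatro, or Chamelion code, and the function names `estimate_rigid_2d` and `apply_rigid_2d` are hypothetical.

```python
import math


def estimate_rigid_2d(source, target):
    """Least-squares rotation angle and translation mapping source onto target.

    Both arguments are equal-length lists of (x, y) tuples, and source[i] is
    assumed to correspond to target[i] (an assumption of this sketch).
    """
    n = len(source)
    if n == 0 or n != len(target):
        raise ValueError("need two non-empty point lists of equal length")

    # Centroids of both point sets.
    sx = sum(p[0] for p in source) / n
    sy = sum(p[1] for p in source) / n
    tx = sum(q[0] for q in target) / n
    ty = sum(q[1] for q in target) / n

    # Sum the dot and cross products of the centered point pairs.
    dot = 0.0
    cross = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        ax, ay = px - sx, py - sy
        bx, by = qx - tx, qy - ty
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx

    # The optimal rotation angle maximizes the alignment of the centered pairs.
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)

    # Translation = target centroid minus the rotated source centroid.
    translation = (tx - (c * sx - s * sy), ty - (s * sx + c * sy))
    return theta, translation


def apply_rigid_2d(points, theta, translation):
    """Rotate points by theta (radians), then shift them by translation."""
    c, s = math.cos(theta), math.sin(theta)
    dx, dy = translation
    return [(c * x - s * y + dx, s * x + c * y + dy) for x, y in points]


# Usage: recover a known motion from a synthetic pair of "scans".
scan_a = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0), (3.0, 1.0)]
scan_b = apply_rigid_2d(scan_a, math.radians(30.0), (2.0, -1.0))
theta, translation = estimate_rigid_2d(scan_a, scan_b)
```

Real LiDAR registration works in 3D and must cope with wrong correspondences and changed structures, which is what robust methods are designed to handle.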

< Figure 2. Example of Change Detection Using the Chamelion Algorithm>

LiDAR scan registration technology is a core component of SLAM in various autonomous systems such as autonomous vehicles, autonomous robots, autonomous walking systems, and autonomous flying vehicles.

Professor Hyun Myung of the School of Electrical Engineering stated, "This award-winning technology demonstrates both academic value and industrial applicability by maximizing the precision with which the relative positions between different scans are estimated, even in complex environments. I am grateful to the students who challenged themselves and never gave up, even when many teams abandoned the challenge due to its high difficulty."

< Figure 3. Competition Result Board, Lower RMSE (Root Mean Squared Error) Indicates Higher Score (Unit: meters)>
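The RMSE named in the result board can be sketched as follows. This is a minimal point-wise version that assumes corresponding estimated and reference 3D positions in meters; the challenge's exact evaluation protocol is not described here, and the function name `rmse` is a hypothetical choice.

```python
import math


def rmse(estimated, reference):
    """Root mean squared Euclidean distance between corresponding points.

    Both arguments are equal-length lists of coordinate tuples in meters.
    """
    if not estimated or len(estimated) != len(reference):
        raise ValueError("need two non-empty point lists of equal length")

    squared_error_sum = 0.0
    for p, q in zip(estimated, reference):
        # Squared Euclidean distance between one estimated/reference pair.
        squared_error_sum += sum((a - b) ** 2 for a, b in zip(p, q))

    return math.sqrt(squared_error_sum / len(estimated))


# Usage: a single point that is off by 3 m in x and 4 m in y.
error_m = rmse([(0.0, 0.0, 0.0)], [(3.0, 4.0, 0.0)])  # 5.0
```

A lower value means the registered scans sit closer to the reference.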

The Urban Robotics Lab team first participated in the SLAM Challenge in 2022, winning second place among academic teams, and in 2023, they secured first place overall in the LiDAR category and first place among academic teams in the vision category.

2025.05.30 View 2185 -

KAIST-UIUC researchers develop a treatment platform to disable the ‘biofilm’ shield of superbugs

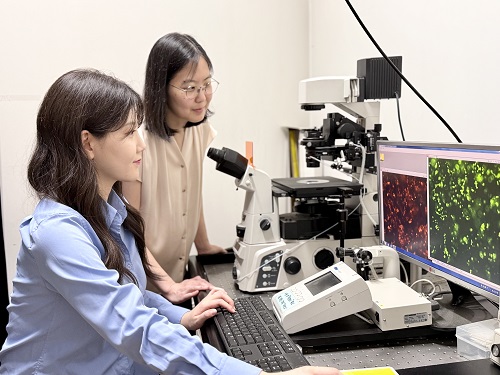

< (From left) Ph.D. Candidate Joo Hun Lee (co-author), Professor Hyunjoon Kong (co-corresponding author) and Postdoctoral Researcher Yujin Ahn (co-first author) from the Department of Chemical and Biomolecular Engineering of the University of Illinois at Urbana-Champaign and Ju Yeon Chung (co-first author) from the Integrated Master's and Doctoral Program, and Professor Hyun Jung Chung (co-corresponding author) from the Department of Biological Sciences of KAIST >

A major cause of hospital-acquired infections, the superbug methicillin-resistant Staphylococcus aureus (MRSA) not only exhibits strong resistance to existing antibiotics but also forms a dense biofilm that blocks the effects of external treatments. To meet this challenge, KAIST researchers, in collaboration with an international team, developed a platform that uses microbubbles to deliver gene-targeting nanoparticles capable of breaking down the biofilms, offering an innovative solution for treating infections resistant to conventional antibiotics.

KAIST (represented by President Kwang-Hyung Lee) announced on May 29 that a research team led by Professor Hyun Jung Chung from the Department of Biological Sciences, in collaboration with Professor Hyunjoon Kong's team at the University of Illinois, has developed a microbubble-based nano-gene delivery platform (BTN-MB) that precisely delivers gene suppressors into bacteria to effectively remove biofilms formed by MRSA.

The research team first designed short DNA oligonucleotides that simultaneously suppress three major MRSA genes, those related to biofilm formation (icaA), cell division (ftsZ), and antibiotic resistance (mecA), and then engineered nanoparticles (BTN) to deliver them effectively into the bacteria.

< Figure 1. Effective biofilm treatment using biofilm-targeting nanoparticles controlled by a microbubbler system. Schematic illustration of BTN delivery with microbubbles (MB), enabling effective permeation of antisense oligonucleotides (ASOs) targeting bacterial genes within biofilms infecting skin wounds. Gene silencing of targets involved in biofilm formation, bacterial proliferation, and antibiotic resistance leads to effective biofilm removal and antibacterial efficacy in vivo. >

In addition, microbubbles (MB) were used to increase the permeability of the microbial membrane, specifically the biofilm formed by MRSA. By combining these two technologies, the team implemented a dual-strike strategy that fundamentally blocks bacterial growth and prevents resistance acquisition.

This treatment system operates in two stages. First, the MBs induce pressure changes within the bacterial biofilm, allowing the BTNs to penetrate. Then, the BTNs slip through the gaps in the biofilm and enter the bacteria, delivering the gene suppressors precisely. This leads to gene regulation within MRSA, simultaneously blocking biofilm regeneration, cell proliferation, and antibiotic resistance expression.

In experiments conducted in a porcine skin model and a mouse wound model infected with MRSA biofilm, the BTN-MB treatment group showed a significant reduction in biofilm thickness, as well as remarkable decreases in bacterial count and inflammatory responses.

< Figure 2. (a) Schematic illustration of the evaluation of the treatment efficacy of BTN-MB gene therapy. (b) Reduction in MRSA biofilm mass via simultaneous inhibition of multiple genes. (c, d) Antibacterial efficacy of BTN-MB over time in a porcine skin infection biofilm model. (e) Schematic of the experimental setup to verify antibacterial efficacy in a mouse skin wound infection model. (f) Wound healing effects in mice. (g) Antibacterial effects at the wound site. (h) Histological analysis results. >

These results are difficult to achieve with conventional antibiotic monotherapy and demonstrate the potential for treating a wide range of resistant bacterial infections.

Professor Hyun Jung Chung of KAIST, who led the research, stated, “This study presents a new therapeutic solution that combines nanotechnology, gene suppression, and physical delivery strategies to address superbug infections that existing antibiotics cannot resolve. We will continue our research with the aim of expanding its application to systemic infections and various other infectious diseases.”

< (From left) Ju Yeon Chung from the Integrated Master's and Doctoral Program, and Professor Hyun Jung Chung from the Department of Biological Sciences >

The study was co-first authored by Ju Yeon Chung, a graduate student in the Department of Biological Sciences at KAIST, and Dr. Yujin Ahn from the University of Illinois. It was published online on May 19 in the journal Advanced Functional Materials.

※ Paper Title: Microbubble-Controlled Delivery of Biofilm-Targeting Nanoparticles to Treat MRSA Infection
※ DOI: https://doi.org/10.1002/adfm.202508291

This study was supported by the National Research Foundation and the Ministry of Health and Welfare, Republic of Korea; and the National Science Foundation and National Institutes of Health, USA.

2025.05.29 View 1530 -

KAIST to Develop a Korean-style ChatGPT Platform Specifically Geared Toward Medical Diagnosis and Drug Discovery

On May 23rd, KAIST (President Kwang-Hyung Lee) announced that its Digital Bio-Health AI Research Center (Director: Professor JongChul Ye of KAIST Kim Jaechul Graduate School of AI) has been selected for the Ministry of Science and ICT's 'AI Top-Tier Young Researcher Support Program (AI Star Fellowship Project).' With a total investment of ₩11.5 billion from May 2025 to December 2030, the center will embark on the full-scale development of AI technology and a platform capable of independently reasoning about and diagnosing diseases and discovering new drugs.

< Photo. On May 20th, a kick-off meeting for the AI Star Fellowship Project was held at KAIST Kim Jaechul Graduate School of AI’s Yangjae Research Center with the KAIST research team and the participating organizations Samsung Medical Center, NAVER Cloud, and HITS. [From left to right in the front row] Professor Jaegul Joo (KAIST), Professor Yoonjae Choi (KAIST), Professor Woo Youn Kim (KAIST/HITS), Professor JongChul Ye (KAIST), Professor Sungsoo Ahn (KAIST), Dr. Haanju Yoo (NAVER Cloud), Yoonho Lee (KAIST), HyeYoon Moon (Samsung Medical Center), Dr. Su Min Kim (Samsung Medical Center) >

This project aims to foster an innovative AI research ecosystem centered on young researchers and develop an inferential AI agent that can utilize and automatically expand specialized knowledge systems in the bio and medical fields.