KAIST debuts “DreamWaQer” - a quadrupedal robot that can walk in the dark

- The team led by Professor Hyun Myung of the School of Electrical Engineering developed “DreamWaQ”, a deep reinforcement learning-based control technology that lets a walking robot traverse atypical environments without visual and/or tactile information

- “DreamWaQ” technology can enable mass production of various types of “DreamWaQers”

- Expected to be used to explore atypical environments in unusual circumstances such as fire disasters.

A team of Korean engineering researchers has developed a quadrupedal robot technology that can climb up and down steps and move over uneven terrain such as tree roots without falling over, all without the help of visual or tactile sensors. It does so even in disaster situations in which darkness or thick smoke from flames impedes visual confirmation.

KAIST (President Kwang Hyung Lee) announced on the 29th of March that Professor Hyun Myung's research team at the Urban Robotics Lab in the School of Electrical Engineering developed a walking robot control technology that enables robust 'blind locomotion' in various atypical environments.

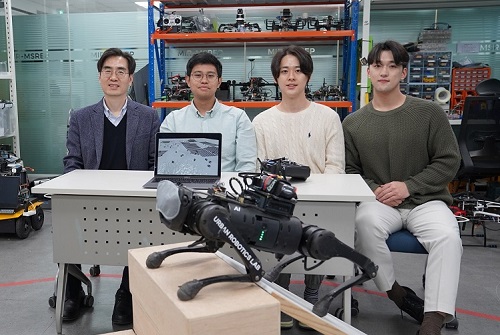

< (From left) Prof. Hyun Myung, Doctoral Candidates I Made Aswin Nahrendra, Byeongho Yu, and Minho Oh. In the foreground is the DreamWaQer, a quadrupedal robot equipped with DreamWaQ technology. >

The KAIST research team named the technology "DreamWaQ" because it enables walking robots to move about even in the dark, just as a person fresh out of bed can walk to the bathroom in the dark without visual help. With this technology installed on any legged robot, it will be possible to create various types of "DreamWaQers".

Existing walking robot controllers are based on kinematics and/or dynamics models, an approach known as model-based control. On atypical terrain such as open, uneven fields in particular, the controller must obtain the features of the terrain quickly to keep the robot stable as it walks. However, this approach has been shown to depend heavily on the robot's ability to perceive the surrounding environment.

In contrast, the controller developed by Professor Hyun Myung's research team is based on deep reinforcement learning (RL) and can quickly calculate appropriate control commands for each motor of the walking robot, using data from various environments obtained in a simulator. Whereas existing controllers trained in simulation required separate re-tuning to work on an actual robot, this controller is expected to be easily applied to various walking robots because it does not require an additional tuning process.

DreamWaQ, the controller developed by the research team, consists mainly of a context-aided estimator network that estimates ground and robot information and a policy network that computes control commands. The context-aided estimator network estimates the ground information implicitly and the robot’s state explicitly from inertial and joint information. These estimates are fed into the policy network, which generates optimal control commands. Both networks are trained together in simulation.
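The data flow described above can be sketched in a few lines of Python. This is only an illustration of how an estimator and a policy network could be chained, not the team's implementation: the layer sizes, the observation length, the history window, the 12-motor output, and every function name here are assumptions, and random weights stand in for trained parameters.

```python
import math
import random


def make_layer(n_in, n_out, rng):
    """Random weights standing in for trained parameters (illustration only)."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]


def dense(weights, x, activation=math.tanh):
    """One fully connected layer without bias terms."""
    return [activation(sum(w * xi for w, xi in zip(row, x))) for row in weights]


# All sizes below are hypothetical, chosen only to make the sketch concrete.
OBS_DIM = 45      # one frame of inertial (IMU) and joint measurements
HISTORY = 5       # number of past frames given to the estimator
STATE_DIM = 3     # explicit estimate of the robot's state
LATENT_DIM = 16   # implicit (latent) estimate of the ground
NUM_MOTORS = 12   # assumes three motors per leg on a quadruped

rng = random.Random(0)
est_hidden = make_layer(OBS_DIM * HISTORY, 64, rng)
est_state_head = make_layer(64, STATE_DIM, rng)
est_latent_head = make_layer(64, LATENT_DIM, rng)
pol_hidden = make_layer(OBS_DIM + STATE_DIM + LATENT_DIM, 64, rng)
pol_out = make_layer(64, NUM_MOTORS, rng)


def estimator(obs_history):
    """Map recent observations to an explicit state and an implicit terrain latent."""
    flat = [value for obs in obs_history for value in obs]
    hidden = dense(est_hidden, flat)
    explicit_state = dense(est_state_head, hidden, activation=lambda v: v)
    terrain_latent = dense(est_latent_head, hidden)
    return explicit_state, terrain_latent


def policy(obs, explicit_state, terrain_latent):
    """Map the latest observation plus both estimates to one command per motor."""
    hidden = dense(pol_hidden, obs + explicit_state + terrain_latent)
    return dense(pol_out, hidden)


def control_step(obs_history):
    """One control cycle: estimate first, then act on the estimates."""
    explicit_state, terrain_latent = estimator(obs_history)
    return policy(obs_history[-1], explicit_state, terrain_latent)


history = [[rng.gauss(0.0, 1.0) for _ in range(OBS_DIM)] for _ in range(HISTORY)]
commands = control_step(history)
print(len(commands))  # one command per motor
```

Nothing in this loop reads a camera or a foot contact sensor; the only inputs are the inertial and joint measurements, which is the point of the design.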

While the context-aided estimator network is trained through supervised learning, the policy network is trained with an actor-critic architecture, a deep RL methodology. The actor network can only implicitly infer the surrounding terrain. In simulation, however, the surrounding terrain is known, so the critic (the value network) is given the exact terrain information and uses it to evaluate the policy of the actor network.
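The asymmetry between actor and critic can be made concrete with a minimal sketch. It assumes a linear critic and a one-step temporal-difference advantage, which are generic textbook choices and are not confirmed by the article; the function names, feature vectors, and weights are hypothetical.

```python
def actor_input(proprioception):
    """The actor sees only onboard measurements (inertial and joint data)."""
    return list(proprioception)


def critic_input(proprioception, terrain_heights):
    """The critic additionally sees exact terrain data, available only in simulation."""
    return list(proprioception) + list(terrain_heights)


def linear_value(weights, features):
    """A linear value function standing in for the critic network."""
    return sum(w * f for w, f in zip(weights, features))


def td_advantage(reward, value_now, value_next, gamma=0.99):
    """One-step TD advantage: how much better the step went than the critic expected."""
    return reward + gamma * value_next - value_now


proprio = [0.2, -0.1]                  # hypothetical onboard measurements
terrain = [0.0, 0.15]                  # hypothetical ground heights from the simulator
critic_weights = [1.0, 1.0, 2.0, 2.0]  # hypothetical critic parameters

value_now = linear_value(critic_weights, critic_input(proprio, terrain))
advantage = td_advantage(reward=1.0, value_now=value_now, value_next=0.5)
print(len(actor_input(proprio)), len(critic_input(proprio, terrain)))
```

Because the critic is needed only to produce the training signal, it can be discarded after training, so the privileged terrain input never has to exist on the real robot.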

This whole training process takes only about an hour on a GPU-enabled PC, and the actual robot is equipped with only the trained actor network. Without looking at the surrounding terrain, the robot imagines which of the various environments learned in simulation the current one most resembles, using only its internal inertial sensor (IMU) and joint angle measurements. If it suddenly encounters a change in height, such as a staircase, it will not know until its foot touches the step, but it quickly draws up terrain information the moment its foot touches the surface. The control command suited to the estimated terrain is then sent to each motor, enabling the robot to adapt its gait rapidly.

The DreamWaQer robot walked not only in the laboratory, but also outdoors around the campus over many curbs and speed bumps and across a field with many tree roots and gravel. It demonstrated its abilities by climbing stairs with a height difference of two-thirds of the height of its body. In addition, the research team confirmed that, regardless of the environment, it could walk stably at speeds ranging from a slow 0.3 m/s to a rather fast 1.0 m/s.

The study was carried out by doctoral student I Made Aswin Nahrendra as first author and his colleague Byeongho Yu as co-author. It has been accepted for presentation at the upcoming IEEE International Conference on Robotics and Automation (ICRA), scheduled to be held in London at the end of May. (Paper title: DreamWaQ: Learning Robust Quadrupedal Locomotion With Implicit Terrain Imagination via Deep Reinforcement Learning)

Videos of the walking robot DreamWaQer equipped with DreamWaQ can be found at the addresses below.

Main Introduction: https://youtu.be/JC1_bnTxPiQ

Experiment Sketches: https://youtu.be/mhUUZVbeDA0

This research was carried out with support from the Robot Industry Core Technology Development Program of the Ministry of Trade, Industry and Energy (MOTIE). (Task title: Development of Mobile Intelligence SW for Autonomous Navigation of Legged Robots in Dynamic and Atypical Environments for Real Application)

< Figure 1. Overview of DreamWaQ, a controller developed by this research team. The controller consists of an estimator network that learns implicit and explicit estimates together, a policy network that acts as the controller, and a value network that guides the policy during training. When implemented on a real robot, only the estimator and policy networks are used. Both networks run in less than 1 ms on the robot's on-board computer. >

< Figure 2. Since the estimator can implicitly estimate the ground information as the foot touches the surface, it is possible to adapt quickly to rapidly changing ground conditions. >

< Figure 3. Results showing that even a small walking robot was able to overcome steps with height differences of about 20cm. >

2023.05.18 View 3648

‘Mole-bot’ Optimized for Underground and Space Exploration

Biomimetic drilling robot provides new insights into the development of efficient drilling technologies

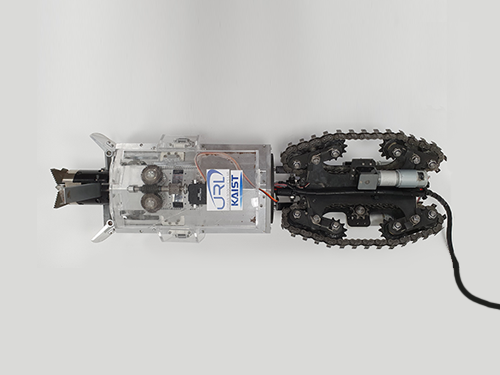

Mole-bot, a biomimetic drilling robot designed by KAIST, boasts a stout scapula, a waist that can incline in all directions, and powerful forelimbs. Its standout feature is the powerful torque of its expandable drilling bit, which mimics the chiseling ability of a mole’s front teeth.

The Mole-bot is expected to be used for space exploration and mining for underground resources such as coalbed methane and Rare Earth Elements (REE), which require highly advanced drilling technologies in complex environments.

The research team, led by Professor Hyun Myung from the School of Electrical Engineering, found inspiration for their drilling bot from two striking features of the African mole-rat and European mole.

“The crushing power of the African mole-rat’s teeth is so powerful that they can dig a hole with 48 times more power than their body weight. We used this characteristic for building the main excavation tool. And its expandable drill is designed not to collide with its forelimbs,” said Professor Myung.

The 25-cm wide and 84-cm long Mole-bot can excavate three times faster with six times higher directional accuracy than conventional models. The Mole-bot weighs 26 kg.

After digging, the robot removes the excavated soil and debris using its forelimbs. This embedded muscle feature, inspired by the European mole’s scapula, converts linear motion into a powerful rotational force. For directional drilling, the robot’s elongated waist changes its direction 360° like living mammals.

To explore underground environments, the research team developed and applied new sensor systems and algorithms that identify the robot’s position and orientation. They use graph-based 3D Simultaneous Localization and Mapping (SLAM) technology that matches sequences of the Earth’s magnetic field, which enables 3D autonomous navigation underground.
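The idea of matching a magnetic field sequence can be illustrated with a deliberately simplified sketch. It is not the team's graph-based SLAM: it only slides a short run of recent readings along a stored one-dimensional field profile and picks the position with the smallest squared error. The function name and all field values (in microtesla) are hypothetical.

```python
def best_match_offset(map_sequence, measured):
    """Return (offset, cost) of the best alignment of `measured` within `map_sequence`.

    The cost is the sum of squared differences. If `measured` is longer than
    the map, no alignment exists and (None, inf) is returned.
    """
    best_offset, best_cost = None, float("inf")
    for offset in range(len(map_sequence) - len(measured) + 1):
        cost = sum(
            (map_sequence[offset + i] - value) ** 2
            for i, value in enumerate(measured)
        )
        if cost < best_cost:
            best_offset, best_cost = offset, cost
    return best_offset, best_cost


# Hypothetical field magnitudes recorded along a previously travelled path.
field_map = [48.0, 48.5, 50.2, 53.1, 51.0, 49.4, 47.8, 47.5]
# Hypothetical recent readings taken by the robot.
recent = [53.0, 51.2, 49.5]

offset, cost = best_match_offset(field_map, recent)
print(offset)
```

In a full SLAM system, a match like this would typically become one constraint among many in a pose graph rather than being used directly as a position fix.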

According to Market & Market’s survey, the directional drilling market was estimated at 83.3 billion USD in 2016 and was expected to grow to 103 billion USD by 2021. The growth of the drilling market, which started with the Shale Revolution, is likely to extend to the future development of space and polar resources. With initiatives such as those recently launched by SpaceX, planetary exploration is expected to attract more attention, and the market for related technology and equipment is expected to grow as well.
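For a sense of scale, the two market figures quoted above imply a compound annual growth rate of a little over 4% per year. The short calculation below uses only those two figures and the standard compound-growth formula; the function name is ours, and the assumption of steady year-over-year growth is a simplification.

```python
def compound_annual_growth_rate(start_value, end_value, years):
    """Annual rate that turns start_value into end_value over the given number of years."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Figures from the survey cited in the article, in billions of USD.
cagr = compound_annual_growth_rate(83.3, 103.0, 2021 - 2016)
print(f"Implied annual growth: {cagr:.1%}")
```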

The Mole-bot is a huge step forward for efficient underground drilling and exploration technologies. Unlike conventional drilling processes that use environmentally unfriendly mud compounds for cleaning debris, Mole-bot can mitigate environmental destruction. The researchers said their system saves on cost and labor and does not require additional pipelines or other ancillary equipment.

“We look forward to a more efficient resource exploration with this type of drilling robot. We also hope Mole-bot will have a very positive impact on the robotics market in terms of its extensive application spectra and economic feasibility,” said Professor Myung.

This research, made in collaboration with Professor Jung-Wuk Hong and Professor Tae-Hyuk Kwon’s team in the Department of Civil and Environmental Engineering for robot structure analysis and geotechnical experiments, was supported by the Ministry of Trade, Industry and Energy’s Industrial Technology Innovation Project.

Profile

Professor Hyun Myung

Urban Robotics Lab

http://urobot.kaist.ac.kr/

School of Electrical Engineering

KAIST

2020.06.05 View 7277

BBC Features KAIST's Jellyfish Robot

Click, a weekly BBC television program covering news and recent developments in science and technology, introduced KAIST’s robotics project JEROS, led by Professor Hyun Myung of the Urban Robotics Lab (http://urobot.kaist.ac.kr/). JEROS is a robotics system that detects, captures, and removes jellyfish in the ocean. To watch the show, please click the link below:

BBC News, Click, June 2, 2015

The Robot Jellyfish Shredders

http://www.bbc.com/news/technology-32965841

2015.06.03 View 8094

Wall Climbing Quadcopter by KAIST Urban Robotics Lab

Popular Science, an American monthly magazine devoted to general readers of science and technology, published “Watch This Creepy Drone Climb A Wall” online on March 19, 2015, describing a drone that can both fly and climb walls. The drone is the product of research conducted by Professor Hyun Myung of the Department of Civil and Environmental Engineering at KAIST. The flying quadcopters can turn into wall-crawling robots, or vice versa, when carrying out such assignments as cleaning windows or inspecting a building’s infrastructure. Professor Myung leads the KAIST Urban Robotics Lab (http://urobot.kaist.ac.kr/). For a link to the article, see http://www.popsci.com/watch-drone-climb-wall-video.

Another Popular Science article (posted on April 3, 2015), entitled “South Korea Gets Ready for Drone-on-Drone Warfare with North Korea,” describes a system of combat drones that counters hostile drones. Professor Hyunchul Shim of the Aerospace Engineering Department at KAIST developed the anti-drone system. He currently heads the Unmanned System Research Group (FDCL, http://unmanned.kaist.ac.kr/) and the Center of Field Robotics for Innovation, Exploration, aNd Defense (C-FRIEND).

2015.04.07 View 10216
