robot

Team KAIST placed among top two at MBZIRC Maritime Grand Challenge

Representing Korean Robotics at Sea: KAIST’s 26-month effort rewarded

- Team KAIST, composed of students from the labs of Professor Jinwhan Kim of the Department of Mechanical Engineering and Professor Hyunchul Shim of the School of Electrical Engineering, finished the challenge as first runner-up, winning prize money totaling $650,000 (KRW 860 million).

- The team successfully demonstrated autonomous collaboration between unmanned aerial and maritime vehicles using cutting-edge robotics and AI technology, advancing through to the final round of the competition held in Abu Dhabi from January 10 to February 6, 2024.

KAIST (President Kwang-Hyung Lee) reported on the 8th that Team KAIST, led by students from the labs of Professor Jinwhan Kim of the Department of Mechanical Engineering and Professor Hyunchul Shim of the School of Electrical Engineering, with Pablo Aviation as a partner, finished as first runner-up at the Maritime Grand Challenge of the Mohamed Bin Zayed International Robotics Challenge (MBZIRC), winning a total of $650,000 (KRW 860 million) in prize money.

This competition, the largest robotics competition ever held over water, is sponsored by the government of the United Arab Emirates and organized by ASPIRE, an organization under the Abu Dhabi Ministry of Science, with total prize money of $3 million.

The competition started at the end of 2021 with 52 teams from around the world. After the first and second stages of screening, five teams were selected in February 2023 to go on to the finals. The final round was held from January 10 to February 6, 2024, using actual unmanned ships and drones in a secluded sea area of 10 km² off the coast of Abu Dhabi, the capital of the United Arab Emirates. A total of 18 KAIST students, together with Professor Jinwhan Kim and Professor Hyunchul Shim, took part in the competition on site in Abu Dhabi.

Team KAIST will receive $500,000 in prize money for taking second place in the final, bringing the team’s total to $650,000 including the $150,000 special midterm award given to finalists.

The final mission scenario is to find a fleeing target vessel carrying illegal cargo among many ships moving in a GPS-disabled sea area, inspect its deck for two different types of stolen cargo, and recover them: the aerial vehicle retrieves the small cargo, and a robot manipulator mounted on an unmanned ship retrieves the larger one. The real aim is to complete the mission through autonomous collaboration between the unmanned ship and the aerial vehicle, without human intervention at any point in the process. Because the competition rules prohibit the use of GPS, Professor Jinwhan Kim's research team developed autonomous operation techniques for unmanned ships, including search and navigation methods using maritime radar, and Professor Hyunchul Shim's research team developed video-based navigation and a technology for combining a small autonomous robot with a drone.

The final mission is to retrieve cargo on board a ship fleeing at sea through autonomous collaboration between unmanned ships and unmanned aerial vehicles, without human intervention. The overall mission consists of two stages: a first-stage inspection to find the target ship among several ships moving at sea, and a second-stage intervention to retrieve the cargo from the deck of that ship. Each team was given a total of three attempts, and the team that completed the highest-level mission in the shortest time across the three attempts received the highest score.
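The scoring rule described above (highest mission level first, shortest time as the tie-breaker, best of three attempts) can be read as a lexicographic ranking. The following is a minimal sketch of that reading only, not the organizers' actual scoring system. The `Attempt` type, the numeric level encoding, and the team names and times are all hypothetical placeholders, not competition results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attempt:
    # Assumed encoding: 0 = nothing completed, 1 = inspection stage,
    # 2 = intervention stage. The article does not define numeric levels.
    level: int
    seconds: float  # elapsed time of the attempt (hypothetical unit)


def best_attempt(attempts):
    """Pick the attempt with the highest level, breaking ties by shortest time."""
    return min(attempts, key=lambda a: (-a.level, a.seconds))


def rank_teams(results):
    """Return team names ordered from best to worst by their best attempt."""
    def sort_key(team):
        best = best_attempt(results[team])
        return (-best.level, best.seconds)
    return sorted(results, key=sort_key)


# Hypothetical placeholder data, for illustration only.
example = {
    "Team A": [Attempt(1, 900.0), Attempt(2, 1500.0)],
    "Team B": [Attempt(2, 1200.0)],
    "Team C": [Attempt(1, 600.0), Attempt(0, 300.0)],
}
print(rank_teams(example))
```

Under this reading, a fast attempt that only completes the first stage never outranks a slower attempt that completes the second stage.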

In the first attempt, KAIST was the only team to succeed in the first-stage search mission, but the competition began in earnest when the Croatian team also completed the first stage in the second attempt. Because the schedule was delayed by strong winds and high waves that continued for several days, the organizers decided to hold the finals with three teams: Team KAIST and the team from Croatia’s University of Zagreb, both of which completed the first stage of the mission, and Team Fly-Eagle, a team of researchers from China and the UAE that partially completed the first stage. These three teams were given a third attempt in the finals, where the Croatian team won, KAIST took second place, and the combined UAE-China team took third place. The final prize is set at $2 million for the winning team, $500,000 for the runner-up, and $250,000 for third place.

Professor Jinwhan Kim of the Department of Mechanical Engineering, who served as the advisor for Team KAIST, said, “I would like to express my gratitude and congratulations to the students who put in huge academic and physical effort preparing for the competition over the past two years. I feel rewarded because, regardless of the results, every bit of effort put into this up to this point will become the base of their confidence and a valuable asset in their growth into great researchers.” Sol Han, a doctoral student in mechanical engineering who served as the team leader, said, “I am disappointed by how narrowly we missed out on winning at the end, but I am satisfied with the significance of what we achieved, and I am grateful to the team members who worked hard together for it.”

HD Hyundai, Rainbow Robotics, Avikus, and FIMS also participated as sponsors for Team KAIST's campaign.

2024.02.09

KAIST develops an artificial muscle device that produces force 34 times its weight

- Professor IlKwon Oh’s research team in KAIST’s Department of Mechanical Engineering developed a soft fluidic switch using an ionic polymer artificial muscle that runs with ultra-low power to lift objects 34 times greater than its weight.

- Its light weight and small size make it applicable to various industrial fields such as soft electronics, smart textiles, and biomedical devices by controlling fluid flow with high precision, even in narrow spaces.

Soft robots, medical devices, and wearable devices have permeated our daily lives. KAIST researchers have developed a fluid switch using ionic polymer artificial muscles that operates at ultra-low power and produces a force 34 times greater than its weight. Fluid switches control fluid flow, directing the fluid in a specific direction to induce various movements.

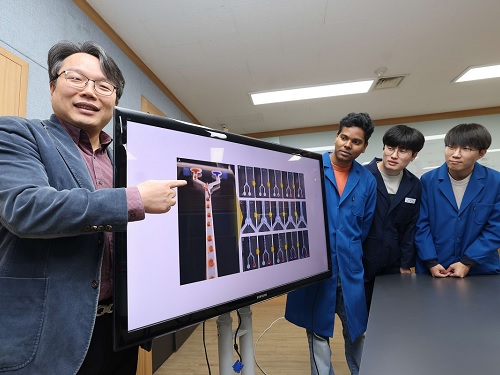

KAIST (President Kwang-Hyung Lee) announced on the 4th of January that a research team under Professor IlKwon Oh from the Department of Mechanical Engineering has developed a soft fluidic switch that operates at ultra-low voltage and can be used in narrow spaces.

Artificial muscles imitate human muscles and provide flexible and natural movements compared to traditional motors, making them one of the basic elements used in soft robots, medical devices, and wearable devices. These artificial muscles create movements in response to external stimuli such as electricity, air pressure, and temperature changes, and in order to utilize artificial muscles, it is important to control these movements precisely.

Switches based on existing motors were difficult to use within limited spaces due to their rigidity and large size. In order to address these issues, the research team developed an electro-ionic soft actuator that can control fluid flow while producing large amounts of force, even in a narrow pipe, and used it as a soft fluidic switch.

< Figure 1. The separation of fluid droplets using a soft fluid switch at ultra-low voltage. >

The ionic polymer artificial muscle developed by the research team is composed of metal electrodes and ionic polymers, and it generates force and movement in response to electricity. A polysulfonated covalent organic framework (pS-COF), made by combining organic molecules on the surface of the artificial muscle electrode, was used to generate an impressive amount of force relative to its weight at ultra-low voltage (~0.01 V).

As a result, the artificial muscle, which was manufactured to be as thin as a hair with a thickness of 180 µm, produced a force more than 34 times greater than its light weight of 10 mg to initiate smooth movement. Through this, the research team was able to precisely control the direction of fluid flow with low power.
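To put the "34 times its weight" figure in physical units, the short calculation below converts the stated 10 mg mass into a weight and scales it by 34. It is a back-of-the-envelope sketch, not a figure from the paper: it assumes standard gravity (9.80665 m/s²), and because the article says "more than 34 times", the result is only a lower bound. The function names are illustrative.

```python
STANDARD_GRAVITY = 9.80665  # m/s^2, assumed; the article does not state g


def weight_newtons(mass_mg: float) -> float:
    """Weight in newtons of a mass given in milligrams (1 mg = 1e-6 kg)."""
    return mass_mg * 1e-6 * STANDARD_GRAVITY


def force_from_ratio(mass_mg: float, ratio: float) -> float:
    """Force in newtons equal to `ratio` times the weight of `mass_mg`."""
    return ratio * weight_newtons(mass_mg)


muscle_weight = weight_newtons(10.0)        # 10 mg actuator, from the article
min_force = force_from_ratio(10.0, 34.0)    # lower bound: "more than 34 times"

print(f"weight of actuator: {muscle_weight * 1e3:.4f} mN")
print(f"force lower bound:  {min_force * 1e3:.2f} mN")
```

Under these assumptions the actuator weighs roughly 0.098 mN and produces at least about 3.3 mN of force.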

< Figure 2. The synthesis and use of pS-COF as a common electrode-electrolyte host for electroactive soft fluid switches. A) The synthesis schematic of pS-COF. B) The schematic diagram of the operating principle of the electrochemical soft switch. C) The schematic diagram of using a pS-COF-based electrochemical soft switch to control fluid flow in dynamic operation. >

Professor IlKwon Oh, who led this research, said, “The electrochemical soft fluidic switch that operates at ultra-low power can open up many possibilities in the fields of soft robots, soft electronics, and microfluidics based on fluid control.” He added, “From smart fibers to biomedical devices, this technology has the potential to be immediately put to use in a variety of industrial settings as it can be easily applied to ultra-small electronic systems in our daily lives.”

The results of this study, in which Dr. Manmatha Mahato, a research professor in the Department of Mechanical Engineering at KAIST, participated as the first author, were published in the international academic journal Science Advances on December 13, 2023. (Paper title: Polysulfonated Covalent Organic Framework as Active Electrode Host for Mobile Cation Guests in Electrochemical Soft Actuator)

This research was conducted with support from the National Research Foundation of Korea's Leader Scientist Support Project (Creative Research Group) and Future Convergence Pioneer Project.

* Paper DOI: https://www.science.org/doi/abs/10.1126/sciadv.adk9752

2024.01.11

KAIST Research Team Develops World’s First Humanoid Pilot, PIBOT

In the spring of last year, the legendary fictional pilot “Maverick” flew his plane in the film “Top Gun: Maverick,” which drew crowds to theatres around the world. This year, the appearance of a humanoid pilot, PIBOT, has stolen the spotlight at KAIST.

< Photo 1. Humanoid pilot robot, PIBOT >

A KAIST research team has developed a humanoid robot that can understand manuals written in natural language and fly a plane on its own. The team also announced their plans to commercialize the humanoid pilot.

< Photo 2. PIBOT on flight simulator (view from above) >

The project was led by KAIST Professor David Hyunchul Shim, and was conducted as a joint research project with Professors Jaegul Choo, Kuk-Jin Yoon, and Min Jun Kim. The study was supported by Future Challenge Funding under the project title, “Development of Human-like Pilot Robot based on Natural Language Processing”. The team utilized AI and robotics technologies, and demonstrated that the humanoid could seat itself in a real cockpit and operate the various pieces of equipment without modifying any part of the aircraft. This is the fundamental difference that distinguishes this technology from existing autopilot functions or unmanned aircraft.

< Photo 3. PIBOT operating a flight simulator (side) >

The KAIST team’s humanoid pilot is still under development, but it can already memorize Jeppesen charts from all around the world, which is impossible for human pilots to do, and fly without error. In particular, it can make use of recent ChatGPT technology to memorize the full Quick Reference Handbook (QRH) and respond immediately to various situations, as well as calculate safe routes in real time based on the flight status of the aircraft, with emergency response times quicker than those of human pilots.

Furthermore, while existing robots usually carry out repeated motions in a fixed position, PIBOT can analyze the state of the cockpit as well as the situation outside the aircraft using an embedded camera. PIBOT can accurately control the various switches in the cockpit and, using high-precision control technology, it can accurately control its robotic arms and hands even during harsh turbulence.

< Photo 4. PIBOT on-board KLA-100, Korea’s first light aircraft >

The humanoid pilot is currently capable of carrying out all operations from starting the aircraft to taxiing, takeoff and landing, cruising, and cycling using a flight control simulator. The research team plans to use the humanoid pilot to fly a real-life light aircraft to verify its abilities. Prof. Shim explained, “Humanoid pilot robots do not require the modification of existing aircraft and can be applied immediately to automated flights. They are therefore highly applicable and practical. We expect them to be applied to various other vehicles like cars and military trucks since they can control a wide range of equipment. They will be particularly helpful in situations where military resources are severely depleted.”

This research was supported by Future Challenge Funding (total: 5.7 bn KRW) from the Agency for Defense Development. The project started in 2022 as a joint research project by Prof. David Hyunchul Shim (chief of research) from the KAIST School of Electrical Engineering (EE), Prof. Jaegul Choo from the Kim Jaechul Graduate School of AI at KAIST, Prof. Kuk-Jin Yoon from the KAIST Department of Mechanical Engineering, and Prof. Min Jun Kim from the KAIST School of EE. The project is to be completed by 2026 and the involved researchers are also considering commercialization strategies for both military and civil use.

2023.08.03

Professor Joseph J. Lim of KAIST receives the Best System Paper Award from RSS 2023, First in Korea

- Professor Joseph J. Lim from the Kim Jaechul Graduate School of AI at KAIST and his team receive an award for the most outstanding paper in the implementation of robot systems.

- Professor Lim works on AI-based perception, reasoning, and sequential decision-making to develop systems capable of intelligent decision-making, including robot learning.

< Photo 1. RSS2023 Best System Paper Award Presentation >

The team of Professor Joseph J. Lim from the Kim Jaechul Graduate School of AI at KAIST has been honored with the 'Best System Paper Award' at "Robotics: Science and Systems (RSS) 2023".

The RSS conference is globally recognized as a leading event for showcasing the latest discoveries and advancements in the field of robotics. It is a venue where the greatest minds in robotics engineering and robot learning come together to share their research breakthroughs. The RSS Best System Paper Award is a prestigious honor granted to a paper that excels in presenting real-world robot system implementation and experimental results.

< Photo 2. Professor Joseph J. Lim of Kim Jaechul Graduate School of AI at KAIST >

The team led by Professor Lim, including two Master's students and an alumnus (soon to be appointed at Yonsei University), received the prestigious RSS Best System Paper Award, the first time the award has gone to a Korean researcher or a Korean institution.

< Photo 3. Certificate of the Best System Paper Award presented at RSS 2023 >

This award is especially meaningful considering the broader challenges in the field. Although recent progress in artificial intelligence and deep learning algorithms has resulted in numerous breakthroughs in robotics, most of these achievements have been confined to relatively simple and short tasks, like walking or pick-and-place. Moreover, tasks are typically performed in simulated environments rather than dealing with more complex, long-horizon real-world tasks such as factory operations or household chores. These limitations primarily stem from the considerable challenge of acquiring data required to develop and validate learning-based AI techniques, particularly in real-world complex tasks.

In light of these challenges, this paper introduced a benchmark that employs 3D printing to simplify the reproduction of furniture assembly tasks in real-world environments. Furthermore, it proposed a standard benchmark for the development and comparison of algorithms for complex and long-horizon tasks, supported by teleoperation data. Ultimately, the paper suggests a new research direction of addressing complex and long-horizon tasks and encourages diverse advancements in research by facilitating reproducible experiments in real-world environments.

Professor Lim underscored the growing potential for integrating robots into daily life, driven by an aging population and an increase in single-person households. As robots become part of everyday life, testing their performance in real-world scenarios becomes increasingly crucial. He hoped this research would serve as a cornerstone for future studies in this field.

The Master's students, Minho Heo and Doohyun Lee, from the Kim Jaechul Graduate School of AI at KAIST, also shared their aspirations to become global researchers in the domain of robot learning. Meanwhile, the alumnus of Professor Lim's research lab, Dr. Youngwoon Lee, is set to be appointed to the Graduate School of AI at Yonsei University and will continue pursuing research in robot learning.

Paper title: FurnitureBench: Reproducible Real-World Benchmark for Long-Horizon Complex Manipulation. Robotics: Science and Systems.

< Image. Conceptual Summary of the 3D Printing Technology >

2023.07.31

KAIST debuts “DreamWaQer” - a quadrupedal robot that can walk in the dark

- The team led by Professor Hyun Myung of the School of Electrical Engineering developed “DreamWaQ”, a deep reinforcement learning-based control technology that enables a walking robot to move through atypical environments without visual and/or tactile information

- DreamWaQ technology can enable mass production of various types of “DreamWaQers”

- Expected to be used for exploring atypical environments under unusual circumstances, such as fire disasters

A team of Korean engineering researchers has developed a quadrupedal robot technology that can climb up and down steps and move over uneven terrain such as tree roots without falling over. It does so without the help of visual or tactile sensors, even in disaster situations where darkness or thick smoke from flames blocks vision.

KAIST (President Kwang Hyung Lee) announced on the 29th of March that Professor Hyun Myung's research team at the Urban Robotics Lab in the School of Electrical Engineering developed a walking robot control technology that enables robust 'blind locomotion' in various atypical environments.

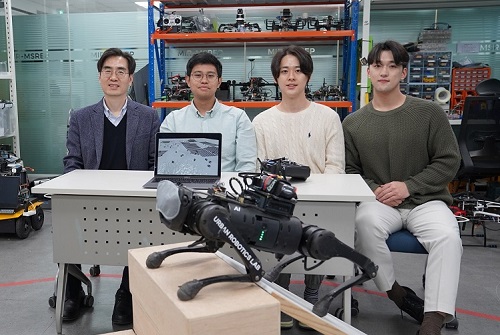

< (From left) Prof. Hyun Myung, Doctoral Candidates I Made Aswin Nahrendra, Byeongho Yu, and Minho Oh. In the foreground is the DreamWaQer, a quadrupedal robot equipped with DreamWaQ technology. >

The KAIST research team named the technology "DreamWaQ" because it enables walking robots to move about even in the dark, just as a person fresh out of bed can walk to the bathroom in the dark without visual help. With this technology installed on any legged robot, it will be possible to create various types of "DreamWaQers".

Existing walking robot controllers are based on kinematics and/or dynamics models, an approach known as model-based control. In particular, in atypical environments such as open, uneven fields, the robot must obtain terrain feature information quickly in order to maintain stability as it walks. However, this approach has been shown to depend heavily on the ability to perceive the surrounding environment.

In contrast, the controller developed by Professor Hyun Myung's research team, which is based on deep reinforcement learning (RL), can quickly compute appropriate control commands for each motor of the walking robot using data from various environments obtained in a simulator. Whereas existing controllers trained in simulation required a separate retuning step to work on an actual robot, the team's controller is expected to be easily applied to various walking robots because it does not require an additional tuning process.

DreamWaQ, the controller developed by the research team, consists mainly of a context estimation network that estimates ground and robot information and a policy network that computes control commands. The context-aided estimator network estimates the ground information implicitly and the robot’s state explicitly from inertial and joint information. These estimates are fed into the policy network to generate optimal control commands. Both networks are trained together in simulation.

While the context-aided estimator network is trained through supervised learning, the policy network is trained with an actor-critic architecture, a deep RL methodology. The actor network can only infer the surrounding terrain implicitly. In simulation, the surrounding terrain is known, so the critic, or value network, which has access to the exact terrain information, evaluates the policy of the actor network.
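The data flow described above can be illustrated with a minimal sketch. It is not the team's implementation: the layer sizes, the single linear layers, and every name in it (`estimator`, `actor`, `critic`, `N_PROPRIO`, and so on) are assumptions chosen only to show that the actor sees sensor-derived inputs while the critic also receives terrain information that exists only in simulation.

```python
import random

def linear(weights, inputs):
    """Dense layer without bias: one output per weight row."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]

def random_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

rng = random.Random(0)

# All sizes below are hypothetical and chosen only for illustration.
N_PROPRIO = 6   # inertial (IMU) and joint readings
N_LATENT = 3    # implicit ground/context code
N_STATE = 3     # explicit robot state estimate
N_TERRAIN = 4   # exact terrain samples, known only in simulation
N_MOTORS = 12   # assumed number of motors

W_est = random_matrix(N_LATENT + N_STATE, N_PROPRIO, rng)
W_actor = random_matrix(N_MOTORS, N_PROPRIO + N_LATENT + N_STATE, rng)
W_critic = random_matrix(1, N_PROPRIO + N_TERRAIN, rng)

def estimator(proprio):
    """Map sensor readings to (implicit ground code, explicit robot state)."""
    out = linear(W_est, proprio)
    return out[:N_LATENT], out[N_LATENT:]

def actor(proprio):
    """Policy: uses only sensor readings and the estimator's outputs."""
    latent, state = estimator(proprio)
    return linear(W_actor, proprio + latent + state)

def critic(proprio, terrain):
    """Value network: additionally sees the exact terrain (simulation only)."""
    return linear(W_critic, proprio + terrain)[0]

proprio = [rng.uniform(-1.0, 1.0) for _ in range(N_PROPRIO)]
terrain = [rng.uniform(0.0, 0.2) for _ in range(N_TERRAIN)]

commands = actor(proprio)          # computable in simulation and on a robot
value = critic(proprio, terrain)   # computable only where terrain is known
```

Because `actor` never takes `terrain` as an argument, it can run unchanged where no terrain measurement exists, which is the property the asymmetric actor-critic setup relies on.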

The whole training process takes only about an hour on a GPU-enabled PC, and the actual robot is equipped with only the trained actor network. Without looking at the surrounding terrain, the robot imagines which of the various environments learned in simulation the current one resembles, using only its internal inertial sensor (IMU) and joint angle measurements. If it suddenly encounters a height offset, such as a staircase, it will not know until its foot touches the step, but it quickly builds up terrain information the moment its foot touches the surface. The control command suited to the estimated terrain is then transmitted to each motor, enabling rapidly adapted walking.

The DreamWaQer robot walked not only in the laboratory, but also outdoors around the campus, where there are many curbs and speed bumps, and over a field with many tree roots and gravel. It demonstrated its abilities by climbing stairs with a height difference of two-thirds of its body height. In addition, the research team confirmed that it was capable of stable walking in every environment at speeds ranging from a slow 0.3 m/s to a rather fast 1.0 m/s.

The study was conducted by doctoral student I Made Aswin Nahrendra as the first author, with his colleague Byeongho Yu as a co-author. It has been accepted for presentation at the upcoming IEEE International Conference on Robotics and Automation (ICRA), scheduled to be held in London at the end of May. (Paper title: DreamWaQ: Learning Robust Quadrupedal Locomotion With Implicit Terrain Imagination via Deep Reinforcement Learning)

Videos of the walking robot DreamWaQer equipped with DreamWaQ can be found at the links below.

Main Introduction: https://youtu.be/JC1_bnTxPiQ

Experiment Sketches: https://youtu.be/mhUUZVbeDA0

Meanwhile, this research was carried out with support from the Robot Industry Core Technology Development Program of the Ministry of Trade, Industry and Energy (MOTIE). (Task title: Development of Mobile Intelligence SW for Autonomous Navigation of Legged Robots in Dynamic and Atypical Environments for Real Application)

< Figure 1. Overview of DreamWaQ, the controller developed by the research team. It consists of an estimator network that learns implicit and explicit estimates together, a policy network that acts as the controller, and a value network that guides the policy during training. When deployed on a real robot, only the estimator and policy networks are used. Both networks run in less than 1 ms on the robot's on-board computer. >

< Figure 2. Since the estimator can implicitly estimate the ground information as the foot touches the surface, it is possible to adapt quickly to rapidly changing ground conditions. >

< Figure 3. Results showing that even a small walking robot was able to overcome steps with height differences of about 20 cm. >

2023.05.18 View 3680

KAIST’s Robo-Dog “RaiBo” runs through the sandy beach

KAIST (President Kwang Hyung Lee) announced on the 25th that a research team led by Professor Jemin Hwangbo of the Department of Mechanical Engineering developed a quadrupedal robot control technology that enables robust and agile walking even on deformable terrain such as a sandy beach.

< Photo. RAI Lab Team with Professor Hwangbo in the middle of the back row. >

Professor Hwangbo's research team developed a technology that models the force a walking robot receives from ground made of granular materials such as sand, and simulates it for a quadrupedal robot. The team also designed an artificial neural network structure suited to making the real-time decisions needed to adapt to various types of ground without prior information while walking, and applied it to reinforcement learning. The trained neural network controller is expected to expand the scope of application of quadrupedal walking robots by proving its robustness on changing terrain, such as moving at high speed even on a sandy beach and walking and turning on soft ground like an air mattress without losing balance.

This research, with Ph.D. student Suyoung Choi of the KAIST Department of Mechanical Engineering as the first author, was published in January in Science Robotics. (Paper title: Learning quadrupedal locomotion on deformable terrain)

Reinforcement learning is an AI learning method used to create a machine that collects data on the results of various actions in an arbitrary situation and uses that data to perform a task. Because the amount of data required for reinforcement learning is so vast, it is common to collect the data through simulations that approximate physical phenomena in the real environment.

In particular, learning-based controllers for walking robots have been trained on data collected in simulation and then applied to real environments, where they have successfully performed walking control on various terrains.

However, since the performance of a learning-based controller degrades rapidly when the actual environment differs from the simulated training environment, it is important to implement an environment similar to the real one in the data collection stage. Therefore, in order to create a learning-based controller that can maintain balance on deforming terrain, the simulator must provide a similar contact experience.

The research team defined a contact model that predicts the force generated upon contact from the motion dynamics of the walking body, based on a ground reaction force model from previous studies that accounts for the added mass effect of granular media.

Furthermore, by calculating the force generated from one or several contacts at each time step, the deforming terrain was efficiently simulated.
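As a rough illustration of what a point-contact force calculation per time step might look like, the sketch below combines a vertical term with an added-mass contribution and a Coulomb friction term in the horizontal plane. It is a toy model, not the team's formulation: the function `contact_force`, the linear depth-dependent added mass, the spring-like static term, and all parameter values are assumptions made for this example.

```python
import math

def contact_force(depth, v_sink, a_sink, v_slide,
                  stiffness=2000.0, added_mass_per_m=5.0, mu=0.6):
    """Toy point-contact force for a foot in granular media.

    depth   : penetration depth in m (contact only when > 0)
    v_sink  : penetration rate in m/s (positive while sinking)
    a_sink  : penetration acceleration in m/s^2
    v_slide : (vx, vy) horizontal sliding velocity in m/s
    Returns (normal_force, (friction_x, friction_y)) in N.
    All coefficients are hypothetical placeholders.
    """
    if depth <= 0.0:
        return 0.0, (0.0, 0.0)

    # Assumed added mass that grows linearly with depth: m_a = c * depth.
    added_mass = added_mass_per_m * depth
    # d/dt (m_a * v) = c * v^2 + m_a * a, applied only while sinking.
    inertial = 0.0
    if v_sink > 0.0:
        inertial = added_mass_per_m * v_sink ** 2 + added_mass * a_sink

    # The ground can push on the foot but not pull it down.
    normal = max(0.0, stiffness * depth + inertial)

    # Coulomb friction: magnitude mu * N, opposing the sliding direction.
    speed = math.hypot(v_slide[0], v_slide[1])
    if speed == 0.0:
        return normal, (0.0, 0.0)
    scale = -mu * normal / speed
    return normal, (scale * v_slide[0], scale * v_slide[1])
```

Evaluating such a function once per foot in contact at each simulation step is one simple way to realize the per-time-step force calculation mentioned above.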

The research team also introduced an artificial neural network structure that implicitly predicts ground characteristics by using a recurrent neural network that analyzes time-series data from the robot's sensors.
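The idea of summarizing a time series of sensor readings in a recurrent hidden state can be sketched as follows. This is a generic Elman-style recurrent cell with random, untrained weights, not the network used in the study; the sizes, the sample readings, and the names `rnn_step` and `encode_history` are assumptions for illustration.

```python
import math
import random

def rnn_step(hidden, reading, W_h, W_x):
    """One Elman-style update: h' = tanh(W_h h + W_x x)."""
    return [
        math.tanh(
            sum(w * h for w, h in zip(row_h, hidden))
            + sum(w * x for w, x in zip(row_x, reading))
        )
        for row_h, row_x in zip(W_h, W_x)
    ]

def encode_history(readings, W_h, W_x):
    """Fold a sequence of sensor readings into one hidden vector."""
    hidden = [0.0] * len(W_h)
    for reading in readings:
        hidden = rnn_step(hidden, reading, W_h, W_x)
    return hidden

rng = random.Random(0)
HIDDEN, SENSORS = 4, 3  # hypothetical sizes
W_h = [[rng.uniform(-0.5, 0.5) for _ in range(HIDDEN)] for _ in range(HIDDEN)]
W_x = [[rng.uniform(-0.5, 0.5) for _ in range(SENSORS)] for _ in range(HIDDEN)]

# Two made-up sensor histories standing in for different ground types.
firm_ground = [[0.0, 0.1, 0.0]] * 5
soft_ground = [[0.3, 0.1, -0.2]] * 5

code_firm = encode_history(firm_ground, W_h, W_x)
code_soft = encode_history(soft_ground, W_h, W_x)
```

Different sensor histories produce different hidden vectors, so a downstream controller that reads the hidden state can, after training, condition its actions on ground properties that are never measured directly.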

The trained controller was mounted on 'RaiBo', a robot built in-house by the research team, and achieved high-speed walking of up to 3.03 m/s on a sandy beach where the robot's feet were completely submerged in the sand. Even on harder ground, such as grassy fields and a running track, it ran stably by adapting to the characteristics of the ground without any additional programming or revision of the control algorithm.

In addition, it rotated with stability at 1.54 rad/s (approximately 90° per second) on an air mattress and demonstrated its quick adaptability even in the situation in which the terrain suddenly turned soft.
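The unit conversions behind the reported figures can be checked with a few lines of arithmetic; the variable names are illustrative only.

```python
import math

yaw_rate_rad_s = 1.54   # turning rate reported on the air mattress
top_speed_m_s = 3.03    # top walking speed reported on the sandy beach

yaw_rate_deg_s = math.degrees(yaw_rate_rad_s)  # rad/s -> degrees/s
top_speed_km_h = top_speed_m_s * 3.6           # m/s -> km/h

print(round(yaw_rate_deg_s, 1))  # 88.2
print(round(top_speed_km_h, 1))  # 10.9
```

The turning rate works out to about 88° per second, consistent with the rounded figure of approximately 90° per second.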

By comparing against a controller that assumed the ground to be rigid, the research team demonstrated the importance of providing a suitable contact experience during the learning process, and showed that the proposed recurrent neural network modifies the controller's walking method according to the ground properties.

The simulation and learning methodology developed by the research team is expected to contribute to robots performing practical tasks as it expands the range of terrains that various walking robots can operate on.

The first author, Suyoung Choi, said, “It has been shown that providing a learning-based controller with a contact experience close to that of real deforming ground is essential for application to deforming terrain.” He added, “The proposed controller can be used without prior information on the terrain, so it can be applied to various robot walking studies.”

This research was carried out with the support of the Samsung Research Funding & Incubation Center of Samsung Electronics.

< Figure 1. Adaptability of the proposed controller to various ground environments. The controller, trained on a wide range of randomized granular media simulations, showed adaptability to various natural and artificial terrains, and demonstrated high-speed walking ability and energy efficiency. >

< Figure 2. Contact model definition for simulation of granular substrates. The research team used a model that considered the additional mass effect for the vertical force and a Coulomb friction model for the horizontal direction while approximating the contact with the granular medium as occurring at a point. Furthermore, a model that simulates the ground resistance that can occur on the side of the foot was introduced and used for simulation. >

2023.01.26 View 8031

KAIST to showcase a pack of KAIST Start-ups at CES 2023

- KAIST is to run an exclusive booth at the Venetian Expo (Hall G) in Eureka Park at CES 2023, to be held in Las Vegas from Thursday, January 5th through Sunday, the 8th.

- Twelve businesses recently founded by KAIST faculty and alumni, along with start-ups licensed to use KAIST technologies, will be showcased.

- Among the participating start-ups, products by Fluiz and Hills Robotics were selected as CES 2023 Innovation Award Honorees, scoring top in their respective categories.

On January 3, KAIST announced that there will be a KAIST booth at the Consumer Electronics Show (CES) 2023, the most influential tech event in the world, to be held in Las Vegas from January 5 to 8.

At this exclusive corner, KAIST will introduce the technologies of KAIST start-ups over the exhibition period.

KAIST first held its exclusive booth at CES 2019 with five start-up businesses, followed by CES 2020 with 12 start-ups and CES 2022 with 10 start-ups. At CES 2023, KAIST’s fourth appearance, KAIST will accompany 12 businesses, including start-ups founded by faculty members and alumni and technology transfer companies that recently launched with standout technologies drawn from research findings.

To maximize the publicity opportunity, KAIST will support each company’s marketing strategy in cooperation with the Korea International Trade Association (KITA), and will provide an opportunity for the school and each start-up to build a global identity and exhibit the excellence of their technologies at the convention.

The following companies will be at the KAIST Booth in Eureka Park:

The twelve start-ups mentioned above aim to achieve global technology commercialization in their respective fields of expertise, spanning eXtended Reality (XR) and gaming, AI and robotics, vehicles and transport, mobile platforms, smart cities, autonomous driving, healthcare, and the Internet of Things (IoT), through joint research and development, technology transfer, and investment attraction from the world’s leading institutions and enterprises.

In particular, Fluiz and Hills Robotics won the CES Innovation Award as 2023 Honorees and are expected to attain greater achievements in the future.

A staff member from the KAIST Institute of Technology Value Creation said, “The KAIST Showcase for CES 2023 has prepared a new pitching space for each of the companies for their own IR efforts, and we hope that KAIST start-ups will actively and effectively market their products and technologies while they are at the convention. We hope they will use their time here to establish their names, which will eventually serve as a good foothold for them and for those who follow to further their global commercialization goals.”

2023.01.04 View 5482

A Quick but Clingy Creepy-Crawler that will MARVEL You

Engineered by KAIST Mechanics, a quadrupedal robot climbs steel walls and crawls across metal ceilings at the fastest speed that the world has ever seen.

< Photo 1. (From left) KAIST ME Prof. Hae-Won Park, Ph.D. Student Yong Um, Ph.D. Student Seungwoo Hong >

- Professor Hae-Won Park's team at the Department of Mechanical Engineering developed a quadrupedal robot that can move at high speed on ferrous walls and ceilings.

- It is expected to be used for inspecting and repairing large steel structures such as ships, bridges, and transmission towers, offering an automated, unmanned alternative to dangerous work in hazardous environments while maintaining productivity and efficiency.

- The study was published as the cover paper of the December issue of Science Robotics.

KAIST (President Kwang Hyung Lee) announced on the 26th that a research team led by Professor Hae-Won Park of the Department of Mechanical Engineering has developed a quadrupedal walking robot that can move at high speed on steel walls and ceilings. The robot is named M.A.R.V.E.L., fittingly so, as it is a Magnetically Adhesive Robot for Versatile and Expeditious Locomotion, as described in the team's paper, “Agile and Versatile Climbing on Ferromagnetic Surfaces with a Quadrupedal Robot” (DOI: 10.1126/scirobotics.add1017).

To make this happen, Professor Park's research team developed a foot pad that can quickly switch its magnetic adhesion on and off while retaining a high adhesive force even on uneven surfaces. The foot pad combines an Electro-Permanent Magnet (EPM), a magnet that can be magnetized and demagnetized with little power, with a Magneto-Rheological Elastomer (MRE), an elastic material made by mixing magnetically responsive particles, such as iron powder, into an elastic material, such as rubber. The team mounted these foot pads on a small quadrupedal robot built in-house in their own laboratory. Such walking robots are expected to be put to a wide variety of uses, including inspection, repair, and maintenance tasks on large steel structures such as ships, bridges, transmission towers, large storage areas, and construction sites.

This study, in which Seungwoo Hong and Yong Um of the Department of Mechanical Engineering participated as co-first authors, was published as the cover paper in the December issue of Science Robotics.

< Image on the Cover of 2022 December issue of Science Robotics >

Existing wall-climbing robots use wheels or endless tracks, so their mobility is limited on surfaces with steps or irregularities. Walking robots for climbing, on the other hand, promise better mobility over obstacles, but have so far been significantly slower or unable to perform a variety of movements.

For a walking robot to move fast, the sole of its foot must have a strong adhesion force and must be able to switch quickly between sticking to the surface and releasing from it. In addition, the adhesion force must be maintained even on a rough or uneven surface.

To solve this problem, the research team used the EPM and MRE for the first time in designing the soles of walking robots. An EPM is a magnet whose magnetic force can be turned on and off with a short current pulse. Unlike general electromagnets, it has the advantage that it does not require energy to maintain the magnetic force. The research team proposed a new EPM with a rectangular structure arrangement, enabling faster switching while significantly lowering the voltage required for switching compared to existing electromagnets.

In addition, by covering the sole with an MRE, the research team was able to increase the frictional force without significantly reducing the magnetic force of the sole. The proposed sole weighs only 169 g, but provides a vertical gripping force of about *535 newtons (N) and a frictional force of 445 N, which is sufficient for a quadrupedal robot weighing 8 kg.

* 535 N is equivalent to the weight of about 54.5 kg, and 445 N to that of about 45.4 kg. In other words, the sole of the foot does not come off the steel plate even if an external load equivalent to up to 54.5 kg in the vertical direction and up to 45.4 kg in the horizontal direction is applied (for example, by hanging a corresponding weight from it).
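The conversion in the footnote can be checked with a few lines of Python. This is only an illustrative sketch: it assumes a gravitational acceleration of about 9.81 m/s², which reproduces the rounded figures quoted above, and all function and variable names are made up for this example. The comparison with the robot's own weight is added for context and looks at a single sole in isolation, which is a simplification of how the robot actually distributes its load.

```python
# Assumed gravitational acceleration in m/s^2 (a rounded value, chosen
# because it reproduces the figures quoted in the article).
G = 9.81


def newtons_to_kg_equivalent(force_n: float) -> float:
    """Return the mass in kg whose weight equals the given force in newtons."""
    return force_n / G


def weight_in_newtons(mass_kg: float) -> float:
    """Return the weight in newtons of the given mass in kg."""
    return mass_kg * G


# Figures quoted in the article for one sole and for the robot.
GRIP_FORCE_N = 535.0      # vertical gripping force of one sole
FRICTION_FORCE_N = 445.0  # frictional force of one sole
ROBOT_MASS_KG = 8.0       # mass of the quadrupedal robot

grip_kg = newtons_to_kg_equivalent(GRIP_FORCE_N)          # about 54.5 kg
friction_kg = newtons_to_kg_equivalent(FRICTION_FORCE_N)  # about 45.4 kg

# Hypothetical comparison: how many times the robot's own weight a single
# sole could hold, ignoring dynamics and load sharing between the feet.
robot_weight_n = weight_in_newtons(ROBOT_MASS_KG)
grip_ratio = GRIP_FORCE_N / robot_weight_n
friction_ratio = FRICTION_FORCE_N / robot_weight_n

print(f"Grip force:     {GRIP_FORCE_N:.0f} N = {grip_kg:.1f} kg equivalent")
print(f"Friction force: {FRICTION_FORCE_N:.0f} N = {friction_kg:.1f} kg equivalent")
print(f"Robot weight:   {robot_weight_n:.2f} N")
print(f"One sole holds {grip_ratio:.1f}x (grip) and {friction_ratio:.1f}x (friction) the robot's weight")
```

Under these assumptions, a single sole holds several times the robot's weight in both directions, which is consistent with the article's statement that the gripping force is sufficient for an 8 kg robot.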

MARVEL climbed a vertical wall at 70 cm per second and walked upside down on a ceiling at up to 50 cm per second, the fastest speeds in the world for a walking climbing robot. The research team also demonstrated that the robot can climb at up to 35 cm per second even on water-tank surfaces that are painted, dusty, and rust-tainted, proving its performance in a real environment. Experiments showed that, beyond its speed, the robot can also transition from floor to wall and from wall to ceiling, and can overcome 5-cm-high obstacles protruding from walls without difficulty.

The new climbing quadrupedal robot is expected to be widely used for inspection, repair, and maintenance of large steel structures such as ships, bridges, transmission towers, oil pipelines, large storage areas, and construction sites. As the work required in these places involves risks such as falls, suffocation, and other accidents that may result in serious injuries or casualties, the need for automation is urgent.

One of the co-first authors of the paper, Ph.D. student Yong Um of KAIST’s Department of Mechanical Engineering, said, “With magnetic soles made of the EPM and MRE and a non-linear model predictive controller suited to climbing, the robot can move quickly over a variety of ferromagnetic surfaces, including walls and ceilings, not just level ground. We believe this will become a cornerstone for expanding the mobility of legged robots and the places they can venture into.” He added, “These robots can be put to good use in carrying out dangerous and difficult tasks on steel structures in places like shipbuilding yards.”

This research was carried out with support from the National Research Foundation of Korea's Basic Research in Science & Engineering Program for Mid-Career Researchers and from Korea Shipbuilding & Offshore Engineering Co., Ltd.

< Figure 1. The quadrupedal robot (MARVEL) walking over various ferrous surfaces: (A) a vertical wall; (B) a ceiling; (C) obstacles on a vertical wall; (D) floor-to-wall and wall-to-ceiling transitions; (E) a storage tank; (F) a wall with a 2-kg weight and a ceiling with a 3-kg load. >

< Figure 2. Description of the magnetic foot. (A) Components of the magnetic sole: ankle, Square Electro-Permanent Magnet (S-EPM), and MRE footpad. (B) Components of the S-EPM and MRE footpad. (C) Working principle of the S-EPM. When the magnetization direction is aligned as shown in the left figure, magnetic flux comes out of the keeper and circulates through the steel plate, generating holding force (ON state). Conversely, if the magnetization direction is aligned as shown in the figure on the right, the magnetic flux circulates inside the S-EPM and the holding force disappears (OFF state). >

Video Introduction: Agile and versatile climbing on ferromagnetic surfaces with a quadrupedal robot - YouTube

2022.12.30 View 8540
