AI

-

KAIST and Merck Sign MOU to Boost Biotech Innovation

< (From left) KAIST President Kwang-Hyung Lee and Merck CEO Matthias Heinzel >

KAIST (President Kwang-Hyung Lee) signed a Memorandum of Understanding (MOU) with Merck Life Science (CEO Matthias Heinzel) on May 29 to foster innovation and technology creation in advanced biotechnology.

Since May of last year, the two institutions have been discussing multidimensional innovation programs and will now focus on industry-academia cooperation to tackle bioindustry challenges with this MOU as a foundation.

KAIST will conduct joint research projects in various advanced biotechnology fields, such as synthetic biology, mRNA, cell line engineering, and organoids, using the chemical and biological portfolios provided by Merck.

Additionally, KAIST will establish an Experience Lab in collaboration with the Department of Materials Science and Engineering and the Graduate School of Medical Science and Engineering. This lab will support the discovery and analysis of candidate substances in materials science and biology.

Programs to enhance researchers' capabilities will also be offered. Scholarships for graduate students and awards for professors will be implemented. Researchers will have opportunities to participate in global academic events and educational programs hosted by Merck, such as the Curious 2024 Future Insight Conference and the Innovation Cup.

M Ventures, a venture capital subsidiary of Merck Group, will collaborate with KAIST's startup institute to support technology commercialization and continue to develop their startup ecosystem.

The signing ceremony at KAIST's main campus in Daejeon was attended by the CEO of Merck Life Science and the President of KAIST along with representatives from both institutions.

Matthias Heinzel, a member of the Executive Board of Merck and CEO Life Science, said, “This agreement with KAIST is a significant step toward accelerating the development of the life science industry both in Korea and globally. Advancing life science research and fostering the next generation of scientists is essential for discovering new medicines to meet global health needs.”

President Kwang-Hyung Lee responded, “We are pleased to share a vision for scientific advancement with Merck, a leading global technology company. We anticipate that this partnership will strengthen the connection between Merck’s life science business and the global scientific community.”

In March, Merck, a global science and technology company with over 350 years of history, announced a plan to invest 430 billion KRW (€300 million) to build a bioprocessing center in Daejeon, where KAIST is located. This is Merck's largest investment in the Asia-Pacific region.

2024.05.30 View 1104

-

NYU-KAIST Global AI & Digital Governance Conference Held

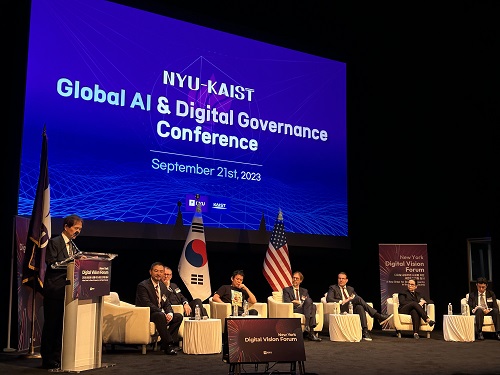

< Photo 1. Opening of NYU-KAIST Global AI & Digital Governance Conference >

With the Minister of Science and ICT Jong-ho Lee, NYU President Linda G. Mills, and KAIST President Kwang Hyung Lee in attendance, KAIST co-hosted the NYU-KAIST Global AI & Digital Governance Conference at the Paulson Center of New York University (NYU) in New York City, USA, at 9:30 pm on September 21.

At the conference, KAIST and NYU discussed the direction and policies for ‘global AI and digital governance’ with up to 300 participants, including scholars, professors, and students working in AI and digitalization from Korea, the United States, and other countries. The conference served as a forum for international discussion that sought new directions for AI and digital technology to take in the future and gathered consensus on regulations.

Following a welcoming address by KAIST President Kwang Hyung Lee and a congratulatory message from the Minister of Science and ICT, Jong-ho Lee, a panel discussion was held. It was moderated by Professor Matthew Liao, a graduate of Princeton and Oxford who currently serves as a professor at NYU and the director of the Center for Bioethics at the NYU School of Global Public Health.

Six prominent scholars took part in the panel discussion. The panel included Professor Kyung-hyun Cho of the NYU Applied Mathematics and Data Science Center, a KAIST graduate who has become a world-class researcher in AI language models, and Professor Jong Chul Ye, the Director of the Promotion Council for Digital Health at KAIST, who is leading innovative research in medical AI in collaboration with major hospitals at home and abroad. Additionally, Professor Luciano Floridi, a founding member of the Yale University Center for Digital Ethics; Professor Shannon Vallor, the Baillie Gifford Professor in the Ethics of Data and Artificial Intelligence at the University of Edinburgh in the UK; Professor Stefaan Verhulst, a Co-Founder and the Director of GovLab’s Data Program at NYU’s Tandon School of Engineering; and Professor Urs Gasser, who is in charge of public policy, governance and innovative technology at the Technical University of Munich, also participated.

Professor Matthew Liao from NYU led the discussion on topics including ways to regulate AI and digital technologies; concerns that deep learning technology being developed for medical purposes could be used in warfare; the scope of responsibility AI scientists should carry in ensuring that AI is used only for benign purposes; the effects of external regulation on AI model developers and the research they pursue; and the lessons that can be learned from regulation in other fields.

During the panel discussion, participants exchanged ideas about a system of standards that could harmonize digital development with regulatory and social ethics. Digital transformation is accelerating technological development at a global level, and there is a looming concern that, while such advancements bring economic vitality, they may create digital divides and problems such as the manipulation of public opinion. Professor Jong Chul Ye of KAIST (Director of the Promotion Council for Digital Health), in particular, emphasized that it is important to find a point of balance that does not hinder advancements rather than opting to enforce strict regulations.

< Photo 2. Panel Discussion in Session at NYU-KAIST Global AI & Digital Governance Conference >

KAIST President Kwang Hyung Lee explained, “At the Digital Governance Forum we had last October, we focused on exploring new governance to solve digital challenges in the time of global digital transition, and this year’s main focus was on regulations.”

“This conference served as an opportunity of immense value as we came to understand that appropriate regulations can be a motivation to spur further developments rather than a hurdle when it comes to technological advancements, and that it is important for us to clearly understand artificial intelligence and consider what should and can be regulated when we are to set regulations on artificial intelligence,” he continued.

Earlier, KAIST signed a cooperation agreement with NYU in June of last year to build a joint campus, and held a plaque presentation ceremony for the KAIST-NYU Joint Campus last September to promote joint research between the two universities. KAIST is currently conducting joint research with NYU in nine fields, including AI and digital research. The KAIST-NYU Joint Campus was conceived with the goal of building an innovative sandbox campus centered on science, technology, engineering, and mathematics (STEM), combining NYU's excellent humanities and arts as well as its basic science and convergence research capabilities with KAIST's science and technology.

KAIST has contributed to the development of Korea's industry and economy through technological innovation, aiding the nation’s transformation into an innovative country with scientific and technological prowess. KAIST will now pursue an anchor/base strategy to raise awareness of KAIST in New York through the NYU Joint Campus by establishing a KAIST campus within the campus of NYU, in the heart of New York.

2023.09.22 View 3704

-

MVITRO Co., Ltd. Signs to Donate KRW 1 Billion as Development Fund toward KAIST-NYU Joint Campus

KAIST (President Kwang Hyung Lee) announced on the 29th that it has secured a development fund of KRW 1 billion from MVITRO (CEO Young Woo Lee) for joint research at the KAIST-NYU Joint Campus, which is being pursued as KAIST's first campus in the United States.

KAIST plans to use this development fund for research and development of various solutions in the field of 'Healthcare at Home', one of several joint research projects being conducted with New York University (hereinafter referred to as NYU).

Young Woo Lee, the CEO of MVITRO, said, "We decided to make the donation with the hope that the KAIST-NYU Joint Campus will become an ecosystem that would help with Korean companies’ advancement into the US."

After announcing its plans to enter New York in 2021, KAIST formed partnerships with NYU and New York City last year. Currently, NYU and KAIST are devising plans for mid- to long-term joint research in nine fields of study, including AI and bio-medicine and technology, and are promoting cooperation in education, including exchange students, minors, double majors, and joint degrees, under the joint campus agreement.

The signing ceremony for MVITRO Co., Ltd.’s donation was held at the main campus of KAIST on the afternoon of the 29th and was attended by KAIST officials including President Kwang Hyung Lee and Jae-Hung Han, the executive director of the KAIST Development Foundation, along with NYU President-Designate Linda G. Mills and the CEO of MVITRO, Young Woo Lee.

< Photo. (from left) Kwang Hyung Lee, the President of KAIST, Linda G. Mills, the President-Designate of NYU, and Young Woo Lee, the CEO of MVITRO, pose for the photo with the signed letter of donation on May 29, 2023 at KAIST >

Linda G. Mills, the President-Designate of NYU, said, “I am proud to join our colleagues in celebrating this important gift from MVITRO, which will help support the partnership between KAIST and NYU. This global partnership leverages the distinctive strengths of both universities to drive advances in research poised to deliver profound impact, such as the intersections of healthcare, technology, and AI.”

President Kwang Hyung Lee said, "The KAIST-NYU Joint Campus will be the first step in extending KAIST's excellent science and technology capabilities to the international stage and will serve as a bridgehead to help excellent technological advancements venture into the United States." Then, President Lee added, "I would like to express my gratitude to MVITRO for sympathizing with this vision. I will work with NYU to lead the creation of global values."

MVITRO Co., Ltd. is a home medical device maker that collaborated with Hyundai Futurenet Co., Ltd. to develop an IoT product combining a painless laser lancet (blood collector) and a blood glucose meter into one device for convenient at-home health support. The product received favorable reviews from overseas buyers at CES 2023.

2023.05.30 View 4145

-

KAIST Holds 2023 Commencement Ceremony

< Photo 1. On the 17th, KAIST held the 2023 Commencement Ceremony for a total of 2,870 students, including 691 doctoral graduates. >

KAIST held its 2023 commencement ceremony at the Sports Complex of its main campus in Daejeon at 2 p.m. on February 17. It was the first commencement ceremony to invite all its graduates since the start of COVID-19 quarantine measures.

KAIST awarded a total of 2,870 degrees: 691 PhD degrees, 1,464 master’s degrees, and 715 bachelor’s degrees. These add to the cumulative total of 74,999 degrees KAIST has conferred since its foundation in 1971, which comprises 15,772 PhD, 38,360 master’s, and 20,867 bachelor’s degrees.
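As a quick consistency check on the figures above, the short sketch below sums the reported breakdowns and compares them with the stated totals. The dictionary and function names are illustrative only; the numbers are taken directly from the article.

```python
# Degree counts exactly as reported in the article.
degrees_2023 = {"phd": 691, "masters": 1464, "bachelors": 715}
degrees_cumulative = {"phd": 15772, "masters": 38360, "bachelors": 20867}


def total(counts):
    """Return the sum of all degree counts in a breakdown."""
    return sum(counts.values())


# Compare each breakdown with the total stated in the text.
print(total(degrees_2023) == 2870)         # breakdown for the 2023 ceremony
print(total(degrees_cumulative) == 74999)  # breakdown of the cumulative figure
```

Both comparisons hold, so the per-level counts in the article agree with the totals it states.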

This year’s Cum Laude, Gabin Ryu from the Department of Mechanical Engineering, received the Minister of Science and ICT Award. Seung-ju Lee from the School of Computing received the Chairman of the KAIST Board of Trustees Award, and Jantakan Nedsaengtip, an international student from Thailand, received the KAIST Presidential Award. Jaeyong Hwang from the Department of Physics and Junmo Lee from the Department of Industrial and Systems Engineering received the President of the Alumni Association Award and the Chairman of the KAIST Development Foundation Award, respectively.

Minister Jong-ho Lee of the Ministry of Science and ICT awarded the recipients of the academic awards and delivered a congratulatory speech.

Yujin Cha from the Department of Bio and Brain Engineering, who received his PhD 19 years after entering KAIST as an undergraduate student in 2004, delivered a speech on behalf of the graduates, aiming to move and inspire the graduates and the guests.

After Cha received a bachelor’s degree from the Department of Nuclear and Quantum Engineering, he entered a medical graduate school and became a radiation oncology specialist. But after experiencing the death of a young patient who suffered from osteosarcoma, he returned to his alma mater to become a scientist. As he believes that science and technology is the ultimate solution to the limitations of modern medicine, he started as a PhD student at the Department of Bio and Brain Engineering in 2018, hoping to find such solutions.

During his course, he identified the characteristics of the decision-making process of doctors during diagnosis, and developed a brain-inspired AI algorithm. It is an original and challenging study that attempted to develop a fundamental machine learning theory from the data he collected from 200 doctors of different specialties.

Cha said, “Humans and AI can cooperate: humans can use the unique learning abilities of AI to develop our expertise, while AI can mimic human learning abilities to improve.” He added, “My ultimate goal is to develop technology to a level at which humans and machines influence each other and ‘coevolve’, and to apply it not only to medicine but to all areas.”

Cha, who is currently an assistant professor at the KAIST Biomedical Research Center, also wrote Artificial Intelligence for Doctors in 2017 to help medical personnel use AI in clinical fields, and the book was selected as one of the 2018 Sejong Books in the academic category.

During his speech at this year’s commencement ceremony, he shared that “there are so many things in the world that are difficult to solve and many things to solve them with, but I believe the things that can really broaden the horizons of the world and find fundamental solutions to the problems at hand are science and technology.”

Meanwhile, singer-songwriter Sae Byul Park, who studied at the KAIST Graduate School of Culture Technology, also received her PhD degree.

Natural language processing (NLP) is an actively studied field of AI that teaches computers to understand and analyze human language. An example of NLP is ChatGPT, which recently received a lot of attention. For her research, Park analyzed music rather than language using NLP technology.

To analyze music, which is in the form of sound, using the methods for NLP, it is necessary to rebuild notes and beats into a form of words or sentences as in a language. For this, Park designed an algorithm called Mel2Word and applied it to her research.

She also suggested that by converting melodies into texts for analysis, one would be able to quantitatively express music as sentences or words with meaning and context rather than as simple sounds representing a certain note.
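The general idea of turning a melody into text can be illustrated with a minimal sketch. This is not the Mel2Word algorithm itself, whose details are not given here; it assumes a hypothetical encoding in which each note-to-note transition becomes a token (pitch interval in semitones plus a marker for whether the next note is longer, shorter, or equal in duration), and consecutive tokens are joined into "words" that can then be counted like vocabulary in a text.

```python
from collections import Counter


def melody_to_tokens(notes):
    """Encode a melody as transition tokens (hypothetical scheme).

    notes: list of (midi_pitch, duration_in_beats) tuples.
    Each token is the signed pitch interval followed by L, S, or E,
    meaning the next note is longer, shorter, or equal in duration.
    """
    tokens = []
    for (p0, d0), (p1, d1) in zip(notes, notes[1:]):
        if d1 > d0:
            rhythm = "L"
        elif d1 < d0:
            rhythm = "S"
        else:
            rhythm = "E"
        tokens.append(f"{p1 - p0:+d}{rhythm}")
    return tokens


def tokens_to_words(tokens, size=2):
    """Join each run of `size` consecutive tokens into one 'word'."""
    return ["_".join(tokens[i:i + size])
            for i in range(len(tokens) - size + 1)]


# C-D-E-C played twice, every note one beat long.
melody = [(60, 1), (62, 1), (64, 1), (60, 1)] * 2
tokens = melody_to_tokens(melody)
words = tokens_to_words(tokens)
print(tokens)
print(Counter(words).most_common(2))
```

Once a melody is expressed as such words, standard text-analysis tools such as frequency counts or similarity measures can be applied to it, which is the kind of quantitative treatment the research describes.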

Park said, “Music has always been considered as a product of subjective emotion, but this research provides a framework that can calculate and analyze music.”

Park’s study can later be developed into a tool to measure the similarities between musical works, as well as a piece’s originality, artistry and popularity, and it can be used as a clue to explore the fundamental principles of how humans respond to music from a cognitive science perspective.

Park began her Ph.D. program in 2014 while continuing her musical activities and giving public and university lectures, and while going through major personal events including marriage and childbirth. She had already met the requirements to receive her degree in 2019, but delayed her graduation in order to further refine her research, and finally graduated with her current achievements after nine years.

Professor Juhan Nam, who supervised Park’s research, said, “Park, who has a bachelor’s degree in psychology, later learned to code for graduate school, and has completed high-quality research in the field of artificial intelligence.” He added, “Though it took a long time, her attitude of not giving up until the end as a researcher is also excellent.”

Sae Byul Park is currently teaching courses titled Culture Technology and Music Information Retrieval at the Underwood International College of Yonsei University.

Park said, “The 10 or so years I’ve spent at KAIST as a graduate student were a time in which I could learn and prosper not only academically but in all aspects of life.” She added, “Having received a doctorate degree is not the end, but a ‘commencement’. Therefore, I will start to root deeper from the seeds I sowed and work harder as both a scholar and an artist.”

< Photo 2. (From left) Yujin Cha (Valedictorian, Medical-Scientist Program Ph.D. graduate), Sae Byul Park (a singer-songwriter, Ph.D. graduate from the Graduate School of Culture Technology), Jun-seok Moon and Inah Seo (the two highlighted CEO graduates from the Department of Management Engineering's master’s program) >

Young entrepreneurs who dream of solving social problems will also be wearing their graduation caps. Two such graduates are Jun-seok Moon and Inah Seo, receiving their master’s degrees in social entrepreneurship MBA from the KAIST College of Business.

Before enrolling, Moon ran a café helping African refugees stand on their own feet. He then entered KAIST to expand his business and learn social entrepreneurship in order to sustainably help refugees in the blind spots of human rights and welfare.

During his master’s course, Moon realized that he could achieve active carbon reduction by changing the coffee alone, and switched his business field and founded Equal Table. The amount of carbon an individual can reduce by refraining from using a single paper cup is 10g, while changing the coffee itself can reduce it by 300g.

One kilogram of coffee emits 15 kg of carbon over the course of its production, distribution, processing, and consumption, but Moon produces nearly carbon-neutral coffee beans by innovating the entire process. In particular, the company-to-company ESG business solution is Moon’s new start-up area. It provides companies with carbon-reduced coffee made by roasting raw beans from carbon-neutral certified farms with 100% renewable energy, and shows how much carbon has been reduced in its making. Equal Table will launch the service this month in collaboration with SK Telecom, its first partner.
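The carbon figures quoted for Equal Table can be related to one another with simple arithmetic. The sketch below is a rough illustration only: it assumes the 300 g saving corresponds to removing the full footprint of the coffee in one serving, which the article does not state, and the constant and function names are hypothetical.

```python
# Figures quoted in the article.
PAPER_CUP_SAVING_G = 10          # carbon saved by skipping one paper cup
COFFEE_SWITCH_SAVING_G = 300     # carbon saved by changing the coffee itself
EMISSION_KG_PER_KG_COFFEE = 15   # carbon emitted per kilogram of coffee


def paper_cups_equivalent():
    """How many skipped paper cups match one coffee switch."""
    return COFFEE_SWITCH_SAVING_G / PAPER_CUP_SAVING_G


def implied_coffee_per_serving_g():
    """Coffee mass per serving implied by the figures.

    Assumption (not from the article): the 300 g saving equals the
    entire footprint of the coffee used in one serving. Since 15 kg of
    carbon per kg of coffee is 15 g of carbon per gram of coffee, the
    implied mass is the saving divided by 15.
    """
    return COFFEE_SWITCH_SAVING_G / EMISSION_KG_PER_KG_COFFEE


print(paper_cups_equivalent())         # 30.0
print(implied_coffee_per_serving_g())  # 20.0
```

Under that assumption, switching the coffee in one serving saves as much carbon as skipping 30 paper cups, and the figures are consistent with roughly 20 g of coffee per serving.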

Inah Seo, who also graduated with Moon, founded Conscious Wear to start a fashion business reducing environmental pollution. In order to realize her mission, she felt the need to gain the appropriate expertise in management, and enrolled for the social entrepreneurship MBA.

Out of the various fashion industries, Seo focused on the leather market, which is worth 80 trillion won. Due to thickness or contamination issues, only about 60% of animal skin fabric is used, and the rest is discarded. Heavy metals are used during such processes, which also directly affects the environment.

During the social entrepreneurship MBA course, Seo collaborated with SK Chemicals, which had links through the program, and launched eco-friendly leather bags. The bags used discarded leather that was recycled by grinding and reprocessing into a biomaterial called PO3G. It was the first case in which PO3G that is over 90% biodegradable was applied to regenerated leather. In other words, it can reduce environmental pollution in the processing and disposal stages, while also reducing carbon emissions and water usage by one-tenth compared to existing cowhide products.

The social entrepreneurship MBA course, from which Moon and Seo graduated, will run in integration with the Graduate School of Green Growth as an Impact MBA program starting this year. KAIST plans to steadily foster entrepreneurs who will lead meaningful changes in the environment and society as well as economic values through innovative technologies and ideas.

< Photo 3. NYU President Emeritus John Sexton (left), who received this year's honorary doctorate of science, poses with President Kwang Hyung Lee >

Meanwhile, at the same commencement ceremony, KAIST presented President Emeritus John Sexton of New York University with an honorary doctorate in science. He was recognized for laying the foundation for cooperation between KAIST and New York University, including the promotion of a joint campus.

< Photo 4. President Kwang Hyung Lee encourages the graduates in his commencement address at the KAIST commencement ceremony held on the 17th. >

President Kwang Hyung Lee emphasized in his commencement speech that, “if you can draw up the future and work hard toward your goal, the future can become a work of art that you create with your own hands,” and added, “Never stop on the journey toward your dreams, and do not give up even when you are met with failure. Failure happens to everyone, all the time. The important thing is to know 'why you failed', and to use those elements of failure as the driving force for the next try.”

2023.02.20 View 9469

KAIST confers Honorary Doctorate of Science on NYU President Emeritus John Edward Sexton

< Photo 1. NYU President Emeritus John Edward Sexton posing with KAIST President Kwang Hyung Lee holding the Honorary Doctorate at the KAIST Commencement Ceremony >

KAIST (President Kwang Hyung Lee) announced that it conferred an honorary doctorate of science degree on NYU President Emeritus John Edward Sexton at the Commencement Ceremony held on the 17th.

An official from KAIST explained, "KAIST is conferring an honorary doctorate for President Sexton's longstanding leadership in higher education, and for his contributions to the process of establishing the groundwork for collaboration with NYU through which KAIST is to become a leading global value-creating university."

President Emeritus Sexton served as the president of NYU from 2002 to 2015, establishing two degree-granting campuses and several global academic sites of NYU around the world. During that period, NYU rose steadily in university rankings, with its medical school earning the number two position in the United States, and joined the ranks of first-class universities. It also achieved remarkable growth, with the number of students increasing from 29,000 to 60,000.

President Emeritus Sexton also successfully expanded fundraising to support the University’s academic goals. During his 14-year tenure as president, he organized initiatives such as 'Raise $1 Million Every Day' and 'Call to Action' and raised $4.9 billion in donations, the largest amount in NYU history to date.

President Emeritus Sexton is known for teaching full time throughout his presidency and for his special care for students, addressing the members of the school as “family”. In particular, he is famous for hugging every graduate at the commencement ceremony. Minister Park Jin of the Ministry of Foreign Affairs of Korea, who graduated from NYU School of Law in 1999 with a Master of Studies in Law, is one of the graduates who received President Sexton's hug.

President Emeritus Sexton, born in 1942, visited KAIST on the 17th to receive the honorary doctorate and to encourage the expedited development of the KAIST-NYU Joint Campus, for which he helped lay the foundation.

President Emeritus Sexton said, "I like the slogan, 'Onward and upward together,'" and added, "I look forward to having the two universities achieve their shared vision of becoming the world-class universities together through cooperation to establish the KAIST-NYU Joint Campus."

< Photo 2. NYU President Emeritus John Edward Sexton giving the acceptance speech at the KAIST Commencement Ceremony >

The US Ambassador to Korea, the Honorable Philip Goldberg, also attended the commencement ceremony at KAIST to congratulate President Emeritus Sexton on the conferment of the honorary doctorate. Ambassador Goldberg has been serving as the US Ambassador to Korea since July of last year.

President Kwang Hyung Lee said, “President Emeritus Sexton was a president best described as an innovator who promoted diversity in education and pursued academic excellence throughout his life.” He went on to say, “The KAIST-NYU Joint Campus, which will be completed on the foundation laid by President Emeritus Sexton, will serve as the focal point that will attract global talents flooding into New York by the driving force created from the synergy of the two universities as well as serving as a starting point for KAIST's outstanding talents to pursue their dreams toward the world.”

KAIST signed a cooperation agreement with NYU in June of 2022 to build a joint campus, and held a presentation of signage for the KAIST-NYU Joint Campus in September. Currently, about 60 faculty members are planning to begin joint research initiatives in seven fields, including robotics, AI, brain sciences, and climate change. In addition, cooperation in the field of education, including student exchange, minors, double majors, and joint degrees, is under discussion.

2023.02.17 View 4886

UAE Space Program Leaders Named First Honorees of KAIST Alumni Association's Special Recognition for International Graduates

The KAIST Alumni Association (Chairman, Chil-Hee Chung) announced on the 12th that the winners of the 2023 KAIST Distinguished Alumni Award and International Alumni Award have been selected.

The KAIST Distinguished Alumni Award, first presented in 1992, is given to alumni who have contributed to the development of the nation and society, or who have brought honor to their alma mater through outstanding academic achievements and contributions to society or their communities.

Notably, a new honor was added this year: the KAIST Distinguished International Alumni Award. It honors and encourages overseas alumni who are making their mark in the international community, raising KAIST's standing in the global setting and serving as a bridge for Korea's future international efforts.

As of 2022, more than 1,700 international students have earned KAIST degrees, and they are active in their home countries as leaders in fields ranging from science and technology to politics, industry, and other sectors of society.

(From left) Omran Sharaf, the Assistant Minister of UAE Foreign Affairs and International Cooperation for Advanced Science and Technology; Amer Al Sayegh, the Director General of Space Project at MBRSC; and Mohammed Al Harmi, the Director General of Administration at MBRSC (Photos courtesy of MBRSC)

To celebrate and honor such achievements, the KAIST Alumni Association selected a team of three alumni from the United Arab Emirates (UAE) as the first recipients of the Distinguished International Alumni Award. The honorees are Omran Sharaf, a master’s graduate of the Graduate School of Science and Technology Policy, and Amer Al Sayegh and Mohammed Al Harmi, master’s graduates of the Department of Aerospace Engineering. All three are from the class of 2013 and hold leading positions in the UAE space program, advancing the country's science and technology.

Currently, all three hold director-level positions at the Mohammed Bin Rashid Space Centre (MBRSC). Mr. Omran Sharaf, who was recently appointed Assistant Minister in charge of Advanced Science and Technology at the UAE Ministry of Foreign Affairs and International Cooperation, is the Project Director of MBRSC's Emirates Mars Mission. Mr. Amer Al Sayegh is the Director General in charge of Space Project, and Mr. Mohammed Al Harmi is the Director General of Administration.

They received technology transfer from “SatRec I”, Korea's first satellite system exporter and a KAIST alumni company, for about 10 years starting in 2006, while pursuing their master’s studies at the same time.

Afterwards, they returned to the UAE to lead the Emirates Mars Mission. Their work is already showing tangible results, including the successful launch of the Mars probe "Amal" (الأمل, meaning ‘Hope’ in Arabic), which was the first in the Arab world and the fifth in the world to successfully enter orbit around Mars, and "KhalifaSat", the UAE’s first independently developed Earth observation satellite.

An official from the KAIST Alumni Association said, "We selected the Distinguished International Alumni after evaluating their industrious leadership in promoting various space industry strategies, ranging from the development of Mars probes and Earth observation satellites, as well as lunar exploration, asteroid exploration, and Mars residence plans."

(From left) Joo-Sun Choi, President & CEO of Samsung Display Co. Ltd., Jung Goo Cho, the CEO of Green Power Co. Ltd., Jong Seung Park, the President of Agency for Defense Development (ADD), Kyunghyun Cho, Professor of New York University (NYU)

In addition, four Korean graduates were selected as winners of the “Distinguished Alumni Award”: Joo-Sun Choi, the CEO of Samsung Display; Jung Goo Cho, the CEO of Green Power Co. Ltd.; Jong Seung Park, the President of the Agency for Defense Development (ADD); and Kyunghyun Cho, a Professor at New York University (NYU).

Mr. Joo-Sun Choi (Electrical and Electronic Engineering, M.S. in 1989, Ph.D. in 1995), the CEO of Samsung Display, led the successful development and mass production of the world's first ultra-high-definition QD-OLED displays, preemptively transformed the industry's business structure, and continues to lead the way in technological innovation.

Mr. Jung Goo Cho (Electrical and Electronic Engineering, M.S. in 1988, Ph.D. in 1992), the CEO of Green Power Co. Ltd., developed wireless power technology for the first time in Korea in the early 2000s and applied it to semiconductor and display production lines. He has since led wireless charging technology in various fields, including developing KAIST On-Line Electric Vehicles (OLEV) and commercializing the world's first 11kW wireless charger for electric vehicles.

Mr. Jong Seung Park (Mechanical Engineering, M.S. in 1988, Ph.D. in 1991), the President of ADD, is an expert with extensive science and technology knowledge and organizational management capabilities. He is contributing greatly to national defense and security through science and technology.

Mr. Kyunghyun Cho (Computer Science, B.S. in 2009), a Professor of Computer Science and Data Science at NYU, is a world-renowned expert in artificial intelligence (AI). He advanced the concept of 'Neural Machine Translation' in the field of natural language processing, contributing greatly to AI translation technology and related industries.

Chairman Chil-Hee Chung, the 26th Chair of the KAIST Alumni Association, said, “As each year goes by, I feel that the influence of KAIST alumni goes beyond science and technology to affect our society as a whole.” He went on to say, “This year, as it was more meaningful to extend the award to honor the international members of our Alums, we look forward to seeing more of our alumni continuing their social and academic endeavors to play an active role in the global stage in taking on the global challenges.”

The award ceremony for the KAIST Distinguished Alumni and International Alumni Award honorees will take place at the KAIST Alumni Association's 2023 Annual New Year’s Event, to be held on Friday, January 13th, at the Grand InterContinental Seoul Parnas.

2023.01.12 View 7446

UAE Space Program Leaders named to be the 1st of the honorees of KAIST Alumni Association's special recognition for graduates of foreign nationality

The KAIST Alumni Association (Chairman, Chil-Hee Chung) announced on the 12th that the winners of the 2023 KAIST Distinguished Alumni Award and International Alumni Award has been selected.

The KAIST Distinguished Alumni Award, which produced the first recipient in 1992, is an award given to alumni who have contributed to the development of the nation and society, or who have glorified the honor of their alma mater with outstanding academic achievements and social and/or communal contributions.

On a special note, this year, there has been an addition to the honors, “the KAIST Distinguished International Alumni Award” to honor and encourage overseas alumni who are making their marks in the international community that will boost positive recognition of KAIST in the global setting and will later become a bridge that will expedite Korea's international efforts in the future.

As of 2022, the number of international students who succeeded in earning KAIST degrees has exceeded 1,700, and they are actively doing their part back in their home countries as leaders in various fields in which they belong, spanning from science and technology, to politics, industry and other corners of the society.

(From left) Omran Sharaf, the Assistant Minister of UAE Foreign Affairs and International Cooperation for Advanced Science and Technology, Amer Al Sayegh the Director General of Space Project at MBRSC, and Mohammed Al Harmi the Director General of Administration at MBRSC (Photos provided by the courtesy of MBRSC)

To celebrate and honor their outstanding achievements, the KAIST Alumni Association selected a team of three alumni of the United Arab Emirates (UAE) to receive the Distinguished International Alumni Award for the first time. The named honorees are Omran Sharaf, a master’s graduate from the Graduate School of Science and Technology Policy, and Amer Al Sayegh and Mohammed Al Harmi, master’s graduates of the Department of Aerospace Engineering - all three of the class of 2013 in leading positions in the UAE space program to lead the advancement of the science and technology of the country.

Currently, the three alums are in directorship of the Mohammed Bin Rashid Space Centre (MBRSC) with Mr. Omran Sharaf, who has recently been appointed as the Assistant Minister in charge of Advanced Science and Technology at the UAE Ministry of Foreign Affairs and International Cooperation, being the Project Director of the Emirates Mars Mission of MBRSC and Mr. Amer Al Sayegh in the Director General position in charge of Space Project and Mr. Mohammed Al Harmi, the Director General of Administration, at MBRSC.

They received technology transfer from “SatRec I”, Korea's first satellite system exporter and KAIST alumni company, for about 10 years from 2006, while carrying out their master’s studies at the same time.

Afterwards, they returned to UAE to lead the Emirates Mars Mission, which is already showing tangible progress including the successful launch of the Mars probe "Amal" (ال امل, meaning ‘Hope’ in Arabic), which was the first in the Arab world and the fifth in the world to successfully enter into orbit around Mars, and the UAE’s first independently developed Earth observation satellite "KhalifaSat".

An official from the KAIST Alumni Association said, "We selected the Distinguished International Alumni after evaluating their industrious leadership in promoting various space industry strategies, ranging from the development of Mars probes and Earth observation satellites, as well as lunar exploration, asteroid exploration, and Mars residence plans."

(From left) Joo-Sun Choi, President & CEO of Samsung Display Co. Ltd., Jung Goo Cho, the CEO of Green Power Co. Ltd., Jong Seung Park, the President of Agency for Defense Development (ADD), Kyunghyun Cho, Professor of New York University (NYU)

Also, four of the Korean graduates, Joo-Sun Choi, the CEO of Samsung Display, Jung Goo Cho, the CEO of Green Power Co. Ltd., Jong Seung Park, the President of Agency for Defense Development (ADD), and Kyunghyun Cho, a Professor of New York University (NYU), were selected as the winners of the “Distinguished Alumni Award”.

Mr. Joo-Sun Choi (Electrical and Electronic Engineering, M.S. in 1989, Ph.D. in 1995), the CEO of Samsung Display, led the successful development and mass-production of the world's first ultra-high-definition QD-OLED Displays, and preemptively transformed the structure of business of the industry and has been leading the way in technological innovation.

Mr. Jung Goo Cho (Electrical and Electronic Engineering, M.S. in 1988, Ph.D. in 1992), the CEO of Green Power Co. Ltd., developed wireless power technology for the first time in Korea in the early 2000s and applied it to semiconductor/display lines and led the wireless power charging technology in various fields, such as developing KAIST On-Line Electric Vehicles (OLEV) and commercializing the world's first wireless charger for 11kW electric vehicles.

Mr. Jong Seung Park (Mechanical Engineering, M.S. in 1988, Ph.D., in 1991), The President of ADD is an expert with abundant science and technology knowledge and organizational management capabilities. He is contributing greatly to national defense and security through science and technology.

Mr. Kyunghyun Cho (Computer Science, B.S., in 2009), the Professor of Computer Science and Data Science at NYU, is a world-renowned expert in Artificial Intelligence (AI), advancing the concept of 'Neural Machine Translation' in the field of natural language processing, to make great contributions to AI translation technology and related industries.

Chairman Chil-Hee Chung, the 26th Chair of the KAIST Alumni Association, said, “As each year goes by, I feel that the influence of KAIST alumni goes beyond science and technology to affect our society as a whole.” He went on to say, “This year was all the more meaningful because we extended the award to honor our international alumni. We look forward to seeing more of our alumni continue their social and academic endeavors and play an active role on the global stage in taking on global challenges.”

The ceremony for the KAIST Distinguished Alumni and International Alumni Award honorees will take place at the KAIST Alumni Association’s 2023 Annual New Year’s Event on Friday, January 13, at the Grand InterContinental Seoul Parnas.

2023.01.12 View 7446 -

2022 Global Startup Internship Fair (GSIF)

From November 30 to December 1, 2022, the Center for Global Strategies and Planning at KAIST held the 2022 Global Startup Internship Fair (GSIF) both online and offline.

Eleven startups joined the fair: the globally recognized unicorn companies PsiQuantum and Moloco, along with ImpriMed, Vessel AI, Genedit, Medic Life Sciences, Bringko, Brave Turtles, Neozips, Luckmon, and CUPIX. Among the eleven invited companies, six were founded by KAIST alumni. The invited companies sought student interns in the fields of AI, biotechnology, quantum, logistics, games, advertising, real estate, and e-commerce. In response, about 100 KAIST students from various backgrounds expressed interest in the event through pre-registration.

Participating companies introduced themselves and conducted recruitment and career counseling sessions with KAIST students. Sungwon Lim, the CEO of ImpriMed and a KAIST alumnus, said, “It was very meaningful to introduce ImpriMed to junior students and share my experiences that I gained while pioneering and operating startups in the United States.” Lim was invited as an offline speaker at the event to share his journey as a global startup CEO.

< ImpriMed CEO, Sungwon Lim >

In addition to the recruiting sessions, the fair held information sessions offering guidelines and useful tips on seeking opportunities overseas, including information on obtaining a J1 visa, applying to U.S. internships, relocating to Silicon Valley, and writing CVs, cover letters, and business emails.

Professor Man-Sung Yim, the Associate Vice President of the International Office at KAIST, stressed, “A growing number of students at KAIST want to become global entrepreneurs, and hands-on experience gained from U.S. startups is absolutely necessary to achieve their goals.” He added, “The 2022 GSIF was one of those opportunities for KAIST students to further their dream of becoming global leaders.”

2022.12.01 View 3589 -

Anonymous Donor Makes a Gift of Property Valued at 30 Billion KRW

The KAIST Development Foundation announced on May 9 that an anonymous donor in his 50s made a gift of real estate valued at 30 billion KRW. This is the first donation from an anonymous benefactor on such a grand scale. The benefactor expressed his wish to fund scholarships for students in need and R&D for medical and bio sciences.

According to a Development Foundation official, the benefactor said that he felt burdened by earning much more than he needed and was looking for the right way to share his assets. The benefactor declined to hold an official donation ceremony or to meet with high-level university administrators.

The donor believes that KAIST is filled with young and dynamic energy, saying, “I would like to help KAIST move forward and create breakthroughs that will benefit the nation as well as all humanity.”

Before making up his mind to give his assets to KAIST, he had planned to establish his own social foundation, but he changed his mind. “I decided that an investment in education would be the best investment,” he said. He explained that he was inspired by a friend, a KAIST graduate who runs a company. He was deeply motivated to help KAIST after witnessing the passion with which his friend conducted his business.

After receiving the gift, KAIST President Kwang Hyung Lee thanked the benefactor for his full support and trust, saying, “We will spare no effort to foster next-generation talents and advance science and technology in the field of biomedicine.”

2022.05.11 View 3231 -

KAA Recognizes 4 Distinguished Alumni of the Year

The KAIST Alumni Association (KAA) recognized four distinguished alumni of the year during a ceremony on February 25 in Seoul. The four Distinguished Alumni Awardees are Distinguished Professor Sukbok Chang from the KAIST Department of Chemistry, Hyunshil Ahn, head of the AI Economy Institute and an editorial writer at The Korea Economic Daily, CEO Hwan-ho Sung of PSTech, and President Hark Kyu Park of Samsung Electronics.

Distinguished Professor Sukbok Chang, who received his MS from the Department of Chemistry in 1985, has been a pioneer in the novel field of ‘carbon-hydrogen bond activation reactions’. He has significantly contributed to raising Korea’s international reputation in natural sciences and received the Kyungam Academic Award in 2013, the 14th Korea Science Award in 2015, the 1st Science and Technology Prize of Korea Toray in 2018, and the Best Scientist/Engineer Award Korea in 2019. Furthermore, he was named a Highly Cited Researcher, ranking in the top 1% of citations by field and publication year in the Web of Science citation index for seven consecutive years from 2015 to 2021, demonstrating his leadership as a global scholar.

Hyunshil Ahn, a graduate of the School of Business and Technology Management with an MS in 1985 and a PhD in 1987, was appointed as the first head of the AI Economy Institute when The Korea Economic Daily became the first Korean media outlet to establish an AI economy lab. He has contributed to creating new roles for the press and media in the 4th industrial revolution, and has helped popularize AI technology through regulation reform and consulting on industrial policies.

PSTech CEO Hwan-ho Sung is a graduate of the School of Electrical Engineering where he received an MS in 1988 and a PhD in EMBA in 2008. He has run the electronics company PSTech for over 20 years and successfully localized the production of power equipment, which previously depended on foreign technology. His development of the world’s first power equipment that can be applied to new industries including semiconductors and displays was recognized through this award.

Samsung Electronics President Hark Kyu Park graduated from the School of Business and Technology Management with an MS in 1986. He not only enhanced Korea’s national competitiveness by expanding the semiconductor industry, but also established contract-based semiconductor departments at Korean universities including KAIST, Sungkyunkwan University, Yonsei University, and Postech, and semiconductor track courses at KAIST, Sogang University, Seoul National University, and Postech to nurture professional talents. He also led the national semiconductor coexistence system through private sector-government-academia collaborations to strengthen competence in semiconductors, and continues to make unconditional investments in strong small businesses.

KAA President Chilhee Chung said, “Thanks to our alumni contributing at the highest levels of our society, the name of our alma mater shines brighter. As role models for our younger alumni, I hope greater honours will follow our awardees in the future.”

2022.03.03 View 4740
