guides


KAIST debuts “DreamWaQer” - a quadrupedal robot that can walk in the dark

- The team led by Professor Hyun Myung of the School of Electrical Engineering developed “DreamWaQ”, a deep reinforcement learning-based control technology that enables robots to walk in atypical environments without visual or tactile information

- Utilization of “DreamWaQ” technology can enable mass production of various types of “DreamWaQers”

- Expected to be used for exploring atypical environments under unusual circumstances, such as fire disasters

A team of Korean engineering researchers has developed a quadrupedal robot technology that can climb up and down steps and move over uneven terrain such as tree roots without falling over. It does so without the help of visual or tactile sensors, even in disaster situations where vision is impeded by darkness or thick smoke from flames.

KAIST (President Kwang Hyung Lee) announced on the 29th of March that Professor Hyun Myung's research team at the Urban Robotics Lab in the School of Electrical Engineering developed a walking robot control technology that enables robust 'blind locomotion' in various atypical environments.

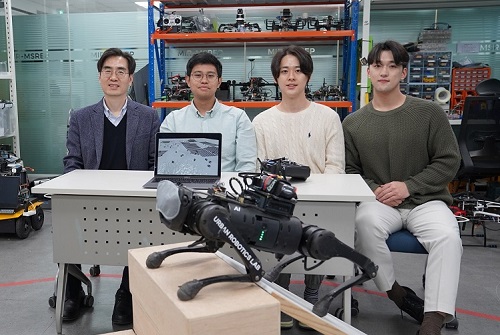

< (From left) Prof. Hyun Myung, Doctoral Candidates I Made Aswin Nahrendra, Byeongho Yu, and Minho Oh. In the foreground is the DreamWaQer, a quadrupedal robot equipped with DreamWaQ technology. >

The KAIST research team named the technology "DreamWaQ" because it enables walking robots to move about even in the dark, just as a person fresh out of bed can walk to the bathroom in the dark without visual help. With this technology installed on any legged robot, it will be possible to create various types of "DreamWaQers".

Existing walking robot controllers are based on kinematics and/or dynamics models, an approach known as model-based control. On atypical terrain such as open, uneven fields in particular, the controller must obtain feature information about the terrain quickly in order to keep the robot stable as it walks. However, this approach has been shown to depend heavily on the ability to perceive the surrounding environment.

In contrast, the controller developed by Professor Hyun Myung's research team is based on deep reinforcement learning (RL) and can quickly calculate appropriate control commands for each motor of the walking robot, using data from various environments obtained in a simulator. Whereas existing controllers trained in simulation required separate retuning to work on an actual robot, the team's controller is expected to be easily applied to various walking robots because it requires no additional tuning process.

DreamWaQ, the controller developed by the research team, is largely composed of a context-aided estimator network that estimates ground and robot information and a policy network that computes control commands. The context-aided estimator network estimates the ground information implicitly and the robot’s state explicitly from inertial and joint information. These estimates are fed into the policy network, which generates optimal control commands. Both networks are trained together in simulation.

While the context-aided estimator network is trained through supervised learning, the policy network is trained with an actor-critic architecture, a deep RL methodology. The actor network can only infer the surrounding terrain information implicitly. In simulation, however, the surrounding terrain is known, so the critic (the value network), which has access to the exact terrain information, evaluates the policy of the actor network.
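The division of labor described above can be illustrated with a small sketch. This is not the research team's implementation: the layer sizes, the choice of body velocity as the explicit state, and all function names are assumptions made for illustration, and random weights stand in for trained ones. It shows only which inputs each network sees: the actor works from on-board sensing plus the estimator's output, while the critic also receives terrain information that exists only in simulation.

```python
import numpy as np

rng = np.random.default_rng(0)

# All sizes below are hypothetical, chosen only for illustration.
OBS_DIM = 45      # proprioceptive observation (IMU and joint readings)
HISTORY = 5       # number of past observations given to the estimator
LATENT_DIM = 16   # implicit terrain/context code
VEL_DIM = 3       # explicit estimate (assumed here to be body velocity)
ACT_DIM = 12      # one command per motor (assumed 4 legs x 3 joints)
TERRAIN_DIM = 32  # privileged terrain samples, available only in simulation


def linear(in_dim, out_dim):
    """Random weights standing in for a trained layer."""
    return rng.normal(0.0, 0.1, size=(in_dim, out_dim)), np.zeros(out_dim)


def mlp(x, layers):
    """Tiny multilayer perceptron with tanh on the hidden layers."""
    for i, (w, b) in enumerate(layers):
        x = x @ w + b
        if i < len(layers) - 1:
            x = np.tanh(x)
    return x


estimator = [linear(OBS_DIM * HISTORY, 64), linear(64, VEL_DIM + LATENT_DIM)]
actor = [linear(OBS_DIM + VEL_DIM + LATENT_DIM, 64), linear(64, ACT_DIM)]
critic = [linear(OBS_DIM + TERRAIN_DIM, 64), linear(64, 1)]


def estimate_context(obs_history):
    """Return (explicit state estimate, implicit terrain code)."""
    out = mlp(obs_history.reshape(-1), estimator)
    return out[:VEL_DIM], out[VEL_DIM:]


def act(obs, obs_history):
    """Actor: sees only on-board sensing plus the estimator's output."""
    vel, latent = estimate_context(obs_history)
    return mlp(np.concatenate([obs, vel, latent]), actor)


def value(obs, terrain):
    """Critic: also sees exact terrain, so it is usable only in simulation."""
    return mlp(np.concatenate([obs, terrain]), critic)[0]


def estimator_loss(obs_history, true_velocity):
    """Supervised target for the explicit estimate (simulator ground truth)."""
    vel, _ = estimate_context(obs_history)
    return float(np.mean((vel - true_velocity) ** 2))


obs_history = rng.normal(size=(HISTORY, OBS_DIM))
obs = obs_history[-1]
terrain = rng.normal(size=TERRAIN_DIM)

action = act(obs, obs_history)   # motor commands from proprioception only
v = value(obs, terrain)          # training-time evaluation with terrain
loss = estimator_loss(obs_history, np.zeros(VEL_DIM))
```

Because `value` is the only function that takes `terrain`, dropping the critic after training leaves nothing that depends on information unavailable to the real robot.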

The whole training process takes only about an hour on a GPU-equipped PC, and the actual robot carries only the trained actor network. Without looking at the surrounding terrain, the robot uses only its internal inertial measurement unit (IMU) and joint angle measurements to imagine which of the various environments learned in simulation the current one resembles. If it suddenly encounters a height offset, such as a staircase, it will not know until its foot touches the step, but it quickly builds up terrain information the moment its foot touches the surface. The control command suited to the estimated terrain is then transmitted to each motor, enabling rapidly adapted walking.

The DreamWaQer robot walked not only in the laboratory but also outdoors around the campus, over many curbs and speed bumps and across a field with many tree roots and gravel. It also demonstrated its abilities by climbing a staircase with a height difference of two-thirds of its body height. In addition, the research team confirmed that it walked stably regardless of the environment, at speeds ranging from a slow 0.3 m/s to a rather fast 1.0 m/s.

This study was carried out by doctoral student I Made Aswin Nahrendra as first author and his colleague Byeongho Yu as co-author. It has been accepted for presentation at the upcoming IEEE International Conference on Robotics and Automation (ICRA), scheduled to be held in London at the end of May. (Paper title: DreamWaQ: Learning Robust Quadrupedal Locomotion With Implicit Terrain Imagination via Deep Reinforcement Learning)

Videos of the DreamWaQer walking robot equipped with DreamWaQ can be found at the addresses below.

Main Introduction: https://youtu.be/JC1_bnTxPiQ

Experiment Sketches: https://youtu.be/mhUUZVbeDA0

This research was carried out with support from the Robot Industry Core Technology Development Program of the Ministry of Trade, Industry and Energy (MOTIE). (Task title: Development of Mobile Intelligence SW for Autonomous Navigation of Legged Robots in Dynamic and Atypical Environments for Real Application)

< Figure 1. Overview of DreamWaQ, the controller developed by the research team. It consists of an estimator network that learns implicit and explicit estimates together, a policy network that acts as the controller, and a value network that guides the policy during training. When deployed on a real robot, only the estimator and policy networks are used. Both networks run in less than 1 ms on the robot's on-board computer. >

< Figure 2. Since the estimator can implicitly estimate the ground information as the foot touches the surface, it is possible to adapt quickly to rapidly changing ground conditions. >

< Figure 3. Results showing that even a small walking robot was able to overcome steps with height differences of about 20 cm. >

2023.05.18 View 3559


Study of T Cells from COVID-19 Convalescents Guides Vaccine Strategies

Researchers confirm that most COVID-19 patients in their convalescent stage carry stem cell-like memory T cells for months

A KAIST immunology research team found that most convalescent patients of COVID-19 develop and maintain T cell memory for over 10 months regardless of the severity of their symptoms. In addition, memory T cells proliferate rapidly after encountering their cognate antigen and accomplish their multifunctional roles. This study provides new insights for effective vaccine strategies against COVID-19, considering the self-renewal capacity and multipotency of memory T cells.

COVID-19 is a disease caused by severe acute respiratory syndrome coronavirus-2 (SARS-CoV-2) infection. When patients recover from COVID-19, SARS-CoV-2-specific adaptive immune memory is developed. The adaptive immune system consists of two principal components: B cells that produce antibodies and T cells that eliminate infected cells. The current results suggest that the protective immune function of memory T cells will be implemented upon re-exposure to SARS-CoV-2.

Recently, the role of memory T cells against SARS-CoV-2 has been gaining attention as neutralizing antibodies wane after recovery. Although memory T cells cannot prevent the infection itself, they play a central role in preventing the severe progression of COVID-19. However, the longevity and functional maintenance of SARS-CoV-2-specific memory T cells remain unknown.

Professor Eui-Cheol Shin and his collaborators investigated the characteristics and functions of stem cell-like memory T cells, which are expected to play a crucial role in long-term immunity. Researchers analyzed the generation of stem cell-like memory T cells and multi-cytokine producing polyfunctional memory T cells, using cutting-edge immunological techniques.

This research is significant in that revealing the long-term immunity of COVID-19 convalescent patients provides an indicator regarding the long-term persistence of T cell immunity, one of the main goals of future vaccine development, as well as evaluating the long-term efficacy of currently available COVID-19 vaccines.

The research team is presently conducting a follow-up study to identify the memory T cell formation and functional characteristics of those who received COVID-19 vaccines, and to understand the immunological effect of COVID-19 vaccines by comparing the characteristics of memory T cells from vaccinated individuals with those of COVID-19 convalescent patients.

PhD candidate Jae Hyung Jung and Dr. Min-Seok Rha, a clinical fellow at Yonsei Severance Hospital, who led the study together, explained, “Our analysis will enhance the understanding of COVID-19 immunity and establish an index for COVID-19 vaccine-induced memory T cells.”

“This study is the world’s longest longitudinal study on differentiation and functions of memory T cells among COVID-19 convalescent patients. The research on the temporal dynamics of immune responses has laid the groundwork for building a strategy for next-generation vaccine development,” Professor Shin added. This work was supported by the Samsung Science and Technology Foundation and KAIST, and was published in Nature Communications on June 30.

-Publication:

Jung, J.H., Rha, MS., Sa, M. et al. SARS-CoV-2-specific T cell memory is sustained in COVID-19 convalescent patients for 10 months with successful development of stem cell-like memory T cells. Nature Communications 12, 4043 (2021). https://doi.org/10.1038/s41467-021-24377-1

-Profile:

Professor Eui-Cheol Shin

Laboratory of Immunology & Infectious Diseases (http://liid.kaist.ac.kr/)

Graduate School of Medical Science and Engineering

KAIST

2021.07.05 View 7602


What Guides Habitual Seeking Behavior Explained

A new role of the ventral striatum explains habitual seeking behavior

Researchers have been investigating how the brain controls habitual seeking behaviors such as addiction. A recent study by Professor Sue-Hyun Lee from the Department of Bio and Brain Engineering revealed that a long-term value memory maintained in the ventral striatum of the brain is a neural basis of our habitual seeking behavior. This research was conducted in collaboration with the research team led by Professor Hyoung F. Kim from Seoul National University. Given that addictive behavior is deemed a habitual one, this research provides new insights for developing therapeutic interventions for addiction.

Habitual seeking behavior involves strong stimulus responses, mostly rapid and automatic ones. The ventral striatum in the brain has been thought to be important for value learning and addictive behaviors. However, it was unclear if the ventral striatum processes and retains long-term memories that guide habitual seeking.

Professor Lee’s team reported a new role of the human ventral striatum, in which long-term memories of high-valued objects are retained as a single representation and may be used to evaluate visual stimuli automatically to guide habitual behavior.

“Our findings propose a role of the ventral striatum as a director that guides habitual behavior with the script of value information written in the past,” said Professor Lee.

The research team investigated whether learned values were retained in the ventral striatum while the subjects passively viewed previously learned objects in the absence of any immediate outcome. Neural responses in the ventral striatum during the incidental perception of learned objects were examined using fMRI and single-unit recording.

The study found significant value discrimination responses in the ventral striatum after learning and a retention period of several days. Moreover, the similarity of neural representations for good objects increased after learning, an outcome positively correlated with the habitual seeking response for good objects.

“These findings suggest that the ventral striatum plays a role in automatic evaluations of objects based on the neural representation of positive values retained since learning, to guide habitual seeking behaviors,” explained Professor Lee.

“We will fully investigate the function of different parts of the entire basal ganglia including the ventral striatum. We also expect that this understanding may lead to the development of better treatment for mental illnesses related to habitual behaviors or addiction problems.”

This study, supported by the National Research Foundation of Korea, was reported in Nature Communications (https://doi.org/10.1038/s41467-021-22335-5).

-Profile:

Professor Sue-Hyun Lee

Department of Bio and Brain Engineering

Memory and Cognition Laboratory (http://memory.kaist.ac.kr/lecture)

KAIST

2021.06.03 View 5930


Acoustic Graphene Plasmons Study Paves Way for Optoelectronic Applications

- The first images of mid-infrared optical waves compressed 1,000 times captured using a highly sensitive scattering-type scanning near-field optical microscope. -

KAIST researchers and their collaborators at home and abroad have successfully demonstrated a new methodology for direct near-field optical imaging of acoustic graphene plasmon fields. This strategy will provide a breakthrough for the practical applications of acoustic graphene plasmon platforms in next-generation, high-performance, graphene-based optoelectronic devices with enhanced light-matter interactions and lower propagation loss.

It was recently demonstrated that ‘graphene plasmons’ – collective oscillations of free electrons in graphene coupled to electromagnetic waves of light – can be used to trap and compress optical waves inside a very thin dielectric layer separating graphene from a metallic sheet. In such a configuration, graphene’s conduction electrons are “reflected” in the metal, so when the light waves “push” the electrons in graphene, their image charges in metal also start to oscillate. This new type of collective electronic oscillation mode is called ‘acoustic graphene plasmon (AGP)’.

The existence of AGP could previously be observed only via indirect methods such as far-field infrared spectroscopy and photocurrent mapping. This indirect observation was the price that researchers had to pay for the strong compression of optical waves inside nanometer-thin structures. It was believed that the intensity of electromagnetic fields outside the device was insufficient for direct near-field optical imaging of AGP.

Challenged by these limitations, three research groups combined their efforts to bring together a unique experimental technique using advanced nanofabrication methods. Their findings were published in Nature Communications on February 19.

A KAIST research team led by Professor Min Seok Jang from the School of Electrical Engineering used a highly sensitive scattering-type scanning near-field optical microscope (s-SNOM) to directly measure the optical fields of the AGP waves propagating in a nanometer-thin waveguide, visualizing thousand-fold compression of mid-infrared light for the first time.
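To give a sense of scale for thousand-fold compression, the arithmetic can be sketched as follows. The 10 µm free-space wavelength is an assumed, illustrative mid-infrared value rather than a figure from the study, and the function name is hypothetical.

```python
def compressed_wavelength_nm(free_space_wavelength_um, compression_factor):
    """Wavelength inside the waveguide, in nanometers.

    free_space_wavelength_um: wavelength of the light in free space (micrometers)
    compression_factor: how many times the wave is compressed
    """
    return free_space_wavelength_um * 1000.0 / compression_factor


# Assumed example: 10 µm mid-infrared light compressed 1,000 times.
example_nm = compressed_wavelength_nm(10.0, 1000.0)
```

Under that assumption the compressed wave has a wavelength of 10 nm, which is why a near-field probe rather than conventional far-field optics is needed to image it.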

Professor Jang and a post-doc researcher in his group, Sergey G. Menabde, successfully obtained direct images of AGP waves by taking advantage of their rapidly decaying yet always present electric field above graphene. They showed that AGPs are detectable even when most of their energy is flowing inside the dielectric below the graphene.

This became possible thanks to the ultra-smooth surfaces inside the nano-waveguides, where plasmonic waves can propagate over longer distances. The AGP mode probed by the researchers was up to 2.3 times more confined and exhibited a 1.4 times higher figure of merit in terms of the normalized propagation length compared to the graphene surface plasmon under similar conditions.

These ultra-smooth nanostructures of the waveguides used in the experiment were created using a template-stripping method by Professor Sang-Hyun Oh and a post-doc researcher, In-Ho Lee, from the Department of Electrical and Computer Engineering at the University of Minnesota.

Professor Young Hee Lee and his researchers at the Center for Integrated Nanostructure Physics (CINAP) of the Institute for Basic Science (IBS) at Sungkyunkwan University synthesized the graphene with a monocrystalline structure, and this high-quality, large-area graphene enabled low-loss plasmonic propagation.

The chemical and physical properties of many important organic molecules can be detected and evaluated by their absorption signatures in the mid-infrared spectrum. However, conventional detection methods require a large number of molecules for successful detection, whereas the ultra-compressed AGP fields can provide strong light-matter interactions at the microscopic level, thus significantly improving the detection sensitivity down to a single molecule.

Furthermore, the study conducted by Professor Jang and the team demonstrated that the mid-infrared AGPs are inherently less sensitive to losses in graphene due to their fields being mostly confined within the dielectric. The research team’s reported results suggest that AGPs could become a promising platform for electrically tunable graphene-based optoelectronic devices that typically suffer from higher absorption rates in graphene such as metasurfaces, optical switches, photovoltaics, and other optoelectronic applications operating at infrared frequencies.

Professor Jang said, “Our research revealed that the ultra-compressed electromagnetic fields of acoustic graphene plasmons can be directly accessed through near-field optical microscopy methods. I hope this realization will motivate other researchers to apply AGPs to various problems where strong light-matter interactions and lower propagation loss are needed.”

This research was primarily funded by the Samsung Research Funding & Incubation Center of Samsung Electronics. The National Research Foundation of Korea (NRF), the U.S. National Science Foundation (NSF), Samsung Global Research Outreach (GRO) Program, and Institute for Basic Science of Korea (IBS) also supported the work.

Publication:

Menabde, S. G., et al. (2021) Real-space imaging of acoustic plasmons in large-area graphene grown by chemical vapor deposition. Nature Communications 12, Article No. 938. Available online at https://doi.org/10.1038/s41467-021-21193-5

Profile:

Min Seok Jang, MS, PhD

Associate Professor

jang.minseok@kaist.ac.kr

http://jlab.kaist.ac.kr/

Min Seok Jang Research Group

School of Electrical Engineering

http://kaist.ac.kr/en/

Korea Advanced Institute of Science and Technology (KAIST)

Daejeon, Republic of Korea

(END)

2021.03.16 View 9347 -

Visualization of Functional Components to Characterize Optimal Composite Electrodes

Researchers have developed a visualization method that will determine the distribution of components in battery electrodes using atomic force microscopy. The method provides insights into the optimal conditions of composite electrodes and takes us one step closer to being able to manufacture next-generation all-solid-state batteries.

Lithium-ion batteries are widely used in smart devices and vehicles. However, their flammability, which arises from the potential leakage of liquid electrolytes, makes them a safety concern.

All-solid-state lithium-ion batteries have emerged as an alternative because of their better safety and wider electrochemical stability. Despite these advantages, all-solid-state lithium-ion batteries still have drawbacks such as limited ion conductivity, insufficient contact areas, and high interfacial resistance between the electrode and solid electrolyte.

To solve these issues, studies have been conducted on composite electrodes in which lithium-ion-conducting additives are dispersed as a medium to provide ion-conductive paths at the interface and increase the overall ionic conductivity.

Identifying the shape and distribution of the components, such as active materials, ion conductors, binders, and conductive additives, on a microscopic scale is essential for significantly improving battery performance.

The developed method is able to distinguish regions of each component based on detected signal sensitivity, by using various modes of atomic force microscopy on a multiscale basis, including electrochemical strain microscopy and lateral force microscopy.

For this research project, both conventional electrodes and composite electrodes were tested, and the results were compared. Individual regions were distinguished, and the nanoscale correlation between the ion reactivity distribution and the friction force distribution within a single region was determined in order to examine the effect of the binder distribution on the electrochemical strain.

The research team explored how the electrochemical strain microscopy amplitude and phase and the lateral force microscopy friction force depend on the AC drive voltage and the tip loading force, and used these sensitivities as markers for each component in the composite anode.

This method allows for direct multiscale observation of the composite electrode under ambient conditions, distinguishing various components and measuring their properties simultaneously.

Lead author Dr. Hongjun Kim said, “It is easy to prepare the test sample for observation while providing much higher spatial resolution and intensity resolution for detected signals.” He added, “The method also has the advantage of providing 3D surface morphology information for the observed specimens.”

Professor Seungbum Hong from the Department of Materials Science and Engineering said, “This analytical technique using atomic force microscopy will be useful for quantitatively understanding what role each component of a composite material plays in the final properties.”

“Our method not only will suggest the new direction for next-generation all-solid-state battery design on a multiscale basis but also lay the groundwork for innovation in the manufacturing process of other electrochemical materials.”

This study was published in ACS Applied Energy Materials and supported by the Big Science Research and Development Project under the Ministry of Science and ICT and the National Research Foundation of Korea, the Basic Research Project under the Wearable Platform Materials Technology Center, and the KAIST Global Singularity Research Program for 2019 and 2020.

Publication:

Kim, H., et al. (2020) ‘Visualization of Functional Components in a Lithium Silicon Titanium Phosphate-Natural Graphite Composite Anode’. ACS Applied Energy Materials, Volume 3, Issue 4, pp. 3253-3261. Available online at https://doi.org/10.1021/acsaem.9b02045

Profile:

Seungbum Hong

Professor

seungbum@kaist.ac.kr

http://mii.kaist.ac.kr/

Materials Imaging and Integration Laboratory

Department of Materials Science and Engineering

KAIST

2020.05.22 View 6612 -

Mathematical Principle behind AI's 'Black Box'

(from left: Professor Jong Chul Ye, PhD candidates Yoseob Han and Eunju Cha)

A KAIST research team identified the geometrical structure of artificial intelligence (AI) and discovered the mathematical principles of high-performing artificial neural networks, which can be applied in fields such as medical imaging.

Deep neural networks are an exemplary method of implementing deep learning, which is at the core of AI technology, and have shown explosive growth in recent years. This technique has been used in various fields, such as image and speech recognition as well as image processing.

Despite their excellent performance and usefulness, the exact working principles of deep neural networks have not been well understood, and they often suffer from unexpected results or errors. Hence, there is an increasing social and technical demand for interpretable deep neural network models.

To address these issues, Professor Jong Chul Ye from the Department of Bio & Brain Engineering and his team attempted to find the geometric structure in a higher dimensional space where the structure of the deep neural network can be easily understood. They proposed a general deep learning framework, called deep convolutional framelets, to understand the mathematical principle of a deep neural network in terms of the mathematical tools of harmonic analysis.

As a result, it was found that the structure of deep neural networks appears when a signal that has been lifted to a high-dimensional space via a Hankel matrix is decomposed. The Hankel matrix is a high-dimensional structure that has been studied intensively in the field of signal processing.

In the process of decomposing the lifted signal, two kinds of bases emerge: local and non-local. The researchers found that the non-local and local basis functions play the roles of the pooling and filtering operations in a convolutional neural network, respectively.

Previously, when implementing AI, deep neural networks were usually constructed through empirical trial and error. The significance of the research lies in the fact that it provides a mathematical understanding of the neural network structure in high dimensional space, which guides users in designing an optimized neural network.

They demonstrated improved performance of neural networks based on deep convolutional framelets in image denoising, image inpainting, and medical image restoration.
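The roles of the two bases can be illustrated with a toy example. The following is a minimal sketch, not the researchers' implementation: it assumes a wrap-around Hankel matrix, a hypothetical first-difference filter as the local basis vector, and simple average pooling as the non-local step. All function names and values are chosen for illustration only.

```python
# Illustrative sketch only: names and toy values are assumptions,
# not taken from the published work.

def hankel(signal, width):
    """Lift a 1-D signal to a wrap-around Hankel matrix with `width` columns."""
    n = len(signal)
    return [[signal[(i + j) % n] for j in range(width)] for i in range(n)]


def right_multiply(matrix, local_vector):
    """Apply a local basis vector from the right (acts within each row)."""
    return [sum(a * b for a, b in zip(row, local_vector)) for row in matrix]


def circular_correlate(signal, kernel):
    """Reference filtering: slide the kernel over the signal with wrap-around."""
    n = len(signal)
    return [
        sum(kernel[j] * signal[(i + j) % n] for j in range(len(kernel)))
        for i in range(n)
    ]


def average_pool(values, size=2):
    """Non-local step: average consecutive rows in blocks of `size`."""
    return [sum(values[i:i + size]) / size for i in range(0, len(values), size)]


signal = [1.0, 2.0, 4.0, 7.0, 11.0, 16.0]
kernel = [-1.0, 1.0]  # hypothetical local basis vector: a first-difference filter

lifted = hankel(signal, len(kernel))
filtered = right_multiply(lifted, kernel)  # filtering via the local basis
pooled = average_pool(filtered)            # pooling via a non-local mixing of rows

print(filtered == circular_correlate(signal, kernel))  # True
print(pooled)
```

Multiplying the lifted matrix by a short vector from the right reproduces an ordinary sliding filter, while combining its rows reproduces pooling, which is the correspondence the paragraph above describes.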

Professor Ye said, “Unlike conventional neural networks designed through trial-and-error, our theory shows that neural network structure can be optimized to each desired application and are easily predictable in their effects by exploiting the high dimensional geometry. This technology can be applied to a variety of fields requiring interpretation of the architecture, such as medical imaging.”

This research, led by PhD candidates Yoseob Han and Eunju Cha, was published in the April 26th issue of the SIAM Journal on Imaging Sciences.

Figure 1. The design of deep neural network using mathematical principles

Figure 2. The results of image noise cancelling

Figure 3. The artificial neural network restoration results in the case where 80% of the pixels are lost

2018.09.12 View 5255 -

Adding Smart to Science Museum

KAIST and the National Science Museum (NSM) created an Exhibition Research Center for Smart Science to launch exhibitions that integrate emerging technologies of the Fourth Industrial Revolution, including augmented reality (AR), virtual reality (VR), the Internet of Things (IoT), and artificial intelligence (AI).

There has been a great demand for a novel technology for better, user-oriented exhibition services. The NSM continuously faces the problem of not having enough professional guides. Additionally, there have been constant complaints about its current mobile application for exhibitions not being very effective.

To tackle these problems, the new center was founded, involving 11 institutes and universities. Sponsored by the National Research Foundation, it will oversee 15 projects in three areas: exhibition-based technology, exhibition operational technology, and exhibition content.

The group first aims to provide a location-based exhibition guide system, which will allow it to offer visitors various technological services such as AR/VR. An indoor locating system named KAILOS, which was developed by KAIST, will be applied to this service. They will also launch a mobile application service that provides audio-based exhibition guides.

To further cater to visitors’ needs, the group plans to apply a living lab concept, a user-centered ecosystem, to create a pleasant environment for visitors.

“Every year, hundred thousands of young people visit the National Science Museum. I believe that the exhibition guide system has to be innovative, using cutting-edge IT technology in order to help them cherish their dreams and inspirations through science,” Jeong Heoi Bae, President of Exhibition and Research Bureau of NSM, emphasized.

Professor Dong Soo Han from the School of Computing, who took the position of research head of the group, said, “We will systematically develop exhibition technology and contents for the science museum to create a platform for smart science museums. It will be the first time to provide an exhibition guide system that integrates AR/VR with an indoor location system.”

The center will first apply the new system to the NSM and then expand it to 167 science museums and other regional museums.

2018.09.04 View 5624 -

Augmented Reality Application for Smart Tour

‘K-Culture Time Machine,’ an augmented and virtual reality application, will create a new way to take a tour. Prof. Woon-taek Woo's research team at the Graduate School of Culture Technology of KAIST developed the AR/VR application for smart tourism.

The 'K-Culture Time Machine' application (iOS App Store app name: KCTM) was launched on the iOS App Store in Korea on May 22 as a pilot service targeting Changdeokgung Palace in Seoul.

The application provides a remote experience of cultural heritage and relics across time and space through wearable 360-degree video. By mounting a smartphone in a smartphone HMD device, users can remotely experience cultural heritage sites in 360-degree video and can search for information on historical figures, places, and events related to the cultural heritage. A 3D reconstruction of lost cultural heritage can also be experienced.

Without wearable HMD devices, mobile-based cultural heritage guides can be provided through vision-based recognition of cultural heritage. Through the smartphone's embedded camera, the application can identify heritage sites and provide related information and content. For example, in Changdeokgung Palace, a user can move from Donhwa-Gate (the main gate of Changdeokgung Palace) through Injeong-Jeon (the main hall) and Injeong-Moon (the main gate of Injeong-Jeon) to Huijeongdang (a rest place for the king). Through 360-degree panoramic images or video, the user can experience virtual scenes of the heritage sites.

The virtual 3D reconstruction of the Seungjeongwon (Royal Secretariat), which no longer exists, can be shown on the east side of Injeong-Jeon. These functions can be experienced on a smartphone without a wearable device, and the application can become a commercial product usable in the field once the augmented reality function, which is under development, is completed.

Professor Woo and his research team constructed and applied standardized metadata for the cultural heritage database and AR/VR content. With this standardized metadata, reusable and interoperable content can be made, unlike existing applications whose content is consumed only temporarily after development.

Professor Woo said, "By enhancing the interoperability and reusability of AR contents, we will be able to preoccupy new markets in the field of smart tourism."

The research was conducted jointly with Post Media (CEO Hong Seung-mo) in the CT R&D project of the Ministry of Culture, Sports and Tourism of Korea. The results of the research will be presented at the HCI International 2017 conference in Canada this July.

Figure 1. 360-degree panorama image / video function screen of 'K-Culture Time Machine'. Smartphone HMD allows users to freely experience various cultural sites remotely.

Figure 2. 'K-Culture Time Machine' mobile augmented reality function screen. By analyzing the location of the user and the screen viewed through the camera, information related to the cultural heritage is provided to enhance the user experience.

Figure 3. The concept of the 360-degree panoramic video-based VR service of "K-Culture Time Machine", a wearable application supporting smart tours of historical sites. Through the smartphone HMD, a user can remotely experience cultural heritage sites and 3D reconstruction of cultural heritage that does not currently exist.

2017.05.30 View 9885

Augmented Reality Application for Smart Tour

‘K-Culture Time Machine,’ an augmented and virtual reality application will create a new way to take a tour. Prof. Woon-taek Woo's research team of Graduate School of Culture Technology of KAIST developed AR/VR application for smart tourism.

The 'K-Culture Time Machine' application (iOS App Store app name: KCTM) was launched on iOS App Store in Korea on May 22 as a pilot service that is targetting the Changdeokgung Palace of Seoul.

The application provides remote experience over time and space for cultural heritage or relics thorough wearable 360-degree video. Users can remotely experience cultural heritage sites with 360-degree video provided by installing a smartphone in a smartphone HMD device, and can search information on historical figures, places, and events related to cultural heritage. Also, 3D reconstruction of lost cultural heritage can be experienced.

Without using wearable HMD devices, mobile-based cultural heritage guides can be provided based on the vision-based recognition on the cultural heritages. Through the embedded camera in smartphone, the application can identify the heritages and provide related information and contents of the hertages. For example, in Changdeokgung Palace, a user can move inside the Changdeokgung Palace from Donhwa-Gate (the main gate of the Changdeokgung Palace), Injeong-Jeon(main hall), Injeong-Moon (Main gate of Injeong-Jeon), and to Huijeongdang (rest place for the king). Through the 360 degree panoramic image or video, the user can experience the virtual scene of heritages.

The virtual 3D reconstruction of the seungjeongwon (Royal Secretariat) which does not exist at present can be shown of the east side of the Injeong-Jeon The functions can be experienced on a smartphone without a wearable device, and it would be a commercial application that can be utilized in the field once the augmented reality function which is under development is completed.

Professor Woo and his research team constructed and applied standardized metadata of cultural heritage database and AR/VR contents. Through this standardized metadata, unlike existing applications which are temporarily consumed after development, reusable and interoperable contents can be made.Professor Woo said, "By enhancing the interoperability and reusability of AR contents, we will be able to preoccupy new markets in the field of smart tourism."

The research was conducted jointly with Post Media (CEO Hong Seung-mo) in the CT R&D project of the Ministry of Culture, Sports and Tourism of Korea. The results of the research will be presented at the HCI International 2017 conference in Canada this July.

Figure 1. 360-degree panoramic image/video function screen of 'K-Culture Time Machine'. A smartphone HMD allows users to freely experience various cultural sites remotely.

Figure 2. 'K-Culture Time Machine' mobile augmented reality function screen. By analyzing the location of the user and the scene viewed through the camera, information related to the cultural heritage is provided to enhance the user experience.

Figure 3. The concept of 360-degree panoramic video-based VR service of "K-Culture Time Machine", a wearable application supporting smart tour of the historical sites. Through the smartphone HMD, a user can remotely experience cultural heritage sites and 3D reconstruction of cultural heritage that does not currently exist.

2017.05.30 View 9885 -

Parasitic Robot System for Turtle's Waypoint Navigation

A KAIST research team presented a hybrid animal-robot interaction called “the parasitic robot system,” which imitates the natural relationship between parasites and their hosts.

The research team led by Professor Phil-Seung Lee of the Department of Mechanical Engineering applied the concept of using a robot as a parasite in order to take advantage of an animal’s locomotive abilities. The robot is attached to its host animal in a way similar to an actual parasite, and it interacts with the host through particular devices and algorithms.

Even with remarkable technological advancements, robots that operate in complex and harsh environments still have serious limitations in moving and recharging. In contrast, millions of years of evolution have produced many animals capable of excellent locomotion and survival in natural environments.

Certain kinds of real parasites can manipulate the behavior of the host to increase the probability of their own reproduction. Similarly, in the proposed concept of a “parasitic robot,” a specific behavior is induced by the parasitic robot in its host to benefit the robot.

The team chose a turtle as their first host animal and designed a parasitic robot that can perform “stimulus-response training.” The parasitic robot, which is attached to the turtle, can induce the turtle’s object-tracking behavior through repeated training sessions.

Using a programmed algorithm, the robot then simply guides the turtle with LEDs and feeds it snacks as a reward for going in the right direction. After training sessions lasting five weeks, the parasitic robot can successfully control the direction of movement of the host turtles in a waypoint navigation task in a water tank.
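The article does not publish the programmed algorithm, so the following Python sketch is only an illustration of how an LED cue and a snack reward could be derived from the direction to the next waypoint. Every name in it (`heading_error`, `choose_led`, `should_reward`), the five-LED layout, the 180-degree field of view, and the 15-degree reward tolerance are assumptions made for the example, not details of the team's system.

```python
import math


def heading_error(position, heading_rad, waypoint):
    """Signed angle in radians, wrapped to [-pi, pi), from the current
    heading to the bearing of the waypoint. Positive means the waypoint
    lies to the left (counterclockwise) of the current heading."""
    dx = waypoint[0] - position[0]
    dy = waypoint[1] - position[1]
    bearing = math.atan2(dy, dx)
    return (bearing - heading_rad + math.pi) % (2 * math.pi) - math.pi


def choose_led(error_rad, num_leds=5, field_of_view_rad=math.pi):
    """Index of the LED closest to the waypoint direction.
    Index 0 is the rightmost LED and num_leds - 1 the leftmost
    (hypothetical layout)."""
    half = field_of_view_rad / 2
    clamped = max(-half, min(half, error_rad))
    fraction = (clamped + half) / field_of_view_rad  # 0.0 (right) to 1.0 (left)
    return round(fraction * (num_leds - 1))


def should_reward(error_rad, tolerance_rad=math.radians(15)):
    """True when the host is heading close enough to the waypoint
    to earn a snack (hypothetical tolerance)."""
    return abs(error_rad) <= tolerance_rad


if __name__ == "__main__":
    # Host at the origin facing along +x; waypoint ahead and to the left.
    err = heading_error((0.0, 0.0), 0.0, (1.0, 1.0))
    print(choose_led(err), should_reward(err))
```

In this sketch the cue and the reward both depend only on the heading error, which keeps the training signal simple: the lit LED shows which way to turn, and the snack is dispensed only once the host is roughly on course.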

This hybrid animal-robot interaction system could provide an alternative solution to the limitations of conventional mobile robot systems in various fields. Ph.D. candidate Dae-Gun Kim, the first author of this research, said that a wide variety of animals, including mice, birds, and fish, could perform equally well at such tasks. He said that in the future, this system will be applied to various exploration and reconnaissance missions that humans and robots find difficult to carry out on their own.

Kim said, “This hybrid animal-robot interaction system could provide an alternative solution to the limitations of conventional mobile robot systems in various fields, and could also act as a useful interaction system for the behavioral sciences.”

The research was published in the April issue of the Journal of Bionic Engineering.

2017.05.19 View 8706 -

KAIST Students Win 2014 Creative Vitamin Project Competition

A team of KAIST students has won the grand prize in the “2014 Creative Vitamin Project Competition” held on May 28, 2014 in Seoul. The event was co-hosted by the Ministry of Science, ICT and Future Planning, the National Information Society Agency, and the Korea IT Convergence Technology Association. The Creative Vitamin Project is the Korean government’s initiative to grow the Korean economy and create jobs by applying science and technology, information and communications technology in particular, to existing industries and social issues.

The winners were Hyeong-Min Son, a student in the master’s program in the Department of Civil and Environmental Engineering, KAIST, and Su-Yeon Yoo, a Ph.D. student from the Graduate School of Information Security, KAIST.

Son and Yoo proposed a sustainable crop protection system using directional speakers. This technique not only efficiently protects crops from harmful animals, but also effectively guides the animals outside the farmland.

Kwang-Soo Jang, the Director of the National Information Society Agency, said, “This competition provides an opportunity to develop public consensus and interest in the Creative Vitamin Project. We hope that through the participation of all citizens, the project can become an instrument for realizing the creative economy.”

2014.06.18 View 8288 -

KAIST Participates in the 2014 Davos Forum on January 22-25 in Switzerland

Through the sessions of the Global University Leaders Forum, IdeasLab, and Global Agenda Councils on Biotechnology, KAIST participants will actively engage with global leaders in the discussion of issues on education innovation and technological breakthroughs.

The 2014 Annual Meeting of the World Economic Forum (WEF), known as the Davos Forum, will be held on January 22-25 in Davos-Klosters, Switzerland, under the theme of "The Reshaping of the World: Consequences for Society, Politics, and Business." Each year, the Forum attracts about 2,500 distinguished leaders from all around the world and provides an open platform to identify the current and emerging challenges facing the global community and to develop the ideas and actions necessary to respond to such challenges.

President Sung-Mo Steve Kang and Distinguished Professor Sang Yup Lee from the Department of Chemical and Biomolecular Engineering, KAIST, will attend the Forum and engage in a series of dialogues on such issues as Massive Open Online Courses, new paradigms for universities and researchers, the transformation of higher education, the role and value of scientific discoveries, and the impact of biotechnology on the future of society and business.

At the session entitled "New Paradigms for Universities of the Future" hosted by the Global University Leaders Forum (GULF), President Kang will introduce KAIST's ongoing online education program, Education 3.0. GULF, created by the WEF in 2006, is a small community of the presidents and senior representatives of the top universities in the world.

Implemented in 2012, Education 3.0 incorporates advanced information and communications technology (ICT) to offer students and teachers a learner-based, team-oriented learning and teaching environment. Under Education 3.0, students study online and meet in groups with a professor for in-depth discussions, collaboration, and problem-solving. KAIST plans to expand the program to embrace the global community in earnest by establishing Education 3.0 Global in order to have interactive real-time classes for students and researchers across regions and cultures.

President Kang will also present a paper entitled "Toward Socially Responsible Technology: KAIST's Approach to Integrating Social and Behavioral Perspectives into Technology Development" at another session of GULF called "Seeking New Approaches to Critical Global Challenges." In the paper, President Kang points out that notwithstanding the many benefits we enjoy from the increasingly interconnected world, digital media threaten to become a new outlet for social problems such as Internet or digital addiction.

Experts say that early exposure to digital devices harms the healthy development of cognitive functions, emotions, and social behavior. President Kang will introduce KAIST's recent endeavor to develop a non-intrusive technology to help prevent digital addiction, which will ultimately be embedded in the form of a virtual coach or mentor that helps and guides people at risk to make constructive use of digital devices. President Kang stresses the fundamental shift in the science and technology development paradigm from research and development (R&D) to research and solution development (R&SD), which takes serious consideration of societal needs, quality of life, and social impacts when conducting research.

Professor Sang Yup Lee will moderate the IdeasLab session at the Davos Forum entitled "From Lab to Life with the California Institute of Technology (Caltech)." Together with scientists from Caltech, he will discuss scientific breakthroughs that will transform institutions, industries, and individuals in the near future, such as the development of damage-tolerant lightweight materials with nanotechnology, the ability to read and write genomes, and wireless lab-in-the-body monitors. In addition, he will meet global business leaders at the session on "Sustainability, Innovation, and Growth" and speak about how emerging technologies, biotechnology in particular, will transform future societies, businesses, and industries.

As a current special adviser to the World Economic Forum's (WEF) Chemicals Industry Community, Professor Lee will meet global chairs and chief executive officers of chemical companies and discuss ways to advance the industry to become more bio-based and environmentally friendly. He served as a founding chairman of the WEF's Global Agenda Councils on Biotechnology in 2013.

President Sung-Mo Steve Kang and Distinguished Professor Sang Yup Lee

2014.01.17 View 8552

KAIST Participates in the 2014 Davos Forum on January 22-25 in Switzerland

Through the sessions of the Global University Leaders Forum, IdeasLab, and Global Agenda Councils on Biotechnology, KAIST participants will actively engage with global leaders in the discussion of issues on education innovation and technological breakthroughs.