
A KAIST research team has developed a new context-awareness technology that enables AI assistants to determine when to talk to their users based on user circumstances. This technology can contribute to developing advanced AI assistants that can offer pre-emptive services such as reminding users to take medication on time or modifying schedules based on the actual progress of planned tasks.

Conventional AI assistants acted passively, responding only to users’ commands. Today’s AI assistants are evolving to provide more proactive services by reasoning about user circumstances on their own. This opens up new opportunities for AI assistants to better support users in their daily lives. However, if AI assistants do not talk at the right time, they may interrupt their users rather than help them.

The right time for talking is more difficult for AI assistants to determine than it appears. This is because the context can differ depending on the state of the user or the surrounding environment.

A group of researchers led by Professor Uichin Lee from the KAIST School of Computing identified key contextual factors in user circumstances that determine when the AI assistant should start, stop, or resume engaging in voice services in smart home environments. Their findings were published in the Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies (IMWUT) in September.

The group conducted this study in collaboration with Professor Jae-Gil Lee’s group in the KAIST School of Computing, Professor Sangsu Lee’s group in the KAIST Department of Industrial Design, and Professor Auk Kim’s group at Kangwon National University.

After developing smart speakers equipped with an AI assistant function for experimental use, the researchers installed them in the rooms of 40 students living in double-occupancy campus dormitories and collected a total of 3,500 in-situ user responses over one week.

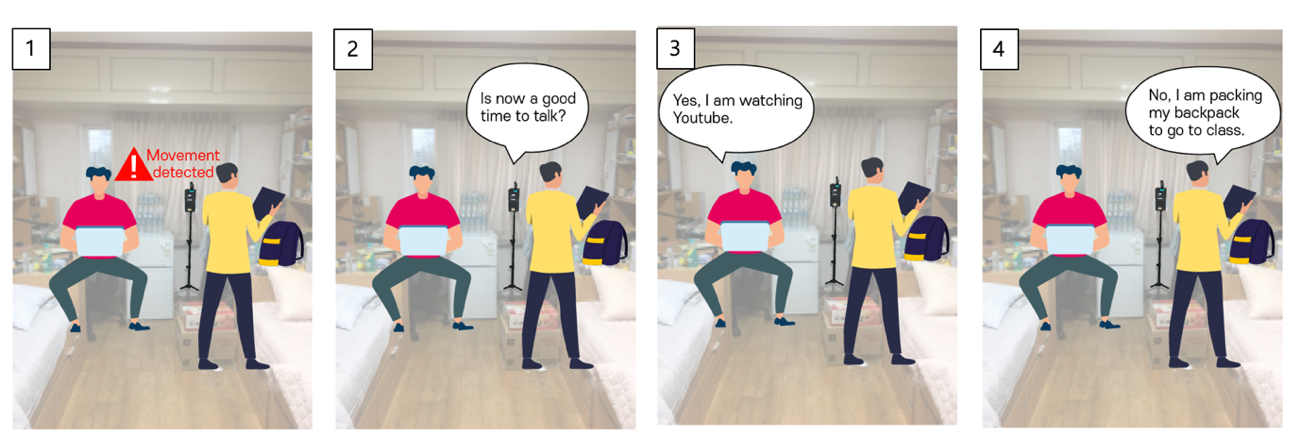

The smart speakers repeatedly asked the students a question, “Is now a good time to talk?” at random intervals or whenever a student’s movement was detected. Students answered with either “yes” or “no” and then explained why, describing what they had been doing before being questioned by the smart speakers.

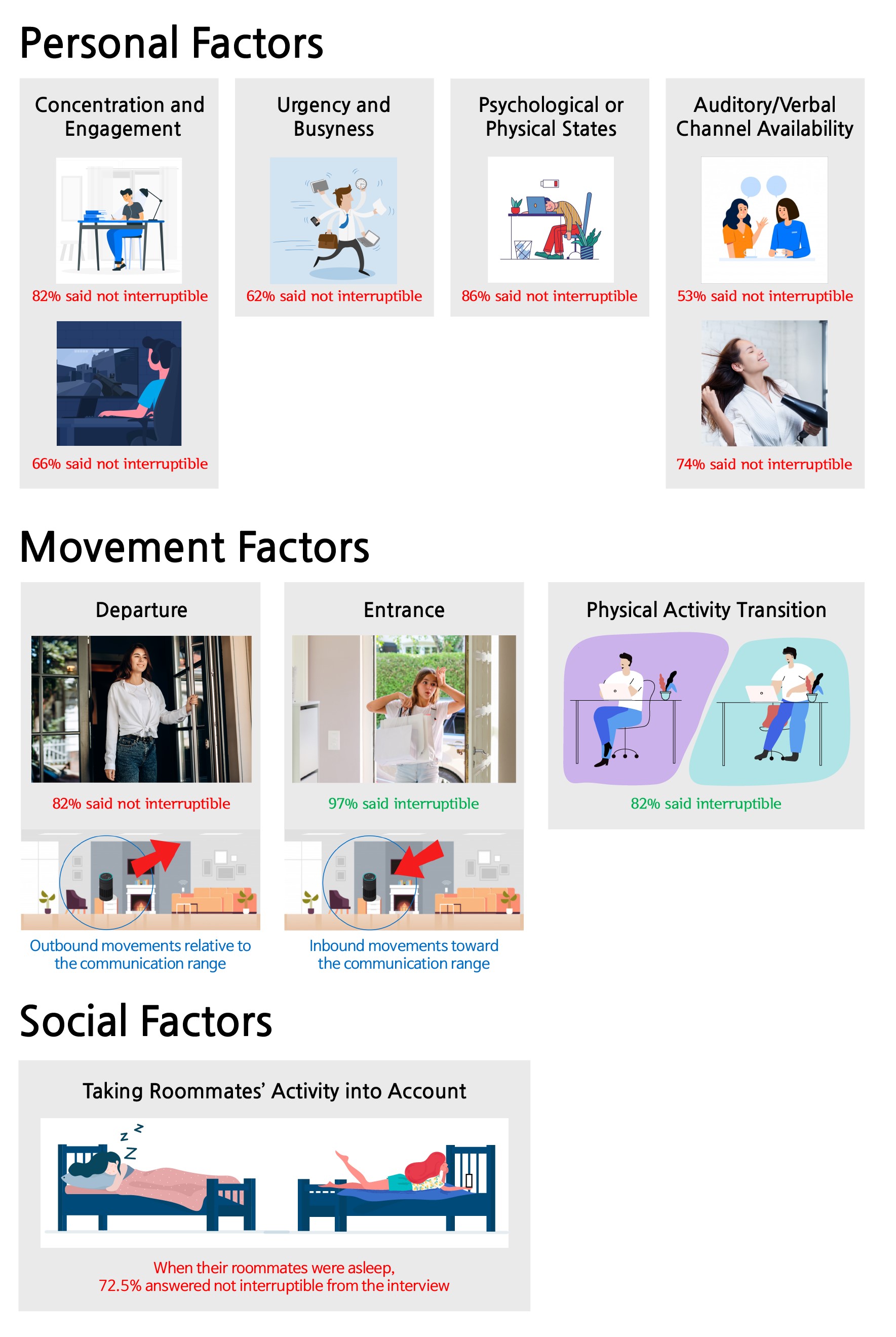

Data analysis revealed that 47% of user responses were “no,” indicating that the users did not want to be interrupted. The research team then created 19 home activity categories to cross-analyze the key contextual factors that determine opportune moments for AI assistants to talk, and classified these factors as ‘personal,’ ‘movement,’ or ‘social.’

Personal factors, for instance, include:

1. the degree of concentration on or engagement in activities,

2. the degree of urgency and busyness,

3. the user’s mental or physical condition, and

4. the state of being able to talk or listen while multitasking.

When users were concentrating on studying, tired, or drying their hair, they found it difficult to engage in conversational interactions with the smart speakers.

Some representative movement factors include departure, entrance, and physical activity transitions. Interestingly, in movement scenarios, the team found that the communication range was an important factor. Departure is an outbound movement from the smart speaker, and entrance is an inbound movement. Users were much more available during inbound movement scenarios as opposed to outbound movement scenarios.

In general, smart speakers are located in a shared place at home, such as a living room, where multiple family members gather at the same time. In the experiment by Professor Lee’s group, almost half of the in-situ user responses were collected when both roommates were present. The group found that social presence also influenced interruptibility. Roommates often wanted to minimize possible interpersonal conflicts, such as disturbing their roommates' sleep or work.

Narae Cha, the lead author of this study, explained, “By considering personal, movement, and social factors, we can envision a smart speaker that can intelligently manage the timing of conversations with users.”

She believes that this work lays the foundation for the future of AI assistants, adding, “Multi-modal sensory data can be used for context sensing, and this context information will help smart speakers proactively determine when it is a good time to start, stop, or resume conversations with their users.”
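To make the three factor groups concrete, the following is a minimal sketch of how a smart speaker might gate a proactive prompt on sensed context. It is not the researchers' model: the `Context` fields, the `Movement` enum, and the `is_good_time_to_talk` function are hypothetical names introduced here for illustration, and the hard yes/no rules are a simplifying assumption. The study reports only that users were much more available during inbound than outbound movement, not that a fixed rule applies.

```python
from dataclasses import dataclass
from enum import Enum


class Movement(Enum):
    """Hypothetical movement states, named after the article's movement factors."""
    NONE = "none"
    ENTRANCE = "entrance"    # inbound: user moves toward the speaker
    DEPARTURE = "departure"  # outbound: user moves away from the speaker


@dataclass
class Context:
    """Hypothetical snapshot of sensed context (all fields are assumptions)."""
    # Personal factors
    concentrating: bool = False
    urgent_or_busy: bool = False
    tired: bool = False
    can_talk_while_multitasking: bool = True
    # Movement factor
    movement: Movement = Movement.NONE
    # Social factor
    roommate_sleeping_or_working: bool = False


def is_good_time_to_talk(ctx: Context) -> bool:
    """Return True if none of the illustrative blocking conditions hold."""
    # Personal: engagement, urgency, condition, and ability to multitask
    if ctx.concentrating or ctx.urgent_or_busy or ctx.tired:
        return False
    if not ctx.can_talk_while_multitasking:
        return False
    # Movement: assume a departing user is leaving the communication range
    if ctx.movement is Movement.DEPARTURE:
        return False
    # Social: avoid disturbing a roommate's sleep or work
    if ctx.roommate_sleeping_or_working:
        return False
    return True


if __name__ == "__main__":
    print(is_good_time_to_talk(Context(movement=Movement.ENTRANCE)))
    print(is_good_time_to_talk(Context(concentrating=True)))
```

A real system would more likely estimate these conditions from multi-modal sensor data and weigh them probabilistically rather than apply fixed rules.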

This work was supported by the National Research Foundation (NRF) of Korea.

< Image 1. In-situ experience sampling of user availability for conversations with AI assistants >

< Image 2. Key Contextual Factors that Determine Optimal Timing for AI Assistants to Talk >

Publication:

Cha, N, et al. (2020) “Hello There! Is Now a Good Time to Talk?”: Opportune Moments for Proactive Interactions with Smart Speakers. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies (IMWUT), Vol. 4, No. 3, Article No. 74, pp. 1-28. Available online at https://doi.org/10.1145/3411810

Link to Introductory Video:

https://youtu.be/AA8CTi2hEf0

Profile:

Uichin Lee

Associate Professor

uclee@kaist.ac.kr

http://ic.kaist.ac.kr

Interactive Computing Lab.

School of Computing

https://www.kaist.ac.kr

Korea Advanced Institute of Science and Technology (KAIST)

Daejeon, Republic of Korea
