A KAIST research team has developed a new context-awareness technology that enables AI assistants to determine when to talk to their users based on user circumstances. This technology can contribute to developing advanced AI assistants that can offer pre-emptive services such as reminding users to take medication on time or modifying schedules based on the actual progress of planned tasks.

Unlike conventional AI assistants, which acted passively on users’ commands, today’s AI assistants are evolving to provide more proactive services by reasoning about user circumstances on their own. This opens up new opportunities for AI assistants to better support users in their daily lives. However, if AI assistants do not talk at the right time, they could end up interrupting their users rather than helping them.

The right time for talking is more difficult for AI assistants to determine than it appears. This is because the context can differ depending on the state of the user or the surrounding environment.

A group of researchers led by Professor Uichin Lee from the KAIST School of Computing identified key contextual factors in user circumstances that determine when the AI assistant should start, stop, or resume engaging in voice services in smart home environments. Their findings were published in the Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies (IMWUT) in September.

The group conducted this study in collaboration with Professor Jae-Gil Lee’s group in the KAIST School of Computing, Professor Sangsu Lee’s group in the KAIST Department of Industrial Design, and Professor Auk Kim’s group at Kangwon National University.

After developing smart speakers equipped with an AI assistant function for experimental use, the researchers installed them in the rooms of 40 students living in double-occupancy campus dormitories and collected a total of 3,500 in-situ user response records over a period of one week.

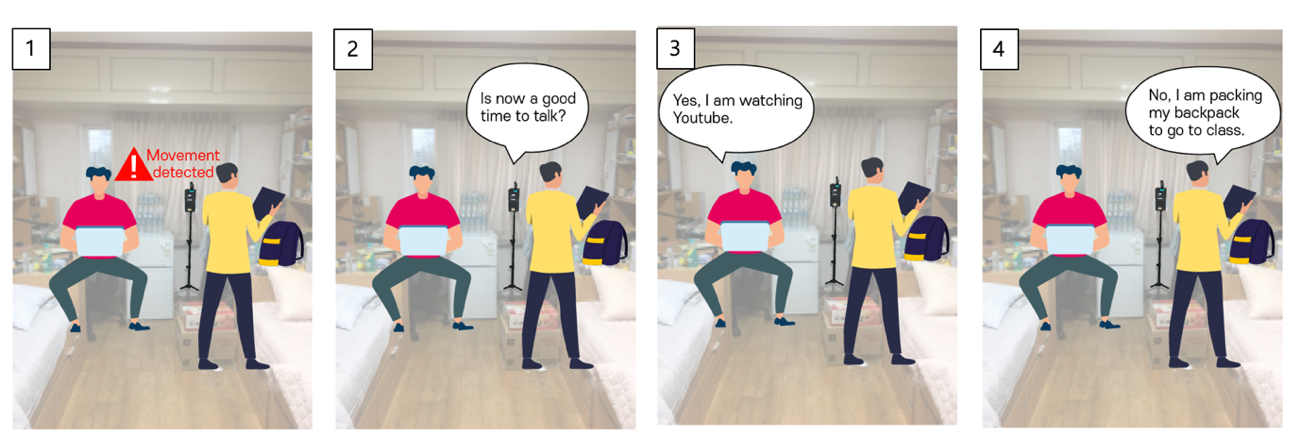

The smart speakers repeatedly asked the students a question, “Is now a good time to talk?” at random intervals or whenever a student’s movement was detected. Students answered with either “yes” or “no” and then explained why, describing what they had been doing before being questioned by the smart speakers.
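The prompting procedure described above can be illustrated with a minimal sketch. It assumes a simple timer-or-motion trigger; the function names and the interval bounds are hypothetical and are not taken from the study, which does not report its implementation here.

```python
import random

PROMPT = "Is now a good time to talk?"


def next_random_delay(rng, min_s=600.0, max_s=1800.0):
    """Return seconds until the next randomly timed prompt.

    The 10-30 minute bounds are illustrative assumptions only.
    """
    return rng.uniform(min_s, max_s)


def should_prompt(now_s, next_random_at_s, movement_detected):
    """Prompt when movement was detected or the random timer has expired."""
    return movement_detected or now_s >= next_random_at_s


if __name__ == "__main__":
    rng = random.Random(0)
    next_at = next_random_delay(rng)
    # Movement triggers the prompt even before the timer expires.
    if should_prompt(0.0, next_at, movement_detected=True):
        print(PROMPT)
```

Each time a prompt is issued, a sampler of this kind would draw a new delay and record the student's "yes" or "no" answer together with the stated reason.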

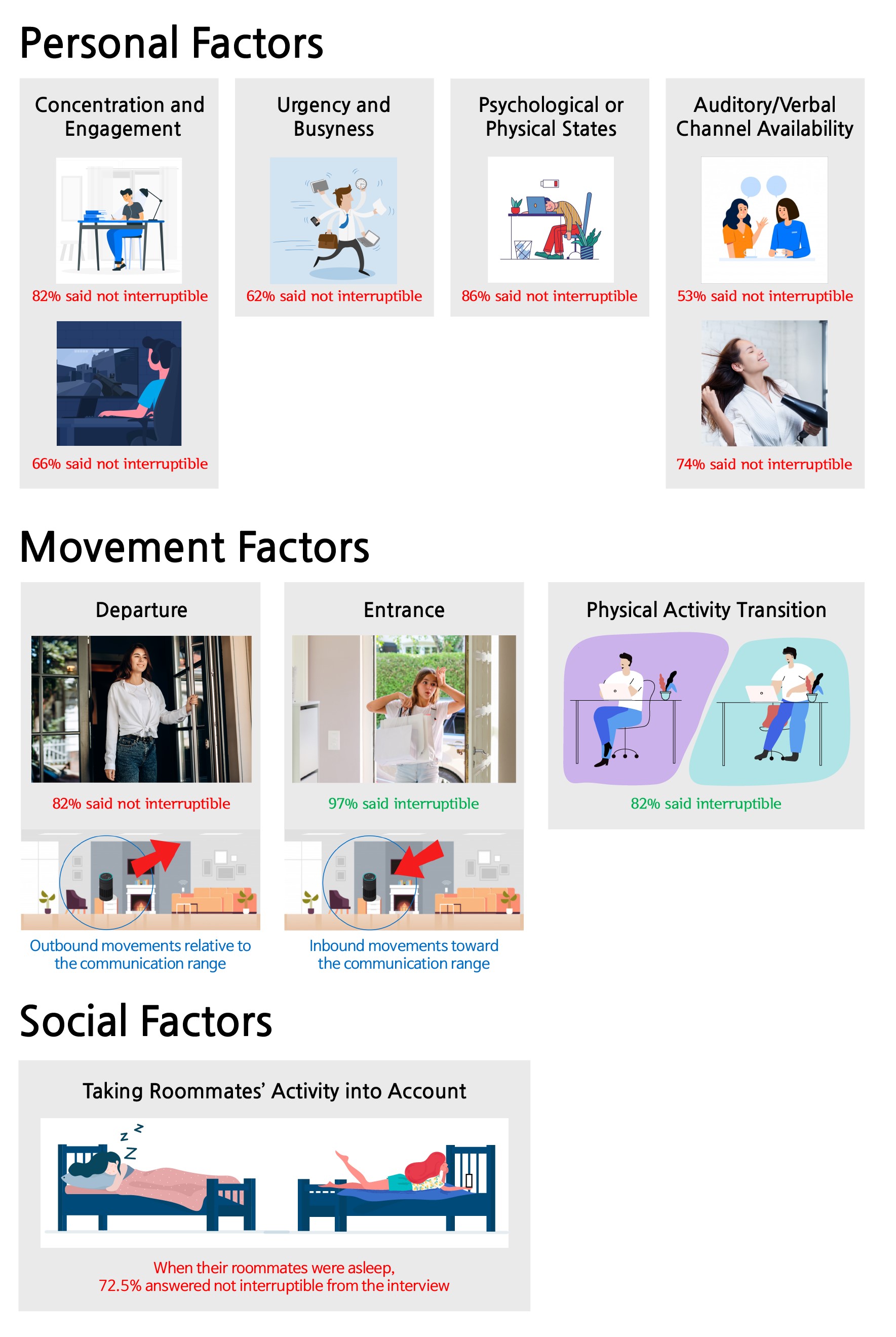

Data analysis revealed that 47% of user responses were “no,” indicating that the students did not want to be interrupted. The research team then created 19 home activity categories to cross-analyze the key contextual factors that determine opportune moments for AI assistants to talk, and classified these factors into ‘personal,’ ‘movement,’ and ‘social’ factors.

Personal factors, for instance, include:

1. the degree of concentration on or engagement in activities,

2. the degree of urgency and busyness,

3. the state of the user’s mental or physical condition, and

4. the state of being able to talk or listen while multitasking.

When users were concentrating on studying, tired, or drying their hair, they found it difficult to engage in conversational interactions with the smart speakers.

Some representative movement factors include departure, entrance, and physical activity transitions. Departure is an outbound movement away from the smart speaker, and entrance is an inbound movement toward it. Interestingly, the team found that communication range was an important factor in movement scenarios: users were much more available during inbound movements than during outbound ones.

In general, smart speakers are located in a shared place at home, such as a living room, where multiple family members gather at the same time. In the experiment by Professor Lee’s group, almost half of the in-situ user responses were collected when both roommates were present. The group found that social presence also influenced interruptibility. Roommates often wanted to minimize possible interpersonal conflicts, such as disturbing their roommates’ sleep or work.
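To make the three factor groups concrete, the sketch below encodes them as a simple rule-based check. This is only an illustration under stated assumptions: the study reports which factors matter, not this rule, and the field names and all-or-nothing logic are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Context:
    # Personal factors (hypothetical boolean encoding)
    concentrating: bool = False
    busy_or_urgent: bool = False
    tired_or_unwell: bool = False
    can_talk_while_multitasking: bool = True
    # Movement factor: "none", "entering", or "leaving"
    movement: str = "none"
    # Social factor: a roommate who could be disturbed
    roommate_sleeping_or_working: bool = False


def is_opportune(ctx):
    """Return True if none of the assumed blocking factors is present."""
    if ctx.concentrating or ctx.busy_or_urgent or ctx.tired_or_unwell:
        return False  # personal factors
    if not ctx.can_talk_while_multitasking:
        return False  # e.g. hands or ears are occupied
    if ctx.movement == "leaving":
        return False  # outbound movement: user is moving out of range
    if ctx.roommate_sleeping_or_working:
        return False  # social factor: avoid disturbing the roommate
    return True


if __name__ == "__main__":
    print(is_opportune(Context(movement="entering")))
    print(is_opportune(Context(movement="leaving")))
```

A deployed assistant would more plausibly estimate these states from sensor data and weigh them probabilistically rather than apply hard rules.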

Narae Cha, the lead author of this study, explained, “By considering personal, movement, and social factors, we can envision a smart speaker that can intelligently manage the timing of conversations with users.”

She believes that this work lays the foundation for the future of AI assistants, adding, “Multi-modal sensory data can be used for context sensing, and this context information will help smart speakers proactively determine when it is a good time to start, stop, or resume conversations with their users.”

This work was supported by the National Research Foundation (NRF) of Korea.

< Image 1. In-situ experience sampling of user availability for conversations with AI assistants >

< Image 2. Key Contextual Factors that Determine Optimal Timing for AI Assistants to Talk >

Publication:

Cha, N, et al. (2020) “Hello There! Is Now a Good Time to Talk?”: Opportune Moments for Proactive Interactions with Smart Speakers. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies (IMWUT), Vol. 4, No. 3, Article No. 74, pp. 1-28. Available online at https://doi.org/10.1145/3411810

Link to Introductory Video:

https://youtu.be/AA8CTi2hEf0

Profile:

Uichin Lee

Associate Professor

uclee@kaist.ac.kr

http://ic.kaist.ac.kr

Interactive Computing Lab.

School of Computing

https://www.kaist.ac.kr

Korea Advanced Institute of Science and Technology (KAIST)

Daejeon, Republic of Korea

(END)
